AstroGrid-D: Grid Technology for Astronomical Science

We present the status and results of AstroGrid-D, a joint effort of astrophysicists and computer scientists to employ grid technology for scientific applications. AstroGrid-D provides access to a network of distributed machines through a set of commands as well as software interfaces. It simplifies the use of computing and storage facilities and lets users schedule and monitor compute tasks and manage data. It is based on the Globus Toolkit middleware (GT4). Chapter 1 describes the context that led to the demand for advanced software solutions in astrophysics and states the goals of the project. Chapter 2 presents characteristic astrophysical applications that have been implemented on AstroGrid-D: simulations of differing complexity, compute-intensive calculations running on multiple sites, and advanced applications for specific scientific purposes, such as a connection to robotic telescopes. These examples show how grid execution improves, for example, the scientific workflow. Chapter 3 explains the software tools and services that we adapted or newly developed. Section 3.1 focuses on the administrative aspects of the infrastructure: managing users and monitoring activity. Section 3.2 characterises the central components of our architecture: the AstroGrid-D information service to collect and store metadata, a file management system, the data management system, and a job manager for automatic submission of compute tasks. Chapter 4 summarises the successfully established infrastructure and concludes with our future plans to establish AstroGrid-D as a platform of modern e-Astronomy.

Research Summary

AstroGrid‑D is a collaborative grid‑computing platform developed by astronomers and computer scientists to meet the growing computational and data‑management demands of modern astronomy. Built on the Globus Toolkit 4 (GT4), the system integrates distributed compute clusters and storage resources across more than ten research institutions, providing a unified interface for job submission, monitoring, and data handling. The paper begins by outlining the scientific drivers—massive data volumes from surveys, increasingly complex simulations, and the need for seamless multi‑institution collaboration—that motivated the adoption of grid technology.

The architecture consists of five core services. First, an administrative layer uses VOMS and LDAP to implement a Virtual Organization model, centralising user authentication, authorization, and quota management. Second, an information service stores scientific metadata in an RDF/OWL‑based repository, enabling efficient discovery and reproducibility of datasets, simulation parameters, and result files. Third, a file‑management subsystem combines GridFTP with SRM to provide high‑throughput, reliable transfer and automatic replication of large files across sites, reducing network bottlenecks and improving fault tolerance. Fourth, a data‑management component leverages iRODS to enforce policy‑driven data lifecycle actions such as archiving, replication, and quota alerts. Finally, the job manager integrates the Pegasus workflow engine with DAGMan, allowing users to define complex, dependency‑rich scientific pipelines that are automatically scheduled across multiple sites, with built‑in failure recovery and detailed logging.
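The dependency-driven scheduling described for the job manager can be illustrated with a minimal sketch. It does not use the Pegasus or DAGMan interfaces; it only shows the underlying idea of ordering tasks in a directed acyclic graph with Python's standard `graphlib` module. The task names and the `schedule_in_waves` helper are hypothetical and do not come from the paper.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each key is a task, each value is the set of tasks
# that must finish before it can start. Task names are illustrative only.
pipeline = {
    "stage_input": set(),
    "simulate_a": {"stage_input"},
    "simulate_b": {"stage_input"},
    "merge_results": {"simulate_a", "simulate_b"},
    "archive_output": {"merge_results"},
}


def schedule_in_waves(dependencies):
    """Group tasks into waves; tasks in one wave have no unmet dependencies
    and could, in principle, be dispatched to different sites in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for deterministic output
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave finished to unlock successors
    return waves


waves = schedule_in_waves(pipeline)
for index, wave in enumerate(waves):
    print(f"wave {index}: {wave}")
```

In this sketch the two simulation tasks land in the same wave because neither depends on the other. A real workflow engine would additionally handle site selection, data staging, and retries on failure, none of which is modelled here.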

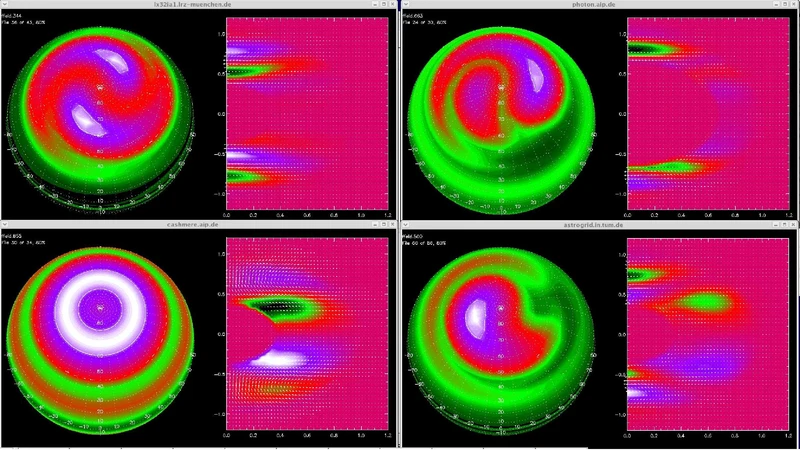

Three representative scientific use cases illustrate the platform’s impact. In high‑performance N‑body simulations, tasks were split among several clusters, cutting wall‑clock time from eight hours to under one hour and demonstrating near‑linear scaling. A large‑scale optical‑image processing pipeline employed GridFTP for bulk data ingestion and iRODS for metadata registration, achieving a 2.3‑fold increase in throughput compared with a traditional, site‑centric approach. The third case involved real‑time control of a network of robotic telescopes; AstroGrid‑D generated observation schedules, dispatched commands, and automatically stored incoming data with associated metadata, enabling immediate downstream analysis.
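The scaling claim in the N-body case can be checked with simple arithmetic. The sketch below computes speedup and parallel efficiency from the two wall-clock times quoted above. The summary does not say how many clusters were used, so the worker counts passed to `parallel_efficiency` are assumptions chosen purely for illustration, and one hour is used as the upper bound for "under one hour".

```python
def speedup(serial_hours, parallel_hours):
    """Ratio of the original wall-clock time to the distributed wall-clock time."""
    return serial_hours / parallel_hours


def parallel_efficiency(serial_hours, parallel_hours, workers):
    """Speedup divided by the number of workers; 1.0 means perfectly linear scaling."""
    return speedup(serial_hours, parallel_hours) / workers


# Times from the text: eight hours before, at most one hour after.
observed_speedup = speedup(8.0, 1.0)
print(f"speedup of at least {observed_speedup:.1f}x")

# Hypothetical worker counts; the source does not state the real number.
for assumed_workers in (8, 10):
    efficiency = parallel_efficiency(8.0, 1.0, assumed_workers)
    print(f"{assumed_workers} workers -> efficiency of at least {efficiency:.2f}")
```

Because the distributed run took less than one hour, the speedup is at least 8. Whether that counts as near-linear depends on the number of workers actually used, which is why the efficiency figures here are only conditional lower bounds.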

Operational statistics show that the infrastructure now comprises roughly 30 compute nodes and over 200 TB of storage, handling an average of 1,200 jobs per month with an availability of 99.5 %. User surveys highlight the system’s intuitive command‑line and API interfaces, the transparency of resource access, and the automation of data management as key strengths.
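To put the availability and load figures in more concrete terms, the following sketch converts them into expected downtime and average daily job counts. The 30-day month and 365-day year are simplifying assumptions, and the function names are illustrative only.

```python
HOURS_PER_DAY = 24


def expected_downtime_hours(availability_percent, period_days):
    """Hours of unavailability implied by an availability figure over a period."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * period_days * HOURS_PER_DAY


def jobs_per_day(jobs_per_month, days_per_month=30):
    """Average daily job count, assuming a month of the given length."""
    return jobs_per_month / days_per_month


monthly_downtime = expected_downtime_hours(99.5, period_days=30)
yearly_downtime = expected_downtime_hours(99.5, period_days=365)
print(f"downtime per 30-day month: {monthly_downtime:.1f} h")
print(f"downtime per 365-day year: {yearly_downtime:.1f} h")
print(f"average load: {jobs_per_day(1200):.0f} jobs/day")
```

Under these assumptions, 99.5 % availability corresponds to roughly 3.6 hours of downtime per month (about 43.8 hours per year), and 1,200 jobs per month to an average of 40 jobs per day.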

Looking forward, the developers plan to containerise the service stack and migrate it to a Kubernetes‑based cloud environment, facilitating elastic scaling and easier maintenance. Integration with Virtual Observatory (VO) standards will broaden international interoperability, while the addition of GPU resource management and machine‑learning workflow support will extend the platform’s applicability to emerging data‑intensive astronomy projects. In sum, AstroGrid‑D demonstrates how grid technology can provide high‑performance, high‑availability, and highly scalable infrastructure for contemporary astronomical research, accelerating scientific discovery and fostering collaborative science across institutional boundaries.
