Phase transitions in semisupervised clustering of sparse networks

Predicting labels of nodes in a network, such as community memberships or demographic variables, is an important problem with applications in social and biological networks. A recently-discovered phase transition puts fundamental limits on the accura…

Authors: Pan Zhang, Cristopher Moore, Lenka Zdeborova

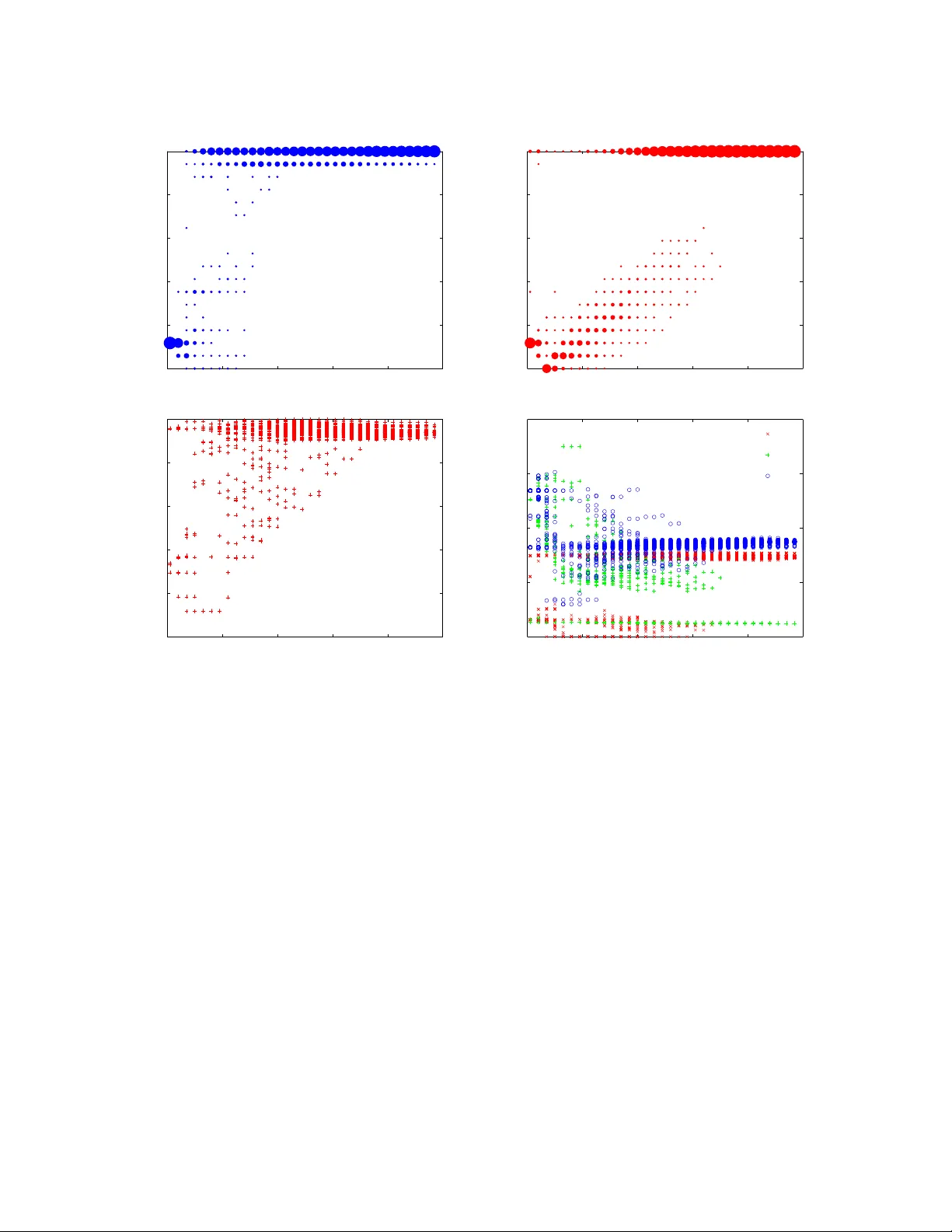

Phase transitions in semisup ervised clustering of sparse net w orks P an Zhang 1 , Cristopher Mo ore 1 , and Lenk a Zdeb orov´ a 2 1) Santa F e Institute, Santa F e, New Mexic o 87501, USA 2) Institut de Physique Th´ eorique, CEA Saclay and URA 2306, CNRS, Gif-sur-Yvette, F r anc e. Predicting lab els of nodes in a netw ork, such as comm unity mem b erships or demographic v ariables, is an imp ortan t problem with applications in so cial and biological net works. A recently-disco vered phase transition puts fundamental limits on the accuracy of these predictions if we hav e access only to the netw ork topology . Ho wev er, if we kno w the correct lab els of some fraction α of the no des, w e can do b etter. W e study the phase diagram of this “semisup ervised” learning problem for netw orks generated by the sto c hastic blo ck model. W e use the cavit y metho d and the associated b elief propagation algorithm to study what accuracy can b e achiev ed as a function of α . F or k = 2 groups, we find that the detectabilit y transition disapp ears for any α > 0, in agreement with previous work. F or larger k where a hard but detectable regime exists, w e find that the easy/hard transition (the p oin t at which efficient algorithms can do b etter than chance) b ecomes a line of transitions where the accuracy jumps discontin uously at a critical v alue of α . This line ends in a critical point with a second-order transition, b ey ond whic h the accuracy is a contin uous function of α . W e demonstrate qualitatively similar transitions in tw o real-world netw orks. I. INTR ODUCTION Comm unity or mo dule detection, also known as no de clustering, is an imp ortant task in the study of biological, so cial and technological net works. Man y metho ds ha ve b een prop osed to solv e this problem, including sp ectral clustering [1–3]; mo dularit y optimization [4–7]; statistical inference using generative mo dels, such as the sto c hastic blo c k mo del [8–10] and a wide v ariety of other metho ds, e.g. [6, 11, 12]. See [13] for a review. It was shown in [8, 9] that for sparse netw orks generated by the sto c hastic blo c k mo del [14], there is a phase transition in communit y detection. This transition was initially established using the cavit y metho d, or equiv alen tly b y analyzing the b ehavior of b elief propagation. It was recently established rigorously in the case of t wo groups of equal size [15 – 17]. In this case, b elo w this transition, no algorithm can lab el the no des b etter than chance, or even distinguish the netw ork from an Erd˝ os-R´ en yi random graph with high probability . In terms of b elief propagation, there is a factorized fixed p oint where every no de is equally lik ely to b e in every group, and it b ecomes globally stable at the transition. F or more than t w o groups, there is an additional regime where the factorized fixed p oint is locally stable, but another, more accurate, fixed point is lo cally stable as well. This regime lies b etw een tw o spinodal transitions: the easy/hard transition where the factorized fixed point b ecomes lo cally unstable, so that efficient algorithms can ac hieve a high accuracy (also known as the Kesten-Stigum transition or the robust reconstruction threshold) and the transition where the accurate fixed p oin t first app ears (also known as the reconstruction threshold). In b etw een these t wo, there is a first order phase transition, where the Bethe free energy of these tw o fixed p oin ts cross. This is the detectabilit y transition, in the sense that an algorithm that can search exhaustively for fixed p oin ts—which would tak e exp onential time—w ould choose the accurate fixed p oint abov e this transition. How ever, b elo w this transition there are exp onen tially many comp eting fixed p oints, each corresp onding to a cluster of assignments, and even an exp onen tial-time algorithm has no w ay to tell which is the correct one. (Note that, of these three transitions, the detectabilit y transition is the only true thermo dynamic phase transition; the others are dynamical.) In betw een the first order phase and easy/hard transitions, there is a “hard but detectable” regime where the comm unities can b e identified in principle; if we could p erform an exhaustive searc h, w e would choose the accurate fixed p oint since it has low er free energy . In Ba y esian terms, the correct blo ck mo del has larger total likelihoo d than an Erd˝ os-R´ en yi graph. How ever, the accurate fixed p oin t has a very small basin of attraction, making it exp onen tially hard to find—unless we hav e some additional information. Here w e model this additional information as a so-called semisup ervise d learning problem (e.g. [18]) where w e are giv en the true lab els of some small fraction α of the no des. This information shifts the location of these transitions; in essence, it destabilizes the factorized fixed p oin t, and pushes us tow ards the basin of attraction of the accurate one. As a result, for some v alues of the block model parameters, there is a discon tinuous jump in the accuracy as a function of α . Roughly speaking, for very small α our information is lo cal, consisting of the known no des and go od guesses ab out nodes in their vicinity: but at a certain α b elief propagation causes this information to percolate, giving us high accuracy throughout the netw ork. As w e v ary the blo ck mo del parameters, this line terminates at the p oint where the tw o spino dals and first order phase transitions all meet. A t that critical p oint there is a second-order phase transition, and beyond that p oint the accuracy is a contin uous function of α . 2 Semisup ervised learning is an imp ortan t task in machine learning, in settings where hidden v ariables or lab els are exp ensiv e and time-consuming to obtain. Semisup ervised comm unity detection was studied in sev eral previous pap ers [19–21]. The conclusion of [19] was that the detectability transition disappears for any α > 0. Later [20] suggested that in some cases it surviv es for more than tw o groups. How ever, b oth these works were based on an appro ximate (zero temp erature replica symmetric) calculation that corresponds to a far-from-optimal algorithm; moreo ver, it is kno wn to lead to unphysical results in man y other mo dels suc h as graph coloring (which is a special case of the sto c hastic blo ck mo del) or random k -SA T [22, 23]. In the presen t pap er w e inv estigate semisup ervised comm unity detection using the cavit y metho d and b elief prop- agation, which in sparse graphs is b eliev ed to b e Bay es-optimal in the limit n → ∞ . F rom a physics p oin t of view, our results settle the question of what exactly happ ens in the semisupervised setting, including how the reconstruc- tion, detectability , and easy/hard transitions v ary as a function of α . F rom the p oin t of view of mathematics, our calculations provide non-trivial conjectures that we hop e will b e amenable to rigorous pro of. Our calculations follo w the same metho dology as those carried out in tw o other problems: • Study of the ide al glass tr ansition by r andom pinning. An imp ortant prop ert y of Bay es-optimal inference is that the true configuration cannot b e distinguished from other configurations that are sampled at random from the p osterior probabilit y measure. This is why considering a disordered system similar to the one in this pap er and fixing the v alue or p osition of a small fraction of no des in a randomly c hosen equilibrium configurations is formally the same problem to semisup ervised learning. The analysis of systems with pinned particles w as done in order to b etter understand the formation of glasses in [24, 25]. • Analysis of b elief pr op agation guide d de cimation. belief propagation with decimation is a v ery in teresting solver for a wide range of random constraint satisfaction problems. Its p erformance was analyzed in [26, 27]. If we decimate a fraction α of the v ariables (i.e., fix them to particular v alues) this affects the further p erformance of the algorithm in a wa y similar to semisup ervised learning. F or random k -SA T, a large part of this picture has been made rigorous [28]. Our hop e is that similar tec hniques will apply to our results here. As a first step, very recen t work [29] sho ws that semisup ervised learning do es indeed allow for partial reconstruction b elo w the detectabilit y threshold for k > 4 groups. The pap er is organized as follows. Section I I includes definitions and the description of the sto c hastic blo c k mo del. In Sections II I w e consider semisupervised learning in the net works generated b y stochastic block mo del. In Section IV w e consider semisup ervised learning in tw o real-world netw orks, finding transitions in the accuracy at a critical v alue of α qualitativ ely similar to our analysis for the block mo del. W e conclude in Section V. I I. THE STOCHASTIC BLOCK MODEL, BELIEF PROP A GA TION, AND SEMISUPER VISED LEARNING The stochastic block mo del is defined as follo ws. No des are split in to k groups, where each group 1 ≤ a ≤ k contains an exp ected fraction q a of the no des. Edge probabilities are given b y a k × k matrix p . W e generate a random netw ork G with n no des as follows. First, we choose a group assignmen t t ∈ { 1 , . . . , k } n b y assigning each no de i a lab el t i ∈ { 1 , ..., k } chosen indep enden tly with probability q t i . Bet w een eac h pair of no des i and j , we then add an edge b et w een them with probability p t i ,t j . F or no w, we assume that the parameters k , q (a v ector denoting { q a } ), and p (a matrix denoting { q ab } ) are kno wn. The likelihoo d of generating G giv en the parameters and the lab els is P ( G, t | q , p ) = Y i q t i Y h ij i∈ E p t i ,t j Y h ij i6∈ E (1 − p t i ,t j ) , (1) the Gibbs distribution of the labels t , i.e., their p osterior distribution given G , can b e computed via Ba yes’ rule, P ( t | G, q , p ) = P ( G, t | q , p ) P s P ( G, s | q , p ) . (2) In this pap er, we consider sparse netw orks where p ab = c ab /n for some constant matrix c . In this case, the marginal probabilit y ψ i a that a giv en no de i has label t i = a can b e computed using belief propagation. The idea of BP is to replace these marginals with “messages” ψ i → ` a from i to eac h of its neighbors ` , which are estimates of these marginals based on i ’s in teractions with its other neigh b ors [30, 31]. W e assume that the neigh b ors of eac h node are conditionally 3 indep enden t of each other; equiv alently , w e ignore the effect of lo ops. F or the stochastic block mo del, we obtain the follo wing up date equations for these messages [8, 9]: ψ i → ` a = 1 Z i → ` q a e h a Y j ∈ ∂ i \ ` X b c ab ψ j → i b , (3) Here Z i → l is a normalization factor and h a is an adaptiv e external field that enforces the exp ected group sizes, h a = 1 n X i X b c ab ψ i b , (4) where the marginal probability that t i = a is giv en by ψ i a = 1 Z i q a e h a Y j ∈ ∂ i X b c ab ψ j → i b , (5) In the usual setting, we start with random messages, and apply the BP equations (3) un til w e reac h a fixed p oint. In order to predict the node labels, w e assign eac h no de to its most-likely lab el according to its marginal: b t i = argmax a ψ i a . Fixed p oints of the BP equations are stationary points of the Bethe free energy [30], which up to a constant is F Bethe = X h ij i∈ E log k X a,b =1 c ab ψ i → j a ψ j → i b − X i log k X a =1 q a e h a Y j ∈ ∂ i X { b } c ab ψ j → i b . (6) If there is more than one fixed p oin t, the one with the lo west F Bethe giv es an optimal estimate of the marginals, since F Bethe is minus the logarithm of the total likelihoo d of the block mo del. How ev er, as w e commen t ab ov e, if the optimal fixed p oin t has a small basin of attraction, then finding it through exhaustive search will take exp onential time. Analyzing stability of instabilit y of these fixed p oints, including the trivial or “factorized” fixed p oin t where ψ i → ` a = q a , leads to the phase transitions in the sto c hastic blo ck mo del described in [8, 9]. It is straigh tforward to adapt the ab o v e formalism to the case of semisup ervised learning. One uses exactly the same equations except that no des whose lab els hav e b een revealed hav e fixed messages. If w e know that t i = a ∗ , then for all ` we hav e ψ i → ` a = δ a,a ∗ . Equiv alently , we can define a local external field, replacing the global parameter q with q i in (3). Then q i a = δ a,a ∗ . In this pap er w e fo cus on a widely-studied special case of the sto chastic block mo del, also w ell-known as the plan ted partition mo del, where the groups are of equal size, i.e., , q a = 1 /k , and where c ab tak es only t wo v alues: c ab = ( c in if a = b c out if a 6 = b . (7) In that case, the av erage degree is c = ( c in + ( q − 1) c out ) /q . It is common to parametrize this mo del with the ratio = c out /c in . When = 0 is small, no des are connected only to others in the same group; at = 1 the net w ork is an Erd˝ os-R´ en yi graph, where every pair of no des is equally likely to be connected. W e assume here that the parameters k , c in , c out are known. If they are unknown, inferring them from the graph and partial information ab out the nodes is an interesting learning problem in its o wn righ t. One can estimate them from the set of kno wn edges, i.e., those where b oth endp oin ts hav e known lab els: in the sparse case there are O ( α 2 n ) suc h edges, or O ( n ) if the fraction of kno wn lab els α is constan t. Ho w ever, for α ∼ 10 − 2 , say , there are very few such edges un til n & 10 5 or 10 6 . Alternately , w e can learn the parameters using the exp ectation-maximization (EM) algorithm of [8, 9], whic h minimizes the Bethe free energy , or a h ybrid method where w e initialize EM with parameters estimated from the kno wn edges, if any . 4 0.1 0.2 0.3 0.4 0.5 0.6 10 1 10 2 10 3 c out /c in convergence time α =0 α =0.01 α =0.03 α =0.07 α =0.12 0.5 0.6 0.7 0.8 0.9 1 overlap 0.16 0.17 0.18 0.19 0.2 10 1 10 2 10 3 10 4 c out /c in convergence time α =0 α =0.0005 α =0.005 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 overlap FIG. 1: Overlap and conv ergence time of BP as a function of = c out /c in for different α , on netw orks generated by the sto c hastic blo c k mo del. On the left, k = 2, c = 3, and n = 10 5 . F or just tw o groups, the transition disapp ears for any α > 0. On the right, k = 10, c = 10, n = 5 × 10 5 . Here the easy/hard transition p ersists for small v alues of α , with a discontin uity in the ov erlap and a diverging con vergence time; this transition disapp ears at a critical v alue of α . c out /c in α 0.16 0.17 0.18 0.19 0.2 0 2 4 6 8 x 10 −3 0.1 0.2 0.3 0.4 0.5 0.6 c out /c in α 0.16 0.17 0.18 0.19 0.2 0 2 4 6 8 x 10 −3 2 2.5 3 3.5 4 FIG. 2: Left, ov erlap as a function of = c out /c in and α for netw orks with same parameters as in the righ t of Fig. 1. The heat map shows a line of discontin uities, ending at a second-order phase transition b eyond whic h the o verlap is a smooth function. Righ t, the logarithm (base 10) of the conv ergence time in the same plane, showing div ergence along the critical line. I II. RESUL TS ON THE STOCHASTIC BLOCK MODEL AND THE F A TE OF THE TRANSITIONS First we inv estigate semisup ervised learning for assortative netw orks, i.e., the case c in > c out . As shown in [8], in the unsup ervised case α = 0 there is a phase transition at c in − c out = k √ c , where the factorized fixed p oin t go es from stable to unstable. Belo w this transition the ov erlap, i.e., the fraction of correctly assigned no des, is 1 /k , no b etter than random chance. F or k ≤ 4 this phase transition is second-order: the ov erlap is contin uous, but with discontin uous deriv ative at the transition. F or k > 4, it becomes an “easy/hard” transition, with a discontin uity in the o v erlap when we jump from the factorized fixed p oin t to the accurate one. In b oth cases, the conv ergence time (the num b er of iterations BP takes to reach a fixed p oint) diverges at the transition. In Fig. 1 we show the o verlap achiev ed by BP for t wo different v alues of k and v arious v alues of α . In each case, w e hold the av erage degree c fixed and v ary = c out /c in . On the left, we ha v e k = 2. Here, analogous to the unsup ervised case α = 0, the ov erlap is a contin uous function of . Moreov er, for α > 0 the detectability transition disapp ears: the o verlap b ecomes a smo oth function, and the conv ergence time no longer diverges. This picture agrees qualitatively with the appro ximate analytical results in [19, 20]. 5 10 12 14 16 18 20 22 10 1 10 2 10 3 10 4 c convergence time α =0 α =0.01 α =0.03 α =0.07 α =0.12 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 overlap 12 13 14 15 16 0 0.02 0.04 0.06 c α easy/hard spinodal detectability FIG. 3: Left, ov erlap and BP conv ergence time in the planted 5-coloring problem as a function of the av erage degree c for v arious v alues of α . Righ t, the three transitions described in the text: the easy/hard or Kesten-Stigum transition where the factorized fixed p oin t becomes stable (blue), the lo w er spinodal transition where the accurate fixed point disapp ears (green), and the detectabilit y transition where the Bethe free energies of these fixed p oints cross (red). The hard but detectable regime, where BP with random initial messages do es no better than c hance but exhaustive search would succeed, is b etw een the red and blue lines. All three transitions meet at a critical point, b eyond which the ov erlap is a smo oth function of c and α . Here n = 10 5 . On the right-hand side of Fig. 1, w e sho w exp erimental results with k = 10. Here the easy/hard transition p ersists for sufficiently small α , with a discontin uity in the ov erlap and a diverging con v ergence time. A t a critical v alue of α , the transition disappears, and the ov erlap becomes a smooth function of ; b ey ond that p oin t the conv ergence time has a smooth peak but do es not diverge. Thus there is a line of discon tin uities, ending in a second-order phase transition at a critical p oin t. W e show this line in the ( α, )-plane in Fig. 2. On the left, w e see the discontin uity in the ov erlap, and on the right we see that the conv ergence time div erges along this line. Note that the authors of [20] also predicted the surviv al of the easy/hard discon tinuit y in the assortativ e case. Their appro ximate computation, ho wev er, ov erestimates the strength of the phase transition, and misplaces its p osition. In particular, it predicts the discontin uit y for all k > 2, whereas it holds only for k > 4. The full physical picture of what happ ens to the “hard but detectable” regime in the semisupervised case, and to the spino dal and detectability transitions that define it, is v ery interesting. T o explain it in detail we fo cus on the disassortativ e case, and sp ecifically the case of planted graph coloring where c in = 0. The situation for the assortative case is qualitatively similar, but for graph coloring the discontin uity in the o verlap is v ery strong and app ears for any k > 3, making these phenomena easier to see n umerically . Fig. 3, on the left, sho ws the ov erlap and conv ergence time of BP for k = 5 colors. In the unsup ervised case α = 0, there are a total of three transitions as we decrease c (making the problem of recov ering the planted coloring harder). The ov erlap jumps at the easy/hard spino dal transition, where the factorized fixed p oint b ecomes stable: this o ccurs at c = ( k − 1) 2 . A t the low er spino dal transition, the accurate fixed p oin t disapp ears. In b et ween these t wo spino dal transitions, b oth fixed points exist. Their Bethe free energies cross at the detectabilit y transition: b elo w this p oin t, ev en a Ba yesian algorithm with the luxury of exhaustiv e search would do no b etter than c hance. Th us the “hard but detectable” regime lies in b etw een the detectability and easy/hard transitions [22]. On the right of Fig. 3, we plot the tw o s pinodal transitions, and the detectability transition in b etw een them, in the ( c, α )-plane. W e see that these transitions p ersist up to a critical v alue of α ≈ 0 . 06. At that point, all three meet at a second-order phase transition, beyond which the o verlap is a smo oth function. The v ery same picture arises in the t wo related problems men tioned in the in tro duction, namely the glass transition with random pinning and BP-guided decimation in random k -SA T; see e.g. Fig. 1 in [24, 25] and Fig. 3 in [27]. Finally , in Fig. 4 w e plot the o verlap and conv ergence time for the planted 5-coloring problem in the ( c, α )-plane. Analogous to the assortative case in Fig. 2, but more visibly , there is a line of discontin uities in the o verlap along whic h the con vergence time div erges; the heigh t of the discontin uity decreases until we reach the critical p oin t. 6 c α 10 14 18 22 0 0.04 0.08 0.12 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 c α 10 14 18 22 0 0.04 0.08 0.12 1.5 2 2.5 3 3.5 4 FIG. 4: Overlap (top and left) and con vergence time (righ t) as a function of the a v erage degree c and the fraction of kno wn lab els α for the planted 5-coloring problem on net works with n = 10 5 . The height of the discontin uity decreases until we reac h the critical p oin t. The conv ergence time diverges along the discontin uit y . Compare Fig. 2 for the assortative case. IV. RESUL TS ON REAL-W ORLD NETW ORKS In this section we study semisup ervised learning in real-w orld netw orks. Real-world netw orks are of course not generated by the sto c hastic blo c k mo del; ho wev er, the blo ck mo del can often achiev e high accuracy for communit y detection, if its parameters are fit to the net work. T o explore the effect of semisup ervised learning, we set the parameters q a and c ab in tw o different wa ys. In the first wa y , the algorithm is given the b est p ossible v alues of these parameters in adv ance, as determined by the ground truth lab els: this is cheating, but it separates the effect of b eing given αn no de lab els from the pro cess of learning the parameters. In the second (more realistic) wa y , the algorithm uses the exp ectation-maximization (EM) algorithm of [8, 9], whic h minimizes the free energy . As discussed in Section I I, w e initialize the EM algorithm with parameters estimated from edges where b oth endpoints ha ve known lab els, if an y . W e test t wo real net works, namely a netw ork of p olitical blogs [32] and Zachary’s k arate club netw ork. The blog net work is comp osed of 1222 blogs and links b etw een them that were activ e during the 2004 US elections; h uman curators lab eled each blog as lib eral or conserv ative. In Fig. 5 we plot the ov erlap b et ween the inferred lab els and the ground truth lab els, with multiple indep enden t runs of BP with different initial lab els. This netw ork is known not to b e w ell-mo deled by the stochastic block model, since the highest-lik eliho o d SBM splits the nodes into a core-p eriphery structure with high-degree no des in the core and low-degree nodes outside it, instead of dividing the netw ork along p olitical lines [10]. Indeed, as the top left panel shows, even given the correct parameters, BP often falls into a core-p eriphery structure instead of the correct one. Ho wev er, once α is sufficien tly large, we mo ve into the basin of attraction of the correct division. 7 On the top right of Fig. 5, we see a similar transition, but now b ecause the EM algorithm succeeds in learning the correct parameters. There are t wo fixed p oin ts of the learning pro cess in parameter space, corresponding to the high/lo w degree division and the p olitical one. Both of these are lo cal minima of the free energy [33]. As α increases, the correct one b ecomes the global minimum, and its basin of attraction gets larger, until the fraction of runs that arriv e at the correct parameters (and therefore an accurate partition) b ecomes large. W e show this learning pro cess in the low er panels of Fig. 5. Since there are just t wo groups, q 1 determines the group sizes, where we break symmetry by taking q 1 to b e the smaller group. As α increases, we mo ve from q 1 = 0 . 3 to q 1 = 0 . 47. On the low er right, w e see the parameters c ab c hange from a core-p eriphery structure with c 22 > c 12 > c 11 to an assortativ e one with c 11 ≈ c 22 > c 12 . Our second example is Zac hary’s Karate club [34], whic h represents friendship patterns b etw een the 34 mem b ers of a universit y k arate club whic h split into tw o factions. As with the blog netw ork, it has tw o lo cal optima in parameter place, one corresp onding to a high/low degree division (which in the unsup ervised case has lo wer free energy) and the other in to the tw o factions [8]. W e again do t wo t yp es of experiments, one where the b est parameters q a , c ab kno wn in adv ance, and the other where w e learn these parameters with the EM algorithm. Our results are showin in Fig 6 and are similar to Fig. 5. As α increases, the o verlap improv es, in the first case b ecause the known lab els push us in to the basin of attraction of the correct division, and in the second case b ecause the EM algorithm finds the correct parameters. As discussed elsewhere [8, 10], the standard stochastic blo ck mo del p erforms p oorly on these net works in the unsup ervised case. It assumes a Poisson degree distribution within eac h communit y , and thus tends to divide nodes in to groups according to their degree; in contrast, these net works (and man y others) hav e hea vy-tailed degree distributions within communities. A b etter mo del for these netw orks is the degree-corrected sto chastic blo c k mo del [10], which ac hieves a large o verlap on the blog netw ork even when no lab els are known. W e emphasize that our analysis can easily b e carried out for the degree-corrected SBM as well, using the BP equations given in [35]. On the other hand, it is in teresting to observ e ho w, in the semisupervised case, ev en the standard SBM succeeds in recognizing the net work’s structure at a mo derate v alue of α . V. CONCLUSION AND DISCUSSION W e hav e studied semisupervised learning in sparse net w orks with belief propagation and the stochastic block mo del, fo cusing on ho w the detectability and easy/hard transitions dep end on the fraction α of kno wn no des. In agreemen t with previous work based on a zero-temp erature approximation [19, 20], for k = 2 groups the detectabilit y transition disapp ears for α > 0. How ever, for large k where there is a hard but detectable phase in the unsupervised case, the easy/hard transition p ersists up to a critical v alue of α , creating a line of discontin uities in the o verlap ending in a second-order phase transition. W e found qualitativ ely similar b eha vior in tw o real netw orks, where the ov erlap jumps discontin uously at a critical v alue of α . When the b est p ossible parameters of the blo ck model are known in adv ance, this happ ens when the basin of attraction of the correct structure b ecomes larger; when w e learn them with an EM algorithm as in [8, 9], it o ccurs b ecause the optimal parameters b ecome global minima of the free energy . In particular, ev en though the standard blo c k mo del is not a goo d fit to netw orks like the blog net work or the k arate club, where eac h comm unit y has a hea vy-tailed degree distributions, we found that at a certain v alue of α it switches from a core-p eriphery structure to the correct assortativ e structure. It w ould b e v ery in teresting to apply this formalism to active learning, where rather than learning the labels of a random set of αn nodes, the algorithm must choose which no des to explore. One approac h to this problem [36] is to explore the no de with the largest mutual information betw een it and the rest of the net work, as estimated b y Monte Carlo sampling of the Gibbs distribution, or (more efficiently) using b elief propagation. W e leav e this for future work. Ac knowledgmen ts C.M. and P .Z. were supp orted by AFOSR and DARP A under gran t F A9550-12-1-0432. W e are grateful to Florent Krzak ala, Elc hanan Mossel, and Allan Sly for helpful conv ersations. [1] U. V on Luxburg, Statistics and Computing 17 , 395 (2007). [2] M. E. J. Newman, Physical Review E 74 , 036104 (2006). 8 0 0.2 0.4 0.6 0.8 1 0.5 0.6 0.7 0.8 0.9 1 α Overlap 0 0.2 0.4 0.6 0.8 1 0.5 0.6 0.7 0.8 0.9 1 α Overlap 0 0.2 0.4 0.6 0.8 1 0.2 0.25 0.3 0.35 0.4 0.45 0.5 α q 1 0 0.2 0.4 0.6 0.8 1 0 50 100 150 200 α c 11 c 12 c 22 FIG. 5: Semisup ervised learning in a netw ork of p olitical blogs [32]. Different p oin ts corresp ond to indep endent runs with differen t initial lab els. On the top left, the b est p ossible parameters q a and c ab are given to the algorithm in adv ance. On the top righ t, the algorithm learns these parameters using an EM algorithm, seeded by the kno wn lab els. The b ottom panels show ho w the learned q 1 and c ab c hange as α increases, moving from a core-p eriphery structure where no des are divided according to high or lo w degree, to the correct assortative structure where they are divided along p olitical lines. [3] F. Krzak ala, C. Mo ore, E. Mossel, J. Neeman, A. Sly , L. Zdeb oro v´ a, and P . Zhang, Pro ceedings of the National Academy of Sciences 110 , 20935 (2013). [4] M. E. J. Newman and M. Girv an, Physical Review E 69 , 026113 (2004). [5] M. E. Newman, Physical Review E 69 , 066133 (2004). [6] A. Clauset, M. E. Newman, and C. Mo ore, Physical Review E 70 , 066111 (2004). [7] J. Duch and A. Arenas, Physical Review E 72 , 027104 (2005). [8] A. Decelle, F. Krzak ala, C. Mo ore, and L. Zdeb orov´ a, Physical Review E 84 , 066106 (2011). [9] A. Decelle, F. Krzak ala, C. Mo ore, and L. Zdeb orov´ a, Physical Review Lett. 107 , 065701 (2011). [10] B. Karrer and M. E. J. Newman, Ph ysical Review E 83 , 016107 (2011). [11] V. D. Blondel, J.-L. Guillaume, R. Lambiotte, and E. Lefebvre, Journal of Statistical Mechanics: Theory and Exp erimen t 2008 , P10008 (2008). [12] M. Rosv all and C. T. Bergstrom, Pro ceedings of the National Academ y of Sciences 105 , 1118 (2008). [13] S. F ortunato, Physics Rep orts 486 , 75 (2010). [14] P . W. Holland, K. B. Laskey , and S. Leinhardt, So cial Netw orks 5 , 109 (1983). [15] E. Mossel, J. Neeman, and A. Sly , preprint arXiv:1202.1499 (2012). [16] L. Massoulie, preprint arXiv:1311.3085 (2013). [17] E. Mossel, J. Neeman, and A. Sly , preprint arXiv:1311.4115 (2013). [18] O. Chap elle, B. Sc h¨ olkopf, A. Zien, et al., Semi-sup ervise d le arning , vol. 2 (MIT press Cambridge, 2006). [19] A. E. Allahv erdyan, G. V er Steeg, and A. Galsty an, Europhysics Letters 90 , 18002 (2010). [20] G. V. Steeg, C. Mo ore, A. Galsty an, and A. E. Allah verdy an, Europhysics Letters (to app ear), [21] E. Eaton and R. Mansbach, in Pr o c e e dings of the Twenty-Sixth AAAI Confer enc e on A rtificial Intel ligence (2012). [22] L. Zdeb oro v´ a and F. Krzak ala, Physical Review E 76 , 031131 (2007). 9 0 0.2 0.4 0.6 0.8 1 0.5 0.6 0.7 0.8 0.9 1 α Overlap 0 0.2 0.4 0.6 0.8 1 0.5 0.6 0.7 0.8 0.9 1 α Overlap 0 0.2 0.4 0.6 0.8 1 0 0.1 0.2 0.3 0.4 0.5 α q 1 0 0.2 0.4 0.6 0.8 1 0 5 10 15 20 α c 11 c 12 c 22 FIG. 6: Semisup ervised learning in Zachary’s k arate club [34], with exp erimen ts analogous to Fig. 5: in the upp er left, the optimal parameters are giv en to the algorithm in adv ance, while in the upp er right it learns them with an EM algorithm, giving the parameters shown in the bottom panels. As in the p olitical blog netw ork, the algorithm makes a transition from a core-p eriphery structure to the correct assortative structure. [23] M. M´ ezard and R. Zecchina, Physical Review E 66 , 056126 (2002). [24] C. Cammarota and G. Biroli, Pro ceedings of the National Academy of Sciences 109 , 8850 (2012). [25] C. Cammarota and G. Biroli, The Journal of Chemical Physics 138 , 12A547 (2013). [26] A. Montanari, F. Ricci-T ersenghi, and G. Semerjian, in Pr oc ee dings of the 45th A l lerton Confer enc e (2007), p. 352359. [27] F. Ricci-T ersenghi and G. Semerjian, Journal of Statistical Mechanics: Theory and Exp eriment 2009 , P09001 (2009). [28] A. Co ja-Oghlan, in Pr oc ee dings of the Twenty-Sec ond Annual ACM-SIAM Symp osium on Discr ete Algorithms (SIAM, 2011), pp. 957–966. [29] V. Kanade, E. Mossel, and T. Schramm, preprint arXiv:1404.6325 (2014). [30] J. Y edidia, W. F reeman, and Y. W eiss, in International Joint Conferenc e on Artificial Intel ligenc e (IJCAI) (2001). [31] M. M´ ezard and G. Parisi, Eur. Phys. J. B 20 , 217 (2001). [32] L. A. Adamic and N. Glance, in Pr o c e e dings of the 3r d International Workshop on Link Disc overy (ACM, 2005), pp. 36–43. [33] P . Zhang, F. Krzak ala, J. Reichardt, and L. Zdeb orov´ a, Journal of Statistical Mec hanics: Theory and Exp erimen t 2012 , P12021 (2012). [34] W. W. Zachary , Journal of Anthropological Research pp. 452–473 (1977). [35] X. Y an, J. E. Jensen, F. Krzak ala, C. Mo ore, C. R. Shalizi, L. Zdeb orov´ a, P . Zhang, and Y. Zhu, Journal of Statistical Mec hanics: Theory and Exp eriment (to app ear), [36] C. Mo ore, X. Y an, Y. Zhu, J.-B. Rouquier, and T. Lane, in Pr o c e e dings of KDD (2011), pp. 841–849.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment