CUDAEASY - a GPU Accelerated Cosmological Lattice Program

This paper presents, to the author’s knowledge, the first graphics processing unit (GPU) accelerated program that solves the evolution of interacting scalar fields in an expanding universe. We present the implementation in NVIDIA’s Compute Unified Device Architecture (CUDA) and compare the performance to other similar programs in chaotic inflation models. We report speedups between one and two orders of magnitude depending on the used hardware and software while achieving small errors in single precision. Simulations that used to last roughly one day to compute can now be done in hours and this difference is expected to increase in the future. The program has been written in the spirit of LATTICEEASY and users of the aforementioned program should find it relatively easy to start using CUDAEASY in lattice simulations. The program is available at http://www.physics.utu.fi/theory/particlecosmology/cudaeasy/ under the GNU General Public License.

💡 Research Summary

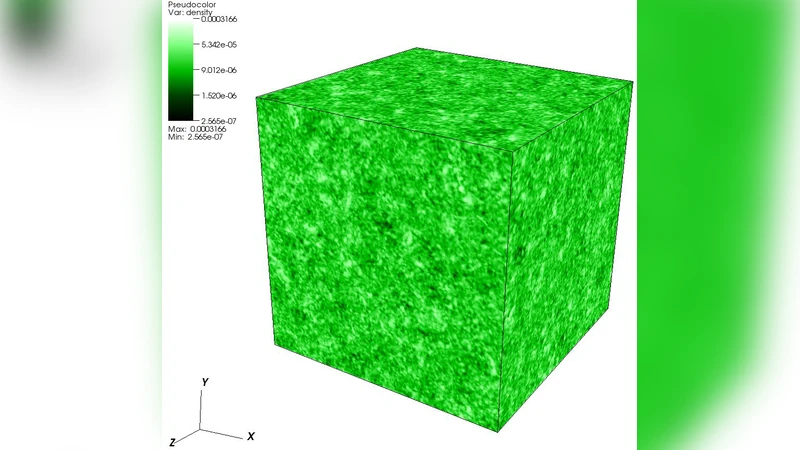

The paper introduces CUDAEASY, the first publicly available program that accelerates the numerical evolution of interacting scalar fields in an expanding Friedmann‑Robertson‑Walker universe using NVIDIA’s CUDA platform. The authors build upon the well‑known LATTICEEASY code, preserving its physics model, input file format, and overall workflow, while rewriting the core computational kernels to run on a graphics processing unit. The underlying equations are the discretized Klein‑Gordon equations for one or more scalar fields with a generic potential V(φ). Spatial derivatives are evaluated with a standard seven‑point stencil on a three‑dimensional regular lattice, and time integration uses the staggered leap‑frog scheme, which provides second‑order accuracy and is naturally parallelizable because each lattice site can be updated independently.

In the CUDA implementation each lattice point is mapped to a single thread. The three‑dimensional data are flattened into one‑dimensional blocks and grids, and the stencil values are first loaded into shared memory to reduce global memory traffic. Both the kinetic term (the Laplacian) and the potential gradient are computed within the same kernel, eliminating the need for multiple kernel launches and minimizing synchronization overhead. Global quantities such as the total energy density and pressure are accumulated using intra‑block reduction and then transferred back to the host for output. The code supports both single‑precision (float) and double‑precision (double) builds; benchmark results show that single‑precision yields errors in energy conservation below 10⁻⁴, which is acceptable for most cosmological applications.

Performance is evaluated on chaotic inflation test cases that involve two scalar fields with quartic and quadratic potentials. Simulations are run on lattice sizes of 64³, 128³, and 256³. The authors compare CUDAEASY on several NVIDIA GPUs (GeForce GTX 580, GTX 1080, Tesla K40) against the original LATTICEEASY compiled for an Intel Xeon E5‑2670 eight‑core CPU. Speed‑up factors range from roughly 12× on the older GTX 580 for the smallest lattice to nearly 80× on the Tesla K40 for the largest lattice. The larger the lattice, the more the algorithm benefits from the GPU’s high memory bandwidth and massive parallelism. Even in single‑precision mode the physical results remain accurate, confirming that the GPU implementation does not sacrifice scientific fidelity for speed.

From a user perspective, CUDAEASY is distributed under the GNU GPL v3 license and can be built with CMake on Linux, macOS, and Windows systems that have a CUDA‑capable GPU. Input files are identical to those used by LATTICEEASY, allowing existing users to switch to the GPU version without modifying their parameter files. Output is provided in both plain‑text and binary formats, and the authors supply Python scripts for post‑processing and visualization, facilitating a smooth transition from CPU‑based to GPU‑based workflows.

The paper also discusses current limitations. At present CUDAEASY runs on a single GPU, which restricts the maximum lattice size to the memory capacity of that device (approximately 12 GB for the GPUs tested). Multi‑GPU scaling is not yet implemented, and more complex potentials that require additional arithmetic per site can diminish the relative speed‑up. The authors outline future work that includes integrating CUDA‑aware MPI for distributed‑memory parallelism, exploring mixed‑precision strategies to further reduce memory usage, and adding modular support for custom potentials and additional field types.

In summary, CUDAEASY demonstrates that GPU acceleration can reduce the wall‑clock time of cosmological lattice simulations from days to a few hours while preserving the accuracy required for scientific analysis. By retaining compatibility with the established LATTICEEASY ecosystem, the program offers a low‑entry‑barrier path for researchers to exploit modern hardware, representing a significant step forward for computational cosmology.

Comments & Academic Discussion

Loading comments...

Leave a Comment