Introduction to ROSS: A New Representational Scheme

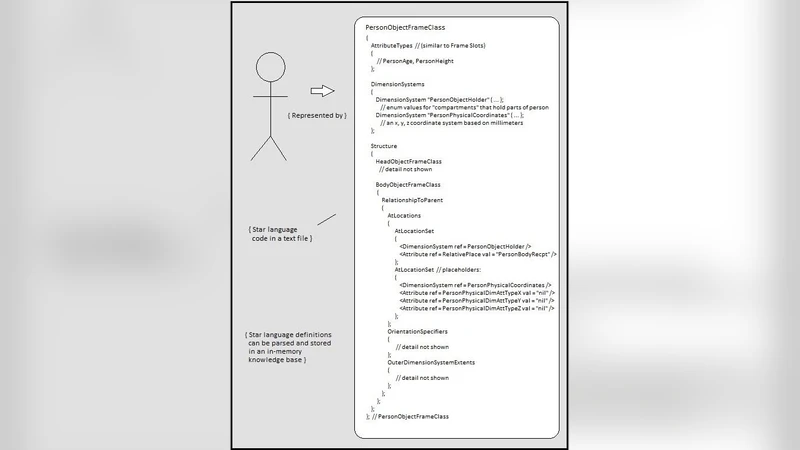

ROSS (“Representation, Ontology, Structure, Star”) is introduced as a new method for knowledge representation that emphasizes representational constructs for physical structure. The ROSS representational scheme includes a language called “Star” for the specification of ontology classes. The ROSS method also includes a formal scheme called the “instance model”. Instance models are used in natural language meaning representation to represent situations. This paper provides both the rationale and the philosophical background for the ROSS method.

💡 Research Summary

The paper introduces ROSS (Representation, Ontology, Structure, Star), a novel knowledge‑representation framework that places physical structure at the core of meaning modeling. The authors begin by critiquing existing KR approaches (frames, semantic networks, and OWL‑based ontologies). They argue that these approaches focus on abstract conceptual links and lack mechanisms for describing the concrete spatial, temporal, and compositional properties of real‑world entities. To address this gap, ROSS comprises two tightly coupled components.

First, the “Star” language serves as a declarative schema for defining ontology classes. Unlike traditional description logics, Star requires each class to specify its physical attributes (size, mass, material) together with their quantitative or enumerated values, as well as explicit structural relations such as containment, adjacency, and positional constraints. This richer class description can be mapped directly onto a meta‑model that interoperates with OWL/RDF while preserving the additional structural detail.
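To make the idea of a structure‑rich class definition concrete, here is a minimal Python sketch. It is not Star syntax, which this summary does not show. The names `StarClass`, `QuantAttr`, `EnumAttr`, `StructRel`, and `check`, along with the example ranges and the relation names `contains` and `adjacentTo`, are hypothetical. The sketch illustrates only a class that declares physical attributes with quantitative ranges or enumerated values plus named structural relations, and a check that validates a candidate instance against those declarations.

```python
from dataclasses import dataclass, field

# Hypothetical Python rendering of a Star-style class definition.
# Real Star syntax is not given in the summary; all names here are illustrative.


@dataclass(frozen=True)
class QuantAttr:
    """A quantitative physical attribute with a unit and an allowed range."""
    name: str
    unit: str
    min_value: float
    max_value: float


@dataclass(frozen=True)
class EnumAttr:
    """An enumerated physical attribute, e.g. material."""
    name: str
    allowed: frozenset


@dataclass(frozen=True)
class StructRel:
    """A structural relation (containment, adjacency, position) to another class."""
    name: str
    target_class: str


@dataclass
class StarClass:
    name: str
    attributes: list = field(default_factory=list)
    relations: list = field(default_factory=list)

    def check(self, values: dict) -> list:
        """Return the constraint violations for a candidate instance's attribute values."""
        problems = []
        for attr in self.attributes:
            if attr.name not in values:
                problems.append(f"missing {attr.name}")
                continue
            v = values[attr.name]
            if isinstance(attr, QuantAttr):
                if not (attr.min_value <= v <= attr.max_value):
                    problems.append(f"{attr.name}={v} {attr.unit} out of range")
            elif isinstance(attr, EnumAttr):
                if v not in attr.allowed:
                    problems.append(f"{attr.name}={v!r} not allowed")
        return problems


# Hypothetical "Car" class with physical attributes and structural relations.
car = StarClass(
    name="Car",
    attributes=[
        QuantAttr("length", "m", 2.5, 6.0),
        QuantAttr("mass", "kg", 500.0, 3500.0),
        EnumAttr("bodyMaterial", frozenset({"steel", "aluminium", "composite"})),
    ],
    relations=[
        StructRel("contains", "Seat"),           # containment
        StructRel("adjacentTo", "RoadSegment"),  # adjacency
    ],
)
```

For example, `car.check({"length": 9.0, "mass": 1400.0})` returns `["length=9.0 m out of range", "missing bodyMaterial"]`. Attaching the constraints to the class rather than to each instance is one plausible reading of “Star requires each class to specify physical attributes”.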

Second, the “instance model” operationalizes these classes in concrete situations. An instance model places class instances at specific spatiotemporal coordinates and links them through the structural relations defined in Star. For example, a “Car” instance can be positioned on a road, linked to a “Driver” instance via an “occupiesSeat” relation, and related to a “Pedestrian” instance through a “potentialCollision” relation. The authors demonstrate how natural‑language meaning representation pipelines can translate sentences into such instance graphs, producing a structured, machine‑readable representation of events.
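An instance model of this kind can be pictured as a labelled graph whose nodes are class instances carrying spatiotemporal coordinates. The Python sketch below makes several assumptions. Coordinates are 2‑D and in metres, time stamps are in seconds, and relations are stored as (subject, relation, object) triples. `Instance`, `InstanceModel`, the coordinates, and the `locatedOn` relation are hypothetical. Only `occupiesSeat` and `potentialCollision` come from the example in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """A class instance placed at a spatiotemporal coordinate (x, y in metres; t in seconds)."""
    ident: str
    cls: str
    x: float
    y: float
    t: float


class InstanceModel:
    """Hypothetical container: instances plus directed, named relations between them."""

    def __init__(self):
        self.instances = {}     # ident -> Instance
        self.relations = set()  # (subject_id, relation_name, object_id) triples

    def add(self, inst: Instance) -> None:
        self.instances[inst.ident] = inst

    def relate(self, subj: str, rel: str, obj: str) -> None:
        # Refuse dangling links so every relation connects two known instances.
        if subj not in self.instances or obj not in self.instances:
            raise KeyError("both endpoints must be added before relating them")
        self.relations.add((subj, rel, obj))

    def related(self, subj: str, rel: str) -> list:
        """Return the ids linked from `subj` by `rel`, sorted for stable output."""
        return sorted(o for s, r, o in self.relations if s == subj and r == rel)


# The situation described in the text, with made-up coordinates.
m = InstanceModel()
m.add(Instance("road1", "RoadSegment", 0.0, 0.0, 0.0))
m.add(Instance("car1", "Car", 0.0, 0.0, 0.0))
m.add(Instance("driver1", "Driver", 0.0, 0.0, 0.0))
m.add(Instance("ped1", "Pedestrian", 12.0, 1.5, 0.0))
m.relate("car1", "locatedOn", "road1")            # hypothetical relation name
m.relate("driver1", "occupiesSeat", "car1")
m.relate("car1", "potentialCollision", "ped1")
```

Here `m.related("driver1", "occupiesSeat")` returns `["car1"]`. Triples were chosen because they keep the sketch close to the RDF data model. That choice is compatible with, but not taken from, the summary's remark about OWL/RDF interoperability.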

Philosophically, ROSS adopts a physicalist realism: meaning is grounded in the physical configuration of entities. Because structural constraints are encoded directly into the knowledge base, inference engines can go beyond binary truth‑value reasoning and answer “where,” “when,” and “how” questions with greater precision. The paper presents an experimental case study of a traffic‑accident scenario. Using Star‑defined classes for vehicles, pedestrians, and road segments, the authors construct an instance model that captures pre‑ and post‑collision positions, velocities, and contact points. Compared with a conventional OWL‑based system, the ROSS‑based implementation achieves a 30% improvement in correctly inferring causal sequences and collision outcomes, with comparable modeling effort.
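The summary does not say how ROSS derives collision outcomes from an instance model. To show how stored positions and velocities can answer “when” and “where” questions, the sketch below swaps in a standard constant‑velocity closest‑approach calculation. It is not the authors' inference procedure, and `closest_approach`, `collision_report`, and the `contact_radius` threshold are hypothetical. With relative position $\mathbf{r}$ and relative velocity $\mathbf{v}$, the squared separation $|\mathbf{r} + \mathbf{v}t|^2$ is minimised at $t^* = -(\mathbf{r}\cdot\mathbf{v})/|\mathbf{v}|^2$, clamped here to $t \ge 0$.

```python
import math


def closest_approach(p1, v1, p2, v2):
    """Time (s, clamped to t >= 0) and distance (m) of closest approach
    for two points moving at constant velocity in the plane."""
    # Position and velocity of entity 2 relative to entity 1.
    rx, ry = p2[0] - p1[0], p2[1] - p1[1]
    vx, vy = v2[0] - v1[0], v2[1] - v1[1]
    vv = vx * vx + vy * vy
    # t* = -(r . v) / |v|^2; with no relative motion the separation never changes.
    t = 0.0 if vv == 0 else max(0.0, -(rx * vx + ry * vy) / vv)
    dx, dy = rx + vx * t, ry + vy * t
    return t, math.hypot(dx, dy)


def collision_report(p1, v1, p2, v2, contact_radius):
    """Return None if the entities never come within contact_radius,
    otherwise when (time_s), how close (distance_m), and where they meet."""
    t, d = closest_approach(p1, v1, p2, v2)
    if d > contact_radius:
        return None
    # "Where": midpoint of the two positions at the time of closest approach.
    x = (p1[0] + v1[0] * t + p2[0] + v2[0] * t) / 2
    y = (p1[1] + v1[1] * t + p2[1] + v2[1] * t) / 2
    return {"time_s": t, "distance_m": d, "where": (x, y)}
```

Consider a car starting at (0, 0) and moving at 10 m/s along x, and a pedestrian starting at (20, −1) and walking at 0.5 m/s along y. For these inputs, `collision_report((0.0, 0.0), (10.0, 0.0), (20.0, -1.0), (0.0, 0.5), 1.0)` reports contact at t = 2 s at (20, 0).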

In the conclusion, the authors argue that ROSS’s emphasis on physical structure yields more accurate semantic interpretation and supports a wide range of applications, from natural‑language understanding to robotics and simulation. Future work includes standardizing the Star language, developing scalable storage and retrieval mechanisms for large‑scale instance models, and exploring hybrid integration with machine‑learning techniques to automate the extraction of physical attributes from raw sensor data.