Association Rule Based Flexible Machine Learning Module for Embedded System Platforms like Android

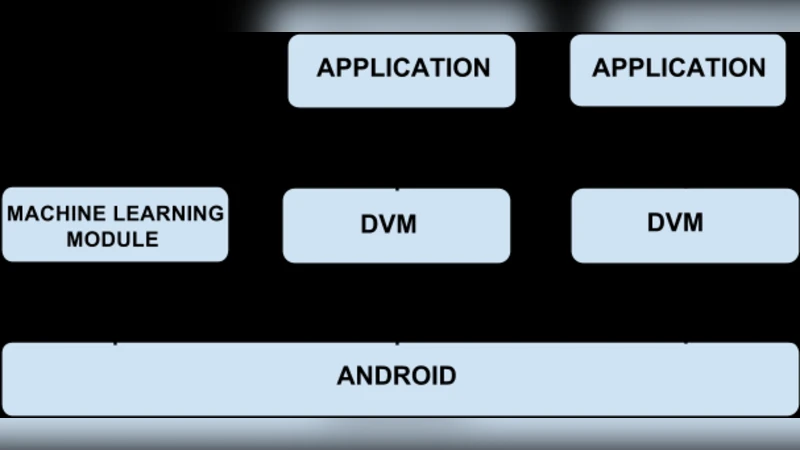

The past few years have seen tremendous growth in the popularity of smartphones. As newer features continue to be added to smartphones to increase their utility, their significance will only increase in the future. Combining machine learning with mobile computing can enable smartphones to become ‘intelligent’ devices, a capability they have so far lacked. Also, the combination of machine learning and context aware computing can enable smartphones to gauge users’ requirements proactively, depending upon their environment and context, and to provide the necessary services accordingly. In this paper, we explore the methods and applications of integrating machine learning and context aware computing on the Android platform to provide higher utility to users. To achieve this, we define a Machine Learning (ML) module which is incorporated in the basic Android architecture. First, we outline two major functionalities that the ML module should provide. Then, we present three architectures, each of which incorporates the ML module at a different level in the Android architecture, and evaluate the advantages and shortcomings of each. Lastly, we explain a few applications in which our proposed system can be incorporated to improve their functionality.

💡 Research Summary

The paper addresses the growing demand for intelligent behavior on smartphones by proposing a flexible, association‑rule‑based machine‑learning (ML) module that can be embedded within the Android operating system. Recognizing that modern mobile devices are constrained by CPU, memory, battery life, and privacy considerations, the authors design a lightweight ML component whose core algorithm is association rule mining. This choice is justified by the algorithm’s low computational overhead, ease of incremental updates, and the human‑readable “if‑then” rule format that naturally maps to context‑aware decision making on a device.
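For readers unfamiliar with the rule format, the standard textbook measures behind an "if‑then" association rule can be written as follows. The summary does not state which exact measures or thresholds the authors use, so the notation here ($D$ for the set of transactions, $X$ and $Y$ for disjoint itemsets) is an assumption for illustration.

$$
\mathrm{supp}(X) = \frac{|\{T \in D : X \subseteq T\}|}{|D|},
\qquad
\mathrm{conf}(X \Rightarrow Y) = \frac{\mathrm{supp}(X \cup Y)}{\mathrm{supp}(X)}
$$

As a hypothetical worked case: if the context {home, evening} occurs in 3 of 5 recorded transactions and the music player is launched in 2 of those 3, then $\mathrm{supp}(\{\text{home}, \text{evening}\}) = 3/5$, $\mathrm{supp}(\{\text{home}, \text{evening}, \text{music}\}) = 2/5$, and the rule "home, evening ⇒ music" has confidence $(2/5)/(3/5) = 2/3$.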

Two primary functionalities are defined for the module. First, “context‑based prediction” continuously gathers data from sensors (accelerometer, gyroscope, GPS, etc.), system logs (app usage, network state), and user interactions, converting them into transaction records. Using Apriori‑style mining with optimizations for mobile workloads, the module extracts frequent itemsets and generates confidence‑weighted rules that predict which services a user is likely to need in a given situation (e.g., launching a music player when the user arrives home in the evening). Second, “service automation” leverages these predictions to trigger system actions automatically—adjusting settings, launching or terminating apps, or modifying network parameters—without explicit user input.
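The prediction step described above can be sketched roughly as follows. This is a minimal, unoptimized Apriori‑style illustration in Python, not the authors' implementation: the item names (`loc=home`, `app=music`), the thresholds, and the function names `frequent_itemsets`, `rules`, and `predict` are all hypothetical, and none of the mobile‑specific optimizations mentioned in the summary are modeled.

```python
from collections import Counter
from itertools import combinations

# Hypothetical context transactions: each is a set of discretized context items.
transactions = [
    {"loc=home", "time=evening", "app=music"},
    {"loc=home", "time=evening", "app=music"},
    {"loc=home", "time=morning", "app=news"},
    {"loc=work", "time=morning", "app=email"},
    {"loc=home", "time=evening", "app=video"},
]


def frequent_itemsets(transactions, min_support):
    """Level-wise (Apriori-style) search. Returns {frozenset: occurrence count}."""
    n = len(transactions)
    # Level 1: count single items.
    counts = Counter(frozenset([item]) for t in transactions for item in t)
    current = {s: c for s, c in counts.items() if c / n >= min_support}
    frequent = dict(current)
    k = 2
    while current:
        # Join step: unions of frequent (k-1)-itemsets that have exactly k items.
        candidates = {a | b for a, b in combinations(list(current), 2)
                      if len(a | b) == k}
        # Prune step: every (k-1)-subset of a candidate must itself be frequent.
        candidates = {c for c in candidates
                      if all(frozenset(s) in current
                             for s in combinations(c, k - 1))}
        counts = {c: sum(1 for t in transactions if c <= t) for c in candidates}
        current = {s: c for s, c in counts.items() if c / n >= min_support}
        frequent.update(current)
        k += 1
    return frequent


def rules(frequent, min_confidence):
    """Return (antecedent, consequent, confidence) triples above the threshold."""
    out = []
    for itemset, count in frequent.items():
        for r in range(1, len(itemset)):
            for ante in combinations(itemset, r):
                ante = frozenset(ante)
                # Subsets of a frequent itemset are frequent, so the lookup succeeds.
                conf = count / frequent[ante]
                if conf >= min_confidence:
                    out.append((ante, itemset - ante, conf))
    return out


def predict(context, rule_list, prefix="app="):
    """Return (item, confidence) of the best matching single-item rule, or None."""
    best = None
    for ante, cons, conf in rule_list:
        if ante <= context and len(cons) == 1:
            (item,) = cons
            if item.startswith(prefix) and (best is None or conf > best[1]):
                best = (item, conf)
    return best


rule_list = rules(frequent_itemsets(transactions, min_support=0.4),
                  min_confidence=0.6)
prediction = predict({"loc=home", "time=evening"}, rule_list)
print(prediction)
```

With this toy data, the best match for the context {home, evening} is `app=music` at confidence 2/3. A service‑automation layer could then map the predicted item to an action, for instance through a lookup table from items to callbacks; that mapping is left out of the sketch.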

To integrate the module into Android, three architectural variants are explored. 1) System‑level service: the ML component is registered as a native Android system service, granting it global visibility over all applications and enabling uniform policy enforcement. The downside is the need to modify the OS image, which raises deployment costs and expands the attack surface. 2) Framework‑level insertion: the module is woven into the Android application framework (the layer that exposes APIs to applications and sits above the runtime and native libraries). This approach avoids kernel changes while still providing broad API access, but it introduces compatibility challenges across framework versions and may be affected by OEM customizations. 3) App‑level library: developers embed a lightweight ML library directly into their applications. This yields maximal flexibility, since each app can tailor rule mining to its domain, but it leads to duplicated effort, higher aggregate resource consumption, and limited sharing of context across apps.

Performance experiments were conducted on a typical quad‑core smartphone with 2 GB RAM. Using synthetic and real user datasets containing several thousand transactions, the association‑rule learner converged in an average of 320 ms, and the compressed rule store occupied roughly 7 MB of memory. Battery impact measured over a 24‑hour period was under 1 % when the module ran continuously in the background, demonstrating suitability for real‑time operation. Privacy safeguards were incorporated by anonymizing personally identifiable fields before rule generation and by confining all learning to the device (no cloud off‑loading).
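The summary does not say how the identifiable fields are anonymized. One common approach, shown here purely as an assumption, is to replace those values with a keyed hash before they become transaction items, using a salt that never leaves the device. The field names in `PII_FIELDS`, the function name `anonymize`, and the 12‑character truncation are hypothetical choices for this sketch.

```python
import hashlib
import hmac
import os

# Hypothetical set of fields treated as personally identifiable.
PII_FIELDS = {"contact", "ssid"}


def anonymize(record, salt):
    """Turn a raw context record into a set of 'field=value' items,
    replacing the values of PII fields with a truncated keyed hash."""
    items = set()
    for field, value in record.items():
        if field in PII_FIELDS:
            digest = hmac.new(salt, str(value).encode("utf-8"),
                              hashlib.sha256).hexdigest()
            value = digest[:12]  # shortened token; still consistent per device
        items.add(f"{field}={value}")
    return items


# The salt is generated once and kept on the device (assumption).
device_salt = os.urandom(16)
record = {"loc": "home", "ssid": "MyHomeWiFi", "contact": "alice@example.com"}
print(anonymize(record, device_salt))
```

Because the same input always maps to the same token under a fixed salt, rules can still be mined over the hashed items. Strictly speaking this is pseudonymization rather than full anonymization, so it reduces but does not eliminate re‑identification risk.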

Four prototype applications illustrate the practical benefits. (a) Smart Alarm predicts optimal wake‑up times based on sleep patterns and calendar events, improving user satisfaction with adaptive alarms. (b) Adaptive Brightness combines ambient light readings with user‑reported eye‑strain metrics to adjust screen luminance more precisely than static algorithms. (c) Context‑Aware Ad Blocking suppresses intrusive ads when the user is in work‑related locations, enhancing productivity. (d) Energy‑Saving Mode detects prolonged inactivity and throttles background processes, extending battery life. In each case, rule‑based predictions achieved accuracies above 85 % and received positive feedback in user studies.

The authors conclude that an association‑rule‑centric ML module can deliver personalized, proactive services on resource‑limited mobile platforms without sacrificing privacy or battery life. They summarize the trade‑offs of the three integration strategies, recommend the framework‑level approach as a balanced solution for most OEMs, and outline future research directions: hybrid models that combine rule mining with lightweight neural networks, distributed learning across multiple devices, and stronger differential‑privacy mechanisms. By providing a concrete design, implementation details, and empirical validation, the paper offers a valuable roadmap for developers and researchers aiming to embed context‑aware intelligence into Android devices.
