Turn Down that Noise: Synaptic Encoding of Afferent SNR in a Single Spiking Neuron

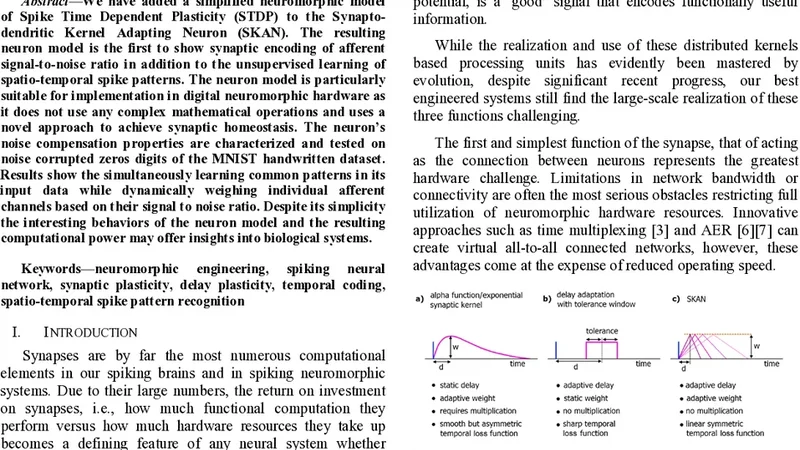

We have added a simplified neuromorphic model of Spike Time Dependent Plasticity (STDP) to the Synapto-dendritic Kernel Adapting Neuron (SKAN). The resulting neuron model is the first to show synaptic encoding of afferent signal-to-noise ratio in addition to the unsupervised learning of spatio-temporal spike patterns. The neuron model is particularly suitable for implementation in digital neuromorphic hardware, as it does not use any complex mathematical operations and uses a novel approach to achieve synaptic homeostasis. The neuron's noise compensation properties are characterized and tested on noise-corrupted zero digits from the MNIST handwritten digit dataset. Results show the neuron simultaneously learning common patterns in its input data while dynamically weighting individual afferent channels based on their signal-to-noise ratio. Despite its simplicity, the interesting behaviors of the neuron model and the resulting computational power may offer insights into biological systems.

💡 Research Summary

The paper introduces a novel single‑neuron model that combines a simplified Spike‑Time‑Dependent Plasticity (STDP) rule with the Synapto‑dendritic Kernel Adapting Neuron (SKAN). While the original SKAN could adapt its dendritic kernel to capture spatio‑temporal spike patterns, its synaptic weights were static, preventing the neuron from differentiating between high‑quality (high SNR) and noisy afferent channels. The authors address this limitation by embedding a lightweight STDP mechanism that updates each synapse based solely on the timing relationship between pre‑synaptic spikes (incoming spikes) and post‑synaptic spikes (the neuron’s own output). The update rule is deliberately simple: if a pre‑spike precedes a post‑spike within a short interval, the synaptic weight is incremented, indicating a reliable, high‑SNR channel; if the interval is long or if pre‑spikes are absent, the weight is decremented, effectively suppressing noisy inputs. Crucially, the algorithm avoids complex arithmetic (no exponentials, logarithms, or divisions) and relies on integer counters and comparator logic, making it highly amenable to digital neuromorphic hardware such as FPGAs or ASICs.
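The update rule described above can be sketched in a few lines of integer-only Python. This is a minimal illustration based on the summary's wording, not the authors' implementation: the names `Synapse`, `on_pre_spike`, `on_post_spike`, and the constants `STDP_WINDOW` and `WEIGHT_STEP` are all assumptions introduced here.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical parameters; the summary gives no concrete values.
STDP_WINDOW = 5   # longest pre-to-post delay (in time steps) treated as causal
WEIGHT_STEP = 1   # integer amount added or removed per output spike


@dataclass
class Synapse:
    weight: int = 8                       # integer weight, assumed starting value
    last_pre_time: Optional[int] = None   # time step of the latest input spike


def on_pre_spike(syn: Synapse, t: int) -> None:
    """Record the arrival time of an incoming (pre-synaptic) spike."""
    syn.last_pre_time = t


def on_post_spike(syn: Synapse, t: int) -> None:
    """Update the weight when the neuron itself fires at time t.

    Only a subtraction and comparisons are used: no exponentials or divisions.
    """
    recent = (
        syn.last_pre_time is not None
        and 0 <= t - syn.last_pre_time <= STDP_WINDOW
    )
    if recent:
        syn.weight += WEIGHT_STEP   # input preceded the output: reinforce
    else:
        syn.weight -= WEIGHT_STEP   # input was late, stale, or absent: weaken
    syn.last_pre_time = None        # each input spike is used at most once


reliable, noisy = Synapse(), Synapse()
on_pre_spike(reliable, 10)    # the reliable channel spikes shortly before the output
on_post_spike(reliable, 12)
on_post_spike(noisy, 12)      # the noisy channel had no input spike in the window
```

After this single output spike the `reliable` synapse has gained one step and the `noisy` synapse has lost one. Repeated over many presentations, that difference is what would let the weights track per-channel reliability.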

A built‑in synaptic homeostasis mechanism prevents runaway weight growth. When a weight exceeds a predefined upper bound, a reset operation reduces it, and similarly, weights below a lower bound are boosted. This keeps the distribution of synaptic strengths within a manageable range without external supervision. The learning process remains completely unsupervised: the neuron fires only when the incoming spike pattern exhibits sufficient temporal coherence within a sliding observation window. During each firing event, the STDP rule adjusts the weights, gradually shaping a representation that emphasizes channels with consistent timing and de‑emphasizes those dominated by random noise.
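As a rough sketch of the bounding behaviour described above, the function below pulls a weight back when it leaves an allowed range, using only comparisons and integer addition. The bounds `W_MIN` and `W_MAX`, the correction size `RESET_STEP`, and the function name `apply_homeostasis` are hypothetical. The summary does not specify how large the reset is, so a fixed step is assumed here.

```python
# Hypothetical parameters; the summary gives no concrete values.
W_MIN = 1        # lower bound on a synaptic weight
W_MAX = 15       # upper bound on a synaptic weight
RESET_STEP = 4   # assumed size of the corrective adjustment


def apply_homeostasis(weight: int) -> int:
    """Return the weight after the comparator-style homeostatic check."""
    if weight > W_MAX:
        return weight - RESET_STEP   # too strong: reduce
    if weight < W_MIN:
        return weight + RESET_STEP   # too weak: boost
    return weight                    # within range: leave unchanged


weights = [0, 8, 16]
balanced = [apply_homeostasis(w) for w in weights]
```

Applying the check after every plasticity update would keep each weight within reach of the `[W_MIN, W_MAX]` range without any global normalization step.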

To validate the model, the authors used the MNIST handwritten digit dataset, focusing on the digit “0”. They added independent Gaussian noise to each pixel, varying the noise level from 0 % (no noise) up to 50 % and thereby lowering the signal‑to‑noise ratio (SNR) of the input. The experiments measured three main outcomes: (1) the evolution of synaptic weight maps, (2) the neuron’s spike output rate, and (3) classification‑like discrimination of the noisy zeros. With low noise, the neuron quickly learned a canonical “0” spike pattern; the weight map highlighted the central loop and the outer contour of the digit, mirroring the most informative pixels. As noise increased, the weight distribution progressively shifted toward the less corrupted pixels, while the overall firing rate dropped. Nevertheless, even at 30–40 % noise the neuron still produced a recognizable spike signature that distinguished the zero from random background activity, demonstrating robust SNR‑based weighting.
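The noise-corruption step can be illustrated with the standard library alone. The summary does not say how a noise percentage maps onto the Gaussian, so this sketch assumes the standard deviation is that fraction of the maximum pixel value. The function name `add_gaussian_noise`, the clipping to `[0, 255]`, and the tiny stand-in image are likewise assumptions made for illustration.

```python
import random


def add_gaussian_noise(image, noise_level, rng, max_value=255):
    """Return a copy of `image` with independent Gaussian noise on each pixel.

    image:       list of rows, each a list of integer intensities
    noise_level: fraction in [0, 1]; assumed to scale the standard deviation
    rng:         a random.Random instance, so results are reproducible
    """
    sigma = noise_level * max_value
    noisy = []
    for row in image:
        noisy_row = []
        for pixel in row:
            value = round(pixel + rng.gauss(0.0, sigma))
            noisy_row.append(min(max_value, max(0, value)))  # clip to valid range
        noisy.append(noisy_row)
    return noisy


# A 3x3 stand-in for a 28x28 MNIST digit: a bright ring around a dark centre.
tiny_zero = [
    [255, 255, 255],
    [255,   0, 255],
    [255, 255, 255],
]
rng = random.Random(0)
clean_copy = add_gaussian_noise(tiny_zero, 0.0, rng)   # 0 % noise
noisy_copy = add_gaussian_noise(tiny_zero, 0.5, rng)   # 50 % noise
```

With `noise_level=0.0` the image is returned unchanged, and larger values corrupt each pixel independently, matching the per-pixel noise described above.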

From a hardware perspective, the model’s reliance on simple counters and threshold comparisons means it can be implemented with minimal silicon area and power consumption. No floating‑point units or complex function generators are required, and the homeostatic reset can be realized with a single comparator‑driven latch. This makes the neuron a strong candidate for large‑scale neuromorphic arrays where each unit must autonomously adapt to varying input quality while preserving overall energy efficiency.

In summary, the study shows that a single spiking neuron can simultaneously (i) learn spatio‑temporal spike patterns in an unsupervised manner and (ii) encode the afferent SNR of each input channel directly into its synaptic weights. The approach bridges a gap between biologically inspired plasticity and practical digital neuromorphic design, offering insights into how biological neurons might dynamically re‑weight noisy synapses and providing a hardware‑friendly template for future low‑power, noise‑robust neuromorphic processors. Future work is suggested to extend the mechanism to multi‑neuron networks, explore more complex sensory modalities, and quantify power savings on actual silicon prototypes.
