Footprint-Driven Locomotion Composition

One of the most efficient ways of generating goal-directed walking motions is to synthesise the final motion from footprints. Nevertheless, current implementations have not examined the generation of continuous motion from footprints in which different behaviours are produced automatically. Therefore, this paper presents a flexible approach to footprint-driven locomotion composition. The presented solution generates footprint-driven locomotion with flexible features such as jumping, running, and stair stepping. In addition, the system examines generating the desired motion of the character from predefined footprint patterns that determine which behaviour should be performed. Finally, the generation of transition patterns is examined, based on the velocity of the root and the number of footsteps required to reach the target behaviour smoothly and naturally.

💡 Research Summary

The paper introduces a flexible footprint‑driven locomotion composition framework that can automatically generate a wide range of character motions—including walking, running, jumping, and stair navigation—based solely on a sequence of foot placements. The authors begin by reviewing prior work, noting that most existing footprint‑based methods are limited to single‑behaviour synthesis and lack smooth transitions between different locomotion styles. To address these gaps, they construct a behavior library from motion‑capture data, where each primitive (e.g., walk, run, jump, stair step) is represented by a canonical footprint pattern and an associated joint‑trajectory template.

At runtime, the system continuously receives a stream of target footprints. It normalizes the spatial and vertical differences between successive footprints to form a feature vector, then matches this vector against the stored patterns using a similarity metric. The best‑matching primitive becomes the candidate behavior. Crucially, the root velocity of the character is also taken into account; if the required speed exceeds a threshold, the algorithm automatically switches to a higher‑speed primitive such as running or jumping.
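The matching step described above can be illustrated with a minimal sketch. Everything concrete here is an assumption made for illustration and is not taken from the paper: the two-component feature (horizontal stride and height change, both divided by a hypothetical `leg_length`), the canonical values in `PATTERNS`, Euclidean distance as the similarity metric, and the speed thresholds in `SPEED_ESCALATION`.

```python
import math

# Hypothetical canonical patterns: (stride, height change), both in leg lengths.
# Illustrative placeholder values, not data from the paper.
PATTERNS = {
    "walk": (0.8, 0.0),
    "run": (1.6, 0.0),
    "jump": (2.5, 0.0),
    "stair_step": (0.4, 0.2),
}

# Assumed escalation rule: primitive -> (maximum speed in m/s, faster primitive).
SPEED_ESCALATION = {
    "walk": (2.0, "run"),
    "run": (6.0, "jump"),
}


def footprint_feature(prev, nxt, leg_length=0.9):
    """Normalised feature from two successive footprints given as (x, y, z), z up."""
    horizontal = math.hypot(nxt[0] - prev[0], nxt[1] - prev[1])
    vertical = abs(nxt[2] - prev[2])
    return (horizontal / leg_length, vertical / leg_length)


def match_behaviour(feature, patterns=PATTERNS):
    """Return the primitive whose canonical pattern is nearest to the feature."""
    return min(patterns, key=lambda name: math.dist(feature, patterns[name]))


def select_behaviour(prev, nxt, required_speed, leg_length=0.9):
    """Pattern match first, then escalate while the required speed is too high."""
    candidate = match_behaviour(footprint_feature(prev, nxt, leg_length))
    while (candidate in SPEED_ESCALATION
           and required_speed > SPEED_ESCALATION[candidate][0]):
        candidate = SPEED_ESCALATION[candidate][1]
    return candidate


if __name__ == "__main__":
    print(select_behaviour((0, 0, 0), (0.72, 0, 0), required_speed=1.2))
    print(select_behaviour((0, 0, 0), (0.72, 0, 0), required_speed=3.0))
    print(select_behaviour((0, 0, 0), (0.36, 0, 0.18), required_speed=1.0))
```

In this sketch the stair primitive is never escalated by speed, because it has no entry in `SPEED_ESCALATION`. That is a design choice of the example, not a statement about the paper's system.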

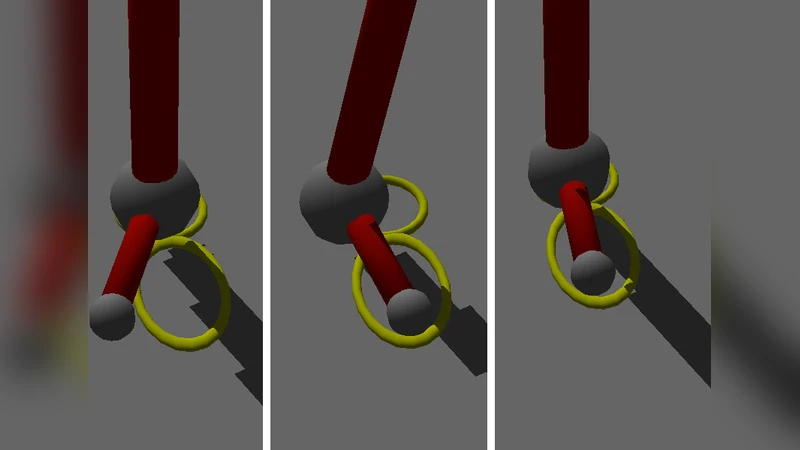

Transition generation is the core technical contribution. Once a behavior change is decided, the framework computes the number of intermediate steps needed to achieve a smooth hand‑off. This number is derived from the difference between the current root velocity and the target velocity, as well as the spatial distance to the next footprint. A dynamic transition length is then used to blend the source and target joint trajectories via inertia‑based interpolation and spline smoothing, ensuring continuity of joint angles and avoiding abrupt accelerations or decelerations. For non‑planar terrain, such as stairs, the vertical component of the footprints triggers a stair‑specific transition pattern, allowing the character to ascend or descend naturally without explicit user input.
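As a rough illustration of the transition logic above, the sketch below estimates a step count from the velocity gap and the distance to the next footprint, then blends two poses across the transition. The summary does not give the actual formula, so the rule used here (the larger of a speed-based and a distance-based estimate, clamped to a range) and all parameter names and defaults are assumptions. The blend uses a cubic ease (smoothstep) with linear interpolation of joint angles as a simple stand-in for the inertia-based interpolation and spline smoothing the paper describes.

```python
import math


def transition_step_count(current_speed, target_speed, distance_to_next,
                          max_speed_change_per_step=1.0, nominal_stride=0.7,
                          min_steps=1, max_steps=6):
    """Estimate the number of intermediate footsteps for a behaviour change.

    Assumed rule: take the larger of the steps needed to close the speed gap
    and the steps needed to cover the distance, then clamp the result.
    """
    steps_for_speed = math.ceil(
        abs(target_speed - current_speed) / max_speed_change_per_step)
    steps_for_distance = math.ceil(distance_to_next / nominal_stride)
    return max(min_steps, min(max_steps, max(steps_for_speed, steps_for_distance)))


def smoothstep(t):
    """Cubic ease with zero slope at t=0 and t=1, so the blend starts and ends gently."""
    t = min(1.0, max(0.0, t))
    return t * t * (3.0 - 2.0 * t)


def blend_poses(source_pose, target_pose, t):
    """Blend two poses (joint name -> angle in degrees) at normalised time t."""
    w = smoothstep(t)
    return {joint: (1.0 - w) * source_pose[joint] + w * target_pose[joint]
            for joint in source_pose}


if __name__ == "__main__":
    steps = transition_step_count(current_speed=1.0, target_speed=4.0,
                                  distance_to_next=0.5)
    walk_pose = {"hip": 10.0, "knee": 20.0}
    run_pose = {"hip": 30.0, "knee": 60.0}
    for i in range(steps + 1):
        print(i, blend_poses(walk_pose, run_pose, i / steps))
```

Interpolating joint angles linearly is a simplification. A production system would more likely blend rotations as quaternions and treat the root trajectory separately.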

The authors evaluate the system across four scenarios: flat walking, high‑speed running, jump‑landing, and stair navigation. Quantitative metrics—including footprint position error, root‑velocity deviation, and transition smoothness—are compared against a baseline single‑behavior system and against manually authored motion clips. Subjective expert ratings also confirm that the generated motions are virtually indistinguishable from hand‑crafted animations. The results demonstrate that the proposed method achieves higher accuracy in reaching target footprints, smoother velocity profiles, and more natural transitions.
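The summary names the three quantitative metrics but does not define them. The sketch below shows one plausible way to compute each, purely as an assumption: mean Euclidean distance for footprint position error, mean absolute difference for root-velocity deviation, and the peak change in finite-difference acceleration as a smoothness proxy (lower is smoother). The function names are hypothetical.

```python
import math


def footprint_position_error(targets, achieved):
    """Mean Euclidean distance between target and achieved foot placements."""
    return sum(math.dist(t, a) for t, a in zip(targets, achieved)) / len(targets)


def root_velocity_deviation(desired, actual):
    """Mean absolute difference between desired and actual root speeds."""
    return sum(abs(d - a) for d, a in zip(desired, actual)) / len(desired)


def transition_smoothness(speeds, dt):
    """Peak change in finite-difference acceleration; needs at least 3 samples."""
    accels = [(b - a) / dt for a, b in zip(speeds, speeds[1:])]
    return max(abs(b - a) for a, b in zip(accels, accels[1:]))


if __name__ == "__main__":
    targets = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
    achieved = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
    print(footprint_position_error(targets, achieved))
    print(root_velocity_deviation([1.0, 2.0], [1.5, 2.0]))
    print(transition_smoothness([0.0, 1.0, 3.0], dt=1.0))
```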

Finally, the paper discusses limitations such as reliance on a pre‑defined behavior library, the absence of physics‑based external force handling, and the need for real‑time user interaction integration. Future work is suggested in expanding the library with more complex actions, incorporating physics simulation for reactive behaviors, and enabling interactive editing of footprint sequences. In summary, the research delivers a practical, extensible solution for continuous, multi‑behavior locomotion synthesis driven by footprints, offering significant benefits for game development, virtual reality, and any application requiring realistic character animation.