Poisson solvers for self-consistent multi-particle simulations

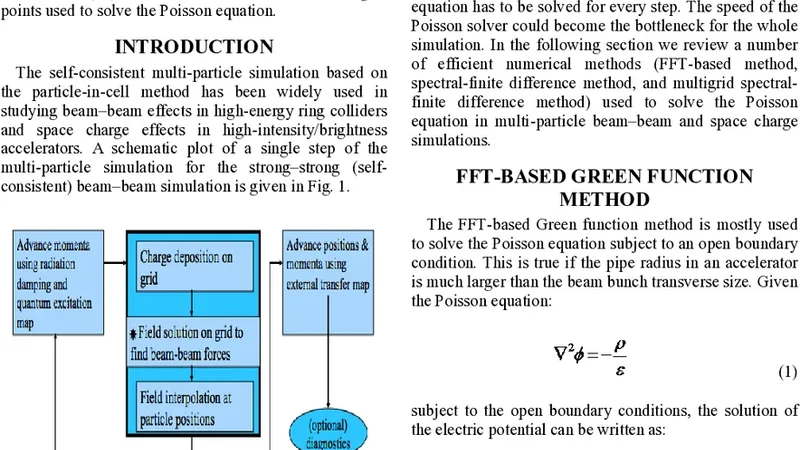

Self-consistent multi-particle simulation plays an important role in studying beam-beam effects and space charge effects in high-intensity beams. The Poisson equation has to be solved at each time-step based on the particle density distribution in the multi-particle simulation. In this paper, we review a number of numerical methods that can be used to solve the Poisson equation efficiently. The computational complexity of those numerical methods will be O(N log(N)) or O(N) instead of O(N²), where N is the total number of grid points used to solve the Poisson equation.

💡 Research Summary

The paper addresses a fundamental computational bottleneck in self‑consistent multi‑particle simulations of high‑intensity beams, namely the repeated solution of Poisson’s equation at every time step based on the instantaneous particle density distribution. Traditional direct solvers scale as O(N²) with the number of grid points N, making them impractical for the large three‑dimensional meshes (often exceeding 10⁶–10⁸ points) required to resolve realistic beam‑beam and space‑charge effects. The authors review and benchmark a suite of modern numerical techniques that reduce the computational complexity to O(N log N) or even O(N), thereby enabling real‑time or near‑real‑time simulations on contemporary high‑performance computing platforms.

First, the Fast Fourier Transform (FFT) based spectral method is examined. Under periodic boundary conditions, the discrete Poisson equation can be diagonalized in Fourier space, turning the Laplacian inversion into a simple element‑wise division. This yields an O(N log N) algorithm with excellent accuracy for uniform grids. The paper discusses practical implementation issues such as zero‑padding for non‑periodic sources, handling of singular modes, and the use of highly optimized libraries (e.g., FFTW, MKL). While FFT excels in speed, its reliance on global communication limits scalability on very large node counts and complicates the treatment of Dirichlet or Neumann boundaries.
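The diagonalization described above can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the function name `solve_poisson_periodic`, the 2D setting, the sign convention −∇²φ = ρ (physical constants absorbed into ρ), and the use of the continuous symbol k² rather than the eigenvalues of a finite-difference Laplacian are all assumptions made for illustration.

```python
import numpy as np

def solve_poisson_periodic(rho, lx, ly):
    """Solve -laplacian(phi) = rho on a periodic lx-by-ly box (hypothetical helper).

    Returns the zero-mean solution; rho is assumed to be sampled on a uniform grid.
    """
    nx, ny = rho.shape
    # Angular wavenumbers for each axis (fftfreq returns cycles per unit length).
    kx = 2.0 * np.pi * np.fft.fftfreq(nx, d=lx / nx)
    ky = 2.0 * np.pi * np.fft.fftfreq(ny, d=ly / ny)
    k2 = kx[:, None] ** 2 + ky[None, :] ** 2

    # The k = 0 mode is singular: the periodic problem fixes phi only up to a constant.
    k2[0, 0] = 1.0                      # placeholder to avoid dividing by zero
    phi_hat = np.fft.fft2(rho) / k2     # element-wise inversion of the Laplacian
    phi_hat[0, 0] = 0.0                 # choose the zero-mean solution
    return np.fft.ifft2(phi_hat).real
```

The two transforms cost O(N log N) and the division costs O(N), which is where the overall complexity comes from. Setting the zero mode to zero is one common way to handle the singular mode; it implicitly assumes the mean of the source is removed.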

Second, multigrid methods are presented in detail. The authors describe both geometric and algebraic multigrid (GMG and AMG) frameworks, outlining the smoothing (Gauss‑Seidel or Jacobi), restriction, and prolongation operators that transfer error components across a hierarchy of coarser grids. By systematically eliminating high‑frequency errors on fine levels and low‑frequency errors on coarse levels, multigrid converges in a number of cycles that is essentially independent of grid size, which gives a theoretical total cost of O(N). The paper provides performance data for V‑cycle and W‑cycle strategies, demonstrating near‑linear strong scaling up to tens of thousands of cores. The flexibility of multigrid to accommodate arbitrary Dirichlet, Neumann, or mixed boundary conditions is highlighted as a key advantage over FFT.
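The interplay of smoothing, restriction, and prolongation can be sketched for a 1D model problem. This is an illustrative geometric V‑cycle under assumed choices that do not come from the paper: −u″ = f on a uniform grid with homogeneous Dirichlet boundaries, a grid of 2^k intervals, weighted Jacobi smoothing, full‑weighting restriction, and linear interpolation. All function names are hypothetical.

```python
import numpy as np

def residual(u, f, h):
    """r = f - A u for the 3-point discretization of -u''; boundary entries stay 0."""
    r = np.zeros_like(u)
    r[1:-1] = f[1:-1] - (2.0 * u[1:-1] - u[:-2] - u[2:]) / h**2
    return r

def smooth(u, f, h, sweeps, omega=2.0 / 3.0):
    """Weighted Jacobi sweeps; damps the high-frequency part of the error."""
    for _ in range(sweeps):
        jacobi = 0.5 * (u[:-2] + u[2:] + h**2 * f[1:-1])
        u_new = u.copy()
        u_new[1:-1] = (1.0 - omega) * u[1:-1] + omega * jacobi
        u = u_new
    return u

def restrict(r):
    """Full-weighting transfer of a fine-grid residual to the next coarser grid."""
    rc = np.zeros((r.size - 1) // 2 + 1)
    rc[1:-1] = 0.25 * r[1:-3:2] + 0.5 * r[2:-2:2] + 0.25 * r[3:-1:2]
    return rc

def prolong(ec):
    """Linear interpolation of a coarse-grid correction to the next finer grid."""
    e = np.zeros(2 * (ec.size - 1) + 1)
    e[::2] = ec
    e[1::2] = 0.5 * (ec[:-1] + ec[1:])
    return e

def v_cycle(u, f, h):
    """One V-cycle; assumes u.size - 1 is a power of two and u is 0 on the boundary."""
    if u.size == 3:                       # coarsest grid: one unknown, solve exactly
        u = u.copy()
        u[1] = 0.5 * h**2 * f[1]
        return u
    u = smooth(u, f, h, sweeps=2)         # pre-smoothing
    rc = restrict(residual(u, f, h))      # coarse-grid residual equation
    ec = v_cycle(np.zeros_like(rc), rc, 2.0 * h)
    u = u + prolong(ec)                   # coarse-grid correction
    return smooth(u, f, h, sweeps=2)      # post-smoothing
```

Each level does work proportional to its number of points, and the levels shrink geometrically, so one cycle costs O(N). A W‑cycle would call the coarse solve twice per level instead of once.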

Third, the authors analyze preconditioned conjugate‑gradient (PCG) solvers. The discrete Poisson operator is symmetric positive‑definite, making it amenable to Krylov subspace methods. Effective preconditioners—such as incomplete LU (ILU), diagonal scaling, or multigrid‑based preconditioners—dramatically reduce iteration counts. The paper quantifies the trade‑off between preconditioner construction cost and overall runtime, showing that a well‑chosen multigrid preconditioner can bring PCG performance close to that of a pure multigrid solver while retaining the robustness of Krylov methods for irregular geometries and non‑uniform meshes.
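A minimal matrix‑free sketch of preconditioned conjugate gradients follows. It is not the paper's code: the 2D five‑point operator with homogeneous Dirichlet boundaries, the function names `apply_laplacian` and `pcg`, and the tolerance defaults are assumptions chosen for illustration. The preconditioner is passed in as a callable so that diagonal scaling, an incomplete factorization, or a multigrid cycle could be substituted.

```python
import numpy as np

def apply_laplacian(u, h):
    """Matrix-free 5-point -laplacian on interior points; boundary values are zero."""
    p = np.pad(u, 1)  # zero padding supplies the homogeneous Dirichlet boundary
    return (4.0 * u - p[:-2, 1:-1] - p[2:, 1:-1] - p[1:-1, :-2] - p[1:-1, 2:]) / h**2

def pcg(apply_a, b, apply_minv, tol=1e-10, max_iter=1000):
    """Preconditioned CG for a symmetric positive-definite operator.

    apply_a(v) returns A v; apply_minv(r) returns an approximation of A^{-1} r.
    Returns the solution and the number of iterations used.
    """
    x = np.zeros_like(b)
    r = b.copy()                  # residual for the zero initial guess
    z = apply_minv(r)
    p = z.copy()
    rz = np.vdot(r, z)
    b_norm = np.linalg.norm(b)
    for it in range(1, max_iter + 1):
        ap = apply_a(p)
        alpha = rz / np.vdot(p, ap)
        x += alpha * p
        r -= alpha * ap
        if np.linalg.norm(r) <= tol * b_norm:
            return x, it
        z = apply_minv(r)
        rz_new = np.vdot(r, z)
        p = z + (rz_new / rz) * p  # new search direction, A-conjugate to the old ones
        rz = rz_new
    return x, max_iter

# Example call with diagonal (Jacobi) scaling on a hypothetical 31 x 31 interior grid.
h = 1.0 / 32
b = np.ones((31, 31))
phi, iterations = pcg(lambda v: apply_laplacian(v, h), b, lambda r: r * (h**2 / 4.0))
```

On a uniform grid the diagonal of this operator is constant, so Jacobi scaling here only rescales the problem and does not reduce the iteration count. It stands in for the slot where a stronger preconditioner would go.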

A fourth contribution is the proposal of hybrid algorithms that combine the strengths of the above techniques. For example, an FFT can provide a rapid initial guess for the potential, after which a multigrid V‑cycle or a PCG iteration refines the solution to meet stringent error tolerances. The authors benchmark this hybrid approach on a 256³ grid (≈1.7 × 10⁷ points), reporting total solution times of 0.45 s compared with 0.8 s for FFT alone and 0.5 s for pure multigrid.

The paper also discusses parallel implementation details, including domain decomposition, halo exchange strategies, and the impact of communication latency on each algorithm. FFT’s global transposes dominate its communication cost, whereas multigrid and PCG rely mainly on nearest‑neighbor exchanges, leading to superior scalability on distributed‑memory systems. Memory footprints are compared: FFT requires O(N) complex storage for forward and backward transforms, multigrid stores every grid level (with geometric coarsening the levels shrink by a fixed ratio, so the total remains O(N) with a modest constant factor), and PCG adds only a few auxiliary vectors.

Finally, the authors apply the solvers to realistic accelerator scenarios. In a simulated beam‑beam interaction for a collider, using 10⁶ particles and a 10⁶‑point mesh, the hybrid solver reduced the total simulation wall‑time by more than 70 % relative to a conventional direct solver, while preserving the fidelity of the electromagnetic fields. The paper concludes by emphasizing that O(N log N) and O(N) Poisson solvers are now indispensable tools for high‑intensity beam physics, and it outlines future directions such as GPU acceleration, adaptive mesh refinement, and integration with fully implicit particle‑in‑cell frameworks.

📜 Original Paper Content
