Minimum sample size for detection of Gutenberg-Richters b-value

In this study we address the question of the minimum sample size needed for distinguishing between Gutenberg-Richter distributions with varying b-values at different resolutions. In order to account for both the complete and incomplete parts of a catalog we use the recently introduced angular frequency magnitude distribution (FMD). Unlike the gradually curved FMD, the angular FMD is fully compatible with Aki’s maximum likelihood method for b-value estimation. To obtain generic results we conduct our analysis on synthetic catalogs with Monte Carlo methods. Our results indicate that the minimum sample size used in many studies is strictly below the value required for detecting significant variations.

💡 Research Summary

The paper addresses a fundamental methodological gap in seismology: how many earthquakes must be included in a catalog to reliably detect differences in Gutenberg‑Richter (GR) b‑values. While the GR law’s b‑value is routinely used to infer stress conditions, fault heterogeneity, and temporal changes in seismicity, most published studies have relied on ad‑hoc sample sizes—often a few dozen events—without a quantitative justification. The authors propose a rigorous framework based on the angular frequency‑magnitude distribution (angular FMD), a recently introduced model that explicitly incorporates the magnitude of completeness (Mc) while remaining fully compatible with Aki’s maximum‑likelihood estimator (MLE) for b‑value. This compatibility eliminates the need for post‑hoc corrections that plague traditional “gradually curved” FMD approaches, allowing the full catalog (both complete and incomplete portions) to be used directly in the likelihood function.

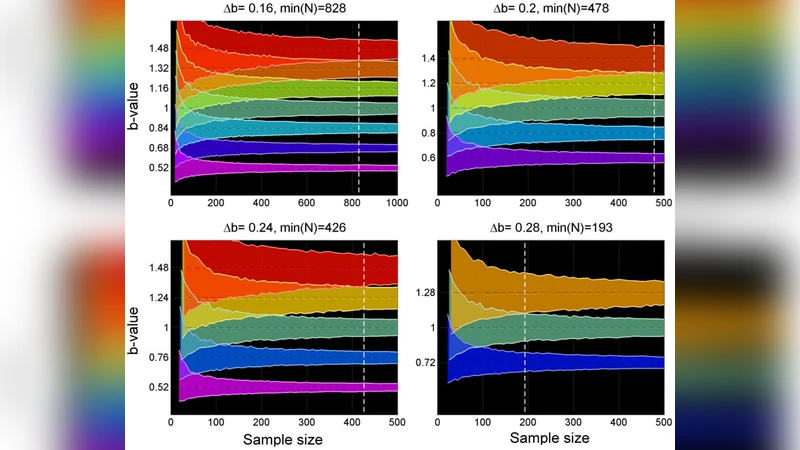

Methodologically, the authors generate synthetic earthquake catalogs using Monte Carlo simulations. They prescribe a set of true b‑values (e.g., 0.8, 1.0, 1.2) and a range of Mc values, then draw event magnitudes from the corresponding angular FMD. For each synthetic catalog, they systematically down‑sample the number of events (N = 500, 300, 200, 150, 100, 50, etc.) and apply Aki’s MLE to estimate b. By repeating this process thousands of times, they construct empirical distributions of the estimated b for each N and evaluate whether the estimated b differs from the true value by more than the 95 % confidence interval. In statistical terms, they compute the power (probability of correctly rejecting the null hypothesis of equal b‑values) as a function of N, Δb (the targeted difference between two b‑values), and Mc.

The results reveal a steep, non‑linear relationship between sample size and detection power. When the targeted Δb is modest (0.1, a typical value in many tectonic studies), the power exceeds 80 % only when the catalog contains roughly 150–200 events. For larger Δb (e.g., 0.3), the required N drops modestly but still remains above 100 events for comparable power. The analysis also shows that a lower Mc (i.e., inclusion of smaller magnitudes) can reduce the required N, but only if Mc is accurately estimated and the angular FMD correctly models the incomplete tail. Mis‑specifying Mc leads to biased b‑estimates and paradoxically increases the needed sample size.

These findings directly challenge the common practice of using 30–50 events to claim spatial or temporal b‑value variations. The authors argue that such small samples have insufficient statistical power, leading to a high risk of Type I errors (false detection of b‑value change) or Type II errors (failure to detect a real change). They provide a practical guideline: before any comparative b‑value study, researchers should (1) define the minimum detectable Δb and desired power (typically 80 % or higher), (2) estimate Mc robustly, (3) adopt the angular FMD with MLE for all events, and (4) perform a Monte Carlo power analysis tailored to their specific catalog to determine the minimum N. For the most common scenario (Δb = 0.1, 95 % confidence, 80 % power), the authors recommend a minimum of ~180–200 events.

Beyond the sample‑size recommendation, the paper’s broader contribution lies in demonstrating that the angular FMD provides a statistically sound bridge between complete and incomplete portions of a catalog. By embedding Mc directly into the likelihood, the method avoids the ad‑hoc truncation or correction steps that have historically introduced bias. Consequently, the angular FMD can be applied to real‑world catalogs where completeness varies spatially or temporally, enabling more reliable detection of genuine b‑value anomalies.

In summary, the study delivers three key take‑aways for the seismological community: (1) a quantitative, simulation‑based framework for determining the minimum catalog size needed to resolve b‑value differences, (2) validation that the angular FMD coupled with Aki’s MLE is the appropriate statistical tool for mixed‑completeness data, and (3) a clear warning that many published b‑value variation studies may be under‑powered. Adoption of the authors’ recommendations will improve the robustness of stress‑state inference, seismic‑hazard assessment, and the broader interpretation of temporal changes in seismicity.

Comments & Academic Discussion

Loading comments...

Leave a Comment