Understanding Class-level Testability Through Dynamic Analysis

It is generally acknowledged that software testing is both challenging and time-consuming. Understanding the factors that may positively or negatively affect testing effort will point to possibilities for reducing this effort. Consequently, there is a significant body of research that has investigated relationships between static code properties and testability. The work reported in this paper complements this body of research by providing an empirical evaluation of the degree of association between runtime properties and class-level testability in object-oriented (OO) systems. The motivation for the use of dynamic code properties comes from the success of such metrics in providing a more complete insight into the multiple dimensions of software quality. In particular, we investigate the potential relationships between the runtime characteristics of production code, represented by Dynamic Coupling and Key Classes, and internal class-level testability. Testability of a class is considered here at the level of unit tests, and two different measures are used to characterise those unit tests. The selected measures relate to test scope and structure: one is intended to measure the unit test size, represented by test lines of code, and the other is designed to reflect the intended design, represented by the number of test cases. In this research we found that Dynamic Coupling and Key Classes have significant correlations with class-level testability measures. We therefore suggest that these properties could be used as indicators of class-level testability. These results enhance our current knowledge and should help researchers in the area to build on previous results regarding factors believed to be related to testability and testing. Our results should also benefit practitioners in future class testability planning and maintenance activities.

💡 Research Summary

Software testing is widely recognized as a costly and time‑consuming activity, and understanding the factors that influence testing effort can lead to significant savings. Most prior work has focused on static code attributes—such as cyclomatic complexity, static coupling, and cohesion—to predict how easy or hard a class is to test. While static metrics provide valuable insight, they cannot capture the actual runtime interactions that occur when a program is executed. This paper addresses that gap by investigating whether dynamic properties of production code can serve as reliable indicators of class‑level testability in object‑oriented systems.

The authors formulate two research hypotheses: (1) classes that exhibit high dynamic coupling (that is, many or frequent method calls to other classes during execution) will require larger test suites, and (2) classes that appear as “key” in the execution trace (high centrality, high call frequency) will be associated with a greater number of test cases, reflecting a more complex test design. To test these hypotheses, three well-known open-source Java projects were selected: JHotDraw, JUnit, and jEdit. For each system, the existing unit-test suites were run as realistic usage scenarios while the JVM was instrumented to record every method invocation. The instrumentation combined Java’s built-in Instrumentation API with AspectJ pointcuts, with the aim of keeping intrusion minimal while covering the call graph comprehensively.

Dynamic coupling was quantified by constructing a weighted directed graph where nodes represent classes and edges are weighted by the total number of runtime calls from one class to another. For each class, the sum of outgoing edge weights (or a normalized version) served as its dynamic coupling score. Key classes were identified by applying a hybrid centrality measure: PageRank scores were computed on the call graph, and the in‑degree/out‑degree ratio was also considered. The top 10 % of classes by this combined score were labeled as key classes.
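The graph construction described above can be sketched in a few lines of Python. This is a minimal illustration and not the authors' tooling: the trace format (a list of `(caller_class, callee_class)` pairs), the function names, the damping factor of 0.85, and the decision to ignore self-calls are all assumptions. The summary does not say how the in-degree/out-degree ratio is combined with PageRank, so the sketch ranks by weighted PageRank alone.

```python
import math
from collections import defaultdict


def build_call_graph(trace):
    """Aggregate (caller, callee) events into weighted directed edges."""
    graph = defaultdict(lambda: defaultdict(int))
    for caller, callee in trace:
        if caller != callee:  # assumption: self-calls are not coupling
            graph[caller][callee] += 1
    return {cls: dict(out) for cls, out in graph.items()}


def dynamic_coupling(graph):
    """Dynamic coupling score: sum of outgoing edge weights per class."""
    return {cls: sum(out.values()) for cls, out in graph.items()}


def pagerank(graph, damping=0.85, iterations=50):
    """Weighted PageRank by power iteration (damping value is an assumption)."""
    nodes = set(graph)
    for out in graph.values():
        nodes.update(out)
    nodes = sorted(nodes)
    n = len(nodes)
    rank = {cls: 1.0 / n for cls in nodes}
    for _ in range(iterations):
        new_rank = {cls: (1.0 - damping) / n for cls in nodes}
        for cls in nodes:
            out = graph.get(cls, {})
            total = sum(out.values())
            if total == 0:
                # Class makes no outgoing calls: spread its rank evenly.
                for target in nodes:
                    new_rank[target] += damping * rank[cls] / n
            else:
                for target, weight in out.items():
                    new_rank[target] += damping * rank[cls] * weight / total
        rank = new_rank
    return rank


def key_classes(rank, fraction=0.10):
    """Label the top `fraction` of classes by rank (at least one) as key."""
    k = max(1, math.ceil(fraction * len(rank)))
    ordered = sorted(rank, key=lambda cls: (-rank[cls], cls))
    return ordered[:k]
```

For a real trace, `dynamic_coupling(build_call_graph(trace))` gives the per-class score and `key_classes(pagerank(graph))` gives the labelled subset; a normalized coupling score would divide each value by the total number of recorded calls.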

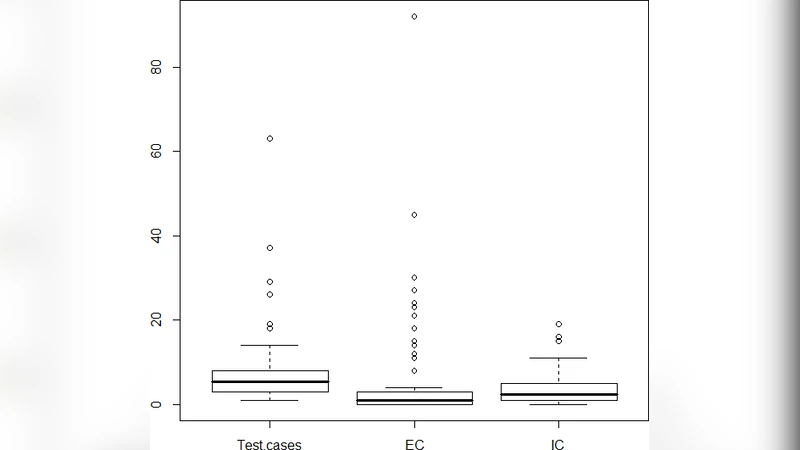

Testability was measured at the class level using two complementary metrics derived from the unit-test code base. Test Lines of Code (Test LOC) captures the size of the test implementation, while the number of test cases (Test Cases) counts the distinct @Test-annotated methods that target a given production class. Test code was mapped to production classes via explicit import statements, naming conventions, and, where necessary, static analysis of assertion targets.
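As a rough illustration of how the two testability measures might be collected, the sketch below works on Java test source held as text. It is an assumption-laden simplification: only the naming-convention mapping is shown (`FooTest` or `FooTests` maps to `Foo`), Test LOC is taken as non-blank lines that are not `//` comments (block comments are still counted), and all function names are hypothetical.

```python
import re

# Matches @Test and @Test(...), but not longer names such as @TestFactory.
TEST_ANNOTATION = re.compile(r"@Test\b")


def production_class_for(test_class_name):
    """Map a test class to a production class by naming convention."""
    for suffix in ("Tests", "Test"):
        if test_class_name.endswith(suffix) and len(test_class_name) > len(suffix):
            return test_class_name[: -len(suffix)]
    return None  # no convention matched; other mapping strategies needed


def count_loc(source):
    """Count non-blank lines that are not single-line // comments."""
    count = 0
    for line in source.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith("//"):
            count += 1
    return count


def count_test_cases(source):
    """Count @Test annotations in the source text."""
    return len(TEST_ANNOTATION.findall(source))


def summarize(test_sources):
    """Aggregate Test LOC and Test Cases per production class.

    `test_sources` maps a test class name to its source text.
    """
    summary = {}
    for test_class, source in test_sources.items():
        target = production_class_for(test_class)
        if target is None:
            continue
        entry = summary.setdefault(target, {"test_loc": 0, "test_cases": 0})
        entry["test_loc"] += count_loc(source)
        entry["test_cases"] += count_test_cases(source)
    return summary
```

A text-based count like this would miss JUnit 3 style tests that rely on `test`-prefixed method names rather than annotations, which is one reason a study of this kind would likely need parser-based analysis.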

Statistical analysis employed both Pearson’s product-moment correlation and Spearman’s rank correlation to assess linear and monotonic relationships, respectively. The results were consistent across all three subject systems. Dynamic coupling showed a moderate positive correlation with Test LOC (Pearson = 0.54, p < 0.01; Spearman = 0.51, p < 0.01) and a slightly lower but still significant correlation with Test Cases (Pearson = 0.47, p < 0.01; Spearman = 0.45, p < 0.01). Key classes exhibited stronger associations: the correlation between key-class status and Test LOC was 0.62 (Pearson, p < 0.001), and with Test Cases it rose to 0.68 (Pearson, p < 0.001). These figures indicate that classes heavily involved in runtime interactions tend to be accompanied by larger and more numerous test artifacts; as correlations, they do not by themselves establish that runtime involvement causes the additional testing effort.
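To make the difference between the two coefficients concrete, here is a small self-contained sketch of both. It is illustrative only: the function names are hypothetical, the data in the usage note is made up, and no p-values are computed. Spearman's coefficient is obtained as the Pearson correlation of the ranks, with tied values given their average rank.

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))
```

With invented values such as `coupling = [1, 2, 3, 4, 5]` and `test_loc = [1, 4, 9, 16, 25]`, the relationship is perfectly monotonic but not linear, so `spearman` returns 1 (up to rounding) while `pearson` is slightly lower. Reporting both, as the study does, guards against reading a curved relationship as a weak one.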

The paper discusses several threats to validity. Internal validity concerns include the potential measurement error introduced by instrumentation overhead, which could alter timing‑sensitive behavior. Construct validity is addressed by carefully selecting testability metrics that reflect both test size (LOC) and test design (case count), yet the authors acknowledge that other dimensions—such as test coverage or fault detection capability—are not captured. External validity is limited by the focus on Java projects; the authors suggest that future work should replicate the study in other languages and paradigms. Despite these limitations, the convergence of findings across three diverse applications (a drawing framework, a testing framework, and a text editor) strengthens confidence in the generality of the observed relationships.

From a practical standpoint, the authors propose integrating dynamic coupling and key‑class analysis into continuous integration pipelines. By automatically collecting runtime traces on each build, developers could receive real‑time alerts when a newly added class dramatically increases its dynamic coupling score, prompting early test‑design discussions or targeted refactoring. Moreover, the identified key classes could be prioritized for additional test cases, higher test‑coverage goals, or more rigorous code‑review processes. The paper also hints at the possibility of feeding dynamic metrics into machine‑learning models that predict test effort or defect proneness, thereby enabling data‑driven resource allocation.
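One way such a build-time alert could look is sketched below. Nothing here comes from the paper: the function name, the doubling threshold, and the minimum score are placeholder assumptions, and the inputs are assumed to be per-class dynamic coupling scores from the previous and current build.

```python
def coupling_alerts(previous, current, ratio_threshold=2.0, min_score=10):
    """Flag classes whose dynamic coupling score jumped between builds.

    `previous` and `current` map class names to coupling scores.
    Thresholds are illustrative assumptions, not recommended values.
    """
    alerts = []
    for cls, score in sorted(current.items()):
        if score < min_score:
            continue  # ignore classes with little runtime interaction
        baseline = previous.get(cls)
        if baseline is None:
            alerts.append((cls, score, "new class"))
        elif score >= ratio_threshold * baseline:
            alerts.append((cls, score, "increase"))
    return alerts
```

A CI step could fail or annotate the build when the returned list is non-empty; in practice the thresholds would need tuning per project, since run-to-run variation in traces can produce noisy scores.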

In conclusion, this study provides empirical evidence that dynamic software properties, specifically runtime coupling and centrality, are significantly correlated with class-level testability measures and could therefore serve as indicators of testability. By complementing static analysis with runtime information, software engineers can obtain a richer, more actionable view of where testing effort will be required. The findings open avenues for future research, including the exploration of dynamic metrics in other quality attributes (e.g., maintainability, security), the development of automated test-effort estimation tools, and the extension of the methodology to large-scale, multi-language ecosystems.
