Engineering Autonomous Driving Software

A large number of people with heterogeneous knowledge and skills running a project together need an engineering process that is adaptable as well as target- and skill-specific. This especially holds for a project to develop a highly innovative, autonomously driving vehicle to participate in the 2007 DARPA Urban Challenge. In this contribution, we present essential elements of a software and systems engineering process to develop a so-called artificial intelligence capable of driving autonomously in complex urban situations. The process itself includes agile concepts such as a test-first approach, continuous integration of all software modules, and reliable release and configuration management assisted by software tools in integrated development environments. However, one of the most important elements for efficient and rigorous development is the ability to test the behavior of the developed system in a flexible and modular simulation of urban situations, both interactively and in unattended runs. We call this the simulate-first approach.

Research Summary

The paper describes a software and systems engineering process designed for a heterogeneous, multidisciplinary team tasked with building an autonomous vehicle for the 2007 DARPA Urban Challenge. Recognizing that traditional waterfall methods are ill‑suited for such a fast‑moving, high‑risk project, the authors adopt a set of modern, agile‑inspired practices and integrate them with a powerful, modular urban‑driving simulator. The core of the process is threefold: a test‑first approach, continuous integration (CI), and automated release and configuration management.

First, every functional requirement is expressed as an automated test case before any code is written. Unit, integration, and system tests are stored alongside the requirements, ensuring that a feature cannot be considered complete until its test passes. This early validation reduces rework and makes requirement changes easier to accommodate.
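To make the test-first idea concrete, the sketch below expresses a hypothetical stop-line requirement as unit tests together with the minimal code that satisfies them. The requirement, the function names `stopping_distance` and `must_brake`, and the 0.5 s default reaction time are illustrative assumptions, not taken from the paper; the formula is the standard reaction distance plus braking distance, v·t_r + v²/(2a).

```python
import unittest


# Hypothetical requirement (illustrative only): the planner must start braking
# early enough to stop before a stop line.
def stopping_distance(speed_mps: float, decel_mps2: float,
                      reaction_time_s: float = 0.5) -> float:
    """Reaction distance v*t_r plus braking distance v^2 / (2a), in metres."""
    if decel_mps2 <= 0:
        raise ValueError("deceleration must be positive")
    return speed_mps * reaction_time_s + speed_mps ** 2 / (2 * decel_mps2)


def must_brake(distance_to_line_m: float, speed_mps: float,
               decel_mps2: float, reaction_time_s: float = 0.5) -> bool:
    """True once the stop line is no farther away than the stopping distance."""
    return stopping_distance(speed_mps, decel_mps2, reaction_time_s) >= distance_to_line_m


# In a test-first workflow these tests are written (and fail) before the
# functions above exist; the feature counts as done only when they all pass.
class TestStopLineRequirement(unittest.TestCase):
    def test_standstill_needs_no_distance(self):
        self.assertEqual(stopping_distance(0.0, 3.0), 0.0)

    def test_urban_speed(self):
        # 10 m/s with 4 m/s^2 and 0.5 s reaction time: 5 m + 12.5 m
        self.assertAlmostEqual(stopping_distance(10.0, 4.0), 17.5)

    def test_brake_decision(self):
        self.assertTrue(must_brake(15.0, 10.0, 4.0))
        self.assertFalse(must_brake(30.0, 10.0, 4.0))

    def test_rejects_non_positive_deceleration(self):
        with self.assertRaises(ValueError):
            stopping_distance(10.0, 0.0)


if __name__ == "__main__":
    # exit=False keeps the interpreter running after the test report.
    unittest.main(argv=["stop_line_tests"], exit=False)
```

Keeping the test next to the requirement it encodes means a later change to the requirement shows up immediately as a failing test rather than as silent drift.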

Second, the team uses a version‑control system (Git or Subversion) coupled with a CI server (Jenkins) to trigger a full build and test suite on every commit. The CI pipeline runs unit tests, integration tests, and finally a full‑system test that exercises the vehicle’s perception, planning, and control modules. Immediate feedback prevents integration drift and keeps the whole code base in a releasable state at all times.
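A minimal sketch of the fail-fast staging described above, assuming each stage is a command whose exit code signals success. This is plain Python, not a Jenkins configuration; the stage names mirror the text, and the placeholder commands stand in for real build and test invocations.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StageResult:
    name: str
    passed: bool
    returncode: int


def run_pipeline(stages):
    """Run (name, argv) stages in order and stop at the first failing stage."""
    results = []
    for name, argv in stages:
        proc = subprocess.run(argv, capture_output=True, text=True)
        results.append(StageResult(name, proc.returncode == 0, proc.returncode))
        if proc.returncode != 0:
            break  # later, more expensive stages are skipped
    return results


if __name__ == "__main__":
    # Placeholder commands; a real pipeline would call the build and test tools.
    py = sys.executable
    stages = [
        ("unit", [py, "-c", "print('unit tests ok')"]),
        ("integration", [py, "-c", "import sys; sys.exit(1)"]),  # simulated failure
        ("system", [py, "-c", "print('full-system simulation')"]),
    ]
    for r in run_pipeline(stages):
        print(f"{r.name}: {'PASS' if r.passed else 'FAIL'} (exit {r.returncode})")
```

Ordering cheap stages first means a broken unit test is reported quickly, before the expensive full-system run is even started.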

Third, release and configuration management are fully automated. Docker containers and Ansible scripts capture the exact runtime environment, allowing developers to reproduce builds on any machine, to deploy to the simulator, and to push updates to the physical vehicle with a single command. This automation guarantees reproducibility, simplifies debugging, and shortens the release cycle from days to hours.
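One way to check that the simulator and the vehicle really run the same release is to fingerprint the release manifest. The sketch below assumes a manifest held as a Python dictionary of module versions and parameters; the field names and values are hypothetical, and the sketch complements rather than describes the container and scripting setup mentioned above.

```python
import hashlib
import json


def config_fingerprint(manifest: dict) -> str:
    """SHA-256 over a canonical JSON encoding: sorted keys, no extra whitespace."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical release manifest: module versions and tuned parameters only.
# The deployment target (simulator or vehicle) is deliberately not part of it.
release = {
    "modules": {"perception": "1.4.2", "planning": "2.0.1", "control": "0.9.7"},
    "parameters": {"max_speed_mps": 13.4, "lane_half_width_m": 1.75},
}

if __name__ == "__main__":
    # Same content, different key order: the fingerprints must match.
    reordered = {
        "parameters": {"lane_half_width_m": 1.75, "max_speed_mps": 13.4},
        "modules": {"control": "0.9.7", "planning": "2.0.1", "perception": "1.4.2"},
    }
    print(config_fingerprint(release) == config_fingerprint(reordered))  # True
```

Sorting keys before hashing makes the fingerprint independent of dictionary order, and keeping the deployment target out of the manifest lets a simulator deployment and a vehicle deployment of the same release compare equal.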

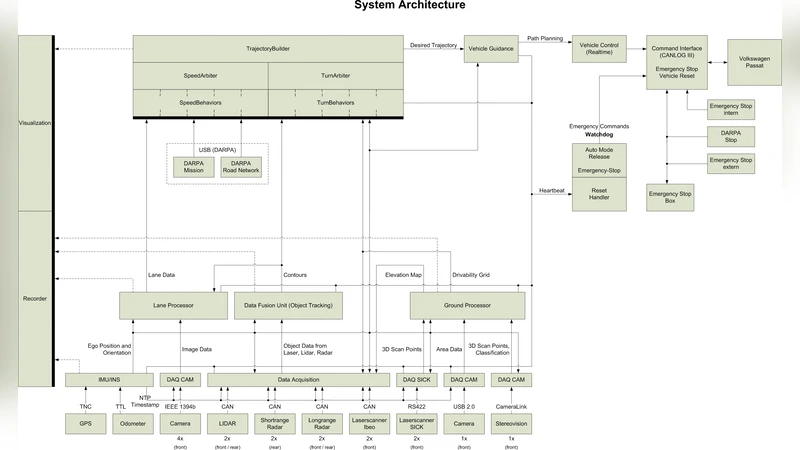

The most distinctive contribution is the “simulate‑first” methodology. Because real‑world testing of an autonomous car is expensive, dangerous, and time‑consuming, the authors built a high‑fidelity urban driving simulator. The simulator models sensors (LiDAR, camera, radar), vehicle dynamics, traffic rules, and dynamic actors such as pedestrians and other vehicles. Its architecture is modular: each component can be swapped or extended, and the whole system communicates with the vehicle software via ROS, preserving the same interfaces used on the actual car.
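The key architectural point, that simulated and real components sit behind the same interface, can be sketched with a Python `Protocol`. The `ObstacleSensor` interface, the `SimulatedLidar` class, and the lane-width value are hypothetical; the sketch deliberately avoids ROS so it stays self-contained, and a vehicle-side class wrapping the real sensor driver would only need to provide the same `read()` method.

```python
import random
from dataclasses import dataclass
from typing import Iterable, List, Optional, Protocol


@dataclass(frozen=True)
class Obstacle:
    x: float  # metres ahead of the vehicle (negative = behind)
    y: float  # metres to the left (positive) or right (negative)


class ObstacleSensor(Protocol):
    """Interface shared by simulated and real sensors (hypothetical)."""
    def read(self) -> List[Obstacle]: ...


class SimulatedLidar:
    """Simulator-side sensor: ground-truth obstacles plus optional Gaussian noise."""

    def __init__(self, obstacles: Iterable[Obstacle], noise_std: float = 0.0, seed: int = 0):
        self._obstacles = list(obstacles)
        self._noise_std = noise_std
        self._rng = random.Random(seed)  # seeded so simulation runs are repeatable

    def read(self) -> List[Obstacle]:
        return [
            Obstacle(o.x + self._rng.gauss(0.0, self._noise_std),
                     o.y + self._rng.gauss(0.0, self._noise_std))
            for o in self._obstacles
        ]


def nearest_obstacle_in_lane(sensor: ObstacleSensor,
                             lane_half_width_m: float = 1.75) -> Optional[float]:
    """Planner logic: depends only on the interface, never on the implementation."""
    ahead = [o.x for o in sensor.read()
             if o.x > 0 and abs(o.y) <= lane_half_width_m]
    return min(ahead) if ahead else None


if __name__ == "__main__":
    sim = SimulatedLidar([Obstacle(12.0, 0.5), Obstacle(8.0, 4.0),
                          Obstacle(-3.0, 0.0), Obstacle(20.0, -1.0)])
    print(nearest_obstacle_in_lane(sim))  # 12.0
```

Because the planner only sees `ObstacleSensor`, swapping the simulated sensor for the real one changes no planner code, which is what makes simulation results transferable to the car.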

Scenario creation is automated from city map data, generating intersections, lanes, traffic signs, and parking zones. Test scenarios are scripted in a Python‑based domain‑specific language, enabling the generation of thousands of variations. The simulator can run in two modes: an interactive mode for developers to visualize and tweak parameters in real time, and an unattended batch mode that executes large numbers of scenarios in parallel on a compute cluster. Results are collected automatically, producing quantitative metrics (collision count, rule violations, distance traveled) and qualitative logs (decision‑making traces). This feedback loop allows rapid iteration on perception algorithms, path‑planning strategies, and control policies.
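The unattended batch mode can be sketched as scenario variation followed by parallel execution and metric aggregation. This is not the paper's DSL: plain data classes and `itertools.product` stand in for scenario scripting, a local `ProcessPoolExecutor` stands in for the compute cluster, and `run_scenario` is a hypothetical stub whose toy failure rules exist only so there are numbers to aggregate.

```python
import itertools
from concurrent.futures import ProcessPoolExecutor
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Scenario:
    intersection: str
    oncoming_vehicles: int
    pedestrian_crossing: bool
    ego_speed_mps: float


def generate_scenarios() -> List[Scenario]:
    """Cross product of a few scenario parameters (values are illustrative)."""
    intersections = ["four_way_stop", "t_junction", "roundabout"]
    traffic = [0, 2, 5]
    pedestrians = [False, True]
    speeds = [5.0, 10.0]
    return [Scenario(*combo)
            for combo in itertools.product(intersections, traffic, pedestrians, speeds)]


def run_scenario(s: Scenario) -> Dict:
    """Hypothetical stub for one unattended simulator run; the rules are toy rules."""
    collision = s.pedestrian_crossing and s.ego_speed_mps > 8.0 and s.oncoming_vehicles >= 5
    violation = s.intersection == "four_way_stop" and s.ego_speed_mps > 8.0
    return {"scenario": s, "collisions": int(collision),
            "rule_violations": int(violation), "distance_m": 150.0}


def summarize(results: List[Dict]) -> Dict:
    """Aggregate per-run metrics into the batch report."""
    return {
        "scenarios": len(results),
        "collisions": sum(r["collisions"] for r in results),
        "rule_violations": sum(r["rule_violations"] for r in results),
        "distance_m": sum(r["distance_m"] for r in results),
        "failed": [r["scenario"] for r in results
                   if r["collisions"] or r["rule_violations"]],
    }


if __name__ == "__main__":
    # Scenarios are independent, so they can be farmed out in parallel.
    with ProcessPoolExecutor() as pool:
        results = list(pool.map(run_scenario, generate_scenarios()))
    report = summarize(results)
    print(report["scenarios"], report["collisions"],
          report["rule_violations"], len(report["failed"]))  # 36 3 6 8
```

Because each scenario run is independent, the same map-then-summarize structure carries over to a cluster scheduler; only the executor changes, while the list of failed scenarios points developers at the cases to replay interactively.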

Organizationally, the team is divided into clearly defined roles—domain experts, test engineers, CI operators—so responsibilities are unambiguous. Knowledge sharing is facilitated through a wiki, regular sprint reviews, and video conferences, which mitigates the challenges of geographic dispersion and differing expertise levels. Risk mitigation is achieved by sandboxing critical modules (e.g., lane‑keeping, obstacle avoidance) and by keeping the simulator‑vehicle interface identical, thus reducing hardware‑dependency surprises.

Results reported in the paper show substantial improvements after the process was adopted. The average test cycle dropped from 48 hours to roughly 6 hours, and the proportion of defects caught during testing rose from about 30 % to 70 %. Regression testing in the simulator reduced on‑road collisions during final validation by 80 %. In the DARPA Urban Challenge, the vehicle placed fourth out of ten teams, excelling particularly in complex intersection handling and pedestrian avoidance, the areas most heavily exercised in simulation.

The authors also discuss limitations and future work. While the simulator captures many physical phenomena, reproducing exact sensor noise and rare environmental conditions remains difficult; they suggest incorporating machine‑learning‑based sensor models to improve realism. Automation currently covers testing and deployment, but extending it to requirements traceability and design documentation is an open goal. Finally, portions of the toolchain and the simulator have been released as open source, inviting community contributions to further refine the approach.

In summary, the paper presents a concrete, end‑to‑end engineering workflow that blends test‑first development, continuous integration, and automated release management with a flexible urban‑driving simulation environment. This “simulate‑first” process delivers faster feedback, higher software quality, and smoother collaboration for a complex autonomous‑driving project, and its success in the DARPA Urban Challenge validates its practicality for both academic research and industrial development.
