Scalable Density-Based Distributed Clustering

Clustering has become an increasingly important task in analysing huge amounts of data. Traditional applications require that all data be located at the site where it is scrutinized. Nowadays, large amounts of heterogeneous, complex data reside on different, independently working computers which are connected to each other via local or wide area networks. In this paper, we propose a scalable density-based distributed clustering algorithm which allows a user-defined trade-off between clustering quality and the number of transmitted objects from the different local sites to a global server site. Our approach consists of the following steps: First, we order all objects located at a local site according to a quality criterion reflecting their suitability to serve as local representatives. Then we send the best of these representatives to a server site where they are clustered with a slightly enhanced density-based clustering algorithm. This approach is very efficient, because the local determination of suitable representatives can be carried out quickly and independently at each site. Furthermore, based on the scalable number of the most suitable local representatives, the global clustering can be done very effectively and efficiently. In our experimental evaluation, we will show that our new scalable density-based distributed clustering approach results in high quality clusterings with scalable transmission cost.

💡 Research Summary

In today’s data‑intensive landscape, massive and heterogeneous datasets are often stored across many autonomous machines that communicate only through limited‑bandwidth networks. Traditional clustering algorithms assume that all data can be gathered at a single processing site, an assumption that quickly becomes untenable when dealing with terabytes of sensor readings, mobile logs, or distributed social media streams. The paper “Scalable Density‑Based Distributed Clustering” addresses this challenge by proposing a novel framework that balances clustering quality against the amount of data that must be transmitted from the local sites to a central server.

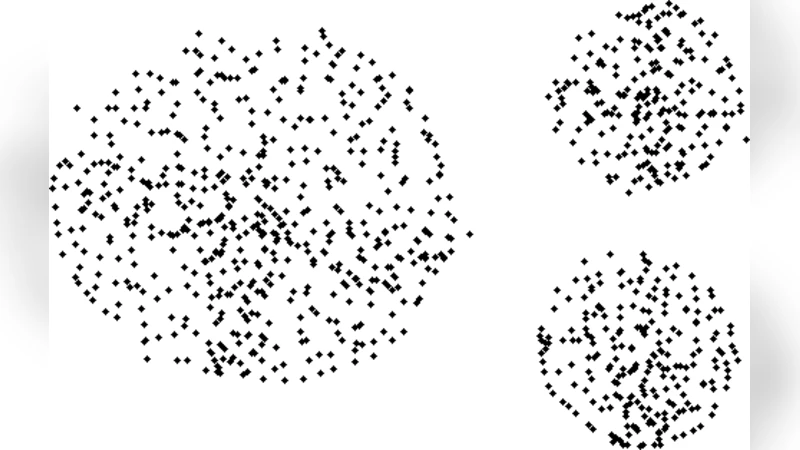

The core idea is to let each local site independently select a small set of “representative” objects that best capture the underlying density structure of its own data. To achieve this, the authors define a quality score Q(x) for every local point x. The score combines three components: (i) a core‑point indicator (how many ε‑neighbors x has relative to a locally estimated minPts), (ii) a centrality measure (inverse of the average distance to its ε‑neighbors), and (iii) a boundary penalty (how likely x is to lie on a cluster border). By weighting these components (parameters α, β, γ), each site ranks its points and extracts the top τ % (or a fixed K) as representatives R_i. The parameter τ is user‑controlled, providing a direct trade‑off: a smaller τ reduces network traffic but may sacrifice some clustering fidelity.
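The ranking step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' exact formula: the summary names the three components but not how they are computed, so the capped neighbor ratio, the bounded centrality term `1 / (1 + mean_d / eps)`, the "fraction of denser neighbors" boundary penalty, and the function names `quality_scores` and `select_representatives` are all hypothetical choices made for this example.

```python
import math


def quality_scores(points, eps, min_pts, alpha=1.0, beta=1.0, gamma=1.0):
    """Return one score per point; higher means a better representative.

    The three terms below are assumed stand-ins for the components named
    in the text, not formulas taken from the paper.
    """
    # Brute-force eps-neighborhoods, excluding the point itself.
    neighbors = [
        [j for j, q in enumerate(points) if j != i and math.dist(p, q) <= eps]
        for i, p in enumerate(points)
    ]
    counts = [len(n) for n in neighbors]

    scores = []
    for i, p in enumerate(points):
        nbrs = neighbors[i]
        if not nbrs:
            # Isolated point: no core or centrality credit, full penalty.
            scores.append(-gamma)
            continue
        # (i) Core-point indicator: neighbor count relative to min_pts, capped at 1.
        core = min(1.0, counts[i] / min_pts)
        # (ii) Centrality: decreases as the mean distance to eps-neighbors grows.
        mean_d = sum(math.dist(p, points[j]) for j in nbrs) / len(nbrs)
        centrality = 1.0 / (1.0 + mean_d / eps)
        # (iii) Boundary penalty: share of neighbors that are denser than p.
        border = sum(1 for j in nbrs if counts[j] > counts[i]) / len(nbrs)
        scores.append(alpha * core + beta * centrality - gamma * border)
    return scores


def select_representatives(points, scores, tau):
    """Keep the top tau fraction of points (at least one), best first."""
    k = max(1, math.ceil(tau * len(points)))
    ranked = sorted(range(len(points)), key=lambda i: scores[i], reverse=True)
    return [points[i] for i in ranked[:k]]
```

Calling `select_representatives(points, quality_scores(points, eps, min_pts), tau)` at each site yields the set R_i to transmit. The brute-force neighborhood search is quadratic in the number of local points; a spatial index would be needed to approach the O(N_i log N_i) local cost quoted in the summary.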

All representatives from the participating sites are then sent to a global server. The server does not simply run the classic DBSCAN on this reduced set; instead it employs an “enhanced DBSCAN” that adapts the density parameters to the sparsified data. Specifically, the global ε is scaled based on the observed average inter‑representative distance, and minPts is lowered proportionally to the size of the representative set, preventing excessive labeling of points as noise. Moreover, the algorithm includes an optional feedback loop: if the connectivity between representatives is weak in certain regions, the server can request additional “auxiliary” points from the corresponding local sites to refine cluster boundaries.
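One way to picture the parameter adaptation on the server is the sketch below. It rests on several assumptions that the summary does not confirm: "average inter-representative distance" is read as the mean nearest-neighbor distance, the multiplier `scale` and the floor of 2 on minPts are arbitrary illustrative values, and the name `adapt_parameters` is hypothetical.

```python
import math


def adapt_parameters(reps, n_total, local_eps, local_min_pts, scale=2.0):
    """Derive (global_eps, global_min_pts) for clustering the representatives.

    reps          -- list of representative points (coordinate tuples)
    n_total       -- number of original points across all sites
    local_eps     -- eps used at the local sites
    local_min_pts -- minPts used at the local sites
    scale         -- assumed multiplier on the mean nearest-neighbor distance
    """
    if len(reps) < 2:
        raise ValueError("need at least two representatives")

    # Mean distance from each representative to its nearest other representative.
    nearest = [
        min(math.dist(p, q) for j, q in enumerate(reps) if j != i)
        for i, p in enumerate(reps)
    ]
    mean_nn = sum(nearest) / len(nearest)

    # Widen eps so that the thinned-out representatives can still connect.
    global_eps = max(local_eps, scale * mean_nn)

    # Lower minPts in proportion to the fraction of points that was kept.
    kept_fraction = len(reps) / n_total
    global_min_pts = max(2, round(local_min_pts * kept_fraction))
    return global_eps, global_min_pts
```

The resulting pair can then be passed to any DBSCAN implementation run on the representatives. Taking the maximum with `local_eps` keeps the global radius from shrinking below the local one when the representatives happen to lie close together.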

From a computational standpoint, the local phase requires O(N_i log N_i) time per site (dominated by sorting the quality scores) and O(N_i) memory, while transmitting only τ·N_i points. The global phase runs in O(|R| log |R|) time, where |R| = τ·∑N_i is the total number of representatives; this is far below the O(N log N) cost of a centralized DBSCAN on the full dataset of N = ∑N_i points. Because the local computations run independently at each site and the sites transmit their representatives in parallel, the overall system exhibits near‑linear scalability with respect to both the number of sites and the total data volume.
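The cost figures above can be made concrete with a small calculation. The sketch below only evaluates the stated formulas (|R| as the sum of per-site representative counts, and n·log n as a cost proxy); the function name `transmission_summary` and the choice of a base-2 logarithm are assumptions for illustration, and the ratio it reports is an asymptotic estimate rather than a measured speedup.

```python
import math


def transmission_summary(site_sizes, tau):
    """Estimate transmitted volume and relative global clustering cost.

    site_sizes -- list with the number of points N_i held by each site
    tau        -- fraction of points each site sends as representatives
    """
    n_total = sum(site_sizes)
    # Each site sends the top tau fraction of its points, rounded up.
    sent_per_site = [math.ceil(tau * n) for n in site_sizes]
    n_reps = sum(sent_per_site)

    # n * log2(n) as a proxy for index-supported DBSCAN cost.
    central_cost = n_total * math.log2(n_total)
    global_cost = n_reps * math.log2(n_reps)

    return {
        "n_total": n_total,
        "n_representatives": n_reps,
        "transmission_ratio": n_reps / n_total,
        "cost_ratio": central_cost / global_cost,
    }
```

For example, `transmission_summary([1000, 2000, 5000], tau=0.25)` describes three sites that together send a quarter of their 8000 points to the server. The `cost_ratio` entry exceeds 1/τ because the logarithmic factor also shrinks with |R|.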

The authors validate their approach on a mixture of synthetic benchmarks (varying dimensionality, density, and noise levels) and real‑world massive datasets, including millions of geotagged social media posts, tens of millions of mobile sensor logs, and high‑dimensional image feature vectors. Evaluation metrics comprise F‑measure, Normalized Mutual Information (NMI), transmission ratio, and runtime breakdown. Results show that with τ as low as 5 %, the distributed method attains clustering quality within 2–3 % of a full‑data DBSCAN while transmitting less than 5 % of the original points. Compared with existing distributed DBSCAN variants (e.g., DDBC, MR‑DBSCAN), the proposed framework achieves substantially higher accuracy for the same communication budget, especially in high‑dimensional scenarios where naïve random sampling fails to preserve cluster structure.

Despite its strengths, the paper acknowledges several limitations. The quality score relies on a reasonable local estimate of ε and minPts; if a site’s data are highly skewed or extremely sparse, the representative set may miss subtle sub‑clusters. Fixed‑percentage transmission can also lead to imbalance when one site holds a disproportionate share of the data, potentially causing under‑representation of that site’s clusters. The authors suggest future work on adaptive τ selection based on local density variance, multi‑scale representative selection (including both core and boundary points), and privacy‑preserving encryption of representatives for sensitive applications.

In summary, the proposed scalable density‑based distributed clustering framework offers a practical solution for large‑scale, geographically dispersed data mining. By coupling a principled, quality‑driven representative selection at the edge with a dynamically tuned global density‑based algorithm, it delivers high‑quality clusterings while dramatically curbing network traffic and preserving the ability to scale to billions of data points. The work opens avenues for further research into adaptive communication strategies, secure distributed analytics, and real‑time streaming extensions.