Approximately Optimal Mechanism Design: Motivation, Examples, and Lessons Learned

Optimal mechanism design enjoys a beautiful and well-developed theory, and also a number of killer applications. Rules of thumb produced by the field influence everything from how governments sell wireless spectrum licenses to how the major search engines auction off online advertising. There are, however, some basic problems for which the traditional optimal mechanism design approach is ill-suited — either because it makes overly strong assumptions, or because it advocates overly complex designs. The thesis of this paper is that approximately optimal mechanisms allow us to reason about fundamental questions that seem out of reach of the traditional theory. This survey has three main parts. The first part describes the approximately optimal mechanism design paradigm — how it works, and what we aim to learn by applying it. The second and third parts of the survey cover two case studies, where we instantiate the general design paradigm to investigate two basic questions. In the first example, we consider revenue maximization in a single-item auction with heterogeneous bidders. Our goal is to understand if complexity — in the sense of detailed distributional knowledge — is an essential feature of good auctions for this problem, or alternatively if there are simpler auctions that are near-optimal. The second example considers welfare maximization with multiple items. Our goal here is similar in spirit: when is complexity — in the form of high-dimensional bid spaces — an essential feature of every auction that guarantees reasonable welfare? Are there interesting cases where low-dimensional bid spaces suffice?

💡 Research Summary

The paper surveys the emerging paradigm of approximately optimal mechanism design, arguing that traditional optimal design, while elegant and powerful, often fails to address real‑world constraints such as limited information, computational tractability, or simplicity of implementation. The author first revisits two canonical optimal mechanisms: the Vickrey auction, which maximizes social welfare in a single‑item setting, and Myerson’s auction, which maximizes expected revenue when bidders’ values are drawn from known distributions. Both examples illustrate the appeal of optimality but also expose the fragility of the approach when the underlying assumptions are relaxed.
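As a minimal sketch of the two canonical mechanisms, the Python below implements a sealed-bid second-price (Vickrey) auction with an optional reserve, together with the virtual valuation φ(v) = v − (1 − F(v))/f(v) on which Myerson's auction is built. The function names and the uniform-[0, 1] distribution used in the usage note are illustrative assumptions, not taken from the paper.

```python
from typing import Callable, List, Optional, Tuple


def vickrey_auction(
    bids: List[float], reserve: float = 0.0
) -> Tuple[Optional[int], float]:
    """Sealed-bid second-price auction with an optional reserve price.

    Returns (winner index or None, payment). The winner pays the larger of
    the reserve and the highest competing bid.
    """
    if not bids:
        return None, 0.0
    # Highest bidder wins; ties go to the lowest index (an arbitrary choice).
    winner = max(range(len(bids)), key=lambda i: bids[i])
    if bids[winner] < reserve:
        return None, 0.0  # nobody clears the reserve, so the item stays unsold
    others = [b for i, b in enumerate(bids) if i != winner]
    payment = max([reserve] + others)
    return winner, payment


def virtual_value(
    v: float, cdf: Callable[[float], float], pdf: Callable[[float], float]
) -> float:
    """Myerson's virtual valuation: v - (1 - F(v)) / f(v)."""
    return v - (1.0 - cdf(v)) / pdf(v)
```

For example, `vickrey_auction([3.0, 7.0, 5.0])` awards the item to bidder 1 at a price of 5.0. With values uniform on [0, 1] (so `cdf = lambda v: v` and `pdf = lambda v: 1.0`), the virtual value is 2v − 1, which is zero at v = 0.5; that is why 0.5 is the revenue-optimal reserve in that special case.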

The core contribution is a systematic framework for “approximate” design. One begins by fixing a design space (often restricted by side constraints like bounded communication, limited computational resources, or low‑dimensional bid formats) and an objective function (e.g., expected revenue or ex‑post welfare). A benchmark is then defined—typically the performance of an unconstrained, possibly highly complex optimal mechanism. The analyst’s task is to construct a mechanism within the constrained space that achieves a provable fraction of the benchmark’s value. This approach serves two purposes: it identifies simple mechanisms that are near‑optimal, and it quantifies the “price of simplicity” by measuring how far any constrained mechanism must fall short of the unconstrained optimum.
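To illustrate the benchmark-and-ratio recipe, the sketch below uses a deliberately tiny instance chosen for illustration (it is not an example from the paper): two bidders with i.i.d. uniform-[0, 1] values. The constrained design space is "second-price auction with no reserve", and the benchmark is the second-price auction with reserve 1/2, which is Myerson's optimal auction for this distribution. A seeded Monte Carlo simulation estimates both revenues and their ratio; all function names are hypothetical.

```python
import random
from typing import Sequence, Tuple


def second_price_revenue(values: Sequence[float], reserve: float = 0.0) -> float:
    """Revenue of a second-price auction with a reserve, under truthful bids."""
    top, second = sorted(values, reverse=True)[:2]
    if top < reserve:
        return 0.0  # no bidder meets the reserve, so nothing is sold
    return max(second, reserve)


def estimate_ratio(num_trials: int = 200_000, seed: int = 0) -> Tuple[float, float, float]:
    """Estimate (simple revenue, benchmark revenue, simple / benchmark)."""
    rng = random.Random(seed)
    simple_total = 0.0
    benchmark_total = 0.0
    for _ in range(num_trials):
        values = [rng.random(), rng.random()]
        # Constrained mechanism: no distributional knowledge, no reserve.
        simple_total += second_price_revenue(values, reserve=0.0)
        # Benchmark: the optimal reserve for uniform-[0, 1] values.
        benchmark_total += second_price_revenue(values, reserve=0.5)
    return (
        simple_total / num_trials,
        benchmark_total / num_trials,
        simple_total / benchmark_total,
    )
```

Analytically, the two expected revenues in this instance are 1/3 and 5/12, so the simple auction is a 4/5-approximation of the benchmark; the simulation should land close to those values. That ratio is the kind of "price of simplicity" number the framework is designed to produce.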

Two case studies instantiate the framework.

- Revenue maximization in single‑item auctions with heterogeneous bidders.

When bidders’ value distributions differ, Myerson’s optimal auction requires precise knowledge of each distribution to compute virtual valuations. The paper studies a learning‑theoretic variant where the seller only observes a finite number of past valuation samples from each distribution. The benchmark is Myerson’s expected revenue; the goal is to achieve a (1 − ε) fraction of it using only s samples per bidder. For a single bidder, without regularity assumptions, no finite s guarantees any non‑trivial approximation for all distributions. Under standard regularity (tails no heavier than a power law), a polynomial‑in‑1/ε number of samples suffices. With multiple bidders, the required sample size scales with the number of bidders and the degree of heterogeneity, showing that detailed distributional knowledge is essential only when bidders are highly diverse. Thus, simple mechanisms such as posted‑price or reserve‑price auctions can be near‑optimal provided enough data is collected, but the amount of data needed is dictated by the underlying heterogeneity.

- Welfare maximization in multi‑item auctions.

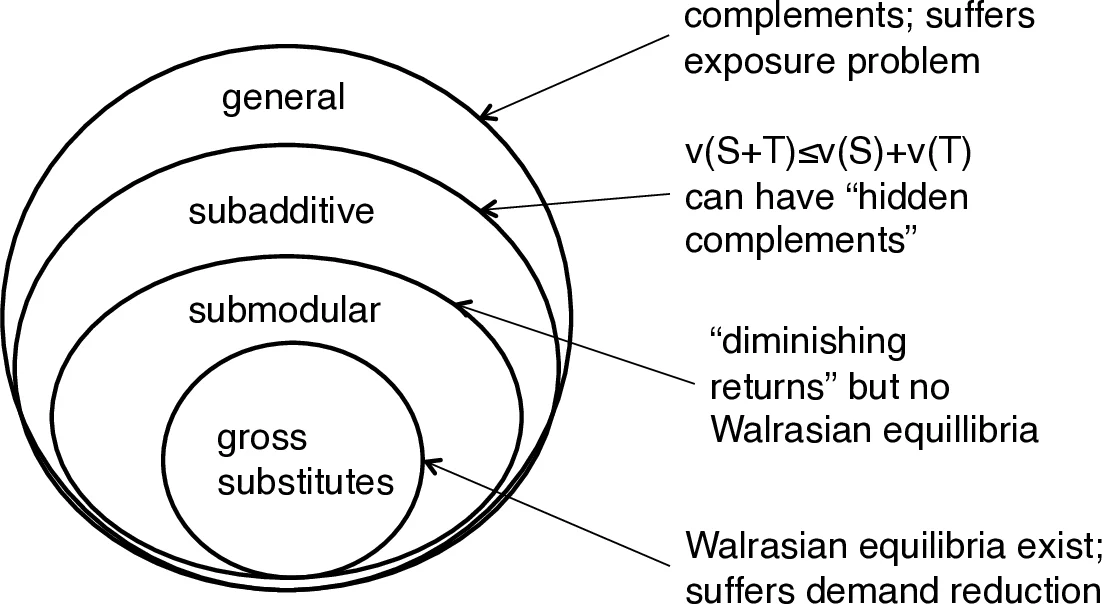

Here the complexity stems from the high‑dimensional bid space required when items are non‑identical and bidders have combinatorial preferences. The welfare‑optimal mechanism (e.g., the VCG mechanism) is computationally intractable in general and requires each bidder to report a value for every bundle of items. The study contrasts this with mechanisms that restrict bidders to low‑dimensional reports, such as separate sealed‑bid auctions for each item or simple bundle‑price menus. When items are largely independent or when valuations are subadditive (complement‑free), these low‑dimensional mechanisms guarantee a constant fraction of the optimal welfare. Conversely, when items exhibit strong complementarities, any mechanism limited to low‑dimensional bids incurs a substantial welfare loss, so high‑dimensional communication is fundamentally required.
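Returning to the first case study, the single‑bidder version of the sample‑based problem can be sketched as empirical revenue maximization: post the price that would have earned the most on the observed samples. This is an illustrative assumption about how samples might be used, not necessarily the estimator analyzed in the paper (sample‑based analyses may add safeguards, such as ignoring the few largest samples), and the function name is hypothetical.

```python
from typing import Sequence


def empirical_reserve(samples: Sequence[float]) -> float:
    """Return the sample value p that maximizes p * (fraction of samples >= p)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples, reverse=True)
    n = len(ordered)
    best_price, best_revenue = ordered[0], ordered[0] / n
    for rank, price in enumerate(ordered, start=1):
        # At least `rank` samples are >= price, so posting `price` would have
        # sold to (at least) a rank / n fraction of the sampled buyers.
        revenue = price * rank / n
        if revenue > best_revenue:
            best_price, best_revenue = price, revenue
    return best_price
```

On the samples `[1, 2, 3, 4]` this returns 3, which earns an empirical revenue of 3 × 2/4 = 1.5 per buyer. On `[10, 1, 1, 1]` it returns 10: a single large sample dictates the price, which gives some intuition for why guarantees need an assumption on the tail of the distribution.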

Across both studies, the paper demonstrates that approximate design can either validate the sufficiency of simple, implementable mechanisms or rigorously prove that complexity is unavoidable. The broader lesson is that the “price of simplicity” can be quantified: if a simple mechanism attains performance close to the unconstrained optimum, practitioners can safely adopt it; if not, the analysis supplies a formal justification for investing in richer communication or more detailed distributional learning.

Finally, the survey situates these results within a larger body of work spanning bounded communication, bounded computation, and limited distributional knowledge, highlighting how the approximation paradigm has become a unifying lens for recent advances in algorithmic mechanism design. The paper thus serves both as a conceptual roadmap and as a concrete demonstration of how approximation theory can bridge the gap between elegant optimal mechanisms and the messy constraints of real markets.
