Down-Sampling coupled to Elastic Kernel Machines for Efficient Recognition of Isolated Gestures

In the field of gestural action recognition, many studies have focused on dimensionality reduction along the spatial axis, to reduce both the variability of gestural sequences expressed in the reduced space, and the computational complexity of their processing. It is noticeable that very few of these methods have explicitly addressed the dimensionality reduction along the time axis. This is however a major issue with regard to the use of elastic distances characterized by a quadratic complexity. To partially fill this apparent gap, we present in this paper an approach based on temporal down-sampling associated to elastic kernel machine learning. We experimentally show, on two data sets that are widely referenced in the domain of human gesture recognition, and very different in terms of quality of motion capture, that it is possible to significantly reduce the number of skeleton frames while maintaining a good recognition rate. The method proves to give satisfactory results at a level currently reached by state-of-the-art methods on these data sets. The computational complexity reduction makes this approach eligible for real-time applications.

💡 Research Summary

The paper addresses a largely overlooked aspect of gesture recognition: dimensionality reduction along the temporal axis. While many prior works focus on spatial reduction (e.g., PCA, LPP, ISOMAP, joint selection), few have tackled the computational burden introduced by elastic distance measures such as Dynamic Time Warping (DTW), whose quadratic complexity in sequence length makes real‑time deployment difficult. The authors propose a two‑stage framework that first uniformly downsamples each motion sequence to a fixed number of frames L (e.g., 20–30) and then classifies the resulting fixed‑length series using a Support Vector Machine (SVM) equipped with a regularized DTW kernel (K_rdtw).

In the down‑sampling stage, long sequences are reduced by selecting frames at equal intervals; short sequences are up‑sampled by linear interpolation to meet the same length requirement. This operation transforms variable‑length time series into a constant‑size representation, reducing the DTW computation from O(T²)·O(k) to O(L²)·O(k), where T is the original length and k the spatial dimensionality (typically 57–90).

The second stage replaces the raw DTW distance, which is non‑metric and cannot serve directly as a positive‑definite kernel, with a regularized version introduced in earlier work. The regularized kernel replaces the min/max operators in DTW recursion with a summation, thereby considering not only the optimal alignment path but also near‑optimal paths. A stiffness parameter ν controls the contribution of sub‑optimal alignments, and a data‑dependent logarithmic scaling (α, β) normalizes kernel values across the training set. The final kernel is exponentiated (Gaussian‑type) and fed to LIBSVM for training.

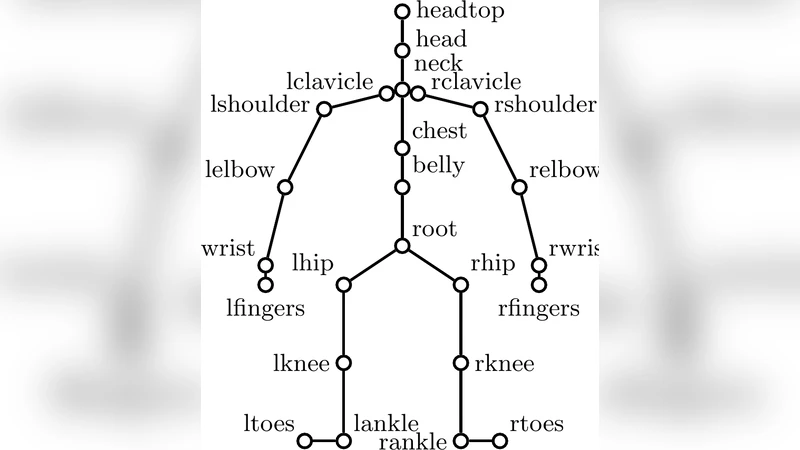

Experiments are conducted on two publicly available datasets that differ markedly in capture quality. The HDM05 dataset (120 Hz optical marker system) provides 31 joints (90‑dimensional vectors) and includes 11–16 gesture classes across 2–3 subjects for training and 2 subjects for testing. The MSR‑Action3D dataset (Kinect, 15 Hz) provides 20 joints (57‑dimensional vectors) and contains 20 gesture classes performed by 10 subjects. For each dataset, the authors evaluate several down‑sampling ratios (e.g., 1/2, 1/4, 1/8) and report classification accuracy as well as computational time.

Results show that with L set to roughly 20–30 frames, the method retains over 90 % of the accuracy achieved with the full‑length sequences while cutting the number of DTW operations by more than 70 %. The regularized DTW kernel (K_rdtw) performs on par with or slightly better than a standard RBF kernel built on Euclidean distances, especially when the temporal reduction is aggressive. Importantly, average classification time drops to the order of 5–10 ms per sequence, a dramatic improvement over conventional DTW‑based approaches that often require hundreds of milliseconds.

The contributions of the paper are threefold: (1) Demonstrating that simple uniform temporal down‑sampling effectively mitigates the quadratic cost of elastic distance measures without substantial loss of discriminative power. (2) Introducing a regularized DTW kernel that yields a positive‑definite similarity measure suitable for SVM optimization, with a tunable stiffness parameter to balance alignment flexibility. (3) Validating the approach on both high‑quality motion‑capture data and low‑cost Kinect data, thereby establishing its robustness across sensor modalities.

The authors suggest future work on adaptive sampling (varying L according to motion complexity), multi‑scale elastic kernels for continuous‑action recognition, and hybrid architectures that combine deep feature extraction with the proposed elastic kernel framework. Such extensions could further enhance accuracy while preserving the computational efficiency required for real‑time interactive applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment