Laboratory Test Bench for Research Network and Cloud Computing

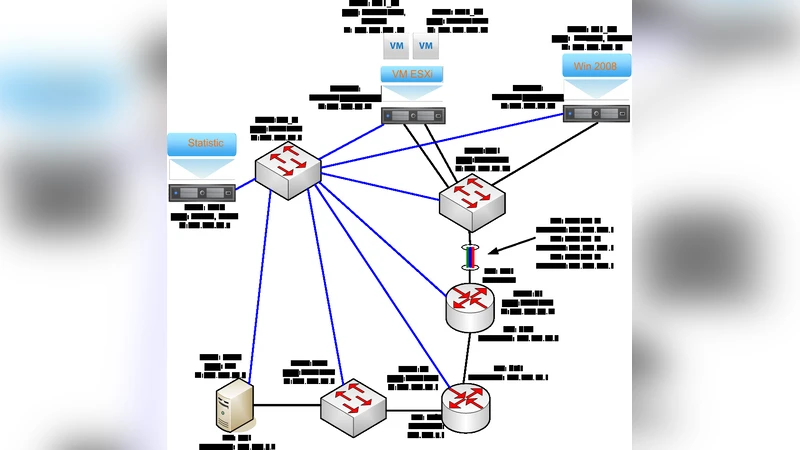

At present, there is great interest in developing information systems that operate in cloud infrastructures. Many problems remain unresolved, among them optimizing large databases in a hybrid cloud infrastructure, ensuring quality of service (QoS) at different levels of cloud services, and dynamically controlling the distribution of cloud resources in application systems. Research and development of new solutions can be limited when emulators or international commercial cloud services are used, because of their closed architecture and limited opportunities for experimentation. The article explains how to set up a pilot cloud practically “at home”, with the ability to adjust the bandwidth of the emulated channel and the delays in data transmission. It also describes the architecture and configuration of the experimental setup. The proposed modular structure can be expanded as additional computing power becomes available.

💡 Research Summary

The paper presents a practical methodology for building a laboratory‑scale cloud and network research platform that can be assembled in a typical home or small‑office environment. Recognizing that many current cloud‑computing research problems—such as hybrid‑cloud database optimization, multi‑level QoS assurance, and dynamic resource allocation—are difficult to explore on commercial public clouds or closed‑source emulators, the authors propose an open, modular test‑bench that gives researchers full control over both compute and network characteristics.

Hardware is deliberately kept modest: a standard x86 server (e.g., 4‑core CPU, 16 GB RAM, 2 TB HDD), a low‑cost Ethernet switch, and an optional small router or Raspberry Pi for traffic shaping. The software stack is built entirely from open‑source components. OpenStack provides the cloud management layer, with KVM/QEMU as the hypervisor for virtual machines (VMs). Neutron handles virtual networking, allowing the creation of isolated tenant networks, routers, and security groups. For distributed storage, a Ceph cluster is deployed, exposing block, file, and object interfaces so that relational databases (MySQL, PostgreSQL) and big‑data frameworks (Hadoop, Spark) can share the same storage pool.
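To give a sense of what the 2 TB disk in the example configuration yields once Ceph is layered on top, here is a small capacity estimate. It assumes 3‑way replication (Ceph's default pool size) and budgets against the default 0.85 `nearfull` warning ratio; neither value is stated in the paper, and `ceph_usable_tb` is a hypothetical helper, not part of any Ceph tool.

```python
# Sketch: usable capacity of a replicated Ceph pool.
# Assumed (not from the paper): 3-way replication and a 0.85 nearfull ratio.


def ceph_usable_tb(raw_tb: float, replicas: int = 3,
                   nearfull_ratio: float = 0.85) -> float:
    """Data volume (TB) that fits before the cluster reaches 'nearfull'.

    Each object is stored `replicas` times, so raw capacity is divided by the
    replication factor; the nearfull ratio keeps headroom for recovery.
    """
    if raw_tb <= 0 or replicas < 1 or not 0 < nearfull_ratio <= 1:
        raise ValueError("invalid capacity parameters")
    return raw_tb / replicas * nearfull_ratio


if __name__ == "__main__":
    print(f"{ceph_usable_tb(2.0):.3f} TB")              # 0.567 TB with 3 copies
    print(f"{ceph_usable_tb(2.0, replicas=2):.3f} TB")  # 0.850 TB with 2 copies
```

On a single server, Ceph's default CRUSH rule, which places replicas on different hosts, cannot satisfy three copies, so a one‑node lab typically needs several OSDs (disks) and a rule whose failure domain is the OSD rather than the host.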

Network emulation is achieved using Linux traffic‑control (TC) together with the NetEm module. By scripting TC commands, the authors can impose precise bandwidth caps (e.g., 5 Mbps, 10 Mbps, 100 Mbps), latency values (10 ms to 200 ms), jitter, and packet‑loss rates on any virtual interface. These parameters are dynamically adjustable during an experiment, and the resulting network state is visualized in real time with Grafana/Prometheus dashboards. This capability enables the systematic study of how varying network conditions affect cloud service performance, SLA compliance, and the behavior of adaptive algorithms.
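The authors' TC scripts are not reproduced in this summary. The following is a minimal Python sketch, assuming iproute2's `tc` with NetEm's `delay`, `loss` and `rate` options, of how such commands could be generated and retuned during a run. The interface name `eth0` and the helpers `netem_cmd` and `latency_sweep` are hypothetical; commands are only printed unless `apply=True`, which requires root privileges.

```python
# Sketch: generate Linux tc/NetEm commands for an emulated link.
# Hypothetical: the interface name "eth0" and the helper names below.
# Commands are only printed unless apply=True (requires root and iproute2).
import shlex
import subprocess
from typing import List, Optional


def netem_cmd(dev: str, delay_ms: float, jitter_ms: float = 0.0,
              loss_pct: float = 0.0, rate_mbit: Optional[float] = None,
              action: str = "add") -> List[str]:
    """Return argv for `tc qdisc <action> dev <dev> root netem ...`."""
    if action not in ("add", "change", "replace"):
        raise ValueError(f"unsupported action: {action}")
    argv = ["tc", "qdisc", action, "dev", dev, "root", "netem",
            "delay", f"{delay_ms:g}ms"]
    if jitter_ms:
        argv.append(f"{jitter_ms:g}ms")         # jitter is the value after delay
    if loss_pct:
        argv += ["loss", f"{loss_pct:g}%"]      # random packet loss
    if rate_mbit is not None:
        argv += ["rate", f"{rate_mbit:g}mbit"]  # NetEm's own bandwidth cap
    return argv


def latency_sweep(dev: str, start_ms: int, stop_ms: int, step_ms: int = 5,
                  **fixed) -> List[List[str]]:
    """One `change` command per delay step, from start_ms to stop_ms inclusive.

    The other parameters (jitter, loss, rate) are repeated in every command
    so each step states the complete intended link configuration.
    """
    return [netem_cmd(dev, d, action="change", **fixed)
            for d in range(start_ms, stop_ms + 1, step_ms)]


def run(argv: List[str], apply: bool = False) -> None:
    """Print the command; execute it only when apply=True."""
    print(shlex.join(argv))
    if apply:
        subprocess.run(argv, check=True)


if __name__ == "__main__":
    # Install the qdisc once: 50 ms +/- 5 ms, 0.5 % loss, 10 Mbit/s.
    run(netem_cmd("eth0", delay_ms=50, jitter_ms=5, loss_pct=0.5, rate_mbit=10))
    # Then retune the delay in place, 10 -> 20 ms in 5 ms steps.
    for cmd in latency_sweep("eth0", 10, 20, rate_mbit=10):
        run(cmd)
```

`tc qdisc add` must run once before any `change`; after that, the sweep retunes the delay without tearing the qdisc down, which is the kind of on‑the‑fly adjustment described in the experiments. Bandwidth could equally be capped with a separate TBF or HTB qdisc; the summary does not say which approach the authors used.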

The test‑bench’s modularity is a central design principle. Each functional block—compute, networking, storage—is packaged as a Docker container or a standalone VM image. Adding capacity simply involves plugging in another physical server, installing the same OpenStack services, and joining it to the existing cluster. Conversely, researchers can isolate a single component (for example, only the network emulator) to conduct focused “snapshot” experiments. This flexibility supports scaling the platform from a handful of VMs to dozens, depending on the research scope.
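How far the bench scales with each added server depends mainly on how many VMs the compute scheduler will place per host. The sketch below estimates this for the example 4‑core, 16 GB node. The overcommit ratios (4.0 for CPU, 1.0 for RAM), the 512 MB host reservation, the 1 vCPU / 2 GB flavor, and the names `Host`, `Flavor` and `cluster_capacity` are assumptions for illustration, not values from the paper; OpenStack Nova applies comparable CPU and RAM allocation ratios, but its defaults vary between releases.

```python
# Sketch: rough VM capacity as identical nodes are added to the bench.
# Assumed (not from the paper): overcommit ratios, host RAM reservation,
# and the flavor size used in the example.
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Host:
    cores: int
    ram_mb: int


@dataclass(frozen=True)
class Flavor:
    vcpus: int
    ram_mb: int


def instances_per_host(h: Host, f: Flavor, cpu_ratio: float = 4.0,
                       ram_ratio: float = 1.0, reserved_ram_mb: int = 512) -> int:
    """The tighter of the CPU and RAM limits decides how many VMs fit."""
    by_cpu = int(h.cores * cpu_ratio // f.vcpus)
    by_ram = int((h.ram_mb - reserved_ram_mb) * ram_ratio // f.ram_mb)
    return max(0, min(by_cpu, by_ram))


def cluster_capacity(hosts: Iterable[Host], f: Flavor, **ratios) -> int:
    # A VM cannot span hosts, so capacity is summed per host, not pooled.
    return sum(instances_per_host(h, f, **ratios) for h in hosts)


if __name__ == "__main__":
    node = Host(cores=4, ram_mb=16384)
    small = Flavor(vcpus=1, ram_mb=2048)
    for n in range(1, 5):
        # prints 7, 14, 21, 28 VMs for 1-4 nodes
        print(f"{n} node(s): {cluster_capacity([node] * n, small)} VMs")
```

With these inputs RAM, not CPU, is the binding limit, so each added node contributes a fixed block of capacity; raising `ram_ratio` admits more VMs at the cost of possible memory pressure on the host.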

Experimental validation demonstrates two major advantages over commercial clouds. First, cost: the same set of experiments run on AWS or Azure would incur hourly charges ranging from $0.10 to $0.30 per VM, whereas the home‑built bench requires only the initial hardware outlay. Second, reproducibility and real‑time control: the authors were able to change network latency in 5‑ms increments while a database workload was running, observing immediate effects on transaction throughput. In one scenario, increasing round‑trip latency beyond 100 ms caused a 30 % drop in MySQL throughput; by introducing a latency‑aware routing policy, the degradation was limited to 15 %. Such fine‑grained, on‑the‑fly adjustments are impossible on closed public clouds.
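The cost and degradation figures reduce to simple arithmetic, sketched below. The $0.10 to $0.30 per VM‑hour range and the 30 % and 15 % figures come from the text; the fleet of 10 VMs, the 100‑hour campaign, the $1,500 hardware price and the throughput values are hypothetical inputs, and the sketch ignores electricity and administration costs on the home side.

```python
# Sketch: arithmetic behind the cost and throughput comparison.
# Hypothetical inputs: VM count, duration, hardware price, throughput values.


def cloud_cost(vms: int, hours: float, usd_per_vm_hour: float) -> float:
    """Pay-as-you-go cost: every VM is billed for every hour it runs."""
    return vms * hours * usd_per_vm_hour


def break_even_hours(hardware_usd: float, vms: int, usd_per_vm_hour: float) -> float:
    """Hours of cloud usage after which the one-off hardware outlay is cheaper."""
    return hardware_usd / (vms * usd_per_vm_hour)


def throughput_drop_pct(baseline: float, degraded: float) -> float:
    """Relative throughput loss, in percent, versus the low-latency baseline."""
    return 100.0 * (baseline - degraded) / baseline


if __name__ == "__main__":
    for rate in (0.10, 0.30):
        print(f"10 VMs x 100 h at ${rate:.2f}/VM-h: ${cloud_cost(10, 100, rate):.2f}")
    print(f"break-even at $0.10/VM-h: {break_even_hours(1500, 10, 0.10):.0f} h")
    # e.g. 1000 tps falling to 700 tps is a 30 % drop; to 850 tps, 15 %
    print(throughput_drop_pct(1000, 700), throughput_drop_pct(1000, 850))
```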

In conclusion, the paper delivers a concrete, low‑cost, and highly configurable research platform that bridges the gap between abstract simulation and opaque commercial cloud services. It empowers cloud‑computing and networking researchers to conduct realistic, repeatable experiments on hybrid‑cloud architectures, QoS mechanisms, and dynamic resource management strategies. Future work outlined by the authors includes integrating GPU‑accelerated nodes, coupling the environment with a Software‑Defined Networking (SDN) controller for programmable data‑plane experiments, and extending the setup to multi‑cloud federation scenarios, thereby further expanding the test‑bench’s applicability to emerging cloud paradigms.
