Social determinants of content selection in the age of (mis)information

Despite the enthusiastic rhetoric about so-called *collective intelligence*, conspiracy theories (e.g., global warming induced by chemtrails, or the link between vaccines and autism) find on the Web a natural medium for their dissemination. Users preferentially consume information according to their system of beliefs, and the strife between users of opposite narratives may result in heated debates. In this work we provide a genuine example of information consumption from a sample of 1.2 million Italian Facebook users. We show by means of a thorough quantitative analysis that information supporting different worldviews (i.e., scientific and conspiracist news) is consumed in a comparable way by its respective users. Moreover, we measure the effect of exposure to 4709 pieces of evidently false information (satirical versions of conspiracy theses) and to 4502 debunking memes (information aiming at contrasting unsubstantiated rumors) on the most polarized users of conspiracy claims. We find that either contrasting or teasing consumers of conspiracy narratives increases their probability of interacting again with unsubstantiated rumors.

💡 Research Summary

The paper investigates how social factors shape content selection in the digital age by analyzing the consumption patterns of scientific versus conspiratorial news on Facebook among Italian users. Using the Facebook Graph API, the authors collected all posts, likes, shares, and comments from 73 public pages (36 scientific, 37 conspiratorial) over a four‑year period (2010‑2014), as well as from six “hoax‑buster” pages and two satirical “troll” pages that deliberately spread false information. The dataset comprises roughly 81,000 posts, over a million likes, and hundreds of thousands of comments, representing the activity of about 1.2 million unique users.

To identify strongly partisan users, the authors applied a thresholding rule: a user is labeled as “polarized” toward a category if at least 95 % of their likes are on posts from that category. This yielded 255,225 scientific‑polarized users and 790,899 conspiratorial‑polarized users. The authors then introduced a “persistence rate” (r), defined as the average time interval (in hours) between successive likes on posts within a user’s preferred narrative. The empirical complementary cumulative distribution functions (CCDFs) of r for the two groups were virtually indistinguishable: the mean r was 1,212 h (median 513 h) for scientific users and 1,155 h (median 665 h) for conspiratorial users, indicating comparable temporal engagement.
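The thresholding rule and the interval-based measure described above can be illustrated with a minimal Python sketch. It is not the authors' code: the input layout (a list of `(timestamp_hours, category)` pairs per user), the category labels, and the function names `polarized_label` and `mean_like_interval` are assumptions made for illustration only.

```python
from collections import Counter
from statistics import mean

# Threshold from the summary: at least 95% of a user's likes in one category.
POLARIZATION_THRESHOLD = 0.95


def polarized_label(likes, threshold=POLARIZATION_THRESHOLD):
    """Return the category a user is polarized toward, or None.

    `likes` is a hypothetical list of (timestamp_hours, category) pairs.
    """
    if not likes:
        return None
    counts = Counter(category for _, category in likes)
    total = len(likes)
    for category, n in counts.items():
        if n / total >= threshold:
            return category
    return None


def mean_like_interval(likes, category):
    """Average gap in hours between successive likes within `category`.

    Returns None when the user has fewer than two likes in that category,
    because no interval can be measured.
    """
    times = sorted(t for t, c in likes if c == category)
    if len(times) < 2:
        return None
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return mean(gaps)
```

A user with 20 likes on conspiratorial posts and one like on a scientific post would be labeled as polarized toward the conspiratorial category, while a user whose likes are split 3 to 1 would receive no label. Under the 95 % threshold, a category share of exactly 0.95 also counts as polarized.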

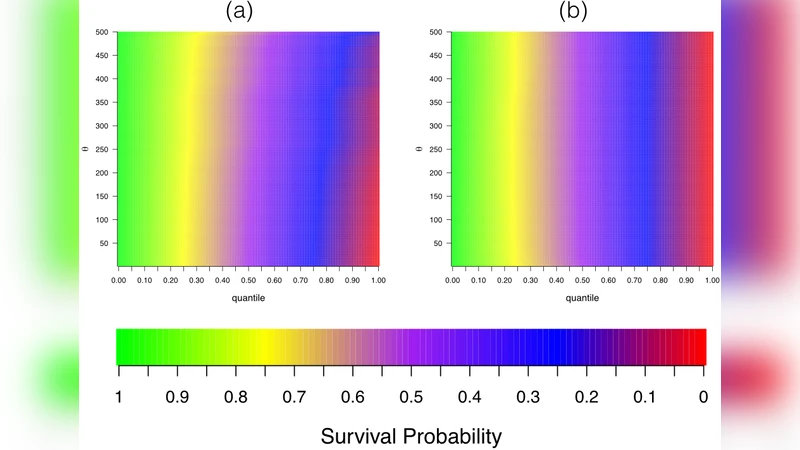

The core experimental component examined how exposure to two types of corrective or mocking content influences future interaction with conspiratorial posts. The first stimulus consisted of 4,709 satirical “troll” posts that were obviously false; the second comprised 4,502 “hoax‑buster” memes designed to debunk common conspiracy claims. For each polarized conspiratorial user, the authors tracked whether they continued to like or comment on conspiratorial posts after being exposed to either stimulus. Using survival analysis, they modeled the probability of continued engagement as a function of the user’s prior “commitment” level (i.e., the depth of previous interaction with conspiratorial content).
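The summary does not say which survival estimator the authors used. As a hedged illustration of the general idea, the sketch below implements a plain Kaplan-Meier estimator, which is an assumption here and not a claim about the paper's method. The function name `kaplan_meier` and the inputs are hypothetical: `durations` could be the hours between a user's exposure and their last observed interaction with conspiratorial posts, and `observed` could flag whether the user actually stopped interacting before data collection ended (`False` meaning the record is censored).

```python
def kaplan_meier(durations, observed):
    """Kaplan-Meier survival curve as a list of (time, probability) pairs.

    `durations` and `observed` are parallel sequences. An entry with
    observed=False is censored: it leaves the risk set at its duration
    without counting as an event.
    """
    records = sorted(zip(durations, observed))
    at_risk = len(records)
    survival = 1.0
    curve = []
    i = 0
    while i < len(records):
        t = records[i][0]
        events = 0
        leaving = 0
        # Group all records that share the same duration.
        while i < len(records) and records[i][0] == t:
            if records[i][1]:
                events += 1
            leaving += 1
            i += 1
        if events:
            # Multiply by the fraction of at-risk users who survive time t.
            survival *= 1 - events / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve
```

Computing one curve for exposed users and one for unexposed users, then comparing them, is one way such an analysis could be set up. A curve that stays higher for the exposed group would correspond to longer continued engagement.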

Contrary to the intuitive expectation that debunking would reduce belief in false narratives, the results showed that both types of exposure increased the likelihood of subsequent conspiratorial interaction, especially for users with higher prior commitment. In other words, the more entrenched a user was in conspiracy content, the more a satirical or corrective post reinforced rather than diminished their engagement. This “backfire effect” suggests that in highly polarized online environments, attempts at correction can inadvertently strengthen the very beliefs they aim to undermine.

The authors discuss these findings in the context of prior literature on misinformation, echo chambers, and confirmation bias. They argue that the collective reinforcement mechanisms inherent to social media—where users preferentially interact with like‑minded peers—amplify the resilience of conspiratorial narratives. The paper also acknowledges methodological limitations: reliance on Facebook data may introduce demographic and geographic biases; the operationalization of “likes” as uniformly positive feedback ignores nuanced emotional valence (e.g., sarcastic likes); and the analysis is confined to the Italian Facebook ecosystem, limiting generalizability.

Despite these constraints, the study makes a significant contribution by providing large‑scale, empirical evidence that the social determinants of content selection are robust across opposing worldviews, and that corrective interventions can have paradoxical effects on highly polarized audiences. The findings have implications for policymakers, platform designers, and fact‑checking organizations seeking to mitigate the spread of misinformation without unintentionally reinforcing it.
