Assessment of classification techniques on predicting success or failure of Software reusability

💡 Research Summary

The paper conducts a systematic comparative study of several machine‑learning classification techniques for predicting whether a software component will be reusable (success) or not (failure). The motivation stems from the industry’s need to identify reusable assets early in order to reduce development costs, improve quality, and streamline maintenance. To this end, the authors assembled a dataset of 1,200 open‑source modules drawn from GitHub and Apache repositories. For each module, fifteen quantitative metrics were automatically extracted, covering code size (lines of code, number of classes and methods), structural complexity (cyclomatic complexity, coupling, cohesion), test coverage, documentation length, version‑control activity, and other quality indicators.
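The paper does not name the tooling it used to extract these metrics. As an illustration only, a few of the size and complexity metrics can be approximated for Python sources with the standard-library `ast` module. The function name `module_metrics` is hypothetical. The cyclomatic figure is a simplified, module-wide count (1 plus the number of decision points); dedicated tools compute McCabe complexity per function and handle more constructs.

```python
import ast

# Node types that open an extra execution path (simplified McCabe count).
_BRANCH_NODES = (ast.If, ast.For, ast.While, ast.IfExp, ast.ExceptHandler)


def module_metrics(source: str) -> dict:
    """Approximate a few size and complexity metrics for Python source text."""
    tree = ast.parse(source)
    nodes = list(ast.walk(tree))
    branches = sum(isinstance(n, _BRANCH_NODES) for n in nodes)
    # `a and b and c` adds two extra paths: one per additional operand.
    branches += sum(len(n.values) - 1 for n in nodes if isinstance(n, ast.BoolOp))
    return {
        # Non-blank lines (comment lines are counted too).
        "loc": sum(1 for line in source.splitlines() if line.strip()),
        "classes": sum(isinstance(n, ast.ClassDef) for n in nodes),
        # Functions and methods alike.
        "functions": sum(
            isinstance(n, (ast.FunctionDef, ast.AsyncFunctionDef)) for n in nodes
        ),
        # Module-wide total: 1 + number of decision points.
        "cyclomatic": 1 + branches,
    }
```

Running this over every module in a corpus would yield one row of raw metrics per module, ready for the preprocessing step.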

Data preprocessing involved imputing missing values with feature means, applying Z‑score normalization to remove scale differences between features, and selecting features with a combination of Pearson correlation analysis and L1‑regularized logistic regression. This reduced the input space to the nine most informative features, notably coupling, test coverage, and documentation metrics.
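The following is a minimal, dependency-free sketch of such a pipeline, assuming each feature arrives as a list of numbers with `None` marking missing values. The summary does not say how the two selection stages were combined, so the greedy redundancy filter, the 0.9 correlation cut-off, and the L1 settings (`lam`, `lr`, `iters`) are illustrative assumptions, and all function names are hypothetical. The L1 model is fitted by proximal gradient descent, where soft-thresholding drives uninformative weights to exactly zero.

```python
import math
from statistics import mean, pstdev


def impute_mean(column):
    """Replace missing values (None) with the mean of the observed values."""
    observed = [v for v in column if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in column]


def zscore(column):
    """Rescale a column to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(column), pstdev(column)
    return [(v - mu) / sigma if sigma > 0 else 0.0 for v in column]


def pearson(a, b):
    """Pearson correlation of two equal-length, non-constant columns."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def drop_redundant(columns, threshold=0.9):
    """Greedily keep a column only if |r| < threshold against every kept column."""
    kept = []
    for j, col in enumerate(columns):
        if all(abs(pearson(col, columns[k])) < threshold for k in kept):
            kept.append(j)
    return kept


def l1_logistic(rows, labels, lam=0.05, lr=0.1, iters=500):
    """L1-regularized logistic regression via proximal gradient descent.

    Features whose weight ends at exactly 0 are treated as deselected.
    """
    n, d = len(rows), len(rows[0])
    w, b = [0.0] * d, 0.0
    for _ in range(iters):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(rows, labels):
            z = b + sum(wj * xj for wj, xj in zip(w, x))
            err = 1.0 / (1.0 + math.exp(-z)) - y  # sigmoid(z) - label
            grad_b += err / n
            for j in range(d):
                grad_w[j] += err * x[j] / n
        b -= lr * grad_b  # the intercept is not penalized
        for j in range(d):
            v = w[j] - lr * grad_w[j]
            # Soft-thresholding: the proximal step for the L1 penalty.
            w[j] = math.copysign(max(abs(v) - lr * lam, 0.0), v)
    return w, b
```

Typical use: impute and standardize each column, call `drop_redundant` to discard near-duplicate metrics, transpose the surviving columns into rows, fit `l1_logistic`, and keep the features whose weights are non-zero.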

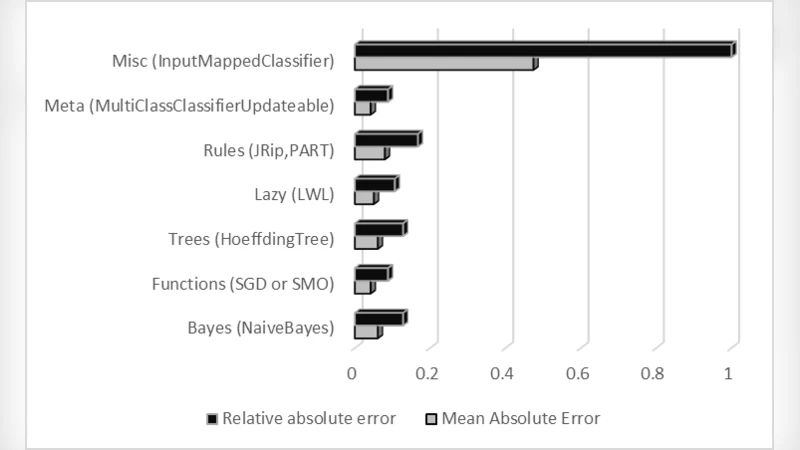

Five classification algorithms were evaluated under identical experimental conditions: (1) a C4.5 decision tree, (2) a Random Forest ensemble, (3) a Support Vector Machine with an RBF kernel, (4) a multilayer perceptron (two hidden layers, ReLU activation), and (5) a Naïve Bayes classifier. Because the dataset exhibited class imbalance (fewer “reusable” instances), the Synthetic Minority Over‑sampling Technique (SMOTE) was applied to the training folds. Model performance was measured by accuracy, precision, recall, F1‑score, and ROC‑AUC under 10‑fold cross‑validation, which averages each metric over ten train/test splits to give more stable estimates than a single split.
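The key ordering detail is that oversampling happens after each fold is split off, so no synthetic sample is derived from, or evaluated against, held-out data. The plain-Python sketch below shows that ordering for 10-fold cross-validation. It assumes label `1` marks the minority "reusable" class, uses `k=5` neighbours (the value used in the original SMOTE description), picks base samples at random, and balances each training fold to 1:1. The paper does not report its SMOTE settings, so these choices and the function names are hypothetical; the folds here are also not stratified.

```python
import math
import random


def smote(minority, n_new, k=5, rng=None):
    """Create n_new synthetic minority samples by interpolating between a
    randomly chosen minority sample and one of its k nearest minority neighbours.
    Requires at least two minority samples."""
    rng = rng or random.Random(0)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        neighbours = sorted(
            (p for j, p in enumerate(minority) if j != i),
            key=lambda p: math.dist(base, p),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # uniform position on the segment base -> other
        synthetic.append([b + gap * (o - b) for b, o in zip(base, other)])
    return synthetic


def kfold_indices(n, k=10, seed=0):
    """Shuffle 0..n-1 once and deal the indices into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def cv_splits_with_smote(X, y, k=10, seed=0):
    """Yield (train_X, train_y, test_X, test_y) for each fold.

    SMOTE runs only on the training part, after the split, so the test
    fold stays made of original, untouched samples.
    """
    for f, test_idx in enumerate(kfold_indices(len(X), k, seed)):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        train_X = [X[i] for i in train_idx]
        train_y = [y[i] for i in train_idx]
        minority = [x for x, label in zip(train_X, train_y) if label == 1]
        deficit = train_y.count(0) - len(minority)
        new = smote(minority, deficit, rng=random.Random(seed + f)) if deficit > 0 else []
        yield (train_X + new, train_y + [1] * len(new),
               [X[i] for i in test_idx], [y[i] for i in test_idx])
```

Each classifier would then be trained on the oversampled `train_X`/`train_y` of every fold and scored on the corresponding untouched test fold, with the ten per-fold scores averaged.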

Results show that the Random Forest consistently outperformed the other methods, achieving an overall accuracy of 87 %, an ROC‑AUC of 0.91, and an F1‑score of 0.84. Feature‑importance analysis revealed that coupling, test coverage, and documentation length contributed most strongly to the prediction. The decision tree yielded an accuracy of 81 % but suffered from over‑fitting on deeper branches. The SVM displayed high precision (0.81) but lower recall (0.68), indicating a bias toward the majority class. The neural network achieved 83 % accuracy but required careful regularization to avoid over‑fitting. Naïve Bayes performed the worst (71 % accuracy, F1‑score 0.69) due to its strong independence assumptions, which are violated by the inter‑correlated software metrics.
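To make these figures concrete, the plain-Python sketch below computes the reported metrics from binary labels, taking `1` to mean "reusable" (an assumption). ROC-AUC uses the rank-based (Mann-Whitney) formulation: the probability that a randomly chosen positive is scored above a randomly chosen negative, with ties counted as one half. The function names are hypothetical.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1(precision, recall),
    }


def roc_auc(y_true, scores):
    """Rank-based ROC-AUC: P(score of a random positive > score of a random
    negative), counting ties as one half."""
    pos = [s for s, t in zip(scores, y_true) if t == 1]
    neg = [s for s, t in zip(scores, y_true) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

Because F1 is a harmonic mean, a gap between precision and recall pulls it toward the smaller value: `f1(0.81, 0.68)`, using the SVM's reported precision and recall, comes to roughly 0.74.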

The study’s contributions are threefold: (1) it identifies a concise, empirically validated set of software metrics that are predictive of reusability; (2) it demonstrates that ensemble‑based Random Forests provide the most reliable classification under the tested conditions; and (3) it offers actionable guidance for practitioners: reducing coupling, increasing test coverage, and enhancing documentation can materially raise the likelihood of reuse.

Limitations include the exclusive reliance on open‑source projects, which may limit generalizability to proprietary codebases, and the focus on quantitative metrics at the expense of qualitative factors such as developer expertise or organizational culture.

The authors suggest future work in three directions: (a) collecting and evaluating industrial datasets to validate the models in commercial settings, (b) exploring advanced deep‑learning architectures such as Graph Neural Networks or Transformer‑based models to capture richer structural and semantic relationships within code, and (c) integrating qualitative assessments through multi‑modal learning to create a more holistic reusability prediction framework. Pursuing these directions could further improve the predictive accuracy and practical applicability of software reusability models.