Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation

In this paper, we propose a novel neural network model called RNN Encoder-Decoder that consists of two recurrent neural networks (RNN). One RNN encodes a sequence of symbols into a fixed-length vector representation, and the other decodes the representation into another sequence of symbols. The encoder and decoder of the proposed model are jointly trained to maximize the conditional probability of a target sequence given a source sequence. The performance of a statistical machine translation system is empirically found to improve by using the conditional probabilities of phrase pairs computed by the RNN Encoder-Decoder as an additional feature in the existing log-linear model. Qualitatively, we show that the proposed model learns a semantically and syntactically meaningful representation of linguistic phrases.

💡 Research Summary

The paper introduces a novel neural architecture called the RNN Encoder‑Decoder, designed to map a variable‑length source sequence into a fixed‑length vector and then decode that vector back into a variable‑length target sequence. The model consists of two recurrent neural networks: an encoder that reads the source tokens one by one and compresses the entire sentence into a single context vector c, and a decoder that, conditioned on c and the previously generated target token, predicts the next token using a soft‑max output layer. Both networks are trained jointly to maximize the conditional log‑likelihood of the target given the source, i.e., max θ ∑ log pθ(y|x).

A key technical contribution is a new gated hidden unit that is simpler than the traditional LSTM but retains its ability to control information flow. The unit employs a reset gate r and an update gate z. The reset gate determines how much of the previous hidden state should be ignored when computing the candidate activation \tilde{h}, while the update gate interpolates between the previous hidden state and the candidate to produce the new hidden state. This design enables the network to drop irrelevant information and retain long‑term dependencies without the computational overhead of four LSTM gates.

The authors apply the Encoder‑Decoder to statistical machine translation (SMT) in a pragmatic way. Instead of replacing the entire translation model, they compute the conditional probability pθ(y|x) for every phrase pair in the existing phrase table and add this score as an additional feature to the log‑linear model that combines all SMT features (translation probabilities, language model scores, etc.). This approach preserves the existing decoder infrastructure while enriching it with a neural‑network‑based assessment of phrase plausibility.

Experiments are conducted on the English‑French translation task of the WMT’14 benchmark. From a massive bilingual corpus exceeding two billion words, the authors select a subset of 348 million words for training the Encoder‑Decoder and limit the vocabulary to the 15 000 most frequent words in each language (covering about 93 % of the data). The baseline SMT system is a conventional phrase‑based model with a 5‑gram language model and standard log‑linear weighting. After training the Encoder‑Decoder, its phrase‑pair scores are incorporated as a new feature and the system is retuned using MERT.

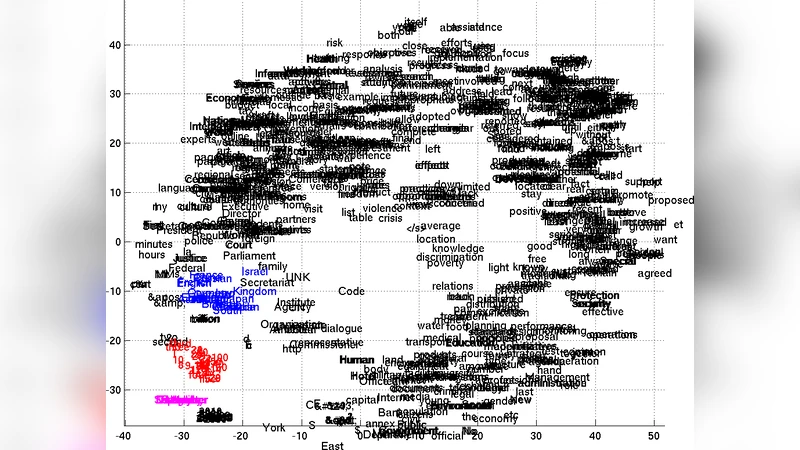

Results show consistent improvements: the BLEU score on the newstest2014 test set rises by approximately 0.5–0.7 points over the baseline. Gains are especially pronounced for longer phrases (5–7 tokens), indicating that the neural model captures longer‑range syntactic and semantic regularities that the traditional phrase‑based model overlooks. Qualitative analysis of the learned context vectors reveals that semantically similar phrases cluster together and that syntactic patterns (e.g., verb‑object order) are reflected in the geometry of the embedding space. This demonstrates that the Encoder‑Decoder learns a continuous representation that preserves both meaning and grammatical structure.

The paper also situates its contribution relative to prior work. Earlier approaches such as Schwenk (2012) used feed‑forward networks with fixed‑size windows, limiting them to short phrases and ignoring word order. Other methods employed bag‑of‑words representations or bilingual embeddings that also discard sequential information. The proposed RNN Encoder‑Decoder naturally respects token order and can handle arbitrarily long sequences, making it a more flexible and powerful component for SMT. The authors note a related model by Kalchbrenner and Blunsom (2013) that combines convolutional encoders with recurrent decoders; their work differs in architecture and evaluation methodology, focusing on perplexity rather than direct phrase‑scoring within a phrase‑based system.

In summary, the study demonstrates that a relatively simple yet effective gated RNN Encoder‑Decoder can be trained on large‑scale phrase pairs, produce meaningful probability scores for phrase translations, and be seamlessly integrated into a conventional SMT pipeline to achieve measurable translation quality gains. The work paves the way for further exploration of neural sequence‑to‑sequence models as complementary components in hybrid translation systems, and suggests future directions such as full‑sentence generation, sub‑word modeling, and multilingual extensions.

Comments & Academic Discussion

Loading comments...

Leave a Comment