Usability Engineering of Games: A Comparative Analysis of Measuring Excitement Using Sensors, Direct Observations and Self-Reported Data

Usability engineering and usability testing continue to evolve, and new research ideas appear regularly. This paper tests the hypothesis that EDA-based physiological measurement can serve as a usability testing tool by considering three measures: observers' opinions, self-reported data, and EDA-based physiological sensor data. These data were analyzed comparatively and statistically. The paper concludes by discussing the findings obtained from these subjective and objective measures, which partially support the hypothesis.

💡 Research Summary

The paper investigates whether electrodermal activity (EDA) – a physiological measure of skin conductance – can serve as a reliable usability testing tool for video games, and how its results compare with traditional subjective methods such as observer ratings and self‑report questionnaires. The authors formulate a hypothesis that EDA‑based excitement measurements will correlate with, and possibly augment, the insights obtained from observers and participants themselves.

Methodology

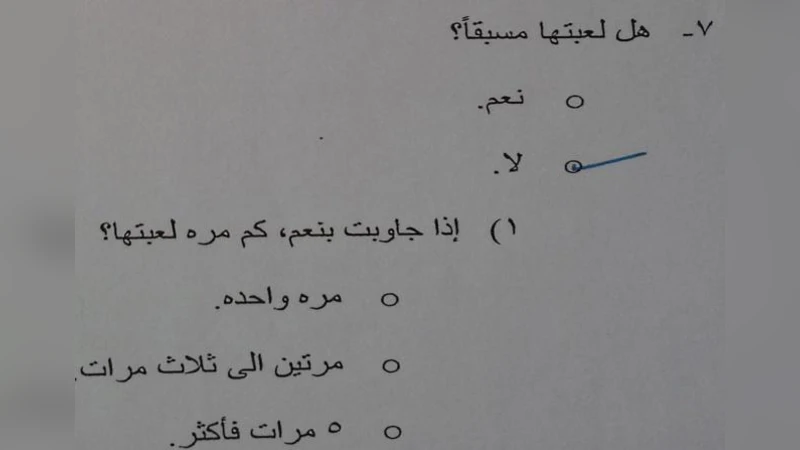

A within‑subjects experimental design was employed. Thirty participants played a single puzzle‑style video game for fifteen minutes while wearing a wrist‑mounted EDA sensor that recorded continuous skin conductance. Two trained observers simultaneously coded the gameplay video, assigning scores on a five‑point scale for five behavioral categories (excitement, concentration, anxiety, etc.). After gameplay, participants completed a Likert‑scale questionnaire assessing perceived excitement, immersion, and overall satisfaction.

The raw EDA signal was filtered to remove motion artifacts and low‑frequency drift. For each thirty‑second segment, three quantitative indices were extracted: (1) mean skin conductance level change (ΔSCL), (2) number of phasic peaks, and (3) recovery time (RT). Inter‑rater reliability for the observers was high (Cronbach’s α = 0.84), and the questionnaire demonstrated strong internal consistency (α = 0.91).
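The per-segment feature extraction described above can be sketched roughly as follows. This is a minimal illustration, not the authors' pipeline: the sampling rate `fs`, the resting `baseline`, the `peak_threshold`, and the definition of ΔSCL as segment mean minus baseline are all assumptions, and recovery time is omitted because the summary does not define how it was measured.

```python
from statistics import mean


def count_peaks(seg, threshold):
    """Count local maxima that rise at least `threshold` above the lowest
    value seen since the previously counted peak (assumed peak criterion)."""
    count = 0
    trough = seg[0]
    for i in range(1, len(seg) - 1):
        trough = min(trough, seg[i])
        is_local_max = seg[i - 1] < seg[i] >= seg[i + 1]
        if is_local_max and seg[i] - trough >= threshold:
            count += 1
            trough = seg[i]  # restart trough tracking after this peak
    return count


def segment_features(signal, fs, baseline, seg_seconds=30, peak_threshold=0.05):
    """Split an already-filtered EDA trace into fixed-length segments.

    signal         -- skin conductance samples in microsiemens
    fs             -- sampling rate in Hz (hypothetical parameter)
    baseline       -- resting conductance level (hypothetical parameter)
    peak_threshold -- minimum rise that counts as a phasic peak (assumed)
    """
    seg_len = int(seg_seconds * fs)
    features = []
    # Trailing samples that do not fill a whole segment are dropped.
    for start in range(0, len(signal) - seg_len + 1, seg_len):
        seg = signal[start:start + seg_len]
        features.append({
            "delta_scl": mean(seg) - baseline,
            "peaks": count_peaks(seg, peak_threshold),
        })
    return features
```

With a 4 Hz sensor, for example, `segment_features(trace, fs=4, baseline=resting_level)` would return one dictionary per 120-sample segment.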

Statistical Analysis

One‑way ANOVA compared the mean values across the three measurement modalities, followed by Tukey HSD post‑hoc tests to locate specific differences. Pearson correlation coefficients quantified the relationships among observer scores, self‑report scores, and each EDA index.
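For readers unfamiliar with the two statistics named here, the sketch below shows how a one-way ANOVA F ratio and a Pearson coefficient are computed from raw scores. It is illustrative only: it treats the groups as independent and assumes the three modalities were brought onto a common scale before comparison, neither of which the summary states. Tukey HSD is not shown.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length score lists."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    k = len(groups)
    all_values = [v for g in groups for v in g]
    grand_mean = mean(all_values)
    group_means = [mean(g) for g in groups]
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Variation of individual scores around their own group mean.
    ss_within = sum((v - m) ** 2
                    for g, m in zip(groups, group_means) for v in g)
    df_between = k - 1
    df_within = len(all_values) - k
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return f_ratio, df_between, df_within
```

In practice a statistics package would also report the p-value and post-hoc comparisons. The functions above only expose the arithmetic behind the reported figures.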

Results

Observer ratings and self‑report scores showed a strong positive correlation (r ≈ 0.68, p < 0.001), confirming that participants’ subjective impressions aligned well with external behavioral assessments. Both subjective measures indicated a decline in reported excitement when game difficulty increased. In contrast, EDA indices displayed a different pattern: skin conductance peaks and ΔSCL rose significantly during high‑difficulty segments, suggesting heightened arousal. However, the correlation between EDA and the two subjective measures was modest (r ≈ 0.30, not statistically significant). Notably, some participants exhibited high EDA responses while rating their excitement as low, implying that EDA captures a blend of arousal, stress, and possibly anxiety, not excitement alone.
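A quick consistency check shows why r ≈ 0.68 is significant while r ≈ 0.30 is not. The sketch assumes each correlation was computed over one aggregate score per participant (n = 30, so 28 degrees of freedom), which the summary does not confirm. The critical values are the standard two-tailed Student's t values for df = 28.

```python
from math import sqrt


def t_from_r(r, n):
    """t statistic for testing a Pearson r against zero, with n - 2 df."""
    return r * sqrt((n - 2) / (1 - r * r))


N = 30             # assumed: one pair of scores per participant
T_CRIT_05 = 2.048  # two-tailed critical t, alpha = 0.05, df = 28
T_CRIT_001 = 3.674 # two-tailed critical t, alpha = 0.001, df = 28

for label, r in [("observer vs self-report", 0.68),
                 ("EDA vs subjective", 0.30)]:
    t = t_from_r(r, N)
    print(f"{label}: r = {r:.2f}, t(28) = {t:.2f}")
# prints:
# observer vs self-report: r = 0.68, t(28) = 4.91
# EDA vs subjective: r = 0.30, t(28) = 1.66
```

Under that assumption, 4.91 exceeds the 0.001 threshold and 1.66 falls short of the 0.05 threshold, which matches the significance levels reported above.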

Discussion

The authors interpret the findings as evidence that EDA provides an objective, high‑temporal‑resolution indicator of physiological arousal, which can complement but not replace traditional usability metrics. The low correlation with subjective data underscores the multidimensional nature of arousal: EDA reacts to both positive excitement and negative stress. Practical challenges such as sensor discomfort, environmental temperature fluctuations, and individual differences in baseline skin conductance are acknowledged as sources of noise that must be controlled in future work. The limited sample size and the use of a single game genre constrain the external validity of the conclusions.

Conclusion and Future Work

The study partially supports the hypothesis: EDA can serve as a supplementary tool for detecting moments of heightened arousal during gameplay, yet it does not directly map onto participants’ reported excitement. The authors recommend integrating EDA with additional physiological signals (heart‑rate variability, eye‑tracking) and applying machine‑learning techniques to fuse multimodal data, thereby achieving a more nuanced classification of emotional states. Larger, more diverse participant pools and a broader range of game types are suggested to validate the generalizability of the approach. Ultimately, a multimodal, data‑driven framework could enable real‑time, adaptive usability testing that informs game design decisions more effectively than any single measurement method alone.
