Coloring Large Complex Networks

Given a large social or information network, how can we partition the vertices into sets (i.e., colors) such that no two vertices linked by an edge are in the same set while minimizing the number of sets used? Despite the obvious practical importance of graph coloring, existing works have not systematically investigated or designed methods for large complex networks. In this work, we develop a unified framework for coloring large complex networks that consists of two main coloring variants that effectively balance the tradeoff between accuracy and efficiency. Using this framework as a fundamental basis, we propose coloring methods designed for the scale and structure of complex networks. In particular, the methods leverage triangles, triangle-cores, and other egonet properties and their combinations. We systematically compare the proposed methods across a wide range of networks (e.g., social, web, biological networks) and find a significant improvement over previous approaches in nearly all cases. Additionally, the solutions obtained are nearly optimal and sometimes provably optimal for certain classes of graphs (e.g., collaboration networks). We also propose a parallel algorithm for the problem of coloring neighborhood subgraphs and make several key observations. Overall, the coloring methods are shown to be (i) accurate with solutions close to optimal, (ii) fast and scalable for large networks, and (iii) flexible for use in a variety of applications.

💡 Research Summary

The paper addresses the graph coloring problem on massive, sparse, real‑world networks such as social, web, biological, and technological graphs. Exact coloring is NP‑hard, and it is NP‑hard even to approximate the chromatic number within a factor of n^{1‑ε}. The authors nevertheless argue that the structural properties of real networks (high clustering, pronounced core‑periphery organization, and abundant triangles) can be exploited to obtain high‑quality colorings in linear time.

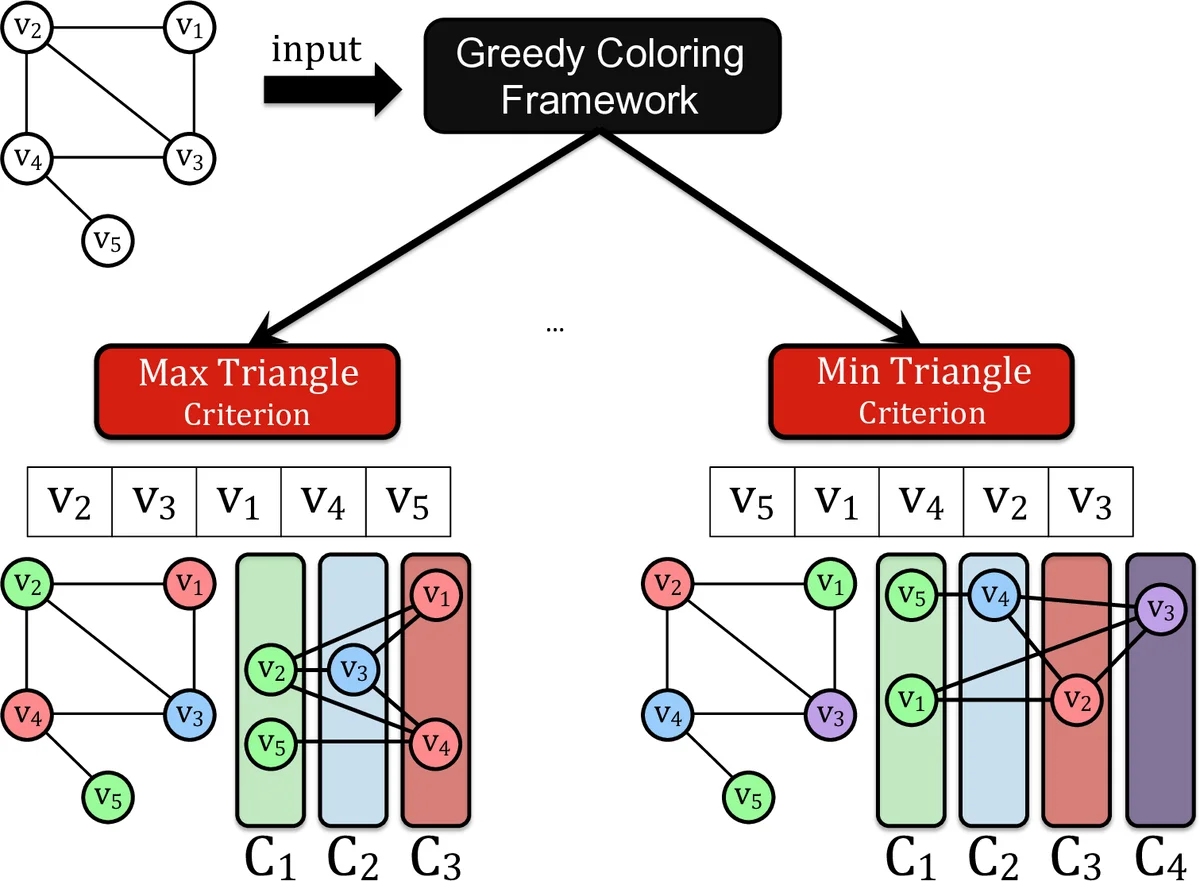

They introduce a unified greedy coloring framework that consists of two main variants. The first variant follows a simple greedy scheme: vertices (or edges) are ordered according to a pre‑computed ranking and then each vertex is assigned the smallest available color not used by its already‑colored neighbors. The second variant adds a “recolor” phase: after the initial greedy pass, a limited local search is performed on the set of vertices that caused conflicts, using the same structural cues to try to reduce the total number of colors without sacrificing scalability.
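
The first variant can be illustrated with a minimal sketch. It assumes an undirected graph stored as a dictionary of neighbor sets and uses a degree-based ordering as one possible ranking; the names `greedy_coloring` and `is_proper` are illustrative and not taken from the paper.

```python
from typing import Dict, Hashable, Iterable, Set

Graph = Dict[Hashable, Set[Hashable]]


def greedy_coloring(adj: Graph, order: Iterable[Hashable]) -> Dict[Hashable, int]:
    """Assign each vertex, in the given order, the smallest color
    not used by its already-colored neighbors."""
    color: Dict[Hashable, int] = {}
    for v in order:
        used = {color[u] for u in adj[v] if u in color}
        c = 0
        while c in used:
            c += 1
        color[v] = c
    return color


def is_proper(adj: Graph, color: Dict[Hashable, int]) -> bool:
    """Check that no edge joins two vertices of the same color."""
    return all(color[u] != color[v] for u in adj for v in adj[u])


# Example graph (an assumption for illustration): a 5-cycle.
adj = {0: {1, 4}, 1: {0, 2}, 2: {1, 3}, 3: {2, 4}, 4: {3, 0}}

# One possible ranking: largest degree first.
order = sorted(adj, key=lambda v: len(adj[v]), reverse=True)
coloring = greedy_coloring(adj, order)
num_colors = max(coloring.values()) + 1
```

Any of the ordering criteria described in this summary could be substituted for the degree ranking by changing the `key` function; the coloring loop itself stays the same.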

The core of the framework is the definition of several ordering criteria that reflect the network’s local density:

- Vertex degree d(v).

- Core number K(v) obtained from the k‑core decomposition.

- Triangle count tr(v) – the number of 3‑cliques incident to v.

- Edge triangle‑core number T(u,v), i.e., the highest order k such that the edge (u,v) belongs to a maximal subgraph where every edge participates in at least k‑2 triangles.

The authors provide efficient algorithms for computing these quantities. Core decomposition runs in O(|V|+|E|) time using the Batagelj‑Zaversnik method, while triangle‑core decomposition is achieved in O(|E|^{3/2}) time, which is practical even for graphs with tens of millions of edges.
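
As a rough illustration of two of these quantities, the sketch below computes core numbers by repeatedly peeling a minimum-degree vertex, and per-vertex triangle counts by intersecting neighbor sets. It is not the linear-time bucket implementation mentioned above: it uses a lazy binary heap for brevity, which costs O(|E| log |V|), and it assumes vertex labels are mutually comparable (for example integers). The function names are hypothetical.

```python
import heapq
from typing import Dict, Set

Graph = Dict[int, Set[int]]


def core_numbers(adj: Graph) -> Dict[int, int]:
    """Core number K(v) via min-degree peeling with a lazy heap."""
    deg = {v: len(adj[v]) for v in adj}
    heap = [(d, v) for v, d in deg.items()]
    heapq.heapify(heap)
    core: Dict[int, int] = {}
    k = 0
    while heap:
        d, v = heapq.heappop(heap)
        if v in core or d != deg[v]:
            continue  # stale heap entry, skip it
        k = max(k, d)  # core numbers never decrease during peeling
        core[v] = k
        for u in adj[v]:
            if u not in core:
                deg[u] -= 1
                heapq.heappush(heap, (deg[u], u))
    return core


def triangle_counts(adj: Graph) -> Dict[int, int]:
    """tr(v): number of triangles incident to v.
    Each triangle at v is seen once per neighbor in it, hence // 2."""
    return {v: sum(len(adj[v] & adj[u]) for u in adj[v]) // 2 for v in adj}


# Example graph (an assumption): a triangle 0-1-2 with a pendant vertex 3 on 2.
adj = {0: {1, 2}, 1: {0, 2}, 2: {0, 1, 3}, 3: {2}}
cores = core_numbers(adj)
triangles = triangle_counts(adj)
```

On this small example the triangle vertices land in the 2-core and the pendant vertex in the 1-core, and each triangle vertex has exactly one incident triangle.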

Using these rankings, the framework can generate three families of coloring methods: social‑based (leveraging degree and core), multi‑property (combining degree, core, and triangle counts), and egonet‑based (exploiting the structure of each vertex’s ego‑network). They also adapt classic heuristics such as DSATUR, Largest‑First, and Random‑Sequential into the same framework for a fair comparison.
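
For reference, DSATUR differs from the static orderings in that it picks the next vertex dynamically. A minimal sketch of the classic heuristic follows, assuming the same dictionary-of-sets graph representation; it uses a simple O(|V|^2) selection loop rather than a priority queue, and it is not the paper's adaptation.

```python
from typing import Dict, Hashable, Set

Graph = Dict[Hashable, Set[Hashable]]


def dsatur(adj: Graph) -> Dict[Hashable, int]:
    """Classic DSATUR: repeatedly color the uncolored vertex with the most
    distinct neighbor colors (saturation), breaking ties by degree."""
    color: Dict[Hashable, int] = {}
    neighbor_colors: Dict[Hashable, Set[int]] = {v: set() for v in adj}
    uncolored = set(adj)
    while uncolored:
        v = max(uncolored, key=lambda x: (len(neighbor_colors[x]), len(adj[x])))
        c = 0
        while c in neighbor_colors[v]:
            c += 1
        color[v] = c
        uncolored.remove(v)
        for u in adj[v]:
            neighbor_colors[u].add(c)
    return color


# Example graph (an assumption): a 6-cycle, which is bipartite.
cycle = {i: {(i - 1) % 6, (i + 1) % 6} for i in range(6)}
dsatur_coloring = dsatur(cycle)
dsatur_colors = max(dsatur_coloring.values()) + 1
```

DSATUR is known to color connected bipartite graphs optimally, so the even cycle here needs only two colors.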

A notable contribution is the formulation of the “neighborhood coloring” problem: each ego‑network (the induced subgraph of a vertex and its immediate neighbors) is colored independently in parallel. The authors design a parallel algorithm that distributes ego‑networks across processing units, uses a shared pool of color identifiers, and resolves conflicts only at the boundaries. Experiments on a 16‑core machine show speedups of roughly 8× on average while preserving the quality of the coloring.
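
The independence of ego‑networks is what makes this problem easy to parallelize. The sketch below shows only that structure, under several assumptions: it colors each ego‑network with a plain degree-ordered greedy pass, reports the number of colors per ego‑network, and omits the shared color pool and boundary conflict handling described above. It uses threads from Python's standard library, which illustrate the task decomposition but will not give real CPU speedups in CPython; all function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Hashable, Set

Graph = Dict[Hashable, Set[Hashable]]


def egonet(adj: Graph, v: Hashable) -> Graph:
    """Induced subgraph on v and its immediate neighbors."""
    nodes = adj[v] | {v}
    return {u: adj[u] & nodes for u in nodes}


def color_egonet(adj: Graph, v: Hashable) -> int:
    """Greedy-color the ego-network of v; return the number of colors used."""
    ego = egonet(adj, v)
    order = sorted(ego, key=lambda u: len(ego[u]), reverse=True)
    color: Dict[Hashable, int] = {}
    for u in order:
        used = {color[w] for w in ego[u] if w in color}
        c = 0
        while c in used:
            c += 1
        color[u] = c
    return max(color.values()) + 1


def neighborhood_color_counts(adj: Graph, workers: int = 4) -> Dict[Hashable, int]:
    """Color every ego-network as an independent task."""
    vertices = list(adj)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        counts = pool.map(lambda v: color_egonet(adj, v), vertices)
        return dict(zip(vertices, counts))


# Example graph (an assumption): a triangle 0-1-2 with a pendant vertex 3 on 2.
adj = {0: {1, 2}, 1: {0, 2}, 2: {0, 1, 3}, 3: {2}}
ego_counts = neighborhood_color_counts(adj)
```

Because the tasks only read the shared adjacency structure and write nothing to it, no locking is needed in this simplified form.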

The empirical evaluation spans over 100 real networks from diverse domains, ranging from a few thousand to several million vertices. Compared against state‑of‑the‑art methods, the proposed approaches consistently achieve:

- A reduction of the number of colors by 10 %–30 % on most datasets.

- Execution times that are 2×–5× faster than the best competing heuristics.

- Near‑optimal color counts on highly clustered graphs (e.g., collaboration networks), sometimes matching the theoretical lower bound given by the size of the maximum clique ω(G).
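
The clique lower bound in the last item can be paired with a standard upper bound from the core decomposition. Writing χ(G) for the chromatic number and K(G) = max_v K(v) for the largest core number (notation assumed here, following the ordering criteria above), the following well-known inequalities hold:

$$
\omega(G) \;\le\; \chi(G) \;\le\; K(G) + 1
$$

The left inequality holds because the vertices of a clique need pairwise distinct colors. The right one holds because a greedy pass over the reverse of the core-peeling order meets at most K(G) already-colored neighbors at each vertex. A coloring that uses exactly ω(G) colors is therefore provably optimal, which is the sense in which a heuristic can certify its own result on such graphs.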

Beyond the raw coloring performance, the authors discuss how the resulting color assignments can serve as informative features for downstream tasks: the distribution of color class sizes reflects independent‑set structure, the number of colors used correlates with clustering coefficients, and the presence of large color classes often indicates low‑density regions suitable for network sampling or active learning.

In conclusion, the paper demonstrates that by carefully exploiting triangle‑core and egonet properties, one can design greedy coloring algorithms that are both fast (linear‑time) and accurate (near‑optimal) for the massive, heterogeneous graphs encountered in modern data science. The framework is flexible, allowing practitioners to trade off between speed and color quality, and it opens several avenues for future work, including dynamic updates for evolving graphs, cost‑aware multi‑color extensions, and learning‑based ordering strategies.
