A Software Parallel Programming Approach to FPGA-Accelerated Computing

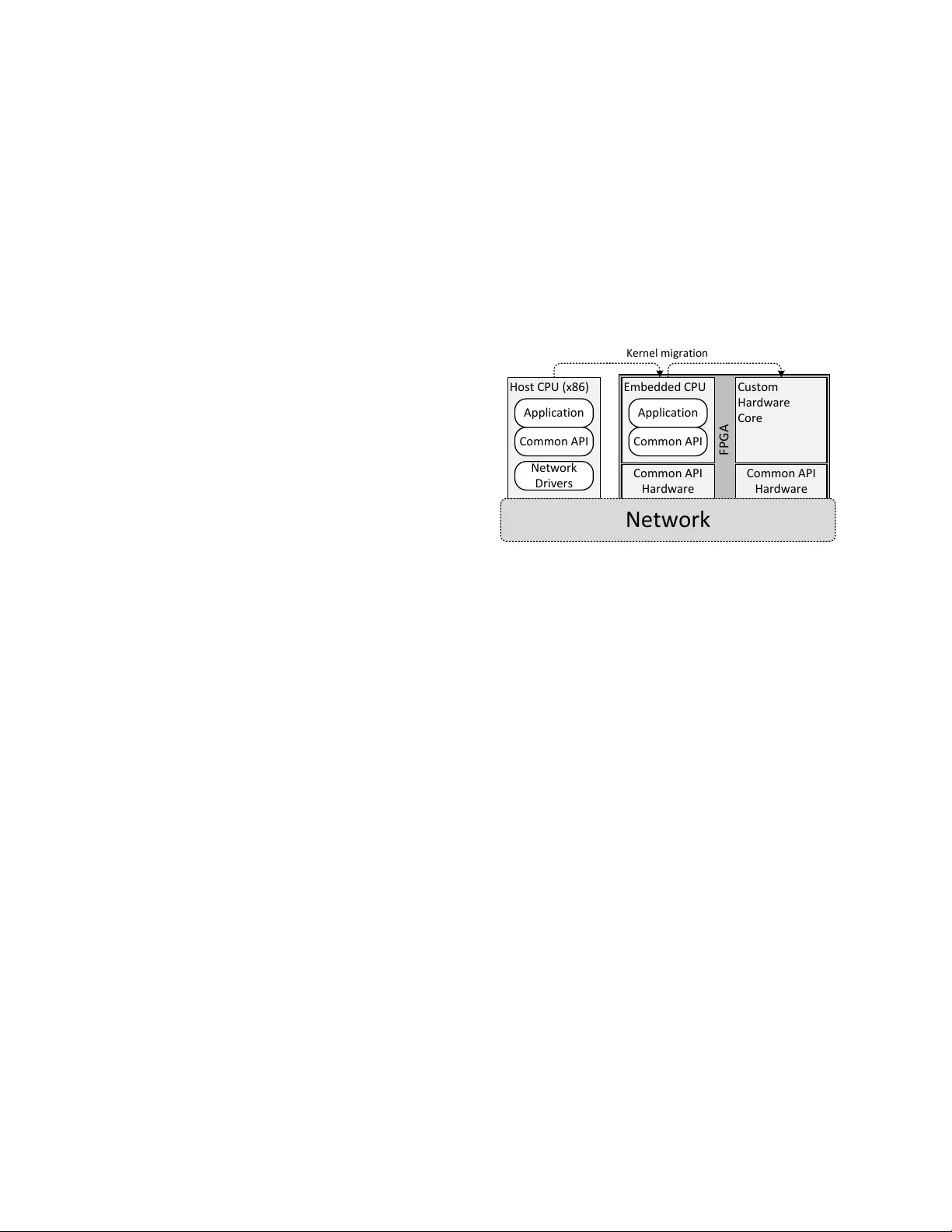

This paper introduces an effort to incorporate reconfigurable logic (FPGA) components into a software programming model. For this purpose, we have implemented a hardware engine for remote memory communication between hardware computation nodes and CPUs. The hardware engine is compatible with the API of GASNet, a popular communication library used for parallel computing applications. We have further implemented our own x86 and ARMv7 software versions of the GASNet Core API, enabling us to write distributed applications with software and hardware GASNet components transparently communicating with each other.

💡 Research Summary

The paper presents a novel methodology for integrating reconfigurable FPGA logic into a conventional software‑centric parallel programming model. The authors focus on the widely adopted communication library GASNet, which provides remote memory access (RMA) and active‑message primitives for high‑performance distributed applications. By designing a dedicated hardware engine that implements the GASNet Core API directly in FPGA fabric, they enable hardware computation nodes to communicate with CPUs using exactly the same API calls that software components use.
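The active-message idea mentioned above can be illustrated with a small software-only model. This is a minimal sketch, not the GASNet API or the authors' engine: the `Node` class, `am_request`, `poll`, and the handler indices `PING` and `PONG` are hypothetical names chosen for illustration. It shows the core pattern, in which a message carries a handler index plus arguments and the receiver runs the matching handler when it polls.

```python
from collections import deque

PING, PONG = 1, 2  # hypothetical handler indices, not GASNet constants


class Node:
    """Toy endpoint: a handler table plus an inbox of pending active messages."""

    def __init__(self, node_id, network):
        self.node_id = node_id
        self.network = network      # shared dict: node id -> Node
        self.handlers = {}          # handler index -> callable
        self.inbox = deque()
        self.log = []
        network[node_id] = self

    def register(self, index, fn):
        self.handlers[index] = fn

    def am_request(self, dest, handler_index, *args):
        # "Send" by enqueueing (source, handler index, args) at the destination.
        self.network[dest].inbox.append((self.node_id, handler_index, args))

    def poll(self):
        # Run the handler for every message that has arrived so far.
        handled = 0
        while self.inbox:
            src, index, args = self.inbox.popleft()
            self.handlers[index](self, src, *args)
            handled += 1
        return handled


def on_ping(node, src, value):
    node.log.append(("ping", src, value))
    node.am_request(src, PONG, value + 1)   # reply to the requester


def on_pong(node, src, value):
    node.log.append(("pong", src, value))


def demo():
    network = {}
    cpu, fpga = Node(0, network), Node(1, network)
    for node in (cpu, fpga):
        node.register(PING, on_ping)
        node.register(PONG, on_pong)
    cpu.am_request(1, PING, 41)
    fpga.poll()   # the "hardware" node runs on_ping and replies
    cpu.poll()    # the "software" node runs on_pong
    return cpu.log, fpga.log
```

In this model neither side needs to know whether its peer is software or hardware, only which handler index to invoke, which is the property the summary attributes to sharing one API across both.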

The hardware engine handles all aspects of remote memory communication: address translation, memory mapping, flow control, cache flushes, memory barriers, and event‑queue management. Because these functions run in hardware rather than in a software protocol stack, latency is lower and bandwidth utilization is higher. The engine supports multiple concurrent channels, so several GASNet endpoints can operate simultaneously without contention, which preserves scalability.
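As a rough illustration of the address-translation step listed above, the sketch below models a remotely accessible memory segment in software. The `Segment` class, its `base` address, and the bounds-check policy are assumptions made for this example; the summary does not describe how the engine actually performs translation.

```python
class Segment:
    """Toy model of a remotely accessible memory region (hypothetical)."""

    def __init__(self, base, size):
        self.base = base                 # assumed local base address
        self.size = size
        self.memory = bytearray(size)

    def translate(self, offset, length):
        # Map a segment-relative offset to a local address, rejecting any
        # access that would fall outside the registered region.
        if offset < 0 or length < 0 or offset + length > self.size:
            raise ValueError("remote access outside segment")
        return self.base + offset

    def put(self, offset, data):
        self.translate(offset, len(data))            # bounds check only
        self.memory[offset:offset + len(data)] = data

    def get(self, offset, length):
        self.translate(offset, length)               # bounds check only
        return bytes(self.memory[offset:offset + length])
```

A remote PUT then reduces to a bounds-checked copy, for example `Segment(0x40000000, 16).put(4, b"data")`, and an out-of-range request fails before touching memory.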

On the software side, the authors provide two native implementations of the GASNet Core API: one for x86 hosts and another for ARMv7‑based embedded processors. Both implementations preserve the exact function signatures and semantics of the reference GASNet library, so a single source code base can be compiled for either platform without modification. This cross‑platform compatibility is especially valuable for heterogeneous systems in which an ARM‑based board hosts an FPGA accelerator. Experimental results on such a platform show a two‑ to three‑fold performance gain over a pure‑software GASNet stack.

Performance evaluation uses standard GASNet micro‑benchmarks (PUT, GET, EXCHANGE) as well as real‑world kernels such as dense matrix multiplication and Fast Fourier Transform. For large data transfers, the FPGA engine achieves roughly 45 % lower latency and a 2.8× higher sustained throughput than the software baseline. End‑to‑end application runs show overall execution time reductions exceeding 30 %, confirming that the FPGA is not merely an arithmetic accelerator but also an effective communication substrate that alleviates system‑wide bottlenecks.
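To relate the communication speedup to the end-to-end figure, a back-of-the-envelope Amdahl-style estimate can help. The sketch below rests on a strong simplifying assumption that is not taken from the paper: only the communication share of the runtime speeds up, and it does so by the quoted 2.8× throughput factor. The function names are illustrative.

```python
def remaining_time_fraction(comm_fraction, comm_speedup):
    """Fraction of the original runtime left when only communication speeds up."""
    return (1.0 - comm_fraction) + comm_fraction / comm_speedup


def comm_fraction_for_reduction(target_reduction, comm_speedup):
    """Communication share of runtime needed to reach a given overall reduction."""
    return target_reduction / (1.0 - 1.0 / comm_speedup)


# Under the stated assumption: what share of runtime must be communication
# for a 2.8x communication speedup to yield a 30% overall reduction?
needed_share = comm_fraction_for_reduction(0.30, 2.8)
```

Under that assumption, `needed_share` comes out at roughly 0.47, meaning communication would have to account for a little under half of the original runtime. This is an illustration of the arithmetic only, not a measurement reported in the paper.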

The paper also discusses the technical challenges encountered during integration. Maintaining memory consistency between FPGA and CPU required explicit insertion of cache‑flush and memory‑barrier instructions in the hardware pipeline. Implementing GASNet’s asynchronous event model demanded an on‑chip event queue capable of delivering non‑blocking notifications to both hardware and software participants. Automatic buffer management and address translation were incorporated to spare developers from low‑level bookkeeping.
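The non-blocking event queue described above can be pictured as a bounded ring buffer whose operations never wait. This is a software sketch under assumptions: the `EventQueue` class, its fixed capacity, and the `try_post` and `try_poll` names are hypothetical and do not come from the paper or from GASNet.

```python
class EventQueue:
    """Bounded ring buffer with non-blocking post and poll (hypothetical)."""

    def __init__(self, capacity):
        self.slots = [None] * capacity
        self.head = 0    # index of the next event to deliver
        self.tail = 0    # index of the next free slot
        self.count = 0

    def try_post(self, event):
        # Producer side: report failure instead of waiting when full.
        if self.count == len(self.slots):
            return False
        self.slots[self.tail] = event
        self.tail = (self.tail + 1) % len(self.slots)
        self.count += 1
        return True

    def try_poll(self):
        # Consumer side: return None instead of waiting when empty.
        if self.count == 0:
            return None
        event = self.slots[self.head]
        self.slots[self.head] = None
        self.head = (self.head + 1) % len(self.slots)
        self.count -= 1
        return event
```

Because neither call blocks, a consumer can interleave polling with useful work, and a producer that sees `False` can apply back-pressure rather than stall.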

Limitations are acknowledged: the current design relies on static reconfiguration, so kernels cannot be swapped or re‑parameterized at runtime. The authors outline future work that includes support for dynamic partial reconfiguration, tighter coupling with higher‑level programming models such as OpenMP and MPI, and the addition of security mechanisms for remote memory accesses.

In summary, the study demonstrates that by exposing a standard software communication API within FPGA fabric, it is possible to achieve transparent, high‑performance interaction between software processes and hardware accelerators. This approach narrows the gap between software development and hardware acceleration, offering a practical path toward more efficient heterogeneous computing systems.
