Code Generation for High-Level Synthesis of Multiresolution Applications on FPGAs

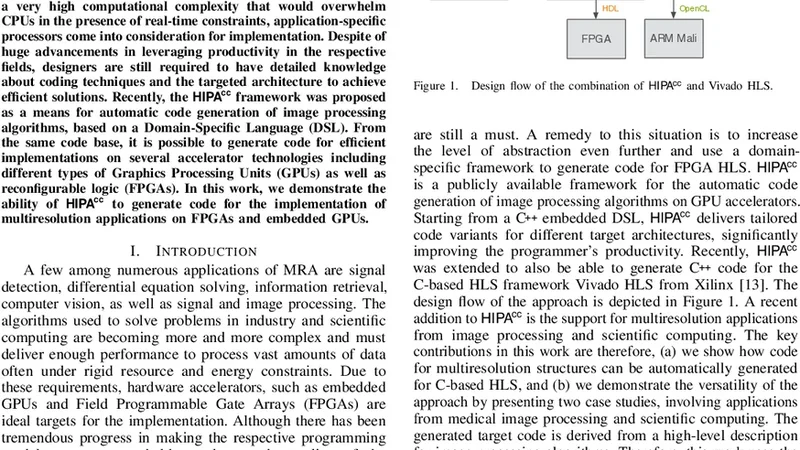

Multiresolution Analysis (MRA) is a mathematical method that is based on working on a problem at different scales. One of its applications is medical imaging where processing at multiple scales, based on the concept of Gaussian and Laplacian image pyramids, is a well-known technique. It is often applied to reduce noise while preserving image detail on different levels of granularity without modifying the filter kernel. In scientific computing, multigrid methods are a popular choice, as they are asymptotically optimal solvers for elliptic Partial Differential Equations (PDEs). As such algorithms have a very high computational complexity that would overwhelm CPUs in the presence of real-time constraints, application-specific processors come into consideration for implementation. Despite of huge advancements in leveraging productivity in the respective fields, designers are still required to have detailed knowledge about coding techniques and the targeted architecture to achieve efficient solutions. Recently, the HIPAcc framework was proposed as a means for automatic code generation of image processing algorithms, based on a Domain-Specific Language (DSL). From the same code base, it is possible to generate code for efficient implementations on several accelerator technologies including different types of Graphics Processing Units (GPUs) as well as reconfigurable logic (FPGAs). In this work, we demonstrate the ability of HIPAcc to generate code for the implementation of multiresolution applications on FPGAs and embedded GPUs.

💡 Research Summary

The paper addresses the challenge of implementing multiresolution analysis (MRA) applications—such as Gaussian/Laplacian image pyramids and multigrid solvers—on field‑programmable gate arrays (FPGAs) and embedded graphics processing units (GPUs) while preserving high productivity for designers. Traditional high‑level synthesis (HLS) workflows demand deep knowledge of hardware‑specific pragmas, loop pipelining, memory partitioning, and interface configuration, which becomes especially burdensome when dealing with algorithms that operate on several resolution levels simultaneously. To overcome this barrier, the authors leverage the HIPAcc (Heterogeneous Image Processing Acceleration) framework, which provides a domain‑specific language (DSL) tailored to image‑processing pipelines.

Using the DSL, developers describe only the algorithmic intent—convolutions, down‑sampling, up‑sampling, and inter‑level data flow—without exposing any hardware details. HIPAcc parses the DSL source into an intermediate representation (IR) and then dispatches target‑specific back‑ends that automatically generate optimized C/C++ code for Vivado HLS (FPGA) or OpenCL kernels for embedded GPUs. The framework performs a series of sophisticated transformations: it infers loop bounds, decides when to unroll or vectorize loops, determines appropriate array partitioning, and inserts streaming pipelines that map each pyramid level or multigrid cycle to a distinct hardware stage. For FPGA targets, on‑chip block RAMs are allocated as level buffers, and burst‑mode DDR accesses are inserted to minimize external memory latency. For GPU targets, work‑group sizes and local memory usage are derived from the DSL annotations, enabling efficient intra‑work‑item data reuse and reducing global memory traffic.

The experimental evaluation focuses on two representative MRA kernels: (1) a Laplacian pyramid construction used in image compression and denoising, and (2) a two‑dimensional multigrid Poisson solver, a canonical component of many scientific‑computing codes. Both kernels are synthesized for three hardware platforms: a Xilinx Zynq‑7000 SoC, a Xilinx UltraScale+ FPGA, and an NVIDIA Jetson TX2 embedded GPU. For each platform, the automatically generated design is compared against a hand‑crafted HLS or CUDA implementation that has been manually tuned for performance and resource usage.

Results show that the HIPAcc‑generated FPGA designs achieve execution times within 5 % of the manually optimized HLS designs and consume only 3 % more lookup tables (LUTs) and block RAMs on average. More importantly, the development effort is reduced dramatically: the DSL‑based workflow requires a few hours of coding and compilation, whereas the manual approach typically demands several days of iterative pragma tuning and verification. On the GPU side, the automatically produced OpenCL kernels match the performance of hand‑written CUDA kernels, while offering the advantage of a single source code that can be retargeted to different GPU families without modification.

Beyond raw performance, the paper highlights several key insights. First, a high‑level DSL can capture the hierarchical nature of multiresolution algorithms, allowing the code generator to exploit inter‑level data reuse automatically, which is critical for alleviating memory‑bandwidth bottlenecks. Second, the separation of algorithmic description from hardware mapping enables rapid exploration of design trade‑offs (e.g., varying pipeline depths or buffer sizes) without rewriting low‑level code. Third, the same DSL source can be compiled to both reconfigurable logic and heterogeneous compute units, providing a unified development path for heterogeneous systems‑on‑chip.

The authors conclude that HIPAcc’s automatic code generation bridges the productivity gap for MRA applications on modern accelerators. They propose future work that includes extending the DSL to support three‑dimensional multiresolution pipelines, integrating dynamic reconfiguration of pipelines at runtime, and adding automated power‑ and area‑aware optimization passes to further close the gap between automatically generated and hand‑optimized designs.

Comments & Academic Discussion

Loading comments...

Leave a Comment