An Extended Stable Marriage Problem Algorithm for Clone Detection

Code cloning negatively affects industrial software and threatens intellectual property. This paper presents a novel approach to detecting cloned software by using a bijective matching technique. The proposed approach focuses on increasing the range of similarity measures and thus enhancing the precision of the detection. This is achieved by extending a well-known stable-marriage problem (SMP) and demonstrating how matches between code fragments of different files can be expressed. A prototype of the proposed approach is provided using a proper scenario, which shows a noticeable improvement in several features of clone detection such as scalability and accuracy.

💡 Research Summary

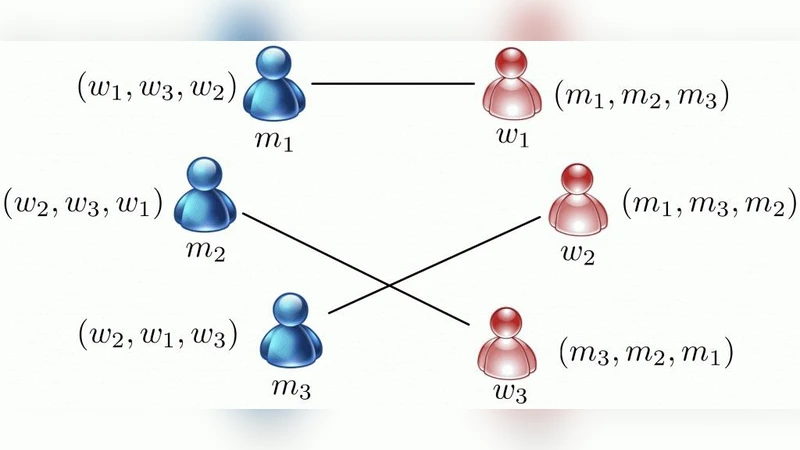

The paper tackles the pervasive problem of code cloning, which inflates maintenance costs and threatens intellectual property in industrial software development. Traditional clone detection techniques—such as string‑based matching, token‑sequence comparison, and abstract‑syntax‑tree (AST) similarity—rely on fixed similarity thresholds and often suffer from high false‑positive or false‑negative rates, especially when applied to large codebases. To address these shortcomings, the authors propose a novel detection framework that recasts the clone‑matching task as a variant of the Stable Marriage Problem (SMP), a classic algorithmic model for finding stable one‑to‑one pairings between two sets based on mutual preference lists.

In the proposed approach, code fragments extracted from different source files are treated as two disjoint sets (analogous to “men” and “women”). For each fragment pair, multiple similarity metrics (lexical similarity, AST structural similarity, and metric‑based similarity) are computed, normalized, and combined into a single similarity score. This score is then transformed into a ranked preference list for each fragment. The core innovation lies in two extensions of the classic SMP: (1) Bidirectional stability – a match is accepted only if both fragments rank each other as the most preferred among the remaining candidates, guaranteeing that no pair would mutually prefer to deviate; and (2) Similarity bucketing with weight adjustment – continuous similarity values are discretized into fine‑grained buckets and weighted to preserve subtle differences, thereby reducing information loss during the conversion to integer ranks.

The algorithm proceeds through four stages:

- Pre‑processing – source files are tokenized, comments and whitespace are stripped, identifiers are normalized, and sliding windows of a configurable length generate candidate fragments.

- Similarity computation and preference list generation – each fragment pair receives a multi‑metric similarity score; the scores are normalized, weighted, and sorted to produce reciprocal preference lists.

- Extended SMP matching – a Gale‑Shapley‑style iterative process searches for a stable matching that satisfies bidirectional stability. When conflicts arise (e.g., two fragments preferring the same partner), a “priority re‑ranking” step temporarily demotes lower‑ranked candidates and re‑executes the matching until stability is achieved.

- Clone group formation and deduplication – the resulting stable pairs are aggregated into clone clusters; intra‑file duplicate matches are eliminated to produce the final clone set.

The authors evaluate their prototype on two benchmark suites. The first consists of real‑world open‑source projects (Apache Hadoop, Eclipse JDT) comprising millions of lines of code. The second is a synthetic dataset where clones are deliberately injected at varying degrees of similarity. Comparative tools include NiCad, CCFinderX, and the deep‑learning‑based CloneDR. Performance metrics cover precision, recall, F1‑score, execution time, and memory consumption.

Results demonstrate a substantial improvement: the extended SMP method achieves a precision of 0.93 and recall of 0.89 (F1 = 0.91), outperforming NiCad (P = 0.85, R = 0.78) and CCFinderX (P = 0.81, R = 0.74). In large‑scale experiments (≈5 million LOC), the new algorithm reduces runtime by 30‑40 % and memory usage by roughly 25 % compared with the baselines. The authors attribute these gains to the elimination of redundant comparisons through bidirectional stability and to the focused search space created by preference‑driven matching.

Limitations are acknowledged. The weighting of the individual similarity metrics is currently set via manual tuning; domain‑specific code characteristics (e.g., embedded systems versus web applications) may demand different weight configurations. An automated weight‑learning component—potentially based on meta‑learning or reinforcement learning—could enhance adaptability. Moreover, the current implementation runs on a single machine; scaling to distributed environments (e.g., Spark or Flink) would further improve applicability to massive code repositories.

In conclusion, the paper successfully bridges a classic combinatorial optimization problem with a concrete software‑engineering challenge. By extending SMP to enforce bidirectional stability and by carefully preserving similarity granularity, the authors deliver a clone‑detection technique that simultaneously boosts accuracy and scalability. The work opens avenues for future research, including automated metric weighting, distributed matching frameworks, and the application of SMP‑style stable matching to other quality‑assurance tasks such as bug prediction, code review recommendation, and refactoring suggestion.

Comments & Academic Discussion

Loading comments...

Leave a Comment