POMDPs under Probabilistic Semantics

We consider partially observable Markov decision processes (POMDPs) with limit-average payoff, where a reward value in the interval [0,1] is associated to every transition, and the payoff of an infinite path is the long-run average of the rewards. We…

Authors: Krishnendu Chatterjee, Martin Chmelik

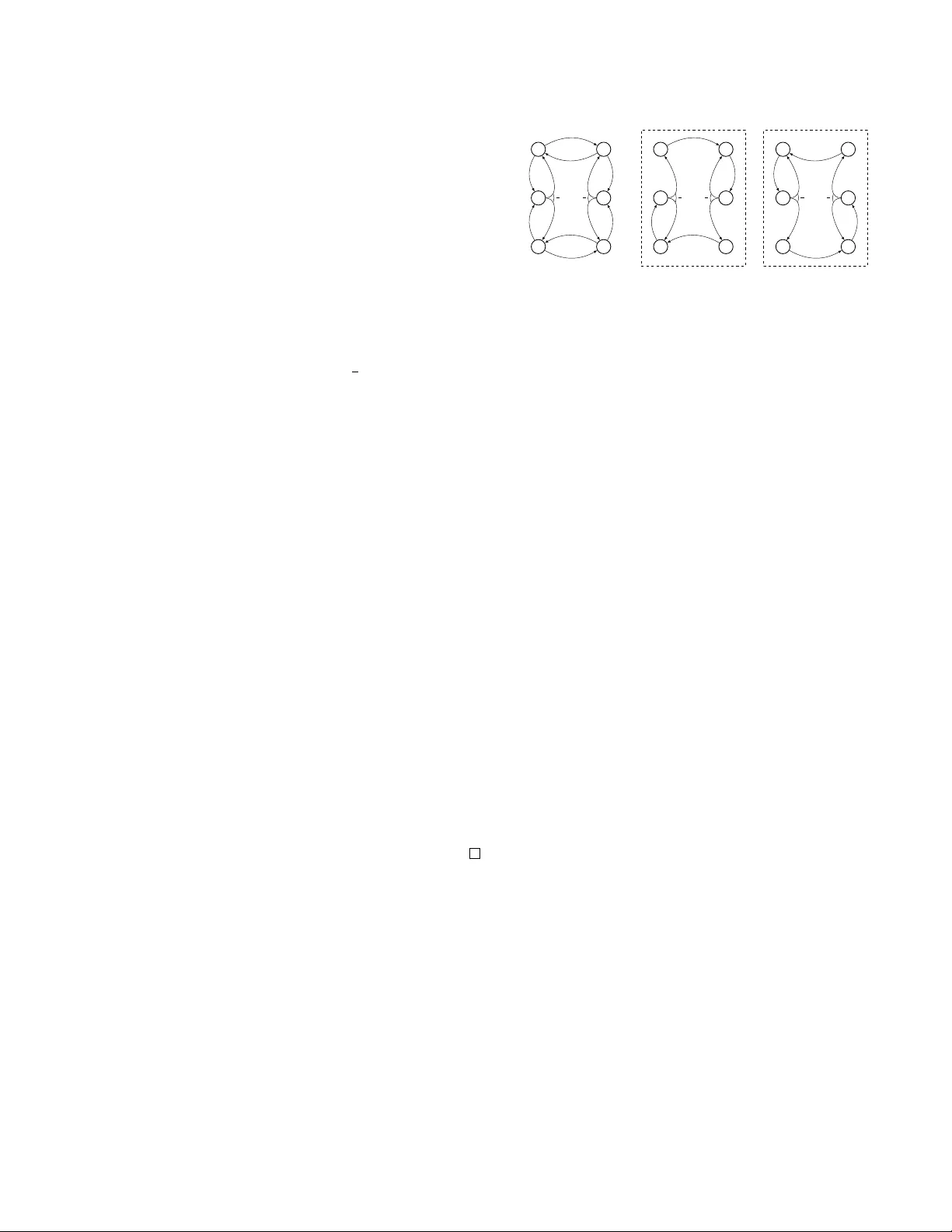

Krishnendu Chatterjee, IST Austria, krishnendu.chatterjee@ist.ac.at
Martin Chmelík, IST Austria, martin.chmelik@ist.ac.at

Abstract

We consider partially observable Markov decision processes (POMDPs) with limit-average payoff, where a reward value in the interval $[0,1]$ is associated to every transition, and the payoff of an infinite path is the long-run average of the rewards. We consider two types of path constraints: (i) a quantitative constraint defines the set of paths where the payoff is at least a given threshold $\lambda_1 \in (0,1]$; and (ii) a qualitative constraint, which is the special case of the quantitative constraint with $\lambda_1 = 1$. We consider the computation of the almost-sure winning set, where the controller needs to ensure that the path constraint is satisfied with probability 1. Our main results for the qualitative path constraint are as follows: (i) the problem of deciding the existence of a finite-memory controller is EXPTIME-complete; and (ii) the problem of deciding the existence of an infinite-memory controller is undecidable. For the quantitative path constraint we show that the problem of deciding the existence of a finite-memory controller is undecidable.

1 Introduction

Partially observable Markov decision processes (POMDPs). Markov decision processes (MDPs) are standard models for probabilistic systems that exhibit both probabilistic and nondeterministic behavior [10]. MDPs have been used to model and solve control problems for stochastic systems [7, 23]: nondeterminism represents the freedom of the controller to choose a control action, while the probabilistic component of the behavior describes the system response to control actions.
In perfect-observation (or perfect-information) MDPs (PIMDPs) the controller can observe the current state of the system to choose the next control actions, whereas in partially observable MDPs (POMDPs) the state space is partitioned according to observations that the controller can observe, i.e., given the current state, the controller can only view the observation of the state (the partition the state belongs to), but not the precise state [20]. POMDPs provide the appropriate model to study a wide variety of applications such as computational biology [5], speech processing [19], image processing [4], robot planning [13, 11], and reinforcement learning [12], to name a few. POMDPs also subsume many other powerful computational models such as probabilistic finite automata (PFA) [24, 21], since probabilistic finite automata (also known as blind POMDPs) are a special case of POMDPs with a single observation.

Limit-average payoff. A payoff function maps every infinite path (infinite sequence of state-action pairs) of a POMDP to a real value. The most well-studied payoff in the setting of POMDPs is the limit-average payoff, where every state-action pair is assigned a real-valued reward in the interval $[0,1]$ and the payoff of an infinite path is the long-run average of the rewards on the path [7, 23]. POMDPs with limit-average payoff provide the theoretical framework to study many important problems of practical relevance, including probabilistic planning and several stochastic optimization problems [11, 2, 16, 17, 27].

Expectation vs probabilistic semantics. Traditionally, MDPs with limit-average payoff have been studied with the expectation semantics, where the goal of the controller is to maximize the expected limit-average payoff. The expected payoff value can be $\frac{1}{2}$ when with probability $\frac{1}{2}$ the payoff is 1, and with the remaining probability the payoff is 0.
In many applications of system analysis (such as robot planning and control) the relevant question is the probability measure of the paths that satisfy certain criteria, e.g., whether the probability measure of the paths such that the limit-average payoff is 1 (or the payoff is at least $\frac{1}{2}$) is at least a given threshold (e.g., see [1, 13]). We classify the path constraints for limit-average payoff as follows: (1) the quantitative constraint defines the set of paths with limit-average payoff at least $\lambda_1$, for a threshold $\lambda_1 \in (0,1]$; and (2) the qualitative constraint is the special case of the quantitative constraint that defines the set of paths with limit-average payoff 1 (i.e., the special case with $\lambda_1 = 1$). We refer to the problem where the controller must satisfy a path constraint with a probability threshold $\lambda_2 \in (0,1]$ as the probabilistic semantics. An important special case of the probabilistic semantics is the almost-sure semantics, where the probability threshold is 1. The almost-sure semantics is of great importance because there are many applications where the requirement is to know whether the correct behavior arises with probability 1. For instance, when analyzing a randomized embedded scheduler, the relevant question is whether every thread progresses with probability 1. Even in settings where it suffices to satisfy certain specifications with probability $\lambda_2 < 1$, the correct choice of $\lambda_2$ is a challenging problem, due to the simplifications introduced during modeling. For example, in the analysis of randomized distributed algorithms it is quite common to require correctness with probability 1 (e.g., [22, 26]). Besides its importance in practical applications, almost-sure convergence is a fundamental concept in probability theory, and provides a stronger convergence guarantee than convergence in expectation [6].

Previous results.
There are several deep undecidability results established for the special case of probabilistic finite automata (PFA) (that immediately imply undecidability for POMDPs). The basic undecidability results are for PFA over finite words: the emptiness problem for PFA under probabilistic semantics is undecidable over finite words [24, 21, 3]; and it was shown in [16] that even the following approximation version is undecidable: for any fixed $0 < \epsilon < \frac{1}{2}$, given a probabilistic automaton and the guarantee that either (i) there is a word accepted with probability at least $1 - \epsilon$; or (ii) all words are accepted with probability at most $\epsilon$; decide whether it is case (i) or case (ii). The almost-sure problem for probabilistic automata over finite words reduces to the non-emptiness question of universal automata over finite words and is PSPACE-complete. However, another related decision question, whether for every $\epsilon > 0$ there is a word that is accepted with probability at least $1 - \epsilon$ (called the value 1 problem), is undecidable for probabilistic automata over finite words [8]. Also observe that all undecidability results for probabilistic automata over finite words carry over to POMDPs where the controller is restricted to finite-memory strategies. The importance of finite-memory strategies in applications has been established in [9, 14, 18].

Table 1: Complexity. New results are in bold.

| | Almost-sure semantics, fin. mem. | Almost-sure semantics, inf. mem. | Prob. semantics, fin./inf. mem. |
|---|---|---|---|
| PFA | PSPACE-c | PSPACE-c | Undec. |
| POMDP, qual. constr. | **EXPTIME-c** | **Undec.** | Undec. |
| POMDP, quan. constr. | **Undec.** | **Undec.** | Undec. |

Our contributions. Since under the general probabilistic semantics the decision problems are undecidable even for PFA, we consider POMDPs with limit-average payoff under the almost-sure semantics. We present a complete picture of decidability as well as optimal complexity.

(Almost-sure winning for qualitative constraint).
We first consider limit-average payoff with qualitative constraint under almost-sure semantics. We show that belief-based strategies are not sufficient (where a belief-based strategy is based on the subset construction that remembers the possible set of current states): we show that there exist POMDPs with limit-average payoff with qualitative constraint where a finite-memory almost-sure winning strategy exists but there exists no belief-based almost-sure winning strategy. Our counter-example shows that standard techniques based on subset construction (to construct an exponential-size PIMDP) are not adequate to solve the problem. We then show one of our main results: given a POMDP with $|S|$ states and $|\mathcal{A}|$ actions, if there is a finite-memory almost-sure winning strategy to satisfy the limit-average payoff with qualitative constraint, then there is an almost-sure winning strategy that uses at most $2^{3\cdot|S| + |\mathcal{A}|}$ memory. Our exponential memory upper bound is asymptotically optimal, as even for PFA over finite words, exponential memory is required for almost-sure winning (this follows from the fact that the shortest witness word for non-emptiness of universal finite automata is at least exponential). We then show that the problem of deciding the existence of a finite-memory almost-sure winning strategy for limit-average payoff with qualitative constraint is EXPTIME-complete for POMDPs. In contrast to our result for finite-memory strategies, we show that deciding the existence of an infinite-memory almost-sure winning strategy for limit-average payoff with qualitative constraint is undecidable for POMDPs.

(Almost-sure winning with quantitative constraint).
In contrast to our decidability result under finite-memory strategies for qualitative constraint, we show that the almost-sure winning problem for limit-average payoff with quantitative constraint is undecidable even for finite-memory strategies for POMDPs.

In summary we establish the precise decidability frontier for POMDPs with limit-average payoff under probabilistic semantics (see Table 1). For practical purposes, the most prominent question is the problem of finite-memory strategies, and for finite-memory strategies we establish decidability with EXPTIME-complete complexity for the important special case of qualitative constraint under almost-sure semantics.

2 Definitions

We present the definitions of POMDPs, strategies, objectives, and other basic notions required for our results. We follow standard notations from [23, 15].

Notations. Given a finite set $X$, we denote by $\mathcal{P}(X)$ the set of subsets of $X$, i.e., $\mathcal{P}(X)$ is the power set of $X$. A probability distribution $f$ on $X$ is a function $f : X \to [0,1]$ such that $\sum_{x \in X} f(x) = 1$, and we denote by $\mathcal{D}(X)$ the set of all probability distributions on $X$. For $f \in \mathcal{D}(X)$ we denote by $\mathrm{Supp}(f) = \{x \in X \mid f(x) > 0\}$ the support of $f$.

Definition 1 (POMDP). A Partially Observable Markov Decision Process (POMDP) is a tuple $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma, s_0)$ where: (i) $S$ is a finite set of states; (ii) $\mathcal{A}$ is a finite alphabet of actions; (iii) $\delta : S \times \mathcal{A} \to \mathcal{D}(S)$ is a probabilistic transition function that given a state $s$ and an action $a \in \mathcal{A}$ gives the probability distribution over the successor states, i.e., $\delta(s,a)(s')$ denotes the transition probability from $s$ to $s'$ given action $a$; (iv) $\mathcal{O}$ is a finite set of observations; (v) $\gamma : S \to \mathcal{O}$ is an observation function that maps every state to an observation; and (vi) $s_0$ is the initial state.

Given $s, s' \in S$ and $a \in \mathcal{A}$, we also write $\delta(s' \mid s, a)$ for $\delta(s,a)(s')$.
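
To make Definition 1 concrete, the following is a minimal Python sketch of the tuple $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma, s_0)$ as a data structure. The class name `POMDP`, its field names, the helpers `validate` and `supp`, and the two-state instance `toy` are illustrative assumptions and do not come from the paper.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple

State = str
Action = str
Obs = str
Dist = Dict[State, float]  # a probability distribution over successor states


@dataclass
class POMDP:
    states: FrozenSet[State]                 # S
    actions: FrozenSet[Action]               # A
    delta: Dict[Tuple[State, Action], Dist]  # delta(s, a) in D(S)
    observations: FrozenSet[Obs]             # O
    gamma: Dict[State, Obs]                  # gamma : S -> O
    initial: State                           # s_0

    def validate(self) -> None:
        """Check that the tuple is well formed in the sense of Definition 1."""
        assert self.initial in self.states
        for s in self.states:
            assert self.gamma[s] in self.observations
            for a in self.actions:
                dist = self.delta[(s, a)]
                assert set(dist) <= self.states
                assert all(0.0 <= p <= 1.0 for p in dist.values())
                assert abs(sum(dist.values()) - 1.0) < 1e-9

    def supp(self, s: State, a: Action) -> FrozenSet[State]:
        """Supp(delta(s, a)): successors reached with positive probability."""
        return frozenset(t for t, p in self.delta[(s, a)].items() if p > 0)


# Hypothetical two-state instance with a single observation (not from the paper).
toy = POMDP(
    states=frozenset({"s0", "s1"}),
    actions=frozenset({"a", "b"}),
    delta={
        ("s0", "a"): {"s0": 0.5, "s1": 0.5},
        ("s0", "b"): {"s0": 1.0},
        ("s1", "a"): {"s1": 1.0},
        ("s1", "b"): {"s0": 1.0},
    },
    observations=frozenset({"o"}),
    gamma={"s0": "o", "s1": "o"},
    initial="s0",
)
toy.validate()
```

Because `toy` has a single observation, it is a blind POMDP in the sense used in the introduction; the controller learns nothing about the current state from what it observes.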

A state $s$ is absorbing if for all actions $a$ we have $\delta(s,a)(s) = 1$ (i.e., $s$ is never left once it is reached). For an observation $o$, we denote by $\gamma^{-1}(o) = \{s \in S \mid \gamma(s) = o\}$ the set of states with observation $o$. For a set $U \subseteq S$ of states and $O \subseteq \mathcal{O}$ of observations we denote $\gamma(U) = \{o \in \mathcal{O} \mid \exists s \in U.\ \gamma(s) = o\}$ and $\gamma^{-1}(O) = \bigcup_{o \in O} \gamma^{-1}(o)$.

Plays and belief-updates. A play (or a path) in a POMDP is an infinite sequence $(s_0, a_0, s_1, a_1, s_2, a_2, \ldots)$ of states and actions such that for all $i \geq 0$ we have $\delta(s_i, a_i)(s_{i+1}) > 0$. We write $\Omega$ for the set of all plays. For a finite prefix $w = (s_0, a_0, s_1, a_1, \ldots, s_n)$ we denote by $\gamma(w) = (\gamma(s_0), a_0, \gamma(s_1), a_1, \ldots, \gamma(s_n))$ the observation and action sequence associated with $w$. For a finite sequence $\rho = (o_0, a_0, o_1, a_1, \ldots, o_n)$ of observations and actions, the belief $\mathcal{B}(\rho)$ after the prefix $\rho$ is the set of states in which a finite prefix of a play can be after the sequence $\rho$ of observations and actions, i.e., $\mathcal{B}(\rho) = \{s_n = \mathrm{Last}(w) \mid w = (s_0, a_0, s_1, a_1, \ldots, s_n),\ w \text{ is a prefix of a play, and for all } 0 \leq i \leq n.\ \gamma(s_i) = o_i\}$. The belief-updates associated with finite prefixes are as follows: for prefixes $w$ and $w' = w \cdot a \cdot s$ the belief update is defined inductively as $\mathcal{B}(\gamma(w')) = \left(\bigcup_{s_1 \in \mathcal{B}(\gamma(w))} \mathrm{Supp}(\delta(s_1, a))\right) \cap \gamma^{-1}(\gamma(s))$.

Strategies. A strategy (or a policy) is a recipe to extend prefixes of plays and is a function $\sigma : (S \cdot \mathcal{A})^* \cdot S \to \mathcal{D}(\mathcal{A})$ that given a finite history (i.e., a finite prefix of a play) selects a probability distribution over the actions. Since we consider POMDPs, strategies are observation-based, i.e., for all histories $w = (s_0, a_0, s_1, a_1, \ldots, a_{n-1}, s_n)$ and $w' = (s'_0, a_0, s'_1, a_1, \ldots, a_{n-1}, s'_n)$ such that for all $0 \leq i \leq n$ we have $\gamma(s_i) = \gamma(s'_i)$ (i.e., $\gamma(w) = \gamma(w')$), we must have $\sigma(w) = \sigma(w')$. In other words, if the observation sequence is the same, then the strategy cannot distinguish between the prefixes and must play the same. We now present an equivalent definition of observation-based strategies in which the memory of the strategy is explicitly specified; this definition is required to present finite-memory strategies.

Definition 2 (Strategies with memory and finite-memory strategies). A strategy with memory is a tuple $\sigma = (\sigma_u, \sigma_n, M, m_0)$ where: (i) (Memory set). $M$ is a denumerable set (finite or infinite) of memory elements (or memory states). (ii) (Action selection function). The function $\sigma_n : M \to \mathcal{D}(\mathcal{A})$ is the action selection function that given the current memory state gives the probability distribution over actions. (iii) (Memory update function). The function $\sigma_u : M \times \mathcal{O} \times \mathcal{A} \to \mathcal{D}(M)$ is the memory update function that given the current memory state, the current observation and action, updates the memory state probabilistically. (iv) (Initial memory). The memory state $m_0 \in M$ is the initial memory state. A strategy is a finite-memory strategy if the set $M$ of memory elements is finite. A strategy is pure (or deterministic) if the memory update function and the action selection function are deterministic, i.e., $\sigma_u : M \times \mathcal{O} \times \mathcal{A} \to M$ and $\sigma_n : M \to \mathcal{A}$. A strategy is memoryless (or stationary) if it is independent of the history but depends only on the current observation, and can be represented as a function $\sigma : \mathcal{O} \to \mathcal{D}(\mathcal{A})$.

Objectives. An objective in a POMDP $G$ is a measurable set $\varphi \subseteq \Omega$ of plays. We first define the limit-average payoff (also known as mean-payoff) function. Given a POMDP we consider a reward function $r : S \times \mathcal{A} \to [0,1]$ that maps every state-action pair to a real-valued reward in the interval $[0,1]$.
The LimAvg payoff function maps every play to a real-valued reward that is the long-run average of the rewards of the play. Formally, given a play $\rho = (s_0, a_0, s_1, a_1, s_2, a_2, \ldots)$ we have $\mathrm{LimAvg}(r, \rho) = \liminf_{n \to \infty} \frac{1}{n} \cdot \sum_{i=0}^{n} r(s_i, a_i)$. When the reward function $r$ is clear from the context, we drop it for simplicity. For a reward function $r$, we consider two types of limit-average payoff constraints: (i) Qualitative constraint. The qualitative constraint limit-average objective $\mathrm{LimAvg}_{=1}$ defines the set of paths such that the limit-average payoff is 1; i.e., $\mathrm{LimAvg}_{=1} = \{\rho \mid \mathrm{LimAvg}(\rho) = 1\}$. (ii) Quantitative constraints. Given a threshold $\lambda_1 \in (0,1)$, the quantitative constraint limit-average objective $\mathrm{LimAvg}_{>\lambda_1}$ defines the set of paths such that the limit-average payoff is strictly greater than $\lambda_1$; i.e., $\mathrm{LimAvg}_{>\lambda_1} = \{\rho \mid \mathrm{LimAvg}(\rho) > \lambda_1\}$.

Probabilistic and almost-sure winning. Given a POMDP, an objective $\varphi$, and a class $\mathcal{C}$ of strategies, we say that: (i) a strategy $\sigma \in \mathcal{C}$ is almost-sure winning if $\mathbb{P}^{\sigma}(\varphi) = 1$; (ii) a strategy $\sigma \in \mathcal{C}$ is probabilistic winning, for a threshold $\lambda_2 \in (0,1)$, if $\mathbb{P}^{\sigma}(\varphi) \geq \lambda_2$.

Theorem 1 (Results for PFA (probabilistic automata over finite words) [21]). The following assertions hold for the class $\mathcal{C}$ of all infinite-memory as well as finite-memory strategies: (1) the probabilistic winning problem is undecidable for PFA; and (2) the almost-sure winning problem is PSPACE-complete for PFA.

Since PFA are a special case of POMDPs, the undecidability of the probabilistic winning problem for PFA implies the undecidability of the probabilistic winning problem for POMDPs with both qualitative and quantitative constraint limit-average objectives.
The almost-sure winning problem is PSPACE-complete for PFAs, and we study the complexity of the almost-sure winning problem for POMDPs with both qualitative and quantitative constraint limit-average objectives, under infinite-memory and finite-memory strategies.

Basic properties of Markov chains. Since our proofs will use results on Markov chains, we start with some basic results related to Markov chains.

Markov chains and recurrent classes. A Markov chain $G = (S, \delta)$ consists of a finite set $S$ of states and a probabilistic transition function $\delta : S \to \mathcal{D}(S)$. Given the Markov chain, we consider the directed graph $(S, E)$ where $E = \{(s, s') \mid \delta(s' \mid s) > 0\}$. A recurrent class $C \subseteq S$ of the Markov chain is a bottom strongly connected component (scc) in the graph $(S, E)$ (a bottom scc is an scc with no edges out of the scc). We denote by $\mathrm{Rec}(G)$ the set of recurrent classes of the Markov chain, i.e., $\mathrm{Rec}(G) = \{C \mid C \text{ is a recurrent class}\}$. Given a state $s$ and a set $U$ of states, we say that $U$ is reachable from $s$ if there is a path from $s$ to some state in $U$ in the graph $(S, E)$. Given a state $s$ of the Markov chain we denote by $\mathrm{Rec}(G)(s) \subseteq \mathrm{Rec}(G)$ the subset of the recurrent classes reachable from $s$ in $G$. A state is recurrent if it belongs to a recurrent class. The following standard properties of reachability and the recurrent classes will be used in our proofs:

• Property 1. (a) For a set $T \subseteq S$, if for all states $s \in S$ there is a path to $T$ (i.e., for all states there is a positive probability to reach $T$), then from all states the set $T$ is reached with probability 1. (b) For all states $s$, if the Markov chain starts at $s$, then the set $\mathcal{C} = \bigcup_{C \in \mathrm{Rec}(G)(s)} C$ is reached with probability 1, i.e., the set of recurrent classes is reached with probability 1.
• Property 2.
For a recurrent class $C$, for all states $s \in C$, if the Markov chain starts at $s$, then for all states $t \in C$ the state $t$ is visited infinitely often with probability 1, and is visited with positive average frequency (i.e., positive limit-average frequency) with probability 1.

Lemma 1 is a consequence of the above properties.

Lemma 1. Let $G = (S, \delta)$ be a Markov chain with a reward function $r : S \to [0,1]$, and $s \in S$ a state of the Markov chain. The state $s$ is almost-sure winning for the objective $\mathrm{LimAvg}_{=1}$ iff for all recurrent classes $C \in \mathrm{Rec}(G)(s)$ and for all states $s_1 \in C$ we have $r(s_1) = 1$.

Markov chains under finite-memory strategies. We now define Markov chains obtained by fixing a finite-memory strategy in a POMDP $G$. A finite-memory strategy $\sigma = (\sigma_u, \sigma_n, M, m_0)$ induces a Markov chain $(S \times M, \delta_\sigma)$, denoted $G_\sigma$, with the probabilistic transition function $\delta_\sigma : S \times M \to \mathcal{D}(S \times M)$: given $s, s' \in S$ and $m, m' \in M$, the transition $\delta_\sigma((s', m') \mid (s, m))$ is the probability to go from state $(s, m)$ to state $(s', m')$ in one step under the strategy $\sigma$. The probability of transition can be decomposed as follows: (i) first an action $a \in \mathcal{A}$ is sampled according to the distribution $\sigma_n(m)$; (ii) then the next state $s'$ is sampled according to the distribution $\delta(s, a)$; and (iii) finally the new memory $m'$ is sampled according to the distribution $\sigma_u(m, \gamma(s'), a)$ (i.e., the new memory is sampled according to $\sigma_u$ given the old memory, the new observation and the action). More formally, we have: $\delta_\sigma((s', m') \mid (s, m)) = \sum_{a \in \mathcal{A}} \sigma_n(m)(a) \cdot \delta(s, a)(s') \cdot \sigma_u(m, \gamma(s'), a)(m')$.
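
The product formula for $\delta_\sigma$ and the criterion of Lemma 1 can be combined into a small executable check. The sketch below is one possible Python rendering under stated assumptions: the function names (`induced_chain`, `reachable`, `almost_sure_limavg1`), the dictionary encodings, and the three-state blind POMDP are all hypothetical, and the reward is assumed to depend only on the POMDP state (as in the Boolean-reward setting), so that it lifts directly to the states of the induced chain.

```python
from collections import defaultdict
from itertools import product


def induced_chain(states, memory, delta, gamma, sigma_n, sigma_u):
    """Chain on S x M: sum over actions a of
    sigma_n(m)(a) * delta(s, a)(s') * sigma_u(m, gamma(s'), a)(m')."""
    chain = {}
    for s, m in product(states, memory):
        row = defaultdict(float)
        for a, p_a in sigma_n[m].items():
            for s2, p_s in delta[(s, a)].items():
                for m2, p_m in sigma_u[(m, gamma[s2], a)].items():
                    row[(s2, m2)] += p_a * p_s * p_m
        chain[(s, m)] = {v: p for v, p in row.items() if p > 0}
    return chain


def reachable(chain, start):
    """States reachable from start along positive-probability edges."""
    seen, stack = {start}, [start]
    while stack:
        u = stack.pop()
        for v in chain[u]:
            if v not in seen:
                seen.add(v)
                stack.append(v)
    return seen


def almost_sure_limavg1(chain, start, reward):
    """Lemma 1 check, assuming the reward depends only on the POMDP state."""
    reach = {u: reachable(chain, u) for u in reachable(chain, start)}
    # u lies in a bottom scc iff every state reachable from u can reach u again.
    recurrent = [u for u, r in reach.items() if all(u in reach[v] for v in r)]
    return all(reward[s] == 1 for (s, _m) in recurrent)


# Hypothetical blind POMDP (single observation "o"), not taken from the paper.
states = ["u", "v", "w"]
gamma = {s: "o" for s in states}
reward = {"u": 1, "v": 1, "w": 0}
delta = {
    ("u", "a"): {"v": 1.0}, ("u", "b"): {"w": 1.0},
    ("v", "a"): {"w": 1.0}, ("v", "b"): {"u": 1.0},
    ("w", "a"): {"w": 1.0}, ("w", "b"): {"w": 1.0},
}

# Strategy 1: alternate a, b using two memory states 0 and 1.
alt_n = {0: {"a": 1.0}, 1: {"b": 1.0}}
alt_u = {(m, "o", a): {1 - m: 1.0} for m in (0, 1) for a in ("a", "b")}
alt_chain = induced_chain(states, [0, 1], delta, gamma, alt_n, alt_u)

# Strategy 2: always play a, with a single memory state.
const_n = {0: {"a": 1.0}}
const_u = {(0, "o", "a"): {0: 1.0}}
const_chain = induced_chain(states, [0], delta, gamma, const_n, const_u)

alternating_wins = almost_sure_limavg1(alt_chain, ("u", 0), reward)
always_a_wins = almost_sure_limavg1(const_chain, ("u", 0), reward)
```

In this toy instance the alternating strategy keeps the chain inside $\{(u,0),(v,1)\}$, where all rewards are 1, whereas always playing $a$ ends in the absorbing reward-0 state $w$. The recurrence test is quadratic in the number of reachable chain states; a linear-time scc algorithm could replace it.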

3 Finite-memory strategies with Qualitative Constraint

In this section we show the following three results for finite-memory strategies: (i) in POMDPs with $\mathrm{LimAvg}_{=1}$ objectives belief-based strategies are not sufficient for almost-sure winning; (ii) an exponential upper bound on the memory required by an almost-sure winning strategy for $\mathrm{LimAvg}_{=1}$ objectives; and (iii) the decision problem is EXPTIME-complete.

Belief is not sufficient. We now show with an example that there exist POMDPs with $\mathrm{LimAvg}_{=1}$ objectives where finite-memory randomized almost-sure winning strategies exist, but there exists no belief-based randomized almost-sure winning strategy (a belief-based strategy only uses memory that relies on the subset construction, where the subset denotes the possible current states, called the belief). We present the counter-example even for POMDPs with a restricted reward function $r$ assigning only Boolean rewards 0 and 1 to the states (the reward does not depend on the action).

Example 1. We consider a POMDP with state space $\{s_0, X, X', Y, Y', Z, Z'\}$ and action set $\{a, b\}$, and let $U = \{X, X', Y, Y', Z, Z'\}$. From the initial state $s_0$ all the other states are reached with uniform probability in one step, i.e., for all $s' \in U$ we have $\delta(s_0, a)(s') = \delta(s_0, b)(s') = \frac{1}{6}$. The transitions from the other states are shown in Figure 1. All states in $U$ have the same observation. The reward function $r$ assigns the reward 1 to states $X, X', Z, Z'$ and 0 to states $Y$ and $Y'$. The belief initially after one step is the set $U$ since from $s_0$ all of them are reached with positive probability.
The belief is always the set $U$ since every state has an input edge for every action, i.e., if the current belief is $U$ (the set of states that the POMDP is currently in with positive probability is $U$), then irrespective of whether $a$ or $b$ is chosen all states of $U$ are reached with positive probability and thus the belief is again $U$. There are three belief-based strategies: (i) $\sigma_1$ that always plays $a$; (ii) $\sigma_2$ that always plays $b$; or (iii) $\sigma_3$ that plays both $a$ and $b$ with positive probability. The Markov chains $G_{\sigma_1}$ and $G_{\sigma_2}$ are also shown in Figure 1, and the graph of $G_{\sigma_3}$ is the same as the POMDP $G$ (with edge labels removed). For all three strategies, the Markov chains contain the whole set $U$ as the reachable recurrent class, and it follows by Lemma 1 that none of the belief-based strategies $\sigma_1$, $\sigma_2$ or $\sigma_3$ is almost-sure winning for the $\mathrm{LimAvg}_{=1}$ objective. The strategy $\sigma_4$ that plays actions $a$ and $b$ alternately gives rise to a Markov chain where the recurrent classes do not intersect with $Y$ or $Y'$, and is a finite-memory almost-sure winning strategy for the $\mathrm{LimAvg}_{=1}$ objective.

3.1 Strategy complexity

For the rest of the subsection we fix a finite-memory almost-sure winning strategy $\sigma = (\sigma_u, \sigma_n, M, m_0)$ on the POMDP $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma, s_0)$ with a reward function $r$ for the objective $\mathrm{LimAvg}_{=1}$. Our goal is to construct an almost-sure winning strategy for the $\mathrm{LimAvg}_{=1}$ objective with memory size at most $\mathrm{Mem}^* = 2^{3\cdot|S|} \cdot 2^{|\mathcal{A}|}$. We start with a few definitions associated with strategy $\sigma$. For $m \in M$:

• The function $\mathrm{RecFun}_\sigma(m) : S \to \{0,1\}$ is such that $\mathrm{RecFun}_\sigma(m)(s)$ is 1 iff the state $(s, m)$ is recurrent in the Markov chain $G_\sigma$ and 0 otherwise.
• The function $\mathrm{AWFun}_\sigma(m) : S \to \{0,1\}$ is such that $\mathrm{AWFun}_\sigma(m)(s)$ is 1 iff the state $(s, m)$ is almost-sure winning for the $\mathrm{LimAvg}_{=1}$ objective in the Markov chain $G_\sigma$ and 0 otherwise.
• We also consider $\mathrm{Act}_\sigma(m) = \mathrm{Supp}(\sigma_n(m))$ that for every memory element gives the support of the probability distribution over actions played at $m$.

Figure 1: Belief is not sufficient. (Panels: POMDP $G$; MC $G_{\sigma_1}$ with Rec: $\{X, X', Y, Y', Z, Z'\}$; MC $G_{\sigma_2}$ with Rec: $\{X, X', Y, Y', Z, Z'\}$.)

Remark 1. Let $(s', m')$ be reachable from $(s, m)$ in $G_\sigma$. If the state $(s, m)$ is almost-sure winning for the $\mathrm{LimAvg}_{=1}$ objective, then the state $(s', m')$ is also almost-sure winning for the $\mathrm{LimAvg}_{=1}$ objective.

Collapsed graph of $\sigma$. Given the strategy $\sigma$ we define the notion of a collapsed graph $\mathrm{CoGr}(\sigma) = (V, E)$. The states of the graph are elements from the set $V = \{(Y, \mathrm{AWFun}_\sigma(m), \mathrm{RecFun}_\sigma(m), \mathrm{Act}_\sigma(m)) \mid Y \subseteq S \text{ and } m \in M\}$ and the initial state is $(\{s_0\}, \mathrm{AWFun}_\sigma(m_0), \mathrm{RecFun}_\sigma(m_0), \mathrm{Act}_\sigma(m_0))$. The edges in $E$ are labeled by actions in $\mathcal{A}$. Intuitively, the action-labeled edges of the graph depict the updates of the belief and the functions upon a particular action. Formally, there is an edge $(Y, \mathrm{AWFun}_\sigma(m), \mathrm{RecFun}_\sigma(m), \mathrm{Act}_\sigma(m)) \xrightarrow{a} (Y', \mathrm{AWFun}_\sigma(m'), \mathrm{RecFun}_\sigma(m'), \mathrm{Act}_\sigma(m'))$ in the collapsed graph $\mathrm{CoGr}(\sigma)$ iff there exists an observation $o \in \mathcal{O}$ such that (i) the action $a \in \mathrm{Act}_\sigma(m)$; (ii) the set $Y'$ is non-empty and it is the belief update from $Y$, under action $a$ and the observation $o$, i.e., $Y' = \left(\bigcup_{s \in Y} \mathrm{Supp}(\delta(s, a))\right) \cap \gamma^{-1}(o)$; and (iii) $m' \in \mathrm{Supp}(\sigma_u(m, o, a))$. Note that the number of states in the graph is bounded by $|V| \leq 2^{3\cdot|S|} \cdot 2^{|\mathcal{A}|} = \mathrm{Mem}^*$.

We now define the collapsed strategy for $\sigma$.
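
Condition (ii) on the edges of the collapsed graph is the usual belief update, which can be sketched in a few lines of Python. The function names `belief_update` and `belief_successors`, the dictionary encoding of $\delta$ and $\gamma$, and the three-state instance are assumptions made for illustration only; they do not appear in the paper.

```python
def belief_update(belief, action, obs, delta, gamma):
    """Y' = (union over s in Y of Supp(delta(s, a))) intersected with gamma^{-1}(o)."""
    successors = set()
    for s in belief:
        successors |= {t for t, p in delta[(s, action)].items() if p > 0}
    return frozenset(t for t in successors if gamma[t] == obs)


def belief_successors(belief, action, delta, gamma, observations):
    """All non-empty belief updates of Y under one action, keyed by observation."""
    result = {}
    for o in observations:
        nxt = belief_update(belief, action, o, delta, gamma)
        if nxt:  # empty updates correspond to no edge in the collapsed graph
            result[o] = nxt
    return result


# Hypothetical three-state instance with two observations (not from the paper).
gamma = {"p": "o1", "q": "o1", "t": "o2"}
delta = {
    ("p", "a"): {"q": 0.5, "t": 0.5},
    ("q", "a"): {"q": 1.0},
    ("t", "a"): {"p": 1.0},
}
from_p = belief_successors(frozenset({"p"}), "a", delta, gamma, ["o1", "o2"])
from_qt = belief_successors(frozenset({"q", "t"}), "a", delta, gamma, ["o1", "o2"])
```

Here the belief $\{p\}$ splits into $\{q\}$ or $\{t\}$ depending on the observation, while from $\{q, t\}$ only observation `o1` is possible and yields the belief $\{p, q\}$.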

Intuitively we collapse memory elements of $\sigma$ whenever they agree on all the $\mathrm{RecFun}$, $\mathrm{AWFun}$, and $\mathrm{Act}$ functions. The collapsed strategy plays uniformly all the actions from the set given by $\mathrm{Act}$ in the collapsed state.

Collapsed strategy. We now construct the collapsed strategy $\sigma' = (\sigma'_u, \sigma'_n, M', m'_0)$ of $\sigma$ based on the collapsed graph $\mathrm{CoGr}(\sigma) = (V, E)$. We will refer to this construction by $\sigma' = \mathrm{CoSt}(\sigma)$.

• The memory set $M'$ consists of the vertices of the collapsed graph $\mathrm{CoGr}(\sigma) = (V, E)$, i.e., $M' = V = \{(Y, \mathrm{AWFun}_\sigma(m), \mathrm{RecFun}_\sigma(m), \mathrm{Act}_\sigma(m)) \mid Y \subseteq S \text{ and } m \in M\}$. Note that $|M'| \leq \mathrm{Mem}^*$.
• The initial memory is $m'_0 = (\{s_0\}, \mathrm{AWFun}_\sigma(m_0), \mathrm{RecFun}_\sigma(m_0), \mathrm{Act}_\sigma(m_0))$.
• The next action function given a memory $(Y, W, R, A) \in M'$ is the uniform distribution over the set of actions $\{a \mid \exists (Y', W', R', A') \in M' \text{ and } (Y, W, R, A) \xrightarrow{a} (Y', W', R', A') \in E\}$, where $E$ are the edges of the collapsed graph.
• The memory update function $\sigma'_u((Y, W, R, A), o, a)$ given a memory element $(Y, W, R, A) \in M'$, $a \in \mathcal{A}$, and $o \in \mathcal{O}$ is the uniform distribution over the set of states $\{(Y', W', R', A') \mid (Y, W, R, A) \xrightarrow{a} (Y', W', R', A') \in E \text{ and } Y' \subseteq \gamma^{-1}(o)\}$.

Random variable notation. For all $n \geq 0$ we write $X_n, Y_n, W_n, R_n, A_n, L_n$ for the random variables that correspond to the projection of the $n$-th state of the Markov chain $G_{\sigma'}$ on the $S$ component, the belief $\mathcal{P}(S)$ component, the $\mathrm{AWFun}_\sigma$ component, the $\mathrm{RecFun}_\sigma$ component, the $\mathrm{Act}_\sigma$ component, and the $n$-th action, respectively.

Run of the Markov chain $G_{\sigma'}$.
A run of the Markov chain $G_{\sigma'}$ is an infinite sequence $(X_0, Y_0, W_0, R_0, A_0) \xrightarrow{L_0} (X_1, Y_1, W_1, R_1, A_1) \xrightarrow{L_1} \cdots$ such that each finite prefix of the run is generated with positive probability on the Markov chain, i.e., for all $i \geq 0$, we have (i) $L_i \in \mathrm{Supp}(\sigma'_n(Y_i, W_i, R_i, A_i))$; (ii) $X_{i+1} \in \mathrm{Supp}(\delta(X_i, L_i))$; and (iii) $(Y_{i+1}, W_{i+1}, R_{i+1}, A_{i+1}) \in \mathrm{Supp}(\sigma'_u((Y_i, W_i, R_i, A_i), \gamma(X_{i+1}), L_i))$. In the following lemma we establish important properties of the Markov chain $G_{\sigma'}$ that are essential for our proof.

Lemma 2. Let $(X_0, Y_0, W_0, R_0, A_0) \xrightarrow{L_0} (X_1, Y_1, W_1, R_1, A_1) \xrightarrow{L_1} \cdots$ be a run of the Markov chain $G_{\sigma'}$, then the following assertions hold for all $i \geq 0$:
1. $X_{i+1} \in \mathrm{Supp}(\delta(X_i, L_i)) \cap Y_{i+1}$;
2. $(Y_i, W_i, R_i, A_i) \xrightarrow{L_i} (Y_{i+1}, W_{i+1}, R_{i+1}, A_{i+1})$ is an edge in the collapsed graph $\mathrm{CoGr}(\sigma)$;
3. if $W_i(X_i) = 1$, then $W_{i+1}(X_{i+1}) = 1$;
4. if $R_i(X_i) = 1$, then $R_{i+1}(X_{i+1}) = 1$; and
5. if $W_i(X_i) = 1$ and $R_i(X_i) = 1$, then $r(X_i, L_i) = 1$.

Proof. We present the proof of the fifth point; the other points are straightforward. For the fifth point, assume that $W_i(X_i) = 1$ and $R_i(X_i) = 1$. Then there exists a memory $m \in M$ such that (i) $\mathrm{AWFun}_\sigma(m) = W_i$, and (ii) $\mathrm{RecFun}_\sigma(m) = R_i$. Moreover, the state $(X_i, m)$ is a recurrent (since $R_i(X_i) = 1$) and almost-sure winning (since $W_i(X_i) = 1$) state in the Markov chain $G_\sigma$. As $L_i \in \mathrm{Act}_\sigma(m)$ it follows that $L_i \in \mathrm{Supp}(\sigma_n(m))$, i.e., the action $L_i$ is played with positive probability in state $X_i$ given memory $m$, and $(X_i, m)$ is in an almost-sure winning recurrent class. By Lemma 1 it follows that the reward $r(X_i, L_i)$ must be 1. The desired result follows.

We now introduce the final notion of a collapsed-recurrent state that is required to complete the proof.
A state $(X, Y, W, R, A)$ of the Markov chain $G_{\sigma'}$ is collapsed-recurrent if for all memory elements $m \in M$ that were merged to the memory element $(Y, W, R, A)$, the state $(X, m)$ of the Markov chain $G_\sigma$ is recurrent. It will turn out that every recurrent state of the Markov chain $G_{\sigma'}$ is also collapsed-recurrent.

Definition 3. A state $(X, Y, W, R, A)$ of the Markov chain $G_{\sigma'}$ is called collapsed-recurrent iff $R(X) = 1$.

Note that due to point 4 of Lemma 2 all the states reachable from a collapsed-recurrent state are also collapsed-recurrent. In the following lemma we show that the set of collapsed-recurrent states is reached with probability 1; the key fact we show is that from every state in $G_{\sigma'}$ a collapsed-recurrent state is reached with positive probability, and we then use Property 1 (a) of Markov chains to establish the lemma.

Lemma 3. With probability 1 a run of the Markov chain $G_{\sigma'}$ reaches a collapsed-recurrent state.

Lemma 4. The collapsed strategy $\sigma'$ is a finite-memory almost-sure winning strategy for the $\mathrm{LimAvg}_{=1}$ objective on the POMDP $G$ with the reward function $r$.

Proof. The initial state of the Markov chain $G_{\sigma'}$ is $(\{s_0\}, \mathrm{AWFun}_\sigma(m_0), \mathrm{RecFun}_\sigma(m_0), \mathrm{Act}_\sigma(m_0))$ and as the strategy $\sigma$ is an almost-sure winning strategy we have that $\mathrm{AWFun}_\sigma(m_0)(s_0) = 1$. It follows from the third point of Lemma 2 that every reachable state $(X, Y, W, R, A)$ in the Markov chain $G_{\sigma'}$ satisfies $W(X) = 1$. From the initial state a collapsed-recurrent state is reached with probability 1. It follows that all the recurrent states in the Markov chain $G_{\sigma'}$ are also collapsed-recurrent states. As in all reachable states $(X, Y, W, R, A)$ we have $W(X) = 1$, by the fifth point of Lemma 2 it follows that every action $L$ played in a collapsed-recurrent state $(X, Y, W, R, A)$ satisfies that the reward $r(X, L) = 1$.
As this is true for every reachable recurrent class, the fact that the collapsed strategy is an almost-sure winning strategy for the $\mathrm{LimAvg}_{=1}$ objective follows from Lemma 1.

Theorem 2 (Strategy complexity). The following assertions hold: (1) If there exists a finite-memory almost-sure winning strategy in the POMDP $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma, s_0)$ with reward function $r$ for the $\mathrm{LimAvg}_{=1}$ objective, then there exists a finite-memory almost-sure winning strategy with memory size at most $2^{3 \cdot |S| + |\mathcal{A}|}$. (2) Finite-memory almost-sure winning strategies for $\mathrm{LimAvg}_{=1}$ objectives in POMDPs in general require exponential memory, and belief-based strategies are not sufficient.

Proof. The first point follows from Lemma 4 and the fact that the size of the memory set of the collapsed strategy $\sigma'$ of any finite-memory strategy $\sigma$ (which is the size of the vertex set of the collapsed graph of $\sigma$) is bounded by $2^{3 \cdot |S| + |\mathcal{A}|}$.

3.2 Computational complexity

A naive double-exponential time algorithm would be to enumerate all finite-memory strategies with memory bounded by $2^{3 \cdot |S| + |\mathcal{A}|}$ (by Theorem 2). Our improved exponential-time algorithm consists of two steps: (i) first it constructs a special type of belief-observation POMDP $\overline{G}$ from a POMDP $G$ (and $\overline{G}$ is exponential in $G$); and we show that there exists a finite-memory almost-sure winning strategy for the objective $\mathrm{LimAvg}_{=1}$ in $G$ iff there exists a randomized memoryless almost-sure winning strategy in $\overline{G}$ for the objective $\mathrm{LimAvg}_{=1}$; and (ii) then we show how to determine whether there exists a randomized memoryless almost-sure winning strategy in $\overline{G}$ for the $\mathrm{LimAvg}_{=1}$ objective in polynomial time with respect to the size of $\overline{G}$. For a belief-observation POMDP the current belief is always the set of states with the current observation.

Definition 4.
A POMDP $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma, s_0)$ is a belief-observation POMDP iff for every finite prefix $w = (s_0, a_0, s_1, a_1, \ldots, a_{n-1}, s_n)$ the belief associated with the observation sequence $\rho = \gamma(w)$ is the set of states with the last observation $\gamma(s_n)$ of the observation sequence $\rho$, i.e., $B(\rho) = \gamma^{-1}(\gamma(s_n))$.

Construction of the belief-observation POMDP. Intuitively, the construction of $\overline{G}$ from $G$ will proceed as follows: if there exists an almost-sure winning finite-memory strategy, then there exists an almost-sure winning collapsed strategy with memory bounded by $2^{3 \cdot |S| + |\mathcal{A}|}$. This allows us to consider the memory elements $M = 2^{S} \times \{0,1\}^{|S|} \times \{0,1\}^{|S|} \times 2^{\mathcal{A}}$, and intuitively construct the product of the memory $M$ with the POMDP $G$. The POMDP $\overline{G}$ is constructed such that it allows all possible ways the collapsed strategy of a finite-memory almost-sure winning strategy could play. The reward function $\overline{r}$ in $\overline{G}$ is obtained from the reward function $r$ in $G$. In the POMDP $\overline{G}$ the belief is already included in the state space itself of the POMDP, and the belief represents exactly the set of states in which the POMDP can be with positive probability. Therefore, the POMDP $\overline{G}$ is a belief-observation POMDP. Since possible memory states of collapsed strategies are part of the state space, we only need to consider memoryless strategies in $\overline{G}$.

Lemma 5. The POMDP $\overline{G}$ is a belief-observation POMDP, such that there exists a finite-memory almost-sure winning strategy for the $\mathrm{LimAvg}_{=1}$ objective with the reward function $r$ in the POMDP $G$ iff there exists a memoryless almost-sure winning strategy for the $\mathrm{LimAvg}_{=1}$ objective with the reward function $\overline{r}$ in the POMDP $\overline{G}$.

Almost-sure winning observations.
Given a POMDP $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma)$ and an objective $\psi$, let $\mathrm{Almost}_M(\psi)$ denote the set of observations $o \in \mathcal{O}$ such that there exists a memoryless almost-sure winning strategy to ensure $\psi$ from every state $s \in \gamma^{-1}(o)$.

Almost-sure winning for $\mathrm{LimAvg}_{=1}$ objectives. Our goal is to compute the set $\mathrm{Almost}_M(\mathrm{LimAvg}_{=1})$ given the belief-observation POMDP $\overline{G}$ (obtained by our construction as the product of $G$ with $M$). Let $F \subseteq \overline{S}$ be the set of states of $\overline{G}$ where some actions can be played consistently with the collapsed strategy of any finite-memory almost-sure winning strategy. Let $\widetilde{G}$ denote the POMDP $\overline{G}$ restricted to $F$. We define a subset of states of the belief-observation POMDP $\widetilde{G}$ that intuitively correspond to winning collapsed-recurrent states (wcs), i.e., $\widetilde{S}_{wcs} = \{(s, (Y, W, R, A)) \mid W(s) = 1, R(s) = 1\}$. Then, we compute the set of observations $\widetilde{AW}$ that can ensure to reach $\widetilde{S}_{wcs}$ almost-surely in the POMDP $\widetilde{G}$. We show that the set of observations $\widetilde{AW}$ is equal to the set of observations $\mathrm{Almost}_M(\mathrm{LimAvg}_{=1})$ in the POMDP $\overline{G}$. Thus the computation reduces to the computation of almost-sure states for reachability objectives. Finally we show that the almost-sure reachability set can be computed in quadratic time for belief-observation POMDPs. The quadratic time algorithm is obtained as follows: we show that the set of almost-sure winning observations to reach a target set $T$ with probability 1 is the greatest fixpoint of a set $Y$ of observations such that playing uniformly all actions that ensure $Y$ is not left ensures that $T$ is reached almost-surely. This characterization gives a nested iterative algorithm that runs in quadratic time.

Lemma 6. $\widetilde{AW} = \mathrm{Almost}_M(\mathrm{LimAvg}_{=1})$; and $\widetilde{AW}$ can be computed in quadratic time in the size of $\overline{G}$.

The EXPTIME-completeness.
In this section we first showed that given a POMDP $G$ with a $\mathrm{LimAvg}_{=1}$ objective we can construct an exponential-size belief-observation POMDP $\overline{G}$, and the computation of the almost-sure winning set for $\mathrm{LimAvg}_{=1}$ objectives is reduced to the computation of the almost-sure winning set for reachability objectives, which we solve in quadratic time in $\overline{G}$. This gives us an exponential-time algorithm to decide (and construct if one exists) the existence of finite-memory almost-sure winning strategies in POMDPs with $\mathrm{LimAvg}_{=1}$ objectives. The EXPTIME-hardness for almost-sure winning can be obtained easily from the result of Reif for two-player partial-observation games with safety objectives [25].

[Figure 2: PFA P to a POMDP G.]

Theorem 3. The following assertions hold: (1) Given a POMDP $G$ with $|S|$ states, $|\mathcal{A}|$ actions, and a $\mathrm{LimAvg}_{=1}$ objective, the existence (and construction if one exists) of a finite-memory almost-sure winning strategy can be achieved in $2^{O(|S| + |\mathcal{A}|)}$ time. (2) The decision problem of whether, given a POMDP and a $\mathrm{LimAvg}_{=1}$ objective, there exists a finite-memory almost-sure winning strategy is EXPTIME-complete.

Remark 2. We considered an observation function that assigns an observation to every state. In general the observation function $\gamma : S \to 2^{\mathcal{O}} \setminus \emptyset$ may assign multiple observations to a single state. In that case we consider the set of observations as $\mathcal{O}' = 2^{\mathcal{O}} \setminus \emptyset$ and consider the mapping that assigns to every state an observation from $\mathcal{O}'$ and then apply our results.

4 Finite-memory strategies with Quantitative Constraint

We will show that the problem of deciding whether there exists a finite-memory (as well as an infinite-memory) almost-sure winning strategy for the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$ is undecidable. We present a reduction from the standard undecidable problem for probabilistic finite automata (PFA).
A PFA $P = (S, \mathcal{A}, \delta, F, s_0)$ is a special case of a POMDP $G = (S, \mathcal{A}, \delta, \mathcal{O}, \gamma, s_0)$ with a single observation $\mathcal{O} = \{o\}$ such that for all states $s \in S$ we have $\gamma(s) = o$. Moreover, the PFA proceeds for only finitely many steps, and has a set $F$ of desired final states. The strict emptiness problem asks for the existence of a strategy $w$ (a finite word over the alphabet $\mathcal{A}$) such that the measure of the runs ending in the desired final states $F$ is strictly greater than $\frac{1}{2}$; the strict emptiness problem for PFA is undecidable [21].

Reduction. Given a PFA $P = (S, \mathcal{A}, \delta, F, s_0)$ we construct a POMDP $G = (S', \mathcal{A}', \delta', \mathcal{O}, \gamma, s'_0)$ with a Boolean reward function $r$ such that there exists a word $w \in \mathcal{A}^*$ accepted with probability strictly greater than $\frac{1}{2}$ in $P$ iff there exists a finite-memory almost-sure winning strategy in $G$ for the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$. Intuitively, the construction of the POMDP $G$ is as follows: for every state $s \in S$ of $P$ we construct a pair of states $(s, 1)$ and $(s, 0)$ in $S'$ with the property that $(s, 0)$ can only be reached with a new action $\$$ (not in $\mathcal{A}$) played in state $(s, 1)$. The transition function $\delta'$ from the state $(s, 0)$ mimics the transition function $\delta$, i.e., $\delta'((s, 0), a)((s', 1)) = \delta(s, a)(s')$. The reward $r$ of $(s, 1)$ (resp. $(s, 0)$) is 1 (resp. 0), ensuring the average of the pair to be $\frac{1}{2}$. We add a new available action $\#$ that when played in a final state reaches a state $\mathit{good} \in S'$ with reward 1, and when played in a non-final state reaches a state $\mathit{bad} \in S'$ with reward 0; for the states $\mathit{good}$ and $\mathit{bad}$, given action $\#$ the next state is the initial state. An illustration of the construction on an example is depicted in Figure 2. Whenever an action is played in a state where it is not available, the POMDP reaches a losing absorbing state, i.e., an absorbing state with reward 0; for brevity we omit transitions to the losing absorbing state.
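The quantity that the strict emptiness problem asks about, the probability that a finite word drives the PFA from the initial state into the set of final states, can be computed by pushing a distribution over states through the word one letter at a time. The following is only an illustrative sketch: the function name `acceptance_probability`, the dictionary encoding of $\delta$, and the two-state example automaton are assumptions made here and do not come from the paper. Evaluating one word is easy; the undecidability concerns the search over all words.

```python
from fractions import Fraction


def acceptance_probability(delta, s0, final_states, word):
    """Probability that the PFA, started in s0, is in a final state after reading word.

    delta maps (state, letter) to a dict {next_state: probability}.
    This encoding is an assumption of the sketch, not the paper's notation.
    """
    dist = {s0: Fraction(1)}  # distribution over states before reading any letter
    for letter in word:
        nxt = {}
        for state, p in dist.items():
            for succ, q in delta[(state, letter)].items():
                nxt[succ] = nxt.get(succ, Fraction(0)) + p * q
        dist = nxt
    return sum((p for s, p in dist.items() if s in final_states), Fraction(0))


# Hypothetical two-state PFA over the alphabet {a, b}; "s" is the only final state.
delta = {
    ("s0", "a"): {"s0": Fraction(1, 2), "s": Fraction(1, 2)},
    ("s0", "b"): {"s0": Fraction(1)},
    ("s", "a"): {"s": Fraction(1)},
    ("s", "b"): {"s0": Fraction(1)},
}

print(acceptance_probability(delta, "s0", {"s"}, "aa"))  # 3/4
```

In this hypothetical automaton the word `aa` is accepted with probability 3/4, which is strictly greater than 1/2, so it would be a witness for strict emptiness. Exact rationals are used so that the strict comparison with 1/2 is not affected by floating-point rounding.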
We present key proof ideas to establish the correctness:

(Strict emptiness implies almost-sure $\mathrm{LimAvg}_{>\frac{1}{2}}$). Let $w \in \mathcal{A}^*$ be a word accepted in $P$ with probability $\mu > \frac{1}{2}$ and let the length of the word be $|w| = n$. We construct a pure finite-memory almost-sure winning strategy for the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$ in the POMDP $G$ as follows. We denote by $w[i]$ the $i$-th action in the word $w$. The finite-memory strategy we construct is specified as an ultimately periodic word $(\$\, w[1]\, \$\, w[2] \cdots \$\, w[n]\, \#\, \#)^{\omega}$. Observe that by the construction of the POMDP $G$, the sequence of rewards (that appear on the transitions) is $(10)^n$ followed by (i) 1 with probability $\mu$ (when $F$ is reached), and (ii) 0 otherwise; and the whole sequence is repeated ad infinitum. Then using the Strong Law of Large Numbers (SLLN) [6, Theorem 7.1, page 56] we show that with probability 1 the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$ is satisfied.

(Almost-sure $\mathrm{LimAvg}_{>\frac{1}{2}}$ implies strict emptiness). Conversely, if there is a pure finite-memory strategy $\sigma$ to ensure the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$ in the POMDP, then the strategy $\sigma$ can be viewed as an ultimately periodic infinite word of the form $u \cdot v^{\omega}$, where $u, v$ are finite words over $\mathcal{A}'$. Note that $v$ must contain the subsequence $\#\#$, as otherwise the LimAvg payoff would be only $\frac{1}{2}$. Similarly, before every letter $a \in \mathcal{A}$ in the words $u, v$, the strategy must necessarily play the $\$$ action, as otherwise the losing absorbing state is reached. Again using SLLN we show that from the word $v$ we can extract a word $w$ that is accepted in the PFA with probability strictly greater than $\frac{1}{2}$. Finally, we show that if there is a randomized (possibly infinite-memory) strategy to ensure the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$ in the POMDP, then there is a pure finite-memory strategy as well (the technical proof uses Fatou's lemma [6]).

[Figure 3: PFA P to a POMDP G.]

Theorem 4.
The problem whether there exists a finite-memory (or infinite-memory) almost-sure winning strategy in a POMDP for the objective $\mathrm{LimAvg}_{>\frac{1}{2}}$ is undecidable.

5 Infinite-memory strategies with Qualitative Constraint

In this section we show that the problem of deciding the existence of infinite-memory almost-sure winning strategies in POMDPs with $\mathrm{LimAvg}_{=1}$ objectives is undecidable. We prove this fact by a reduction from the value 1 problem in PFA, which is undecidable [8]. The value 1 problem, given a PFA $P$, asks whether for every $\epsilon > 0$ there exists a finite word $w$ such that the word is accepted in $P$ with probability at least $1 - \epsilon$ (i.e., the limit of the acceptance probabilities is 1).

Reduction. Given a PFA $P = (S, \mathcal{A}, \delta, F, s_0)$, we construct a POMDP $G' = (S', \mathcal{A}', \delta', \mathcal{O}', \gamma', s'_0)$ with a reward function $r'$, such that $P$ satisfies the value 1 problem iff there exists an infinite-memory almost-sure winning strategy in $G'$ for the objective $\mathrm{LimAvg}_{=1}$. Intuitively, the construction adds two additional states $\mathit{good}$ and $\mathit{bad}$. We add an edge from every state of the PFA under a new action $\$$; this edge leads to the state $\mathit{good}$ when played in a final state, and to the state $\mathit{bad}$ otherwise. In the states $\mathit{good}$ and $\mathit{bad}$ we add self-loops under a new action $\#$. The action $\$$ in the states $\mathit{good}$ or $\mathit{bad}$ leads back to the initial state. An example of the construction is illustrated in Figure 3. All the states belong to a single observation, and we will use a Boolean reward function on states. The reward for all states except the newly added state $\mathit{good}$ is 0, and the reward for the state $\mathit{good}$ is 1. The key proof ideas for correctness are as follows:

(Value 1 implies almost-sure $\mathrm{LimAvg}_{=1}$). If $P$ satisfies the value 1 problem, then there exists a sequence of finite words $(w_i)_{i \geq 1}$, such that each $w_i$ is accepted in $P$ with probability at least $1 - \frac{1}{2^{i+1}}$.
We construct an infinite word $w_1 \cdot \$ \cdot \#^{n_1} \cdot w_2 \cdot \$ \cdot \#^{n_2} \cdots$, where each $n_i \in \mathbb{N}$ is a natural number that satisfies the following condition: let $k_i = |w_{i+1} \cdot \$| + \sum_{j=1}^{i} (|w_j \cdot \$| + n_j)$ be the length of the word sequence before $\#^{n_{i+1}}$, then we must have $\frac{n_i}{k_i} \geq 1 - \frac{1}{i}$. The construction ensures that if the state $\mathit{bad}$ appears only finitely often with probability 1, then $\mathrm{LimAvg}_{=1}$ is ensured with probability 1. The argument to show that $\mathit{bad}$ is visited infinitely often with probability 0 is as follows. We first upper bound the probability $u_{k+1}$ to visit the state $\mathit{bad}$ at least $k+1$ times, given $k$ visits to state $\mathit{bad}$. The probability $u_{k+1}$ is at most $\frac{1}{2^{k+1}} (1 + \frac{1}{2} + \frac{1}{4} + \cdots)$. The above bound for $u_{k+1}$ is obtained as follows: following the visit to $\mathit{bad}$ for $k$ times, the words $w_j$, for $j \geq k$, are played; hence the probability to reach $\mathit{bad}$ decreases by $\frac{1}{2}$ every time the next word is played, and after $k$ visits the probability is always smaller than $\frac{1}{2^{k+1}}$. Hence the probability to visit $\mathit{bad}$ at least $k+1$ times, given $k$ visits, is at most the sum above, which is $\frac{1}{2^k}$. Let $E_k$ denote the event that $\mathit{bad}$ is visited at least $k+1$ times given $k$ visits to $\mathit{bad}$. Then we have $\sum_{k \geq 0} \mathbb{P}(E_k) \leq \sum_{k \geq 1} \frac{1}{2^k} < \infty$. By the Borel-Cantelli lemma [6, Theorem 6.1, page 47] we have that the probability that $\mathit{bad}$ is visited infinitely often is 0.

(Almost-sure $\mathrm{LimAvg}_{=1}$ implies value 1). We prove the converse. Consider that the PFA $P$ does not satisfy the value 1 problem, i.e., there exists a constant $c > 0$ such that for all $w \in \mathcal{A}^*$ we have that the probability that $w$ is accepted in $P$ is at most $1 - c < 1$. We will show that there is no almost-sure winning strategy.
Assume towards contradiction that there exists an infinite-memory almost-sure winning strategy $\sigma$ in the POMDP $G'$; the infinite word corresponding to $\sigma$ must play infinitely many sequences of $\#$'s to ensure $\mathrm{LimAvg}_{=1}$. Let $X_i$ be the random variable for the rewards for the $i$-th sequence of $\#$'s. Then we have that $X_i = 1$ with probability at most $1 - c$ and 0 otherwise. The expected LimAvg payoff is then at most $\mathbb{E}\left(\liminf_{n \to \infty} \frac{1}{n} \sum_{i=0}^{n} X_i\right)$. Since the $X_i$'s are non-negative measurable functions, by Fatou's lemma [6, Theorem 3.5, page 16]

$$\mathbb{E}\left(\liminf_{n \to \infty} \frac{1}{n} \sum_{i=0}^{n} X_i\right) \leq \liminf_{n \to \infty} \mathbb{E}\left(\frac{1}{n} \sum_{i=0}^{n} X_i\right) \leq 1 - c.$$

It follows that $\mathbb{E}^{\sigma}(\mathrm{LimAvg}) \leq 1 - c$. Note that if the strategy $\sigma$ was almost-sure winning for the objective $\mathrm{LimAvg}_{=1}$ (i.e., $\mathbb{P}^{\sigma}(\mathrm{LimAvg}_{=1}) = 1$), then the expectation of the LimAvg payoff would also be 1 (i.e., $\mathbb{E}^{\sigma}(\mathrm{LimAvg}) = 1$). Therefore we have reached a contradiction to the fact that the strategy $\sigma$ is almost-sure winning for the objective $\mathrm{LimAvg}_{=1}$.

Theorem 5. The problem whether there exists an infinite-memory almost-sure winning strategy in a POMDP with the objective $\mathrm{LimAvg}_{=1}$ is undecidable.

References

[1] S. B. Andersson and D. Hristu. Symbolic feedback control for navigation. IEEE Transactions on Automatic Control, 51(6):926–937, 2006.
[2] A. R. Cassandra, L. P. Kaelbling, and M. L. Littman. Acting optimally in partially observable stochastic domains. In Proceedings of the National Conference on Artificial Intelligence, pages 1023–1023. John Wiley & Sons Ltd, 1995.
[3] A. Condon and R. J. Lipton. On the complexity of space bounded interactive proofs. In FOCS, pages 462–467, 1989.
[4] K. Culik and J. Kari. Digital images and formal languages. Handbook of Formal Languages, pages 599–616, 1997.
[5] R. Durbin, S. Eddy, A. Krogh, and G. Mitchison. Biological sequence analysis: probabilistic models of proteins and nucleic acids. Cambridge Univ. Press, 1998.
[6] R. Durrett. Probability: Theory and Examples (Second Edition). Duxbury Press, 1996.
[7] J. Filar and K. Vrieze. Competitive Markov Decision Processes. Springer-Verlag, 1997.
[8] H. Gimbert and Y. Oualhadj. Probabilistic automata on finite words: Decidable and undecidable problems. In Proc. of ICALP, LNCS 6199, pages 527–538. Springer, 2010.
[9] E. A. Hansen and R. Zhou. Synthesis of hierarchical finite-state controllers for POMDPs. In ICAPS, pages 113–122, 2003.
[10] H. Howard. Dynamic Programming and Markov Processes. MIT Press, 1960.
[11] L. P. Kaelbling, M. L. Littman, and A. R. Cassandra. Planning and acting in partially observable stochastic domains. Artificial Intelligence, 101(1):99–134, 1998.
[12] L. P. Kaelbling, M. L. Littman, and A. W. Moore. Reinforcement learning: A survey. J. of Artif. Intell. Research, 4:237–285, 1996.
[13] H. Kress-Gazit, G. E. Fainekos, and G. J. Pappas. Temporal-logic-based reactive mission and motion planning. IEEE Transactions on Robotics, 25(6):1370–1381, 2009.
[14] M. L. Littman, J. Goldsmith, and M. Mundhenk. The computational complexity of probabilistic planning. J. Artif. Intell. Res. (JAIR), 9:1–36, 1998.
[15] M. L. Littman. Algorithms for Sequential Decision Making. PhD thesis, Brown University, 1996.
[16] O. Madani, S. Hanks, and A. Condon. On the undecidability of probabilistic planning and related stochastic optimization problems. Artif. Intell., 147(1-2):5–34, 2003.
[17] N. Meuleau, L. Peshkin, K-E. Kim, and L. P. Kaelbling. Learning finite-state controllers for partially observable environments. In Proceedings of the Fifteenth Conference on Uncertainty in Artificial Intelligence, UAI'99, pages 427–436, San Francisco, CA, USA, 1999. Morgan Kaufmann Publishers Inc.
[18] N. Meuleau, K.-E. Kim, L. P. Kaelbling, and A. R. Cassandra. Solving POMDPs by searching the space of finite policies. In UAI, pages 417–426, 1999.
[19] M. Mohri. Finite-state transducers in language and speech processing. Computational Linguistics, 23(2):269–311, 1997.
[20] C. H. Papadimitriou and J. N. Tsitsiklis. The complexity of Markov decision processes. Mathematics of Operations Research, 12:441–450, 1987.
[21] A. Paz. Introduction to probabilistic automata (Computer science and applied mathematics). Academic Press, 1971.
[22] A. Pogosyants, R. Segala, and N. Lynch. Verification of the randomized consensus algorithm of Aspnes and Herlihy: a case study. Distributed Computing, 13(3):155–186, 2000.
[23] M. L. Puterman. Markov Decision Processes. John Wiley and Sons, 1994.
[24] M. O. Rabin. Probabilistic automata. Information and Control, 6:230–245, 1963.
[25] J. H. Reif. The complexity of two-player games of incomplete information. Journal of Computer and System Sciences, 29(2):274–301, 1984.
[26] M. I. A. Stoelinga. Fun with FireWire: Experiments with verifying the IEEE1394 root contention protocol. In Formal Aspects of Computing, 2002.
[27] J. D. Williams and S. Young. Partially observable Markov decision processes for spoken dialog systems. Computer Speech & Language, 21(2):393–422, 2007.
