Using Learned Predictions as Feedback to Improve Control and Communication with an Artificial Limb: Preliminary Findings

Many people suffer from the loss of a limb. Learning to get by without an arm or hand can be very challenging, and existing prostheses do not yet fulfil the needs of individuals with amputations. One promising solution is to provide greater communication between a prosthesis and its user. Towards this end, we present a simple machine learning interface to supplement the control of a robotic limb with feedback to the user about what the limb will be experiencing in the near future. A real-time prediction learner was implemented to predict impact-related electrical load experienced by a robot limb; the learning system’s predictions were then communicated to the device’s user to aid in their interactions with a workspace. We tested this system with five able-bodied subjects. Each subject manipulated the robot arm while receiving different forms of vibrotactile feedback regarding the arm’s contact with its workspace. Our trials showed that communicable predictions could be learned quickly during human control of the robot arm. Using these predictions as a basis for feedback led to a statistically significant improvement in task performance when compared to purely reactive feedback from the device. Our study therefore contributes initial evidence that prediction learning and machine intelligence can benefit not just control, but also feedback from an artificial limb. We expect that a greater level of acceptance and ownership can be achieved if the prosthesis itself takes an active role in transmitting learned knowledge about its state and its situation of use.

💡 Research Summary

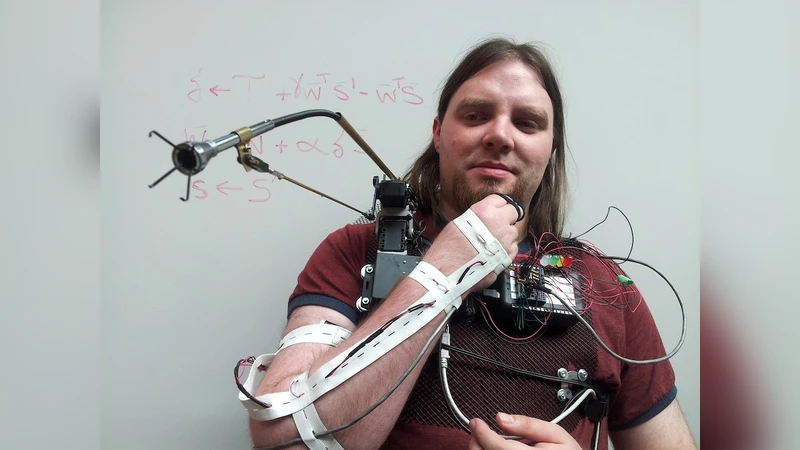

The paper addresses two persistent challenges in prosthetic limb technology—insufficient sensory feedback and limited control channels—by introducing a predictive tactile feedback system that augments user control with machine‑learned anticipations of imminent collisions. A custom four‑degree‑of‑freedom wearable robotic arm (the Extra Robotic Manipulator, XRM) equipped with Dynamixel servos served as a testbed. Users operated the arm’s shoulder joint via a two‑axis thumb joystick while wearing a sleeve containing four vibrotactile actuators (one over each major joint).

During a “training” phase, participants repeatedly moved the arm back and forth between the left and right walls of a confined 27 cm square workspace, pressing the arm against the walls to generate measurable servo load spikes. These load signals were fed into an online temporal‑difference (TD) learner that discretized the shoulder’s 300° range into 32 angular bins, each further split into three motion states (clockwise, counter‑clockwise, stationary), yielding a 96‑dimensional binary feature vector. The learner updated a weight vector w at 20 Hz using the rule wₜ₊₁ = wₜ + α
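The feature construction described above can be sketched as follows. Because the update rule in this summary is cut off after α, the sketch substitutes a standard TD(0) update with linear function approximation purely for illustration; the paper's learner may differ (for example, it may use eligibility traces). The bit layout, the motion-state encoding, and the values of `alpha` and `gamma` are assumptions, not taken from the source.

```python
# Minimal sketch, NOT the paper's implementation.
# From the text: 32 angular bins over a 300 degree range, 3 motion states,
# a 96-dimensional binary feature vector, updates at 20 Hz.
# Assumed: the index layout, the motion encoding, alpha, gamma, and the
# use of plain TD(0) in place of the truncated update rule.

N_BINS = 32
N_MOTION = 3
RANGE_DEG = 300.0
N_FEATURES = N_BINS * N_MOTION  # 96

# Hypothetical encoding of the three motion states.
CW, CCW, STILL = 0, 1, 2


def active_feature(angle_deg, motion):
    """Return the index of the single active bit for (angle, motion)."""
    clamped = min(max(angle_deg, 0.0), RANGE_DEG)
    # The top edge of the range falls into the last bin.
    bin_idx = min(int(clamped / RANGE_DEG * N_BINS), N_BINS - 1)
    return bin_idx * N_MOTION + motion


def predict(w, idx):
    """Linear prediction; with one active bit it is just that weight."""
    return w[idx]


def td0_update(w, idx, load, next_idx, alpha=0.1, gamma=0.9):
    """One TD(0) step: move w[idx] toward load + gamma * next prediction."""
    delta = load + gamma * w[next_idx] - w[idx]
    w[idx] += alpha * delta
    return delta


w = [0.0] * N_FEATURES
```

At 20 Hz, `td0_update` would be called once every 50 ms with the current servo load as the signal being predicted. With a single active bit per time step the dot product reduces to a table lookup, which keeps each update cheap enough for real-time use.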
