Estimating Maximally Probable Constrained Relations by Mathematical Programming

Estimating a constrained relation is a fundamental problem in machine learning. Special cases are classification (the problem of estimating a map from a set of to-be-classified elements to a set of labels), clustering (the problem of estimating an equivalence relation on a set) and ranking (the problem of estimating a linear order on a set). We contribute a family of probability measures on the set of all relations between two finite, non-empty sets, which offers a joint abstraction of multi-label classification, correlation clustering and ranking by linear ordering. Estimating (learning) a maximally probable measure, given (a training set of) related and unrelated pairs, is a convex optimization problem. Estimating (inferring) a maximally probable relation, given a measure, is a 0-1 linear program. It is solved in linear time for maps. It is NP-hard for equivalence relations and linear orders. Practical solutions for all three cases are shown in experiments with real data. Finally, estimating a maximally probable measure and relation jointly is posed as a mixed-integer nonlinear program. This formulation suggests a mathematical programming approach to semi-supervised learning.

💡 Research Summary


The paper proposes a unified probabilistic framework for estimating binary relations between two finite, non‑empty sets A and B. Each possible pair (ab) is associated with a binary feature vector x₍ₐb₎ and a parameter vector θ. Two families of conditional models are considered: a logistic model, where p(Y₍ₐb₎=1|X₍ₐb₎,θ)=σ(⟨θ,x₍ₐb₎⟩), and a Bernoulli model that assumes each pair belongs to one of a finite number of classes. A random variable Z encodes the set of feasible relations (e.g., maps, equivalence relations, linear orders) by assigning probability 1 to feasible relations and 0 to infeasible ones.

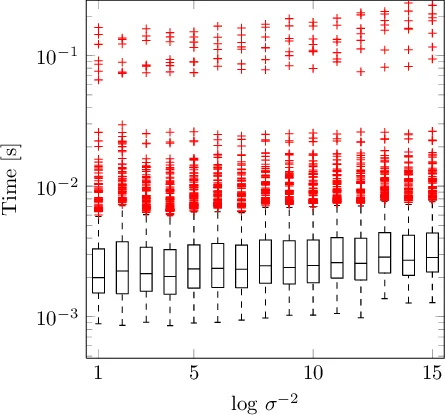

Learning (parameter estimation) and inference (relation estimation) are derived from the joint posterior p(Y,θ|X,Z). When the relation ŷ is fixed (training data), maximizing the posterior reduces to a convex optimization problem identical to regularized logistic regression (or a closed‑form solution for the Bernoulli case). This step is computationally tractable and can be solved with standard solvers.
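The learning step can be illustrated with a minimal sketch. It assumes an L2 regularizer and plain gradient descent, neither of which is confirmed by the summary, and the names `fit_logistic`, `reg`, `step` and `iters` are hypothetical. Each training pair (a, b) contributes a feature vector x_ab and a label y_ab in {0, 1}.

```python
import math


def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def fit_logistic(features, labels, reg=0.1, step=0.5, iters=500):
    """Minimise mean negative log-likelihood + (reg / 2) * ||theta||^2.

    features: list of feature vectors x_ab, one per training pair
    labels:   list of 0/1 values y_ab (1 = related, 0 = unrelated)
    The L2 penalty and the gradient-descent solver are assumptions.
    """
    n, k = len(features), len(features[0])
    theta = [0.0] * k
    for _ in range(iters):
        # Gradient of the regulariser.
        grad = [reg * t for t in theta]
        # Gradient of the mean negative log-likelihood.
        for x, y in zip(features, labels):
            p = sigmoid(sum(t * xi for t, xi in zip(theta, x)))
            for j in range(k):
                grad[j] += (p - y) * x[j] / n
        theta = [t - step * g for t, g in zip(theta, grad)]
    return theta


# Toy training set: first feature is a constant bias term.
features = [[1.0, 1.0], [1.0, 0.9], [1.0, 0.1], [1.0, 0.0]]
labels = [1, 1, 0, 0]
theta = fit_logistic(features, labels)
probs = [sigmoid(sum(t * xi for t, xi in zip(theta, x))) for x in features]
```

Because the objective is convex, any standard solver would reach the same optimum; gradient descent is used here only to keep the sketch dependency-free.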

When the parameters θ are fixed, the problem of finding the most probable relation becomes a 0‑1 linear program:

min_{y∈z} –∑_{ab}⟨θ,x₍ₐb₎⟩ y₍ₐb₎ (logistic) or an analogous weighted sum for the Bernoulli model.

The complexity of this program depends entirely on the feasible set z.
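A short derivation sketches why the logistic objective is linear in y. It assumes that the pairs are conditionally independent given their features, and it uses the shorthand s_ab := ⟨θ, x_ab⟩, which is introduced here for illustration only.

```latex
\begin{align*}
-\log p(y \mid x, \theta)
  &= -\sum_{ab} \Big[ y_{ab} \log \sigma(s_{ab})
       + (1 - y_{ab}) \log\big(1 - \sigma(s_{ab})\big) \Big] \\
  &= -\sum_{ab} y_{ab} \log \frac{\sigma(s_{ab})}{1 - \sigma(s_{ab})}
       \;-\; \sum_{ab} \log\big(1 - \sigma(s_{ab})\big) \\
  &= -\sum_{ab} s_{ab}\, y_{ab} \;+\; \text{const}.
\end{align*}
```

The last step uses the identity log(σ(s)/(1−σ(s))) = s; the remaining sum does not depend on y, so it can be dropped when minimizing over the feasible set z.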

Three important specializations are examined:

- Maps (Classification): The feasibility constraints enforce that each element a∈A is assigned exactly one label b∈B. This yields a simple assignment problem solvable in linear time by selecting, for each a, the label with the highest score ⟨θ,x₍ₐb₎⟩. The authors also show how one‑vs‑rest multi‑label classification fits into this framework.

- Equivalence Relations (Clustering): Feasibility requires reflexivity, symmetry, and transitivity, which translate into linear constraints (y₍aa₎=1, y₍ab₎=y₍ba₎, y₍ab₎+y₍bc₎−1 ≤ y₍ac₎). The resulting optimization is exactly the Set Partition Problem, known in machine learning as correlation clustering, and is NP‑hard. The paper discusses exact branch‑and‑cut methods based on the Set Partition Polytope and fast heuristics such as the Kernighan‑Lin algorithm (O(|A|² log |A|)).

- Linear Orders (Ranking): Feasibility requires transitivity, as in the clustering case, but symmetry is replaced by antisymmetry (y₍ab₎+y₍ba₎ ≤ 1) and totality (y₍ab₎+y₍ba₎ ≥ 1) for distinct a and b, so exactly one of the two directions holds for every pair. This yields the Linear Ordering Problem, another classic NP‑hard combinatorial optimization task. State‑of‑the‑art branch‑and‑cut solvers exploiting the Linear Ordering Polytope are referenced, together with common heuristics.
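The three specializations can be illustrated with a small sketch. All function names and toy scores below are hypothetical, and the brute-force enumeration for linear orders is a stand-in for illustration, not the branch‑and‑cut approach referenced in the paper. Scores stand for the inner products ⟨θ, x_ab⟩.

```python
from itertools import permutations


def infer_map(scores):
    """Maps: for each a pick the label b with the highest score.

    scores[a][b] holds <theta, x_ab>; one pass over all pairs suffices.
    """
    return {a: max(row, key=row.get) for a, row in scores.items()}


def is_equivalence(y, elems):
    """Check the linear constraints for equivalence relations on 0/1 values y[a, b]."""
    reflexive = all(y[a, a] == 1 for a in elems)
    symmetric = all(y[a, b] == y[b, a] for a in elems for b in elems)
    transitive = all(
        y[a, b] + y[b, c] - 1 <= y[a, c]
        for a in elems for b in elems for c in elems
    )
    return reflexive and symmetric and transitive


def best_linear_order(scores, elems):
    """Linear orders: enumerate every permutation (exponential, tiny sets only).

    y_ab = 1 exactly when a precedes b, so the value of an order is the
    sum of scores[a, b] over all pairs with a before b.
    """
    def value(order):
        return sum(
            scores[a, b] for i, a in enumerate(order) for b in order[i + 1:]
        )
    return max(permutations(elems), key=value)


# Maps: two elements, two labels.
map_scores = {"a1": {"x": 0.2, "y": 1.5}, "a2": {"x": -0.3, "y": -1.0}}
assignment = infer_map(map_scores)

# Equivalence relations: clusters {0, 1} and {2}.
elems = [0, 1, 2]
cluster = {0: "c1", 1: "c1", 2: "c2"}
y_good = {(a, b): int(cluster[a] == cluster[b]) for a in elems for b in elems}
y_bad = dict(y_good)
y_bad[1, 2] = y_bad[2, 1] = 1  # 0~1 and 1~2 but not 0~2: violates transitivity

# Linear orders: antisymmetric toy scores on three items.
order_scores = {
    ("p", "q"): 1.0, ("q", "p"): -1.0,
    ("q", "r"): 2.0, ("r", "q"): -2.0,
    ("p", "r"): 0.5, ("r", "p"): -0.5,
}
best = best_linear_order(order_scores, ["p", "q", "r"])
```

The contrast in cost is the point of the sketch: the map case needs only a per-element maximum, whereas the enumeration for linear orders grows factorially with the number of items, which is why exact solvers and heuristics are needed in practice.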

The authors further formulate a joint estimation of θ and y as a mixed‑integer nonlinear program (MINLP):

min_{y∈z, θ∈ℝ^K}
