Learning From Ordered Sets and Applications in Collaborative Ranking

Ranking over sets arises when users choose between groups of items. For example, a group may consist of the movies a user rates $5$ stars, or a customized tour package. It turns out that, to model this data type properly, we need to investigate the general combinatorial problem of partitioning a set and ordering the subsets. Here we construct a probabilistic log-linear model over a set of ordered subsets. Inference in this combinatorial space is highly challenging: the size of the space grows as $(N!/2)(\ln 2)^{-(N+1)} \approx (N!/2)\,1.4427^{N+1}$ as $N$ approaches infinity. We propose a split-and-merge Metropolis-Hastings procedure that can explore the state space efficiently. For discovering hidden aspects in the data, we enrich the model with latent binary variables so that the posteriors can be efficiently evaluated. Finally, we evaluate the proposed model on large-scale collaborative filtering tasks and demonstrate that it is competitive against state-of-the-art methods.

💡 Research Summary

The paper addresses a previously under‑explored ranking problem: users often rank not individual items but groups of items that share the same rating (e.g., all movies rated 5 stars). To model such data, the authors introduce the Ordered Set Model (OSM), a probabilistic log‑linear model defined over all possible partitions of a set of N items into T non‑empty subsets together with an ordering of those subsets. Summed over all T, the number of possible configurations is the Fubini (ordered Bell) number F(N), which grows as F(N)≈(N!/2)·(ln 2)^{−(N+1)}≈(N!/2)·1.4427^{N+1}, exceeding the N! permutations of a simple ranking by an exponential factor.
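As a quick check on this growth rate, the sketch below counts ordered partitions with the standard recurrence F(n) = Σ_{k=1..n} C(n,k)·F(n−k), which picks the k items of the top‑ranked subset and then orders the remaining items, and compares the exact count with the leading‑order approximation n!/(2(ln 2)^{n+1}). The function names are illustrative and not taken from the paper.

```python
import math


def fubini(n: int) -> int:
    """Number of ordered set partitions of n items (Fubini / ordered Bell number).

    Recurrence: choose the k items of the top-ranked subset, then order the rest:
    F(n) = sum_{k=1..n} C(n, k) * F(n - k), with F(0) = 1.
    """
    f = [1] * (n + 1)
    for m in range(1, n + 1):
        f[m] = sum(math.comb(m, k) * f[m - k] for k in range(1, m + 1))
    return f[n]


def fubini_asymptotic(n: int) -> float:
    """Leading-order approximation F(n) ~ n! / (2 * (ln 2)**(n + 1))."""
    return math.factorial(n) / (2.0 * math.log(2) ** (n + 1))


for n in (3, 5, 10, 15):
    exact = fubini(n)
    # exact count, ratio to N! permutations, ratio to the asymptotic formula
    print(n, exact, exact / math.factorial(n), exact / fubini_asymptotic(n))
```

The ratio to the asymptotic formula is already very close to 1 for small n, while the ratio to N! keeps growing, which is the exponential factor mentioned above.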

OSM assigns a positive potential Φ to each subset, capturing intra‑subset compatibility, and a potential Ψ to each ordered pair of subsets, capturing inter‑subset preference. Both potentials are factorised into pairwise terms and parameterised in log‑linear form using feature functions and weights (α, β). The resulting distribution is P(X)=Ω(X)/Z, where Ω(X) is the product of all Φ and Ψ terms for configuration X and Z=Σ_X Ω(X) is the normalising constant, summed over all F(N) ordered partitions.
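To make the factorisation concrete, the following sketch scores an ordered partition under a simplified, hypothetical parameterisation: one weight alpha[(i, j)] per item pair for sharing a subset (the log Φ terms) and one weight beta[(i, j)] per ordered pair for i being placed in a higher‑ranked subset than j (the log Ψ terms). The paper uses feature functions with shared weights instead, so treat the per‑pair weights as a stand‑in. For tiny N, Z can be computed by brute‑force enumeration, which is also handy for checking samplers.

```python
import itertools
import math
import random
from typing import FrozenSet, Iterator, Sequence, Tuple

OrderedSets = Tuple[FrozenSet[int], ...]


def ordered_partitions(items: Sequence[int]) -> Iterator[OrderedSets]:
    """Enumerate every ordered partition, top-ranked subset first."""
    items = list(items)
    if not items:
        yield ()
        return
    for r in range(1, len(items) + 1):
        for first in itertools.combinations(items, r):
            rest = [i for i in items if i not in first]
            for tail in ordered_partitions(rest):
                yield (frozenset(first),) + tail


def log_omega(x: OrderedSets, alpha, beta) -> float:
    """log Omega(X) = sum of log Phi (within-subset) and log Psi (between-subset) terms.

    Hypothetical pairwise parameterisation:
      alpha[(i, j)] with i < j: compatibility of i and j sharing a subset;
      beta[(i, j)]: preference for i being in a higher-ranked subset than j.
    """
    total = 0.0
    for t, s in enumerate(x):
        for i, j in itertools.combinations(sorted(s), 2):
            total += alpha[(i, j)]
        for later in x[t + 1:]:
            for i in s:
                for j in later:
                    total += beta[(i, j)]
    return total


def log_partition(items, alpha, beta) -> float:
    """Exact log Z by brute-force enumeration (feasible only for very small N)."""
    logs = [log_omega(x, alpha, beta) for x in ordered_partitions(items)]
    m = max(logs)
    return m + math.log(sum(math.exp(v - m) for v in logs))


rng = random.Random(0)
items = [0, 1, 2, 3]
alpha = {(i, j): rng.gauss(0, 1) for i, j in itertools.combinations(items, 2)}
beta = {(i, j): rng.gauss(0, 1) for i in items for j in items if i != j}
log_z = log_partition(items, alpha, beta)
x = (frozenset({2}), frozenset({0, 3}), frozenset({1}))
print("P(X) =", math.exp(log_omega(x, alpha, beta) - log_z))
```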

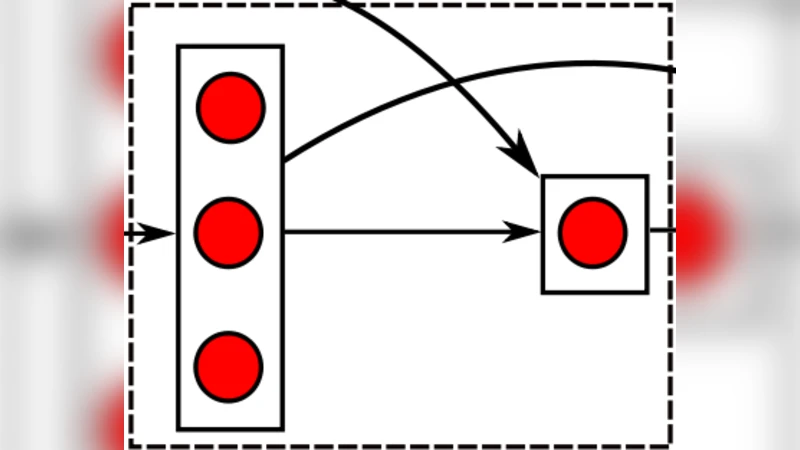

Because exact inference is infeasible, the authors devise a Metropolis‑Hastings sampler that alternates between two local moves: (i) split – randomly select a non‑singleton subset and split it into two consecutive subsets; (ii) merge – randomly select two consecutive subsets and merge them. The proposal probabilities are derived analytically from subset sizes and the total number of subsets, allowing the acceptance ratio to be computed efficiently. Repeated application of split and merge makes the chain ergodic over the entire combinatorial space: any ordered partition can be merged down to a single subset and then rebuilt into any other ordered partition by successive splits.
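A minimal sketch of one split‑and‑merge Metropolis‑Hastings step follows, assuming uniform choices at every stage: each move type is picked with probability 1/2, a split picks a non‑singleton subset uniformly and then one of its 2^n − 2 ordered two‑way splits uniformly, and a merge picks one of the T − 1 adjacent pairs uniformly. These proposal probabilities are an assumption and may differ from the paper's exact scheme; `propose` and `mh_step` are hypothetical names.

```python
import math
import random
from collections import Counter
from typing import Callable, FrozenSet, Optional, Tuple

OrderedSets = Tuple[FrozenSet[int], ...]


def propose(x: OrderedSets, rng: random.Random) -> Optional[Tuple[OrderedSets, float, float]]:
    """Draw a split or merge proposal.

    Returns (x_new, log q(x -> x_new), log q(x_new -> x)), or None when the
    chosen move type is impossible (the chain then stays where it is).
    """
    if rng.random() < 0.5:
        # Split: pick a non-singleton subset uniformly, then a uniform ordered split (A, B).
        splittable = [t for t, s in enumerate(x) if len(s) >= 2]
        if not splittable:
            return None
        t = rng.choice(splittable)
        members = sorted(x[t])
        while True:  # rejection-sample a mask that leaves both parts non-empty
            mask = [rng.random() < 0.5 for _ in members]
            if any(mask) and not all(mask):
                break
        a = frozenset(i for i, m in zip(members, mask) if m)
        b = frozenset(i for i, m in zip(members, mask) if not m)
        x_new = x[:t] + (a, b) + x[t + 1:]
        log_fwd = math.log(0.5 / len(splittable) / (2 ** len(members) - 2))
        log_rev = math.log(0.5 / (len(x_new) - 1))  # reverse move: merge this adjacent pair
    else:
        # Merge: pick one of the T - 1 adjacent pairs uniformly.
        if len(x) < 2:
            return None
        t = rng.randrange(len(x) - 1)
        merged = x[t] | x[t + 1]
        x_new = x[:t] + (merged,) + x[t + 2:]
        log_fwd = math.log(0.5 / (len(x) - 1))
        n_splittable = sum(1 for s in x_new if len(s) >= 2)
        log_rev = math.log(0.5 / n_splittable / (2 ** len(merged) - 2))
    return x_new, log_fwd, log_rev


def mh_step(x: OrderedSets, log_omega: Callable[[OrderedSets], float], rng: random.Random) -> OrderedSets:
    """One Metropolis-Hastings step targeting P(X) proportional to exp(log_omega(X))."""
    proposal = propose(x, rng)
    if proposal is None:
        return x
    x_new, log_fwd, log_rev = proposal
    log_ratio = log_omega(x_new) - log_omega(x) + log_rev - log_fwd
    if log_ratio >= 0 or rng.random() < math.exp(log_ratio):
        return x_new
    return x


# Sanity check: a constant log-potential makes the target uniform over ordered partitions.
rng = random.Random(1)
x = (frozenset({0, 1, 2}),)
counts = Counter()
for _ in range(100_000):
    x = mh_step(x, lambda state: 0.0, rng)
    counts[x] += 1
print(len(counts), min(counts.values()), max(counts.values()))
```

With a constant log‑potential the target is uniform, so on N = 3 items the chain should visit all 13 ordered partitions with roughly equal frequency, a cheap check that the forward and reverse proposal probabilities are consistent.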

To estimate the partition function Z, the paper employs Annealed Importance Sampling (AIS). At τ=0 the distribution is uniform, so Z(0)=F(N) is known exactly. The temperature τ is then gradually increased to 1, drawing samples at each intermediate τ using the split‑and‑merge sampler. Each run accumulates an importance weight equal to the product of ratios of unnormalised probabilities at successive temperatures; the average weight estimates the ratio Z(1)/Z(0), so multiplying it by F(N) gives an estimate of Z(1).
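The AIS bookkeeping can be sketched as follows, assuming the intermediate distributions are P_τ(X) ∝ Ω(X)^τ. Each run's log‑weight accumulates (τ_k − τ_{k−1})·log Ω(X) along the schedule, and Z(1) ≈ F(N)·mean(weights). In practice `transition` would be the split‑and‑merge sampler targeting P_τ; here, purely to verify the weights against an exact answer, it is replaced by an exact draw from P_τ over an enumerated toy space with random, hypothetical log‑potentials, which is only feasible for tiny N.

```python
import itertools
import math
import random


def ordered_partitions(items):
    """Enumerate every ordered partition of `items` as a tuple of frozensets."""
    items = list(items)
    if not items:
        yield ()
        return
    for r in range(1, len(items) + 1):
        for first in itertools.combinations(items, r):
            rest = [i for i in items if i not in first]
            for tail in ordered_partitions(rest):
                yield (frozenset(first),) + tail


def fubini(n):
    """Number of ordered partitions of n items, i.e. Z(0) for the uniform distribution."""
    f = [1] * (n + 1)
    for m in range(1, n + 1):
        f[m] = sum(math.comb(m, k) * f[m - k] for k in range(1, m + 1))
    return f[n]


def ais_log_z(n_items, log_omega, sample_uniform, transition, taus, n_runs, rng):
    """AIS estimate of log Z(1) for P_tau(X) proportional to Omega(X)**tau.

    Assumes taus[0] == 0 and taus[-1] == 1, sample_uniform(rng) draws from the
    uniform P_0 (so Z(0) = F(N)), and transition(x, tau, rng) leaves P_tau invariant.
    """
    log_ws = []
    for _ in range(n_runs):
        x = sample_uniform(rng)
        log_w = 0.0
        for k in range(1, len(taus)):
            # Ratio of unnormalised probabilities at consecutive temperatures:
            # Omega(x)**tau_k / Omega(x)**tau_{k-1} = Omega(x)**(tau_k - tau_{k-1}).
            log_w += (taus[k] - taus[k - 1]) * log_omega(x)
            if k < len(taus) - 1:
                x = transition(x, taus[k], rng)
        log_ws.append(log_w)
    # Numerically stable log of the mean importance weight, which estimates Z(1)/Z(0).
    m = max(log_ws)
    log_mean_w = m + math.log(sum(math.exp(w - m) for w in log_ws) / n_runs)
    return math.log(fubini(n_items)) + log_mean_w


# Toy check on N = 4 items (75 states) with hypothetical random log-potentials.
rng = random.Random(0)
states = list(ordered_partitions(range(4)))
table = {s: rng.uniform(-1.0, 1.0) for s in states}


def exact_transition(x, tau, rng):
    """Stand-in kernel: an exact draw from P_tau, only feasible because N is tiny."""
    weights = [math.exp(tau * table[s]) for s in states]
    return rng.choices(states, weights=weights, k=1)[0]


taus = [k / 50 for k in range(51)]
estimate = ais_log_z(4, table.__getitem__, lambda r: r.choice(states),
                     exact_transition, taus, 100, rng)
exact = math.log(sum(math.exp(v) for v in table.values()))
print(f"AIS log Z = {estimate:.4f}, exact log Z = {exact:.4f}")
```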

Recognising that a plain OSM cannot capture latent user preferences, the authors extend the model with binary hidden units h∈{0,1}^K, forming the Latent OSM. This construction mirrors a Restricted Boltzmann Machine: each hidden unit connects to all visible variables (the ordered sets). The posterior P(h_k=1|X) can be computed analytically, giving a K‑dimensional latent representation of a user’s taste. Learning proceeds by alternating short MCMC runs for the visible layer with stochastic gradient updates of the parameters, similar to contrastive divergence.
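Under an RBM‑style energy, the hidden units are conditionally independent given X, so each posterior is a logistic sigmoid of a linear activation. The sketch below assumes, hypothetically, that hidden unit k contributes h_k·(c_k + Σ_f w_{k,f}·f(X)), where f(X) are pairwise indicator features of the ordered partition (same‑subset and ranked‑above); the feature set, weight layout and function names are illustrative and not taken from the paper.

```python
import itertools
import math
from typing import Dict, FrozenSet, List, Tuple

OrderedSets = Tuple[FrozenSet[int], ...]
Feature = Tuple[str, int, int]


def pair_features(x: OrderedSets) -> Dict[Feature, float]:
    """Indicator features of an ordered partition (hypothetical feature set)."""
    feats: Dict[Feature, float] = {}
    for t, s in enumerate(x):
        for i, j in itertools.combinations(sorted(s), 2):
            feats[("same", i, j)] = 1.0  # i and j share a subset
        for later in x[t + 1:]:
            for i in s:
                for j in later:
                    feats[("above", i, j)] = 1.0  # i is ranked above j
    return feats


def hidden_posterior(x: OrderedSets, bias: List[float],
                     weights: List[Dict[Feature, float]]) -> List[float]:
    """P(h_k = 1 | X) for every hidden unit k, computed independently per unit."""
    feats = pair_features(x)
    probs = []
    for c_k, w_k in zip(bias, weights):
        activation = c_k + sum(w_k.get(f, 0.0) * v for f, v in feats.items())
        probs.append(1.0 / (1.0 + math.exp(-activation)))
    return probs


x = (frozenset({0, 1}), frozenset({2}))  # items 0 and 1 tied at the top, item 2 below
bias = [0.0, -1.0]
weights = [{("same", 0, 1): 2.0}, {("above", 0, 2): 1.0, ("above", 2, 0): -3.0}]
print(hidden_posterior(x, bias, weights))
```

The resulting vector of probabilities is the K‑dimensional representation of a user's taste described above; because each unit is evaluated independently, the cost is linear in K times the number of active features.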

The models are evaluated on large‑scale collaborative‑filtering benchmarks (MovieLens 10M, Netflix Prize). Users’ ratings are transformed into ordered groups (e.g., all 5‑star movies form one subset). The task is to predict the ordering of unseen groups. Performance is measured by NDCG and Precision@k. Latent OSM consistently outperforms or matches state‑of‑the‑art baselines such as Matrix Factorization, Bayesian Personalized Ranking, and listwise learning‑to‑rank methods, especially when data are sparse. The experiments demonstrate that explicitly modelling intra‑group compatibility and inter‑group ordering yields more accurate recommendations.
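For reference, a common form of the two metrics is sketched below. It assumes exponential gain 2^rel − 1 with a log2(position + 1) discount for NDCG and treats held‑out ratings ≥ 4 as relevant for Precision@k; the paper's exact variants and thresholds may differ, and the function names are hypothetical.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """DCG@k with exponential gain (2**rel - 1) and a log2(position + 1) discount."""
    return sum((2 ** rel - 1) / math.log2(pos + 2) for pos, rel in enumerate(relevances[:k]))


def ndcg_at_k(ranked_relevances: Sequence[float], k: int) -> float:
    """DCG of the predicted order divided by DCG of the ideal (sorted) order."""
    ideal = dcg_at_k(sorted(ranked_relevances, reverse=True), k)
    return dcg_at_k(ranked_relevances, k) / ideal if ideal > 0 else 0.0


def precision_at_k(ranked_relevances: Sequence[float], k: int, threshold: float = 4) -> float:
    """Fraction of the top-k predicted items whose held-out rating is >= threshold."""
    return sum(1 for rel in ranked_relevances[:k] if rel >= threshold) / k


held_out = [5, 3, 4, 1, 2]  # held-out ratings of items, listed in the predicted order
print(ndcg_at_k(held_out, 3), precision_at_k(held_out, 3))
```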

Strengths of the work include (1) a principled probabilistic formulation for the joint partition‑and‑order problem; (2) an elegant split‑and‑merge MCMC that makes local moves tractable despite the super‑exponential state space; (3) the incorporation of latent factors that provide useful embeddings for downstream tasks. Limitations include the computational cost of MCMC for very large N, potentially slow mixing, and the large number of parameters, which may require careful regularisation. Suggested future directions include variational inference or neural approximations to replace MCMC, dynamic partitioning to handle temporal changes, and scaling strategies for massive item sets.

In summary, the paper makes a significant contribution by introducing a flexible, log‑linear model for ordered set data, providing an effective sampling scheme, extending it with latent variables, and demonstrating its practical advantage in collaborative ranking scenarios.
