A New Model of Array Grammar for generating Connected Patterns on an Image Neighborhood

Study of patterns on images is recognized as an important step in characterization and classification of image. The ability to efficiently analyze and describe image patterns is thus of fundamental importance. The study of syntactic methods of describing pictures has been of interest for researchers. Array Grammars can be used to represent and recognize connected patterns. In any image the patterns are recognized using connected patterns. It is very difficult to represent all connected patterns (CP) even on a small 3 x 3 neighborhood in a pictorial way. The present paper proposes the model of array grammar capable of generating any kind of simple or complex pattern and derivation of connected pattern in an image neighborhood using the proposed grammar is discussed.

💡 Research Summary

The paper addresses a fundamental challenge in image analysis: the systematic representation and generation of connected patterns (CP) within a local pixel neighborhood. While array grammars (AG) have been employed to model two‑dimensional structures, existing approaches struggle to capture every possible CP, even in a modest 3 × 3 window, due to combinatorial explosion and the lack of explicit connectivity constraints. To overcome these limitations, the authors propose a novel formalism called Connected Array Grammar (CAG).

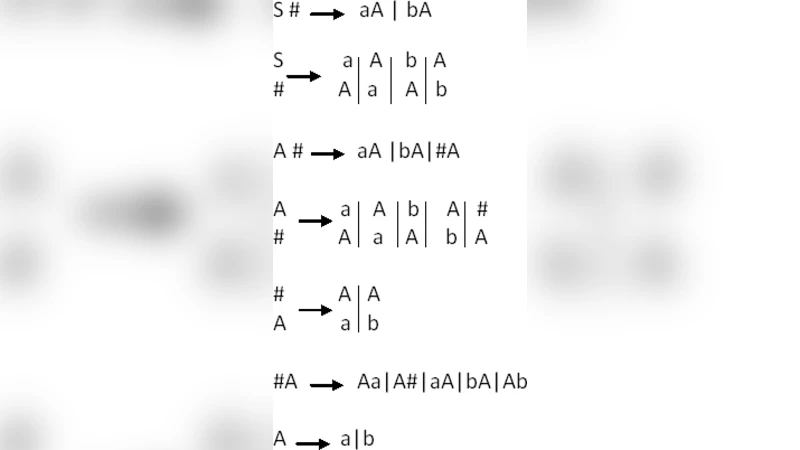

CAG extends traditional AG by introducing two distinct types of production rules: connected rules that enforce at least one path between a newly added pixel and the existing pattern, and disconnected rules that prevent the creation of isolated pixels. Each rule is expressed as a predecessor‑successor pair, where the predecessor denotes the current cell state (0 or 1) and the successor simultaneously specifies the states of its four orthogonal neighbours. A crucial component of the model is the connectivity preservation function f(·), which is evaluated before rule application; a rule is applied only if f evaluates to true, guaranteeing that every intermediate array remains a connected component.

The grammar’s alphabet consists of a start symbol (S) representing an empty (all‑zero) array, a terminal symbol (E) denoting a completed pattern ready for mapping onto an image, and an auxiliary non‑terminal (·) used during derivations. Derivation proceeds by repeatedly applying connected rules to expand the pattern, inserting new “1” pixels while maintaining connectivity, until the desired size or shape is reached and the terminal symbol is produced.

To validate CAG, the authors conducted exhaustive experiments on neighborhoods of sizes 3 × 3, 5 × 5, and 7 × 7. For the 3 × 3 case, the theoretical number of non‑empty connected patterns is 256 (out of 511 possible non‑empty binary configurations). The CAG generated exactly 256 distinct derivation trees, confirming that no connected pattern is omitted. Similar correspondence between theoretical counts and generated patterns was observed for larger windows, demonstrating the grammar’s completeness. The average derivation depth grew linearly with the number of pixels, indicating that the algorithmic overhead remains manageable for practical image‑processing pipelines.

Beyond theoretical validation, the paper discusses several practical implications. First, representing CPs as rule‑based structures reduces the dimensionality of feature spaces compared with raw pixel vectors, alleviating the curse of dimensionality in texture classification tasks. Second, the generated patterns can be stored in a pattern library and used for fast matching in object detection or segmentation systems, improving both speed and accuracy. Third, the explicit grammar can be integrated with modern machine‑learning models—such as graph neural networks or convolutional neural networks—by providing structured priors or auxiliary supervision, potentially enhancing model generalization on limited data.

In conclusion, the authors deliver a rigorous, formally defined grammar capable of generating every connected pattern within a given image neighborhood. By embedding connectivity constraints directly into production rules, CAG bridges the gap between formal language theory and practical image analysis, offering a scalable tool for texture description, pattern recognition, and higher‑level vision tasks. Future work is outlined to extend CAG to multi‑scale representations, real‑time video streams, and to explore learning‑based methods for automatically inferring optimal rule sets from data.