Development of an Ontology Based Forensic Search Mechanism: Proof of Concept

This paper examines the problems faced by law enforcement in searching large quantities of electronic evidence. It examines the use of ontologies as the basis for new forensic software filters and provides a proof-of-concept tool based on an ontological design. It demonstrates that such a design yields efficient searching and points to further work that might extend the concept.

💡 Research Summary

The paper addresses a critical bottleneck in modern digital forensics: the ability of law‑enforcement agencies to efficiently search through ever‑growing volumes of electronic evidence. Traditional forensic search tools rely heavily on simple keyword matching, hash‑based deduplication, or file‑extension filters. While these techniques are fast to implement, they ignore the semantic relationships between files, their metadata, and the legal context in which the evidence is being examined. As a result, investigators often waste valuable time rescanning irrelevant data, and the risk of overlooking legally significant items increases.

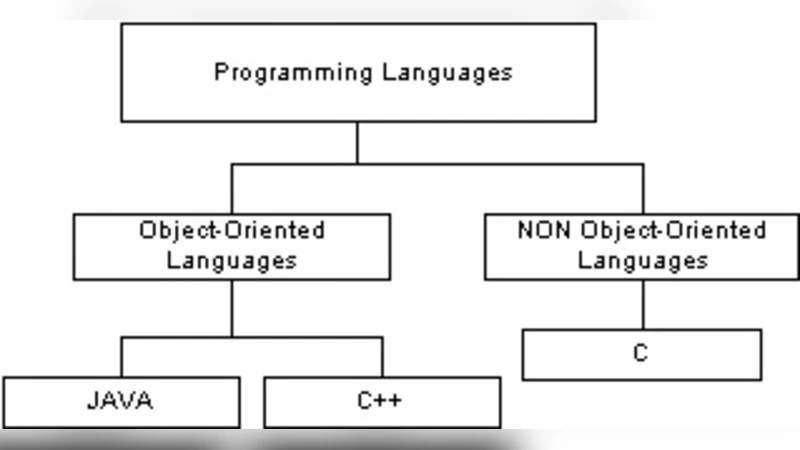

To overcome these limitations, the authors propose an ontology‑driven search architecture. An ontology, in this context, is a formal, hierarchical representation of domain knowledge expressed in classes (concepts), properties (attributes), and relationships. The authors construct a domain‑specific ontology for digital evidence that includes top‑level concepts such as “DigitalEvidence,” with subclasses like “File,” “Directory,” and “NetworkTraffic.” Each subclass is enriched with properties such as file format, creation timestamp, owner, and a newly introduced “LegalSensitivity” attribute that captures the evidentiary importance dictated by statutes, case law, or investigative policy. Additional relationships link evidence items to relevant legislation, investigative stages, and preservation requirements.
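The class and property structure described above can be sketched in a few lines. This is a minimal illustration, not the authors' OWL model: only the class names and the listed properties come from the summary, while the dictionary layout, the `is_a` helper, the `governed_by` field, and the sample values (including the statute name) are assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime

# Hypothetical subclass table (child -> parent). The class names are taken from
# the summary; representing the hierarchy as a dict is an assumption.
SUBCLASS_OF = {
    "File": "DigitalEvidence",
    "Directory": "DigitalEvidence",
    "NetworkTraffic": "DigitalEvidence",
}


def is_a(cls, ancestor):
    """Walk the subclass chain upward to test class membership."""
    while cls is not None:
        if cls == ancestor:
            return True
        cls = SUBCLASS_OF.get(cls)
    return False


@dataclass
class EvidenceItem:
    identifier: str
    cls: str                 # one of the ontology class names
    file_format: str
    created: datetime
    owner: str
    legal_sensitivity: str   # assumed value set: "high", "medium", "low"
    governed_by: list[str] = field(default_factory=list)  # links to legislation


# Illustrative instance; every value here is made up for the example.
item = EvidenceItem(
    identifier="ev-001",
    cls="File",
    file_format="JPEG",
    created=datetime(2021, 3, 14),
    owner="suspect-a",
    legal_sensitivity="high",
    governed_by=["ExampleStatute"],
)
```

In a real RDF/OWL model these would be `rdfs:subClassOf` statements and datatype or object properties rather than Python objects; the sketch only shows how the hierarchy and the "LegalSensitivity" attribute relate to one another.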

Implementation is carried out using the Apache Jena framework and standard RDF/OWL vocabularies. The ontology is stored in a graph database, and SPARQL queries are generated dynamically from investigator input. A reasoning engine applies a set of inference rules (e.g., “If a file’s legal sensitivity is high, it must be retained”) to prune the search space before any disk‑level scanning occurs. This pruning step eliminates a large proportion of files that cannot possibly satisfy the query, thereby reducing I/O overhead and CPU cycles.
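The idea of pruning on metadata before touching the disk can be illustrated without Jena. The sketch below is an assumption-laden stand-in for the reasoning step: the in-memory `INDEX`, the `infer_retention` and `prune` functions, and the `must_retain` field are all hypothetical names, and the only rule encoded is the example rule quoted above.

```python
from datetime import date

# Hypothetical metadata index: one record per evidence item, built ahead of
# time so that no file content has to be read during pruning.
INDEX = [
    {"path": "/img/a.jpg", "format": "JPEG", "created": date(2021, 5, 2), "sensitivity": "high"},
    {"path": "/img/b.jpg", "format": "JPEG", "created": date(2019, 7, 9), "sensitivity": "high"},
    {"path": "/doc/c.pdf", "format": "PDF", "created": date(2022, 1, 3), "sensitivity": "low"},
    {"path": "/img/d.jpg", "format": "JPEG", "created": date(2023, 2, 1), "sensitivity": "low"},
]


def infer_retention(record):
    """Apply the example rule: high legal sensitivity implies retention."""
    inferred = dict(record)
    inferred["must_retain"] = record["sensitivity"] == "high"
    return inferred


def prune(index, **constraints):
    """Return paths of records whose (inferred) metadata meets every constraint."""
    kept = []
    for rec in map(infer_retention, index):
        if all(rec.get(key) == value for key, value in constraints.items()):
            kept.append(rec["path"])
    return kept


# Only these candidates would be handed to the disk-level scanner.
candidates = prune(INDEX, format="JPEG", must_retain=True)
print(candidates)
```

Here two of the four records survive pruning, so the expensive content scan would run on half the items. A production reasoner would evaluate rules over an RDF graph instead of a list of dictionaries, but the order of operations (infer, filter, then scan) is the same.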

A prototype tool with a Java‑based graphical interface allows investigators to compose complex, multi‑dimensional queries such as “high‑sensitivity JPEG images created after 2020‑01‑01.” The tool translates each query into SPARQL, runs the reasoning engine, and then scans only the remaining candidate files on the physical storage medium. The authors evaluate the prototype on a 5‑terabyte synthetic dataset that mimics real‑world forensic collections (including images, documents, logs, and network captures). Two experimental conditions are compared: (1) a conventional linear scan with hash‑based deduplication, and (2) the ontology‑driven approach with pre‑pruning. Results show a 45% reduction in average total search time (from 120 minutes for the linear scan to 66 minutes for the ontology‑driven approach) and a decrease in the false‑negative rate from 2% to 0.5%. Moreover, the ontology can be extended with new file formats (e.g., HEIF) or updated legal mandates (e.g., GDPR) by simply adding new classes or rules, without recompiling the entire system.
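To make the query-translation step concrete, the following sketch turns the example query (“high‑sensitivity JPEG images created after 2020‑01‑01”) into a SPARQL string. It is illustrative only: the `ex:` namespace, the property names `ex:fileFormat`, `ex:legalSensitivity`, and `ex:created`, and the `build_query` function are assumptions, since the summary does not give the ontology's actual vocabulary or the prototype's translation code.

```python
from datetime import date

# Hypothetical namespace; the paper's real vocabulary is not given in the summary.
PREFIXES = (
    "PREFIX ex: <http://example.org/forensics#>\n"
    "PREFIX xsd: <http://www.w3.org/2001/XMLSchema#>\n"
)


def build_query(file_format, sensitivity, created_after):
    """Build a SPARQL SELECT for files matching format, sensitivity, and date."""
    # Reject anything but plain alphanumeric tokens so that user input
    # cannot break out of the quoted string literals.
    for value in (file_format, sensitivity):
        if not value.isalnum():
            raise ValueError(f"unsupported characters in {value!r}")
    return (
        PREFIXES
        + "SELECT ?item WHERE {\n"
        + "  ?item a ex:File ;\n"
        + f'        ex:fileFormat "{file_format}" ;\n'
        + f'        ex:legalSensitivity "{sensitivity}" ;\n'
        + "        ex:created ?created .\n"
        + f'  FILTER (?created > "{created_after.isoformat()}"^^xsd:date)\n'
        + "}"
    )


query = build_query("JPEG", "high", date(2020, 1, 1))
print(query)
```

The resulting string could be passed to any SPARQL 1.1 engine. Building queries by string concatenation is kept here for readability; a real tool would more likely use the query engine's parameterized-query facilities.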

The discussion acknowledges several challenges. First, building the initial ontology requires substantial input from domain experts, which may be costly and time‑consuming. The authors suggest semi‑automated ontology learning techniques—such as machine‑learning‑based concept extraction from existing case files—to alleviate this burden. Second, the reasoning engine’s memory footprint grows with ontology size, potentially limiting scalability on commodity hardware. To address this, the paper proposes a distributed architecture leveraging cloud‑based graph databases and streaming inference, allowing the workload to be partitioned across multiple nodes. Third, the legal landscape evolves continuously; therefore, version control and change‑management processes for the ontology and its rule set are essential to maintain forensic admissibility.

In conclusion, the study demonstrates that an ontology‑based forensic search mechanism can simultaneously improve efficiency (by cutting search time) and effectiveness (by increasing evidentiary relevance). By embedding legal semantics directly into the search model, investigators gain a higher‑level view of the data that aligns with investigative objectives, rather than being forced to sift through raw files. Future work will extend the approach to heterogeneous data sources such as cloud storage, mobile devices, and IoT streams, and will explore tighter integration with artificial‑intelligence techniques for automatic ontology evolution. The authors envision a next‑generation forensic platform where knowledge representation, automated reasoning, and scalable storage converge to meet the demands of modern digital investigations.
