Survey of Parallel Computing with MATLAB

MATLAB is one of the most widely used mathematical computing environments in technical computing. It offers an interactive environment that provides high-performance computing (HPC) procedures and is easy to use. Parallel computing with MATLAB has been an area of interest for parallel computing researchers for a number of years, and there have been many attempts to parallelize MATLAB. In this paper, we present most of the past and present attempts at parallel MATLAB, such as MatlabMPI, bcMPI, pMatlab, Star-P and PCT. Finally, we discuss the attempts expected in the future.

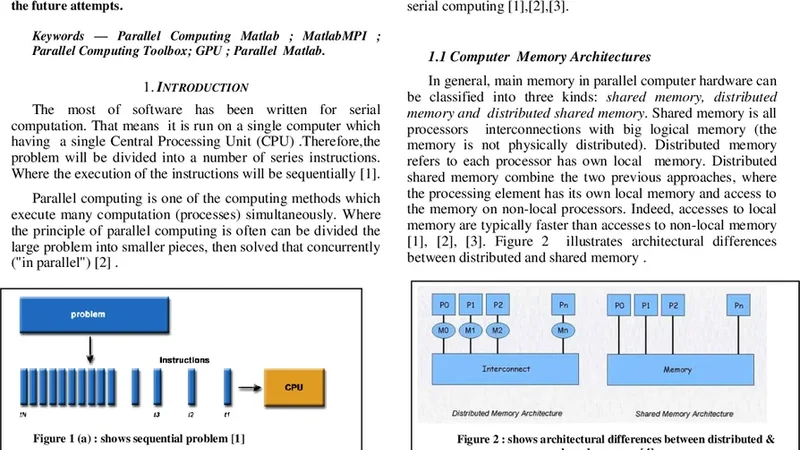

💡 Research Summary

The paper provides a comprehensive review of the evolution of parallel computing approaches for MATLAB, a dominant environment in technical and scientific computing. It begins by highlighting MATLAB’s strengths (an interactive interface, high‑level vectorized operations, and extensive toolboxes) while noting that its default single‑threaded execution model limits scalability for large‑scale simulations and data‑intensive tasks. To address this, researchers have pursued a series of increasingly sophisticated strategies, each addressing the shortcomings of its predecessors.

The earliest effort, MatlabMPI, is a pure‑MATLAB implementation of the Message Passing Interface (MPI) standard that relies on file‑based communication. Its simplicity and platform independence made it attractive for early adopters, but the reliance on disk I/O introduced substantial latency, especially for high‑volume data transfers. The next generation, bcMPI, wraps a native C‑based MPI library (such as Open MPI or MPICH) with a MATLAB interface, enabling true network‑level messaging, asynchronous transfers, and significantly reduced communication overhead. While performance improves dramatically, bcMPI requires compilation and environment configuration, raising the entry barrier for non‑expert users.
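
The file‑based messaging idea can be illustrated with a minimal sketch. It is written in Python rather than MATLAB, it is not MatlabMPI's actual code, and the function names, file naming scheme, and lock‑file convention are assumptions chosen for illustration. A sender writes the payload to a shared directory and then creates a marker file; the receiver polls for the marker before reading, which is where the disk‑I/O latency described above comes from.

```python
import os
import pickle
import tempfile
import time


def _paths(comm_dir, src, dest, tag):
    """Return the (data file, lock file) pair for one message (hypothetical naming)."""
    base = os.path.join(comm_dir, f"msg_from{src}_to{dest}_tag{tag}")
    return base + ".pkl", base + ".lock"


def send(comm_dir, src, dest, tag, payload):
    """Write the payload to the shared directory, then signal completion."""
    data_path, lock_path = _paths(comm_dir, src, dest, tag)
    with open(data_path, "wb") as f:
        pickle.dump(payload, f)
    # The lock file is created only after the data file is closed,
    # so a receiver never reads a partially written message.
    open(lock_path, "w").close()


def recv(comm_dir, src, dest, tag, poll_s=0.01, timeout_s=5.0):
    """Poll for the lock file, then read the message and clean up."""
    data_path, lock_path = _paths(comm_dir, src, dest, tag)
    deadline = time.monotonic() + timeout_s
    while not os.path.exists(lock_path):
        if time.monotonic() > deadline:
            raise TimeoutError(f"no message from rank {src} with tag {tag}")
        time.sleep(poll_s)  # each poll is a file-system round trip
    with open(data_path, "rb") as f:
        payload = pickle.load(f)
    os.remove(data_path)
    os.remove(lock_path)
    return payload


def demo():
    """Pass one message from rank 0 to rank 1 inside a single process."""
    with tempfile.TemporaryDirectory() as comm_dir:
        send(comm_dir, src=0, dest=1, tag=7, payload=[1.0, 2.0, 3.0])
        return recv(comm_dir, src=0, dest=1, tag=7)
```

In a real deployment the sender and receiver would be separate processes that share a directory, for example over a network file system; `demo()` runs both ends in one process only to keep the sketch self‑contained.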

pMatlab introduces a Partitioned Global Address Space (PGAS) model, allowing developers to declare distributed arrays and let the runtime automatically handle data partitioning and inter‑process communication. This high‑level abstraction preserves MATLAB’s familiar syntax, improving code readability and maintainability. However, pMatlab still depends on underlying MPI for data movement, limiting its ability to optimize irregular data structures or custom communication patterns.
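
To make the data‑partitioning step concrete, the following Python sketch shows how a block distribution could map a global array of length `n` onto `num_procs` processes. This is an illustration of the general technique only: the function names are hypothetical and are not part of the pMatlab API, and a block layout is just one possible mapping.

```python
def block_range(n, num_procs, rank):
    """Return the half-open global index range [lo, hi) owned by `rank`."""
    base, extra = divmod(n, num_procs)
    # The first `extra` ranks each hold one additional element.
    lo = rank * base + min(rank, extra)
    hi = lo + base + (1 if rank < extra else 0)
    return lo, hi


def owner_of(n, num_procs, global_index):
    """Return the rank that owns `global_index` under the block map."""
    if not 0 <= global_index < n:
        raise IndexError("global index out of range")
    base, extra = divmod(n, num_procs)
    boundary = extra * (base + 1)  # first index held by a smaller block
    if global_index < boundary:
        return global_index // (base + 1)
    return extra + (global_index - boundary) // base


def local_part(global_data, num_procs, rank):
    """Return the slice of a global list that `rank` would store locally."""
    lo, hi = block_range(len(global_data), num_procs, rank)
    return global_data[lo:hi]
```

With 10 elements on 4 processes, ranks 0 and 1 each hold three elements and ranks 2 and 3 hold two. A runtime in the PGAS style performs this bookkeeping on the user's behalf, so application code can index the array globally while each process stores only its local part.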

Star‑P takes a different route by automatically translating MATLAB code into native C/C++ source, which is then compiled and executed on an MPI‑enabled cluster. By eliminating the interpreter overhead, Star‑P can achieve near‑native performance for many algorithms. The trade‑off lies in the translation step: not all MATLAB functions are supported, and debugging transformed code becomes more complex, requiring expertise in both MATLAB and compiled languages.

The most mature solution is MathWorks’ Parallel Computing Toolbox (PCT). PCT offers high‑level constructs such as parfor, spmd, and distributed arrays, together with an integrated job scheduler that transparently targets multicore CPUs, GPUs, and cloud resources. Its seamless integration with the MATLAB ecosystem and automatic data movement make it the most user‑friendly option for a broad audience. Nevertheless, PCT is a commercial product with a relatively high license cost, and its internal mechanisms are largely opaque, limiting fine‑grained performance tuning.

The authors compare these five approaches across several dimensions: implementation complexity, scalability, platform support, licensing, and typical use cases. The historical trajectory moves from lightweight, file‑based prototypes to robust, high‑performance MPI bindings, then to higher‑level PGAS abstractions, automatic code generation, and finally to a fully supported commercial toolbox. Each step mitigates earlier limitations but introduces new challenges, such as increased configuration effort, reduced flexibility, or higher cost.

Looking forward, the paper outlines three primary research directions. First, tighter integration of heterogeneous accelerators (GPUs, TPUs, FPGAs) is essential to exploit modern hardware trends. Second, adaptive scheduling and dynamic load balancing mechanisms are needed to maintain efficiency in workloads with unpredictable computational patterns. Third, cloud‑native, serverless execution models could provide seamless, on‑demand scaling without manual cluster management. By addressing these areas, MATLAB’s parallel computing capabilities can continue to evolve, maintaining its central role in scientific and engineering computation.