A Comparative Study of Hidden Web Crawlers

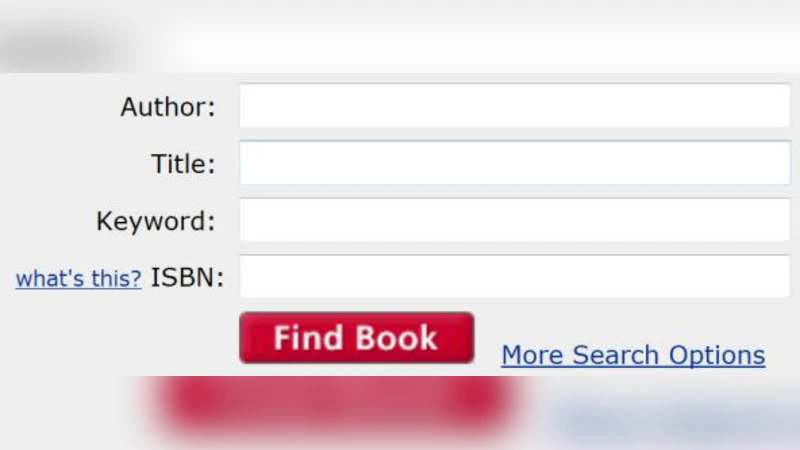

A large amount of data on the WWW remains inaccessible to the crawlers of Web search engines because it is exposed only on demand, when users fill out and submit forms. The hidden Web refers to the collection of Web data that a crawler can reach only by interacting with a Web-based search form, not simply by traversing hyperlinks. Research on the hidden Web emerged almost a decade ago, its main line being ways to access the content of online databases that are usually hidden behind search forms. Efforts in the area focus mainly on designing hidden Web crawlers that learn forms and fill them with meaningful values. This paper surveys the various hidden Web crawlers developed for this purpose, noting the advantages and shortcomings of the techniques each one employs.
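Learning a form starts with finding it and listing its fields. The sketch below uses only Python's standard `html.parser` module; `FormExtractor`, `SearchForm`, and `FormField` are hypothetical names, and the parser deliberately ignores `<textarea>`, `<label>` text, and options without a `value` attribute. It is an illustration of the form-detection step, not the form analyzer of any surveyed crawler.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from html.parser import HTMLParser


@dataclass
class FormField:
    name: str
    kind: str                                          # "text", "hidden", "select", ...
    options: list[str] = field(default_factory=list)   # filled only for <select>


@dataclass
class SearchForm:
    action: str
    method: str
    fields: list[FormField] = field(default_factory=list)


class FormExtractor(HTMLParser):
    """Collects each <form> on a page together with its named input fields."""

    def __init__(self) -> None:
        super().__init__()
        self.forms: list[SearchForm] = []
        self._current: SearchForm | None = None   # form currently being parsed
        self._select: FormField | None = None     # open <select>, if any

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)                            # boolean attributes map to None
        if tag == "form":
            method = (a.get("method") or "get").lower()
            self._current = SearchForm(a.get("action") or "", method)
        elif self._current is None:
            return                                 # control outside any form: ignore
        elif tag == "input" and a.get("name"):
            kind = (a.get("type") or "text").lower()
            if kind not in ("submit", "button", "reset", "image"):
                self._current.fields.append(FormField(a["name"], kind))
        elif tag == "select" and a.get("name"):
            self._select = FormField(a["name"], "select")
            self._current.fields.append(self._select)
        elif tag == "option" and self._select is not None:
            if a.get("value") is not None:         # options without value= are skipped
                self._select.options.append(a["value"])

    def handle_endtag(self, tag):
        if tag == "select":
            self._select = None
        elif tag == "form" and self._current is not None:
            self.forms.append(self._current)
            self._current = None
```

Calling `parser.feed(html)` and then reading `parser.forms` yields one `SearchForm` per form; its `fields` list is what the later value-assignment stage would fill in.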

💡 Research Summary

The paper presents a comprehensive comparative study of hidden‑web crawlers, focusing on the techniques that enable automated access to data hidden behind web‑based search forms. It begins by defining the hidden web as the portion of the World Wide Web that cannot be reached by following hyperlinks alone, because the content is exposed only after a user submits a form. The authors argue that traditional search‑engine crawlers miss a substantial amount of valuable information, such as academic databases, e‑commerce product listings, and government records, because these resources require interaction with dynamic forms.
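Once values are chosen, the crawler must reproduce the request a browser would send when the form is submitted. A minimal sketch, assuming a plain HTML form with `action` and `method` attributes and no JavaScript involvement; `build_submission` is a hypothetical helper built on the standard `urllib.parse` module.

```python
from __future__ import annotations

from urllib.parse import urlencode, urljoin, urlsplit


def build_submission(page_url: str, action: str, method: str,
                     values: dict[str, str]) -> tuple[str, bytes | None]:
    """Return (url, body) for submitting `values` through a form.

    GET forms carry the values in the query string (body is None);
    POST forms carry them URL-encoded in the request body.
    """
    target = urljoin(page_url, action)   # an empty action resolves to the page itself
    encoded = urlencode(values)          # application/x-www-form-urlencoded
    if method.lower() == "post":
        return target, encoded.encode("ascii")
    # Like a browser, a GET submission replaces any query already in the action URL.
    return urlsplit(target)._replace(query=encoded).geturl(), None
```

For instance, submitting `{"q": "data mining", "cat": "cs"}` through a GET form whose action is `search` on `http://example.com/books/index.html` produces `http://example.com/books/search?q=data+mining&cat=cs`, a URL that no hyperlink on the site needs to point to.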

The study classifies hidden‑web crawling into four functional stages: (1) form detection, (2) field‑semantics extraction, (3) value generation, and (4) result processing. For each stage, the paper reviews the main design choices made by representative systems, among them HiWE and the crawlers proposed by Liddle, Gravano, and Barbosa. HiWE relies on simple label‑keyword matching and a limited, static value list; it is easy to implement but suffers from low coverage on multi‑select or dependent fields. Liddle models a form as a tree and applies probabilistic sampling to reduce the combinatorial explosion of possible input combinations, achieving higher efficiency at the cost of sensitivity to the initial probability distribution. Gravano augments form analysis with external metadata and dictionaries, enabling recursive discovery of new forms from result pages; its performance is strong when rich metadata is available but degrades sharply otherwise. Barbosa leverages real user logs and search histories to train models that generate realistic query values, attaining the highest form‑submission success rate, yet it incurs significant overhead in log collection, preprocessing, and privacy compliance.
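The value-generation stage can be illustrated with two simplified pieces: a HiWE-style lookup that matches a field's label against keywords in a static value table, and a sampler that draws a few distinct input combinations instead of enumerating the full Cartesian product, in the spirit of (but not identical to) the probabilistic sampling attributed to Liddle. `VALUE_TABLE`, its entries, and both function names are hypothetical, and the sampler here is uniform rather than driven by a learned distribution.

```python
from __future__ import annotations

import math
import random
import re

# Hypothetical static value table: label keywords -> candidate values.
VALUE_TABLE = {
    frozenset({"author", "writer"}): ["Knuth", "Dijkstra", "Hopper"],
    frozenset({"title", "keyword", "keywords"}): ["databases", "crawling"],
    frozenset({"year", "published"}): ["2005", "2010", "2015"],
}


def values_for_label(label: str) -> list[str]:
    """Pick the table entry whose keywords overlap the field label the most."""
    tokens = set(re.findall(r"[a-z]+", label.lower()))
    best, best_overlap = [], 0
    for keywords, values in VALUE_TABLE.items():
        overlap = len(tokens & keywords)
        if overlap > best_overlap:
            best, best_overlap = values, overlap
    return list(best)  # empty list: the field stays unmatched (a coverage gap)


def sample_assignments(domains: dict[str, list[str]], k: int,
                       rng: random.Random) -> list[dict[str, str]]:
    """Draw up to k distinct value combinations uniformly at random.

    The Cartesian product is never materialised: each combination is an
    integer index in [0, total) decoded digit by digit in mixed radix.
    """
    names = list(domains)
    sizes = [len(domains[n]) for n in names]
    total = math.prod(sizes)
    result = []
    for index in rng.sample(range(total), min(k, total)):
        assignment = {}
        for name, size in zip(names, sizes):
            index, digit = divmod(index, size)
            assignment[name] = domains[name][digit]
        result.append(assignment)
    return result
```

For a form with a three-value author field and a three-value year field, the full product has nine combinations, whereas `sample_assignments(domains, 4, random.Random(0))` returns only four distinct ones. On large forms the gap between the product size and the number of submissions is what makes sampling worthwhile.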

The authors evaluate the crawlers on a benchmark set of twelve hidden‑web sites spanning scholarly archives, online retail, real‑estate portals, and public record systems. Evaluation metrics include total documents retrieved, duplicate ratio, form‑submission success rate, crawl depth, and resource consumption (CPU, memory). Liddle demonstrates the best overall efficiency, retrieving the most documents per unit of time. Barbosa achieves the highest success rate (percentage of submitted forms that return meaningful results) but requires the most computational resources. HiWE, while lightweight, yields the smallest document set due to its limited query space. Gravano performs well in metadata‑rich domains but falters when such auxiliary information is absent.
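The metrics above can be computed from a simple log of which document identifiers each form submission returned. The definitions here are assumptions: duplicate ratio is taken as the share of fetched documents that were repeats, success rate as the share of submissions that returned at least one result (a stand-in for "meaningful results"), and throughput as unique documents per second. `CrawlStats` and `summarize` are hypothetical names, and CPU and memory usage are left out.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CrawlStats:
    documents: int          # documents fetched, duplicates included
    unique_documents: int   # distinct documents after de-duplication
    duplicate_ratio: float  # share of fetched documents that were repeats
    success_rate: float     # share of submissions returning >= 1 result
    docs_per_second: float  # unique documents per second of crawl time


def summarize(results_per_submission: list[list[str]], elapsed_s: float) -> CrawlStats:
    """Compute crawl metrics from the document IDs each submission returned."""
    all_docs = [doc for batch in results_per_submission for doc in batch]
    unique = set(all_docs)
    submissions = len(results_per_submission)
    successful = sum(1 for batch in results_per_submission if batch)
    return CrawlStats(
        documents=len(all_docs),
        unique_documents=len(unique),
        duplicate_ratio=1 - len(unique) / len(all_docs) if all_docs else 0.0,
        success_rate=successful / submissions if submissions else 0.0,
        docs_per_second=len(unique) / elapsed_s if elapsed_s > 0 else 0.0,
    )
```

For example, four submissions returning `["d1", "d2"]`, `[]`, `["d2", "d3"]`, and `["d1"]` over two seconds give five fetched documents, three unique ones, a duplicate ratio of 0.4, a success rate of 0.75, and 1.5 unique documents per second.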

The paper identifies four major challenges that still impede robust hidden‑web crawling: (1) dynamic, JavaScript‑driven forms and AJAX calls that evade static HTML parsing; (2) anti‑automation mechanisms such as CAPTCHAs; (3) session‑based authentication and cookie management that demand sophisticated state handling; and (4) privacy and legal concerns, especially for approaches that reuse user interaction logs. To address these issues, the authors propose future research directions, including machine‑learning‑based dynamic form detection, reinforcement‑learning strategies for optimal query generation, distributed crawling architectures with proxy pools to bypass authentication barriers, and differential‑privacy techniques to protect user data.
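Challenge (3) is partly a plumbing problem: the crawler has to carry session cookies from the page that serves the form to the request that submits it. A minimal sketch with the standard library's `http.cookiejar` and `urllib.request`, assuming a POST form; `FormSession` and its default user-agent string are hypothetical. It does not address challenges (1) or (2).

```python
from __future__ import annotations

import http.cookiejar
import urllib.parse
import urllib.request


class FormSession:
    """Shares one cookie jar across requests so multi-step form flows keep state."""

    def __init__(self, user_agent: str = "example-hidden-web-crawler/0.1") -> None:
        self.jar = http.cookiejar.CookieJar()
        # HTTPCookieProcessor stores Set-Cookie headers in the jar and sends
        # matching cookies back on every later request made via this opener.
        self.opener = urllib.request.build_opener(
            urllib.request.HTTPCookieProcessor(self.jar))
        self.opener.addheaders = [("User-Agent", user_agent)]

    def prepare(self, url: str, values: dict[str, str],
                referer: str) -> urllib.request.Request:
        """Build a POST request carrying the form values and a Referer header."""
        body = urllib.parse.urlencode(values).encode("ascii")
        return urllib.request.Request(url, data=body, method="POST",
                                      headers={"Referer": referer})

    def fetch(self, request: str | urllib.request.Request,
              timeout: float = 10.0) -> bytes:
        """Send a URL or Request through the cookie-aware opener; return the body."""
        with self.opener.open(request, timeout=timeout) as response:
            return response.read()
```

A typical flow first calls `session.fetch(search_url)` so the server can set its session cookie, then submits with `session.fetch(session.prepare(results_url, {"q": "crawling"}, referer=search_url))`; the cookie travels back automatically because both calls go through the same opener.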

In conclusion, the study underscores that hidden‑web crawling remains an emerging field with no universally applicable framework. It emphasizes that combining domain‑specific dictionaries with adaptive machine‑learning models for field semantics and value generation can simultaneously improve coverage and efficiency. The authors call for the development of a modular, extensible crawler platform that can be customized for diverse hidden‑web domains while respecting privacy regulations and evolving web security measures.
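One way to read the call for adaptive value generation is as a bandit problem over a domain dictionary: each candidate value is an arm, and its reward is the number of new documents a submission with that value returned. The sketch below uses epsilon-greedy selection, a deliberately simple stand-in for the reinforcement-learning strategies mentioned earlier, not a method from the paper; `AdaptiveValuePicker` and its parameters are hypothetical.

```python
from __future__ import annotations

import random


class AdaptiveValuePicker:
    """Epsilon-greedy choice among dictionary values for one form field."""

    def __init__(self, dictionary: list[str], epsilon: float = 0.1,
                 seed: int | None = None) -> None:
        self.values = list(dict.fromkeys(dictionary))   # de-duplicate, keep order
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.pulls = {v: 0 for v in self.values}
        self.mean_reward = {v: 0.0 for v in self.values}

    def choose(self) -> str:
        untried = [v for v in self.values if self.pulls[v] == 0]
        if untried:
            return untried[0]                           # try every value once first
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.values)         # explore
        return max(self.values, key=self.mean_reward.__getitem__)  # exploit

    def update(self, value: str, new_documents: int) -> None:
        """Fold one submission's reward into the value's running mean."""
        self.pulls[value] += 1
        n = self.pulls[value]
        self.mean_reward[value] += (new_documents - self.mean_reward[value]) / n
```

In a crawl loop, the reward would be computed as `len(set(returned_ids) - seen_ids)`, so values that keep returning already-seen records are gradually abandoned in favor of ones that still surface new content.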