An Analysis of Research in Software Engineering: Assessment and Trends

Glass published the first report on the assessment of systems and software engineering scholars and institutions two decades ago. The ongoing annual survey of publications in this field provides fund managers, young scholars, graduate students, and others with useful information for different purposes. However, the studies have been questioned by some critics because of a few shortcomings of the evaluation method, and it is very hard to reach a widely recognized consensus on such an assessment of scholars and institutions. This paper presents a module and an automated method for assessment and trend analysis in software engineering and compares the results with prior studies. To achieve a more reasonable evaluation result, we take into consideration more high-quality publications, the rank of each publication analyzed, and the different roles of the authors named on each paper. Based on 7,638 papers published in 36 publications from 2008 to 2013, the statistics of research subjects roughly follow power laws, implying the Matthew Effect. We then identify the Top 20 scholars, institutions, and countries or regions using a new evaluation rule based on the frequently used one. The top-ranked scholar is Mark Harman of University College London, UK; the top-ranked institution is the University of California, USA; and the top-ranked country is the USA. We also show two levels of trend changes, based on the EI classification system and on user-defined uncontrolled keywords, as well as noteworthy scholars and institutions in a specific research area. We believe that our results provide valuable insights for young scholars and graduate students who want to find potential collaborators and grasp the popular research topics in software engineering.

💡 Research Summary

The paper presents a novel, automated framework for assessing scholars, institutions, and countries in the field of software engineering, as well as for detecting research trends, and compares its results with those of earlier studies. The authors begin by reviewing the historical context: Glass’s pioneering 1990s assessment of software engineering researchers and the subsequent annual surveys that have become a key source of information for funding agencies, early‑career researchers, and graduate students. They note that many of these prior efforts have been criticized for relying solely on simple publication counts or citation metrics, for ignoring the quality of venues, and for treating all authors equally regardless of their contribution.

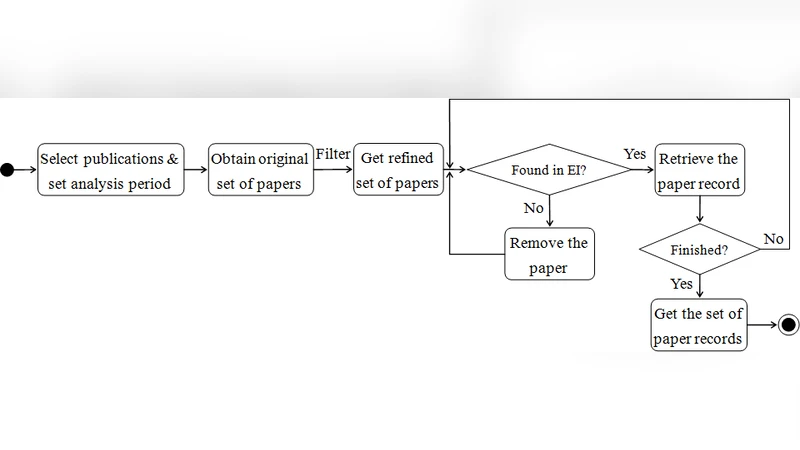

To address these shortcomings, the authors construct a data set comprising 7,638 papers published between 2008 and 2013 in 36 leading software‑engineering venues (journals and conference proceedings). Each venue is classified into one of three quality tiers (A, B, C) based on impact factor, acceptance rate, and community reputation, and each tier is assigned a weight (3, 2, and 1, respectively). The authors also differentiate author roles: first authors and corresponding authors receive a multiplier of 1.5, while other co‑authors receive a multiplier of 1.0. An author's score for a single paper is the product of the venue weight and that author's role weight, and these scores are summed across all papers associated with a given scholar, institution, or country.
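The scoring rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the record layout (`tier`, `authors`, `corresponding`), the function name `score_scholars`, and the sample data are all hypothetical, and the sketch assumes that an author who is both first and corresponding author receives the 1.5 multiplier only once.

```python
from collections import defaultdict

# Weights as stated in the summary above.
TIER_WEIGHT = {"A": 3.0, "B": 2.0, "C": 1.0}
LEAD_MULTIPLIER = 1.5   # first or corresponding author
OTHER_MULTIPLIER = 1.0  # any other co-author


def score_scholars(papers):
    """Sum (venue weight x author-role weight) over each scholar's papers."""
    totals = defaultdict(float)
    for paper in papers:
        venue_weight = TIER_WEIGHT[paper["tier"]]
        first_author = paper["authors"][0]
        # Assumption: if no corresponding author is recorded, use the first author.
        leads = {first_author, paper.get("corresponding", first_author)}
        for author in paper["authors"]:
            role = LEAD_MULTIPLIER if author in leads else OTHER_MULTIPLIER
            totals[author] += venue_weight * role
    return dict(totals)


# Hypothetical records, invented purely for illustration.
papers = [
    {"tier": "A", "authors": ["Ada", "Ben"], "corresponding": "Ben"},
    {"tier": "C", "authors": ["Ben", "Cy", "Ada"]},
]
scores = score_scholars(papers)
ranking = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
print(ranking)
```

Scores for institutions or countries could be accumulated the same way by keying `totals` on each author's affiliation instead of the author's name, assuming affiliation data is available for every author.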

Applying this weighted scoring scheme yields a ranking that diverges in several respects from traditional citation‑oriented lists. The top‑20 scholars, institutions, and countries are identified, with Mark Harman (University College London) emerging as the highest‑ranked individual, the University of California system as the leading institution, and the United States as the dominant country. Notably, some researchers from emerging economies climb the list because their work appears in high‑tier venues, while a few established European scholars drop in rank due to a lower proportion of high‑quality publications.

Statistical analysis of the subject distribution reveals a power‑law pattern: a small number of research topics account for a large share of publications, while many topics receive only modest attention. This “Matthew Effect” suggests that popular areas attract disproportionate resources, potentially creating barriers for newcomers and for work in less‑trendy domains.
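One common way to check for a pattern of this kind is to sort the counts by rank and fit a straight line on a log-log scale. The summary does not say how the authors tested for a power law, so the sketch below is only an assumed approach; the function name `fit_power_law` and the synthetic counts are hypothetical, and a log-log least-squares fit is a rough diagnostic rather than a rigorous statistical test.

```python
import math


def fit_power_law(counts):
    """Least-squares fit of log(count) = log(c) - alpha * log(rank).

    Returns (alpha, c). Needs at least two positive counts.
    """
    ordered = sorted((c for c in counts if c > 0), reverse=True)
    xs = [math.log(rank) for rank in range(1, len(ordered) + 1)]
    ys = [math.log(c) for c in ordered]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return -slope, math.exp(intercept)


# Synthetic counts that follow count = 1000 / rank**1.2 exactly (not real data).
counts = [1000 / rank ** 1.2 for rank in range(1, 51)]
alpha, c = fit_power_law(counts)
print(f"alpha={alpha:.3f}, c={c:.1f}")
```

On real publication counts the points will scatter around the fitted line, and a roughly linear log-log plot is consistent with a power law without proving one.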

For trend detection, the authors employ a two‑level approach. First, they map each paper to the Engineering Index (EI) classification hierarchy, allowing them to monitor shifts at both the macro (broad categories) and micro (sub‑categories) levels. Second, they supplement the classification‑based view with a user‑defined keyword analysis: a curated list of terms (e.g., “automated testing,” “static analysis,” “DevOps,” “machine‑learning‑based defect prediction”) is matched against titles and abstracts, and yearly frequencies are plotted. This dual strategy uncovers, for example, a sharp rise in publications on automated testing and static analysis after 2011, alongside a gradual decline in formal verification studies.
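The keyword level of this analysis amounts to counting, per year, how many papers mention each term. The sketch below assumes simple case-insensitive substring matching against the title and abstract; the record fields, the function name `yearly_keyword_counts`, and the sample papers are hypothetical, and the authors' actual matching rules are not given in the summary.

```python
from collections import Counter, defaultdict


def yearly_keyword_counts(papers, keywords):
    """Count, per year, the papers whose title or abstract mentions each keyword."""
    counts = defaultdict(Counter)
    for paper in papers:
        text = f"{paper['title']} {paper['abstract']}".lower()
        for keyword in keywords:
            # Naive substring match; a paper is counted at most once per keyword.
            if keyword.lower() in text:
                counts[keyword][paper["year"]] += 1
    return {kw: dict(sorted(by_year.items())) for kw, by_year in counts.items()}


# Hypothetical records, invented purely for illustration.
papers = [
    {"year": 2010, "title": "Static analysis for C", "abstract": "We study pointers."},
    {"year": 2012, "title": "Search-based tools", "abstract": "An automated testing approach."},
    {"year": 2012, "title": "Automated Testing at scale", "abstract": "Combines static analysis and fuzzing."},
]
trend = yearly_keyword_counts(papers, ["automated testing", "static analysis"])
print(trend)
```

Substring matching will miss spelling variants and synonyms, so a real analysis would likely need normalization or a richer term list. The resulting per-year counts can be plotted directly to show the rise or decline of a topic.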

A focused case study on the “automated testing” keyword demonstrates how the framework can pinpoint leading contributors within a niche area. The analysis highlights Carnegie Mellon University and the University of Oxford as the most active institutions in this sub‑field, and it lists several high‑impact scholars whose recent work drives the trend.

The discussion acknowledges several limitations. The data set excludes many non‑English venues and conference papers not indexed in the selected databases, which may bias results toward Western institutions. The assignment of tier weights and author‑role multipliers, while grounded in expert judgment, remains somewhat subjective. The authors propose future work to incorporate a broader range of publication types, to refine weight calibration using machine‑learning techniques, and to develop a real‑time dashboard for continuous trend monitoring.

In conclusion, the proposed assessment module offers a more nuanced and balanced evaluation of software‑engineering research output than traditional count‑based methods. By accounting for venue quality and author contribution, it produces rankings that better reflect scholarly impact. The accompanying trend analysis, combining EI classifications with flexible keyword mining, provides valuable insights for early‑career researchers seeking collaborators, for institutions planning strategic investments, and for policymakers aiming to understand the evolving landscape of software‑engineering research.
