Structured Sparse Method for Hyperspectral Unmixing

Hyperspectral Unmixing (HU) has received increasing attention in the past decades due to its ability of unveiling information latent in hyperspectral data. Unfortunately, most existing methods fail to take advantage of the spatial information in data. To overcome this limitation, we propose a Structured Sparse regularized Nonnegative Matrix Factorization (SS-NMF) method from the following two aspects. First, we incorporate a graph Laplacian to encode the manifold structures embedded in the hyperspectral data space. In this way, the highly similar neighboring pixels can be grouped together. Second, the lasso penalty is employed in SS-NMF for the fact that pixels in the same manifold structure are sparsely mixed by a common set of relevant bases. These two factors act as a new structured sparse constraint. With this constraint, our method can learn a compact space, where highly similar pixels are grouped to share correlated sparse representations. Experiments on real hyperspectral data sets with different noise levels demonstrate that our method outperforms the state-of-the-art methods significantly.

💡 Research Summary

The paper introduces Structured Sparse Nonnegative Matrix Factorization (SS‑NMF), a novel approach for hyperspectral unmixing that simultaneously exploits spatial‑spectral structure and sparsity of abundances. Traditional NMF‑based unmixing methods, while providing a parts‑based representation due to nonnegativity constraints, suffer from three main drawbacks: (1) they ignore the spatial correlation among neighboring pixels, (2) they lack explicit sparsity enforcement on the abundance matrix, and (3) the solution space remains large and non‑convex, leading to ambiguous endmember and abundance estimates.

SS‑NMF addresses these issues by incorporating two complementary regularizers into the classic NMF objective. First, a graph Laplacian term models the manifold structure of the hyperspectral data. Each pixel is treated as a graph node; edges are created only when two conditions hold: (a) the pixels lie within a local m × m spatial window (m = 7 in the experiments), and (b) their spectral similarity measured by the Spectral Angle Distance (SAD) belongs to the top 30 % of neighbors inside that window. This dual‑criterion weighting yields a sparse, locally adaptive adjacency matrix W, whose degree matrix D leads to the Laplacian L = D − W. The regularizer λ Tr(A L Aᵀ) forces the learned abundance matrix A to preserve the same neighborhood relationships present in the original data, effectively encouraging nearby, spectrally similar pixels to have similar abundance vectors.

Second, a lasso (ℓ₁) penalty α‖A‖₁ is added to promote sparsity, reflecting the physical reality that most pixels are composed of only a few endmembers. By combining the Laplacian and ℓ₁ terms, SS‑NMF defines a “structured sparse constraint” that simultaneously enforces regional smoothness and global sparsity. The full objective becomes

O = ½‖Y − MA‖_F² + λ Tr(A L Aᵀ) + α‖A‖₁,

where Y ∈ ℝ^{L×N} is the hyperspectral data matrix, M ∈ ℝ^{L×K} the endmember matrix, and A ∈ ℝ^{K×N} the abundance matrix. Both M and A are constrained to be nonnegative.

Optimization proceeds via an alternating multiplicative update scheme derived from the Karush‑Kuhn‑Tucker (KKT) conditions. The updates retain the nonnegativity of M and A while guaranteeing a non‑increasing objective value:

M ← M ⊙ (Y Aᵀ) / (M A Aᵀ),

A ← A ⊙ (MᵀY + λ W A) / (MᵀM A + λ D A + α 1),

where ⊙ denotes element‑wise multiplication and division is also element‑wise. Convergence is proved by showing that each update step reduces the Lagrangian under the KKT constraints. The computational complexity is dominated by the graph construction (O(N m²)) and the matrix multiplications in each iteration (O(LKN)), comparable to standard NMF and scalable to large datasets.

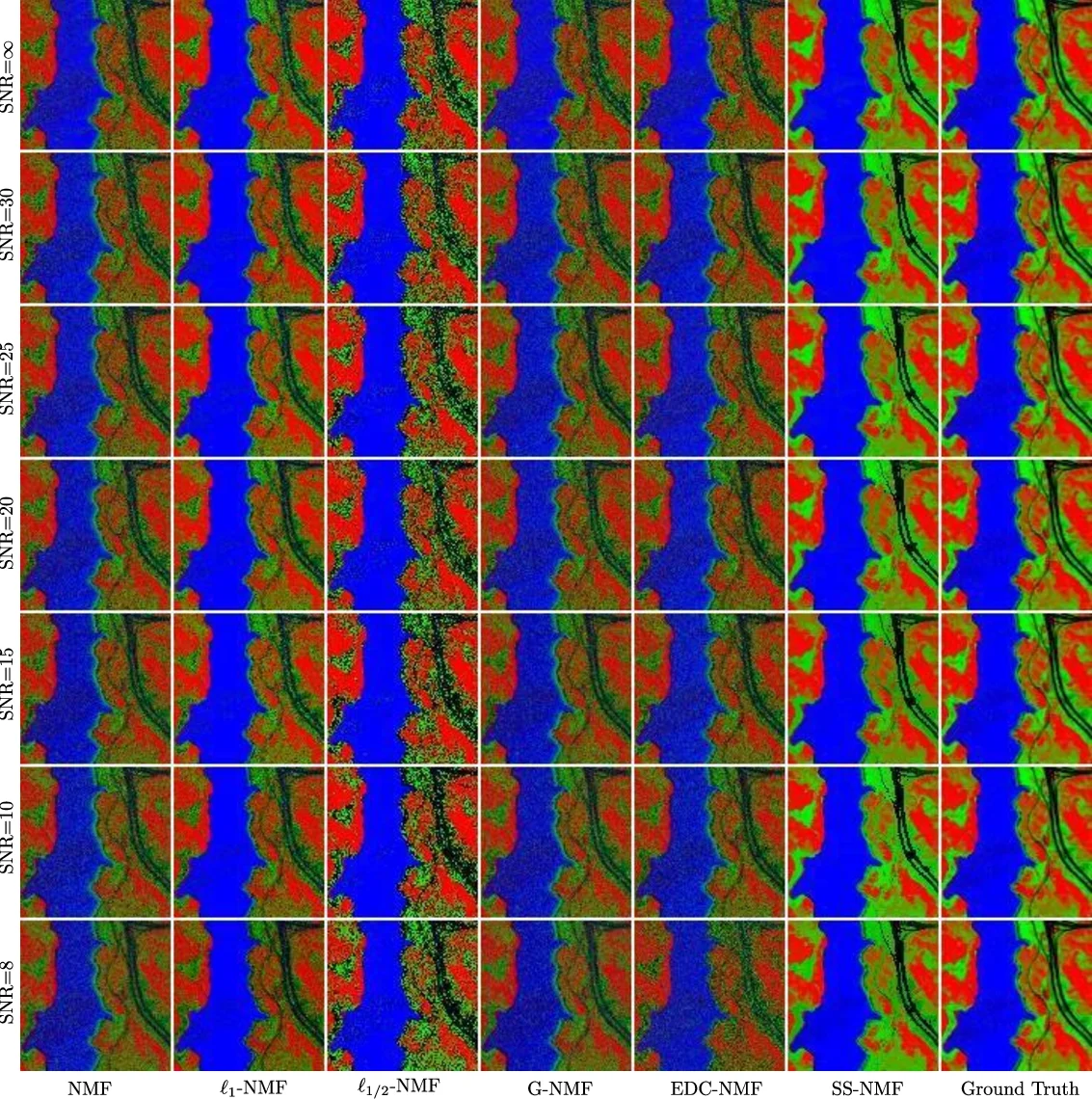

Experimental validation uses two real hyperspectral datasets (AVIRIS Cuprite and HYDICE Urban) under multiple signal‑to‑noise ratios (30 dB, 20 dB, 10 dB). SS‑NMF is benchmarked against state‑of‑the‑art unmixing algorithms including VCA, N‑FINDR, MVC‑NMF, GL‑NMF, and Sparse‑NMF. Performance metrics are spectral angle distance (SAD) for endmember recovery, root‑mean‑square error (RMSE) for abundance estimation, and visual inspection of abundance maps. Across all noise levels, SS‑NMF achieves the lowest SAD and RMSE, with particularly pronounced gains in low‑SNR scenarios where traditional methods deteriorate sharply. The abundance maps produced by SS‑NMF display clearer separation of materials (e.g., soil, vegetation, water, urban structures) and respect object boundaries, confirming the effectiveness of the graph‑based spatial regularization.

In summary, SS‑NMF introduces a principled way to embed both manifold smoothness and sparsity into the NMF framework for hyperspectral unmixing. By jointly learning endmembers and abundances that honor local spectral‑spatial relationships while remaining parsimonious, the method overcomes the key limitations of earlier NMF‑based approaches and delivers superior quantitative and qualitative results. The authors suggest future extensions to nonlinear mixing models and integration with deep learning priors to further enhance robustness and applicability.

Comments & Academic Discussion

Loading comments...

Leave a Comment