Sonic interaction with a virtual orchestra of factory machinery

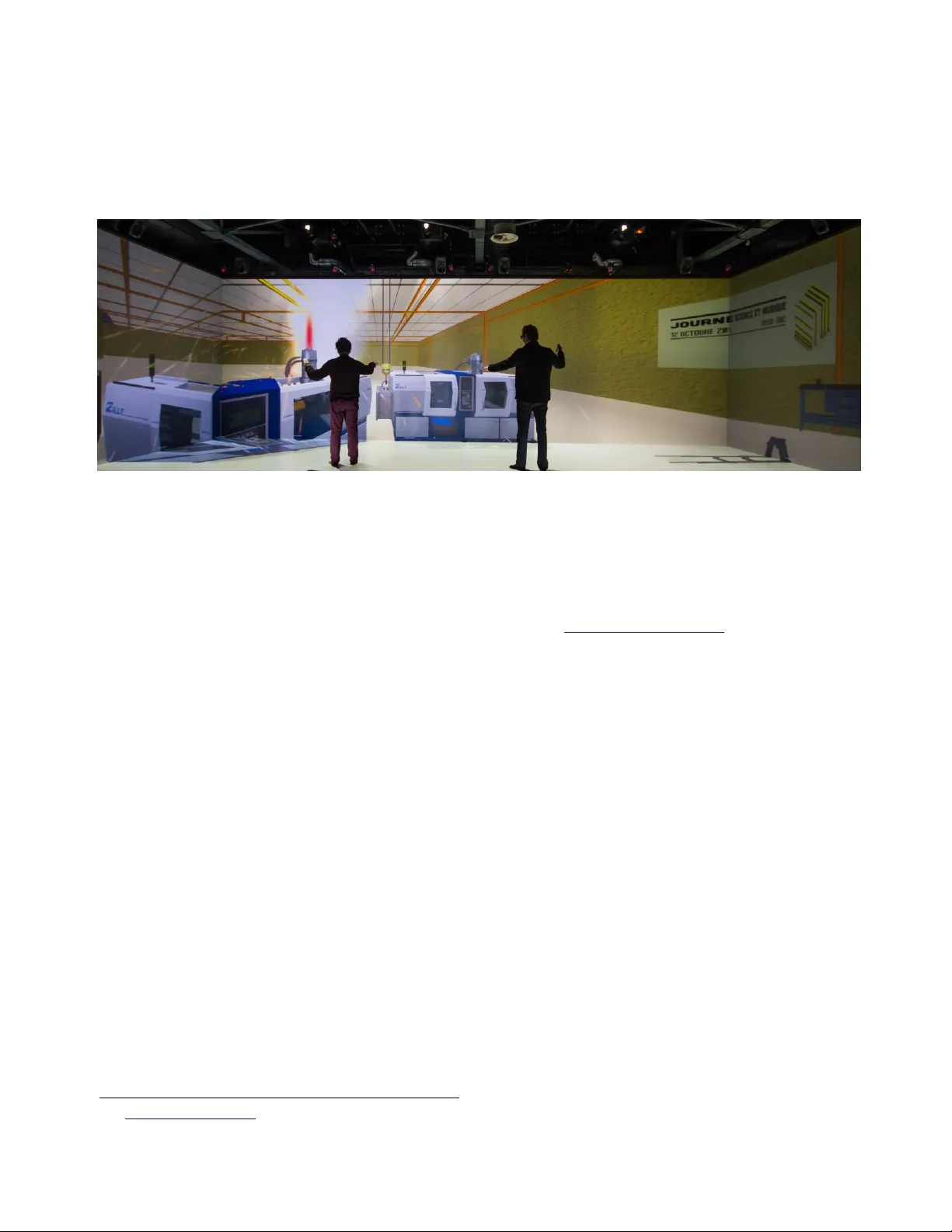

This paper presents an immersive application in which users receive sound and visual feedback on their interactions with a virtual environment. In this application, users play the part of conductors of an orchestra of factory machines: each of their actions on the interaction devices triggers a pair of visual and audio responses. Audio stimuli are spatialized around the listener. The application was exhibited during the 2013 Science and Music Day and was designed to be used in a large immersive system with head tracking, shutter glasses and a 10.2 loudspeaker configuration.

💡 Research Summary

The paper presents an immersive interactive installation in which participants act as conductors of a “virtual orchestra” composed of factory machinery. Each user action on a handheld controller simultaneously triggers a visual animation of a machine component and a corresponding sound. The audio is spatially rendered over a 10.2 loudspeaker configuration (ten full‑range loudspeakers plus two subwoofer channels) and is dynamically updated according to the listener’s head pose, which is tracked in real time by a head‑tracking system. The visual side is rendered in Unity3D, employing low‑poly models and particle effects to depict gear rotations, hydraulic pistons, and other industrial motions.
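As a rough illustration of how head tracking can feed a loudspeaker renderer, the sketch below recomputes, after each tracker update, the azimuth of a virtual source and of every loudspeaker as seen from the tracked head position. It assumes a horizontal 2D room frame in metres (x towards the front wall, y to the left); the function names, room size and speaker coordinates are hypothetical and are not the installation's actual layout. Head yaw is deliberately not subtracted: the loudspeakers are fixed in the room, so turning the head already changes the cues physically, whereas a headphone (binaural) renderer would also need the yaw.

```python
import math


def azimuth_from(head_xy, point_xy):
    """Azimuth in degrees, in [-180, 180], of point_xy seen from head_xy in the
    room frame: 0 = towards +x (front wall), positive = towards +y (left)."""
    dx = point_xy[0] - head_xy[0]
    dy = point_xy[1] - head_xy[1]
    return math.degrees(math.atan2(dy, dx))


def listener_centred_directions(head_xy, source_xy, speakers_xy):
    """Recompute source and loudspeaker azimuths from the tracked head position.

    Only the head position is used: with room-fixed loudspeakers, head rotation
    is rendered physically by the speakers themselves (assumption of this sketch).
    """
    source_az = azimuth_from(head_xy, source_xy)
    speaker_az = [azimuth_from(head_xy, s) for s in speakers_xy]
    return source_az, speaker_az


# Hypothetical 4 m x 4 m room with four corner speakers (illustrative only).
speakers = [(2.0, 2.0), (2.0, -2.0), (-2.0, -2.0), (-2.0, 2.0)]
machine = (2.0, 0.0)  # hypothetical virtual machine on the front wall

print(listener_centred_directions((0.0, 0.0), machine, speakers))
print(listener_centred_directions((1.0, 1.0), machine, speakers))
```

Walking from the room centre towards the front-left corner moves the machine from straight ahead to about 45° on the right, and the speaker directions shift accordingly, which is the information a panning stage needs to keep the source anchored in the room.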

The system architecture is divided into hardware and software layers. The hardware layer consists of the multi‑channel speaker array, active shutter glasses, a head‑tracking system, and interaction devices (joysticks, buttons). The software layer integrates a Unity‑based visual engine, an audio engine that combines FM synthesis with sampled recordings of real factory sounds, and an interaction mapping module that binds each controller input to a specific machine’s audio‑visual pair. Spatialization is achieved through a hybrid approach that mixes Vector Base Amplitude Panning (VBAP) with Ambisonics, allowing accurate placement of sound sources even with a limited speaker count. Latency is kept below 20 ms, ensuring that the auditory and visual feedback feels instantaneous to the user.
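VBAP is one half of the hybrid spatializer described above. As a hedged illustration, the sketch below implements plain pairwise 2D VBAP (Pulkki's formulation for a horizontal loudspeaker ring): for a source direction p it finds the adjacent loudspeaker pair whose unit vectors l1, l2 satisfy p = g1·l1 + g2·l2 with non‑negative gains, then normalizes the gains to constant power. It does not reproduce the authors' VBAP/Ambisonics hybrid; the function names and the ten‑speaker ring are hypothetical.

```python
import math


def unit(az_deg):
    """Horizontal unit vector; 0 deg = front, positive = counter-clockwise (left)."""
    a = math.radians(az_deg)
    return (math.cos(a), math.sin(a))


def vbap_2d(source_az, speaker_az, eps=1e-9):
    """Pairwise 2D VBAP: per-speaker gains for a source at source_az (degrees)
    over a horizontal ring of loudspeakers at speaker_az (degrees)."""
    # Visit speakers in angular order so consecutive entries are neighbours.
    order = sorted(range(len(speaker_az)), key=lambda i: speaker_az[i] % 360.0)
    p = unit(source_az)
    gains = [0.0] * len(speaker_az)
    for k in range(len(order)):
        i, j = order[k], order[(k + 1) % len(order)]
        l1, l2 = unit(speaker_az[i]), unit(speaker_az[j])
        det = l1[0] * l2[1] - l1[1] * l2[0]
        if abs(det) < eps:
            continue  # degenerate pair (collinear speakers)
        # Solve p = g1*l1 + g2*l2 with Cramer's rule (inverse of the 2x2 base).
        g1 = (p[0] * l2[1] - p[1] * l2[0]) / det
        g2 = (l1[0] * p[1] - l1[1] * p[0]) / det
        if g1 >= -eps and g2 >= -eps:
            # Clamp rounding noise, then normalize to constant power: g1^2 + g2^2 = 1.
            g1, g2 = max(g1, 0.0), max(g2, 0.0)
            norm = math.hypot(g1, g2)
            gains[i], gains[j] = g1 / norm, g2 / norm
            return gains
    raise ValueError("no loudspeaker pair encloses the source direction")


# Hypothetical ring of ten equally spaced loudspeakers (not the paper's layout).
ring = [k * 36.0 for k in range(10)]
print([round(g, 3) for g in vbap_2d(18.0, ring)])
```

A source midway between two adjacent speakers receives equal gains of about 0.707 on each; moving it towards one speaker shifts energy to that speaker while the sum of squared gains stays at 1.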

The installation was exhibited at the 2013 Science and Music Day, where approximately 150 visitors interacted with the system. Post‑event questionnaires and informal interviews indicated high levels of immersion, perceived control, and satisfaction with the spatial audio. Participants particularly praised the way the sound field followed their head movements, creating a convincing sense of being surrounded by a mechanized orchestra. However, the authors note several limitations: the physical size of the exhibition space constrained optimal speaker placement, the visual fidelity of complex machine models taxed the rendering pipeline, and the fixed speaker layout limited spatial resolution in certain directions.

In the discussion, the authors argue that the project demonstrates a novel convergence of industrial sound design and interactive art, expanding the vocabulary of virtual instrument interfaces beyond traditional musical timbres. They propose future work that includes higher‑resolution point‑cloud‑based spatial audio, GPU‑accelerated visual effects, and a networked architecture to support multiple users collaborating in the same virtual factory environment. Potential applications are suggested for education (teaching principles of acoustics and mechanics), museum installations, and therapeutic contexts where embodied interaction with sound can be beneficial. The paper concludes that the combination of real‑time head tracking, spatial audio, and synchronized visual feedback offers a powerful platform for immersive, interactive experiences that blur the line between performer and audience.