When Agile Is Not Good Enough: an initial attempt at understanding how to make the right decision

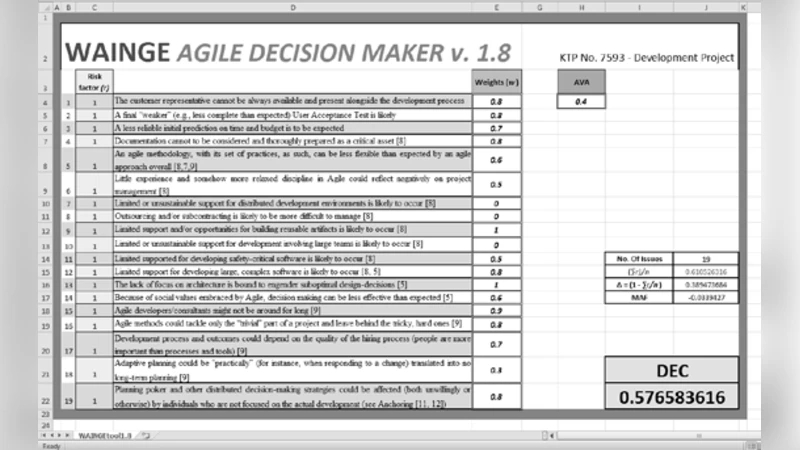

Particularly over the last ten years, Agile has attracted not only the praise of a broad range of enthusiastic software developers but also the criticism of others. In either case, the decision to adopt or reject Agile sometimes seems to rest more on a questionable understanding than on a critical, well-informed decision-making process. This paper discusses the dual nature of this criticism and classifies the arguments against Agile within a critical taxonomy of risk factors. It then proposes a decision model and tool based on this taxonomy to support software engineers and other stakeholders in deciding whether or not to use Agile. The tool, which is freely available online, comes with a set of guidelines; its purpose is to help the software development community contribute to further assessing the potential and the critical issues of Agile Methods.

💡 Research Summary

The paper addresses the growing polarization around Agile methodologies, arguing that many decisions to adopt or reject Agile are driven more by intuition and incomplete understanding than by systematic analysis. After outlining the rapid spread of Agile over the past decade, the authors identify two dominant strands of criticism: methodological limitations (e.g., short sprint cycles, iterative design, high team autonomy) that can amplify risk in certain contexts, such as regulated or large‑scale systems; and organizational‑cultural mismatches, in which hierarchical structures and traditional governance clash with Agile’s distributed decision‑making. To move beyond anecdotal debate, the authors construct a taxonomy of risk factors grouped into five categories:

1. Project characteristics (size, complexity, regulatory constraints, time pressure)
2. Team capabilities (experience with Agile, collaboration culture, technology‑stack fit)
3. Organizational structure (authority delegation, decision flow, leadership style)
4. Customer and market dynamics (requirement volatility, contract type, competitive pressure)
5. Tooling and process support (automation level, CI/CD pipelines, quality‑assurance mechanisms)

Each factor is paired with impact and likelihood scales, allowing practitioners to assign quantitative scores.
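The summary does not state how the impact and likelihood scales are combined into a score. A minimal sketch, assuming both are rated on a 1-to-5 scale and multiplied (the common risk-matrix convention), could represent the taxonomy as follows. The `Category`, `RiskFactor`, and `scores_by_category` names, the scale ranges, and the sample ratings are hypothetical and are not taken from the paper or its tool.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    """The five risk-factor categories named in the summary."""
    PROJECT = "Project characteristics"
    TEAM = "Team capabilities"
    ORGANIZATION = "Organizational structure"
    CUSTOMER_MARKET = "Customer and market dynamics"
    TOOLING = "Tooling and process support"


@dataclass(frozen=True)
class RiskFactor:
    name: str
    category: Category
    impact: int      # assumed scale: 1 (negligible) .. 5 (severe)
    likelihood: int  # assumed scale: 1 (rare) .. 5 (almost certain)

    def __post_init__(self) -> None:
        # Reject ratings outside the assumed 1..5 scales.
        for label, value in (("impact", self.impact), ("likelihood", self.likelihood)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be in 1..5, got {value}")

    @property
    def score(self) -> int:
        # Risk-matrix exposure (an assumption): impact x likelihood, range 1..25.
        return self.impact * self.likelihood


def scores_by_category(factors: list[RiskFactor]) -> dict[Category, int]:
    """Sum factor scores per category; categories without factors get 0."""
    totals = {cat: 0 for cat in Category}
    for f in factors:
        totals[f.category] += f.score
    return totals


if __name__ == "__main__":
    # Hypothetical ratings for one factor from each category.
    assessment = [
        RiskFactor("Regulatory constraints", Category.PROJECT, impact=5, likelihood=4),
        RiskFactor("Experience with Agile", Category.TEAM, impact=3, likelihood=2),
        RiskFactor("Authority delegation", Category.ORGANIZATION, impact=4, likelihood=3),
        RiskFactor("Contract type", Category.CUSTOMER_MARKET, impact=3, likelihood=4),
        RiskFactor("Automation level", Category.TOOLING, impact=2, likelihood=2),
    ]
    for cat, total in scores_by_category(assessment).items():
        print(f"{cat.value}: {total}")
```

Keeping impact and likelihood as separate fields, rather than storing only the product, preserves the granularity a reviewer would need to revisit individual ratings later.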

Building on this taxonomy, the paper proposes a decision model that aggregates the weighted scores from the taxonomy‑based checklist, compares the total against predefined thresholds, and outputs one of three recommendations: “Agile recommended,” “Conditional adoption,” or “Do not adopt.” For conditional cases, the model automatically suggests mitigation actions, such as hybrid approaches, targeted training, or pilot projects, tailored to the specific high‑risk factors identified. The authors have implemented the model as an open‑source, web‑based tool that guides users through the questionnaire, generates a PDF report, and optionally uploads anonymized results to a community repository for collective refinement. The tool’s design emphasizes accessibility for non‑experts while preserving enough granularity for experienced engineers to fine‑tune the weightings.
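The aggregation-and-threshold step can be sketched as below. This is an illustration under stated assumptions, not the authors' implementation: it assumes each factor already carries a score on a 1-to-25 scale plus a positive weight, normalizes the weighted mean to 0..1, and uses hypothetical thresholds (0.35 and 0.65), a hypothetical high-risk cutoff (15), and a simplified category-to-mitigation table built from the examples in the summary. The names `ScoredFactor`, `Recommendation`, `recommend`, and `MITIGATIONS` are invented for this sketch.

```python
from dataclasses import dataclass

MAX_SCORE = 25          # assumed: impact (1..5) x likelihood (1..5)
LOW_THRESHOLD = 0.35    # hypothetical: below this, recommend Agile
HIGH_THRESHOLD = 0.65   # hypothetical: at or above this, do not adopt
HIGH_RISK_SCORE = 15    # hypothetical: factor score treated as "high risk"

# Hypothetical category -> mitigation table, based on the summary's examples.
MITIGATIONS = {
    "Project characteristics": "consider a hybrid approach",
    "Team capabilities": "provide targeted Agile training",
    "Organizational structure": "start with a pilot project",
    "Customer and market dynamics": "start with a pilot project",
    "Tooling and process support": "improve automation before adopting",
}


@dataclass(frozen=True)
class ScoredFactor:
    name: str
    category: str   # one of the keys in MITIGATIONS
    score: int      # 1..MAX_SCORE
    weight: float   # relative importance, must be > 0


@dataclass(frozen=True)
class Recommendation:
    verdict: str
    risk_index: float             # weighted mean score normalized to 0..1
    mitigations: tuple[str, ...]  # only filled for conditional adoption


def recommend(factors: list[ScoredFactor]) -> Recommendation:
    """Aggregate weighted scores, compare with thresholds, and pick a verdict."""
    if not factors or any(f.weight <= 0 for f in factors):
        raise ValueError("need at least one factor, all with positive weight")
    total_weight = sum(f.weight for f in factors)
    weighted_sum = sum(f.weight * f.score for f in factors)
    risk_index = weighted_sum / (total_weight * MAX_SCORE)

    if risk_index < LOW_THRESHOLD:
        return Recommendation("Agile recommended", risk_index, ())
    if risk_index >= HIGH_THRESHOLD:
        return Recommendation("Do not adopt", risk_index, ())

    # Conditional adoption: suggest a mitigation for each high-risk factor.
    actions = tuple(
        f"{f.name}: {MITIGATIONS[f.category]}"
        for f in factors
        if f.score >= HIGH_RISK_SCORE
    )
    return Recommendation("Conditional adoption", risk_index, actions)


if __name__ == "__main__":
    result = recommend([
        ScoredFactor("Regulatory constraints", "Project characteristics", 20, 2.0),
        ScoredFactor("Experience with Agile", "Team capabilities", 6, 1.0),
        ScoredFactor("Authority delegation", "Organizational structure", 12, 1.0),
    ])
    print(result.verdict, round(result.risk_index, 2), result.mitigations)
```

With these sample inputs the weighted sum is 2·20 + 6 + 12 = 58 over a maximum of 4·25 = 100, giving an index of 0.58. That falls between the two thresholds, so the sketch returns "Conditional adoption" with one mitigation for the regulatory-constraints factor. Normalizing by the maximum possible score keeps the thresholds meaningful regardless of how many factors a user rates.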

The paper acknowledges several limitations. Scoring relies on subjective judgments, which may introduce bias. Validation so far is limited to a handful of small‑to‑medium projects, and the model’s scalability to large enterprise or heavily regulated environments remains untested. Planned future work includes extensive empirical studies across diverse domains, the integration of machine‑learning techniques for predictive risk estimation, and a continuous feedback loop that updates the taxonomy and weightings based on community contributions.

In summary, the authors present a structured, data‑driven framework that reframes Agile adoption as a risk‑assessment problem rather than a binary ideological choice. By providing a clear taxonomy, a weighted scoring model, and an openly available decision‑support tool, the work aims to foster more rational, evidence‑based decisions among software engineers, managers, and other stakeholders, ultimately contributing to a more mature decision‑making culture in software development.
