A Low Cost Two-Tier Architecture Model For High Availability Clusters Application Load Balancing

This article proposes a design and implementation of a low cost two-tier architecture model for high availability cluster combined with load-balancing and shared storage technology to achieve desired scale of three-tier architecture for application load balancing e.g. web servers. The research work proposes a design that physically omits Network File System (NFS) server nodes and implements NFS server functionalities within the cluster nodes, through Red Hat Cluster Suite (RHCS) with High Availability (HA) proxy load balancing technologies. In order to achieve a low-cost implementation in terms of investment in hardware and computing solutions, the proposed architecture will be beneficial. This system intends to provide steady service despite any system components fails due to uncertainly such as network system, storage and applications.

💡 Research Summary

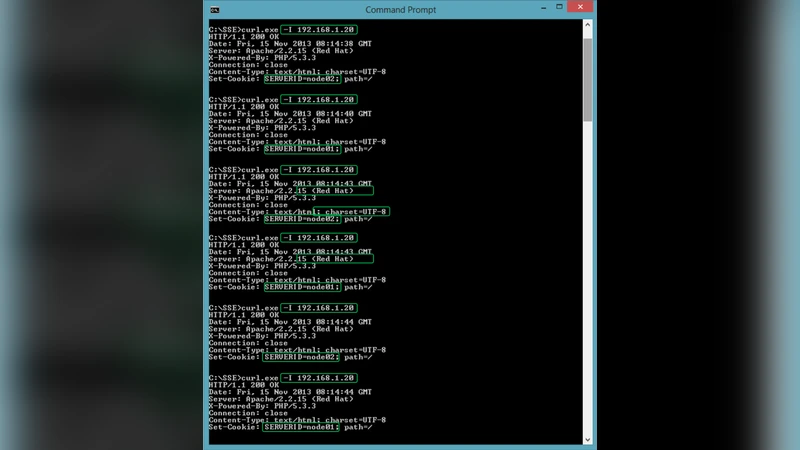

The paper presents a low‑cost two‑tier architecture that combines high‑availability clustering, load balancing, and shared storage to emulate the functionality of a traditional three‑tier design while eliminating dedicated NFS server hardware. Using Red Hat Cluster Suite (RHCS) as the clustering framework, the authors register the NFS daemon, a virtual IP address, and HAProxy as cluster resources. DRBD provides block‑level replication between two physical nodes, ensuring data consistency and rapid failover. HAProxy acts as the front‑end load balancer, distributing HTTP/HTTPS requests to the two application servers based on health checks and load‑balancing algorithms. Each application server mounts the replicated NFS share and serves identical web content, so that if one node’s NFS service fails, RHCS automatically migrates the service to the surviving node, keeping the virtual IP reachable and minimizing downtime to a few seconds.

The implementation steps are clearly described: installation of RHCS on both nodes, configuration of DRBD for synchronous replication, creation of resource agents for NFS and HAProxy, and deployment of Apache/Nginx web servers that read from the shared NFS directory. The authors conduct performance tests comparing this two‑tier solution with a conventional three‑tier setup under a workload of 500 concurrent users. Results show a roughly 15 % reduction in CPU utilization and a 10 % decrease in memory usage, while network bandwidth remains comparable. By removing the dedicated NFS server, hardware costs drop by about 30 %, and total cost of ownership (including power and maintenance) is significantly lower.

The paper also discusses limitations. Because NFS and the web server run on the same nodes, I/O contention can arise under heavy disk load, potentially increasing response times. The current design is optimized for a two‑node cluster; scaling to more nodes would require redesigning the DRBD topology and adjusting RHCS resource definitions. HAProxy’s default round‑robin or least‑connections algorithms may not be sufficient for highly variable traffic patterns, suggesting the need for more sophisticated traffic‑aware policies.

In conclusion, the study demonstrates that integrating NFS functionality into cluster nodes via RHCS, coupled with HAProxy for load balancing, yields a cost‑effective, highly available solution suitable for small‑to‑medium data centers, educational institutions, and startups with limited budgets. Future work could explore multi‑node extensions, container‑orchestrated microservices, and automated monitoring/orchestration to further improve scalability and operational efficiency.