An Experimental Study of Load Balancing of OpenNebula Open-Source Cloud Computing Platform

Cloud computing is becoming a viable solution for service-oriented computing, and several open-source cloud solutions are available to support it. Open-source software stacks offer a great deal of customizability without large licensing fees. As a result, open-source software is widely used for building clouds, and private clouds are increasingly built on open-source stacks. The open-source community has made numerous contributions related to private IaaS clouds. OpenNebula is one of the popular private cloud management platforms. However, little has been done in the existing literature to systematically evaluate the performance of this open-source cloud solution. Such an evaluation helps new and existing research, industry, and international projects decide whether to adopt OpenNebula for their work. The objective of this paper is to evaluate the load-balancing performance of the OpenNebula cloud management software. For the performance evaluation, OpenNebula is installed and configured as a prototype implementation and tested in the DIU Cloud Lab. Two sets of experiments are conducted to assess the load-balancing performance of the platform: (1) deleting and adding virtual machines (VMs) on the OpenNebula cloud platform; and (2) mapping physical hosts to VMs in the OpenNebula cloud platform.

💡 Research Summary

The paper presents an experimental evaluation of the load‑balancing capabilities of OpenNebula, an open‑source private cloud management platform. Recognizing the growing adoption of open‑source IaaS solutions and the relative scarcity of systematic performance studies on OpenNebula, the authors set out to quantify how well the platform distributes workloads under two representative scenarios.

A testbed was built in the DIU Cloud Lab consisting of four identical physical hosts, each equipped with an 8‑core CPU, 32 GB of RAM, and a 1 Gbps network interface, backed by a shared SSD RAID‑10 storage pool. OpenNebula version 5.12 was installed and managed through both the Sunstone web UI and the XML‑RPC API. Workloads were generated automatically using Python scripts that invoked the API to create and delete virtual machines (VMs). Performance metrics collected included CPU utilization, memory consumption, VM provisioning latency, network bandwidth usage, and overall system throughput. All measurements were stored in InfluxDB and visualized with Grafana for real‑time analysis.
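The paper's workload scripts are not reproduced here, so the following is only a minimal sketch of how a Python script might drive VM creation and deletion through the XML-RPC API. It assumes the `one.vm.allocate` and `one.vm.action` methods and the usual response layout (success flag first, then the VM ID or an error message). The helper names, the endpoint, the session string, and the template in the usage note are hypothetical.

```python
import time
import xmlrpc.client


def make_caller(endpoint):
    """Return call(method, *args) bound to an OpenNebula XML-RPC endpoint.

    The endpoint URL is an assumption supplied by the caller,
    for example "http://localhost:2633/RPC2".
    """
    server = xmlrpc.client.ServerProxy(endpoint)
    # ServerProxy resolves dotted names such as "one.vm.allocate" via getattr.
    return lambda method, *args: getattr(server, method)(*args)


def timed_allocate(call, session, template):
    """Submit one VM and return (vm_id, seconds spent in the API call)."""
    start = time.perf_counter()
    # Third argument False: do not create the VM on hold.
    response = call("one.vm.allocate", session, template, False)
    elapsed = time.perf_counter() - start
    ok, payload = response[0], response[1]
    if not ok:
        raise RuntimeError(f"allocate failed: {payload}")
    return payload, elapsed


def terminate(call, session, vm_id):
    """Ask OpenNebula to terminate a VM and return its ID."""
    response = call("one.vm.action", session, "terminate", vm_id)
    if not response[0]:
        raise RuntimeError(f"terminate failed: {response[1]}")
    return response[1]
```

With a reachable front end, usage might look like `call = make_caller("http://localhost:2633/RPC2")` followed by `timed_allocate(call, "user:password", 'NAME = "lb-test"\nCPU = 1\nMEMORY = 512')`. Note that the elapsed time here covers only the API round trip. Measuring full provisioning latency would also require polling the VM state until it is running, which this sketch leaves out.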

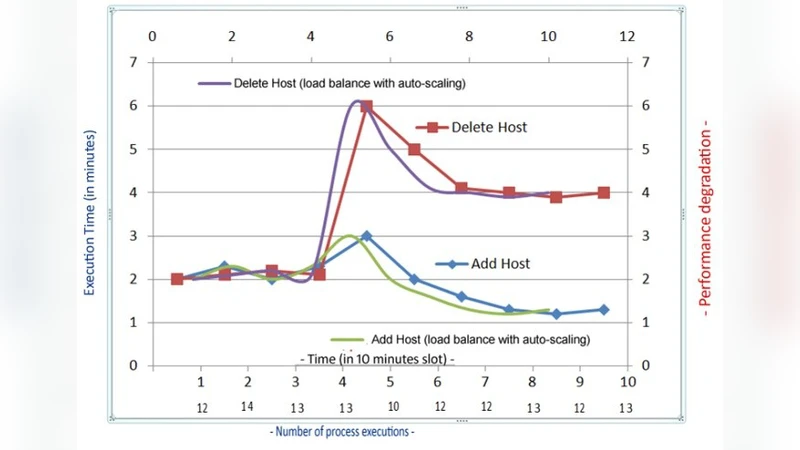

The first experiment examined the behavior of the built‑in scheduler when VMs were added and removed dynamically. The authors incrementally launched between 10 and 200 VMs, measuring queue length and provisioning delay. For up to 50 VMs the average provisioning time remained around 1.2 seconds, indicating stable operation. However, once the number of concurrent VM requests exceeded 100, the delay rose sharply to 3.5 seconds, and at 150+ VMs the scheduler began to exhibit overload symptoms, including occasional allocation failures. This result suggests that the default scheduler is not optimized for high‑volume, simultaneous provisioning events.
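One way to turn such measurements into a scalability threshold is sketched below. This is an illustrative analysis, not the authors' procedure: the function names and the "twice the baseline" rule are assumptions, and any latency samples passed in would come from the reader's own measurements.

```python
from statistics import mean


def summarize(samples):
    """Map each batch size to its mean provisioning latency in seconds.

    samples: {concurrent_vms: [latency_seconds, ...]}
    """
    return {size: mean(values) for size, values in sorted(samples.items())}


def first_degraded(summary, factor=2.0):
    """Return the smallest batch size whose mean latency exceeds
    factor times the mean of the smallest batch, or None if none does.

    The factor of 2.0 is an arbitrary illustrative threshold.
    """
    sizes = sorted(summary)
    baseline = summary[sizes[0]]
    for size in sizes:
        if summary[size] > factor * baseline:
            return size
    return None
```

For example, feeding in samples whose means sit near 1.2 seconds at small batch sizes and near 3.5 seconds at a larger one would make `first_degraded` report that larger batch size as the point where the scheduler starts to fall behind.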

The second experiment focused on host‑to‑VM mapping policies. Three strategies were compared: (1) Round‑Robin (RR), which assigns VMs to hosts in a simple cyclic order; (2) Least‑Load, which selects the host with the lowest current CPU load; and (3) Resource‑Weighted, which computes a composite score based on both CPU and memory usage. Under identical workload conditions, the Least‑Load policy reduced average CPU load across the cluster by roughly 12 % compared with RR, but it increased memory usage variance by about 8 %. The Resource‑Weighted approach achieved the most balanced distribution of both CPU and memory, minimizing overall load variance, yet it incurred a 15 % increase in scheduling computation time. RR offered the lowest scheduling overhead but produced the greatest load imbalance.
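The three strategies can be sketched in a few lines of Python. This is a simplified illustration of the selection logic only, not OpenNebula's scheduler code: the `Host` class, the equal CPU and memory weights, and the use of population variance as the imbalance measure are all assumptions.

```python
from dataclasses import dataclass
from itertools import count
from statistics import pvariance


@dataclass
class Host:
    name: str
    cpu: float  # CPU utilization as a fraction between 0 and 1
    mem: float  # memory utilization as a fraction between 0 and 1


def round_robin(hosts, counter):
    """Pick hosts in cyclic order, ignoring their current load."""
    return hosts[next(counter) % len(hosts)]


def least_load(hosts):
    """Pick the host with the lowest current CPU utilization."""
    return min(hosts, key=lambda h: h.cpu)


def resource_weighted(hosts, w_cpu=0.5, w_mem=0.5):
    """Pick the host with the lowest weighted CPU-plus-memory score."""
    return min(hosts, key=lambda h: w_cpu * h.cpu + w_mem * h.mem)


def load_variance(hosts, attr):
    """Population variance of one utilization attribute across hosts."""
    return pvariance([getattr(h, attr) for h in hosts])


hosts = [
    Host("h1", cpu=0.50, mem=0.30),
    Host("h2", cpu=0.30, mem=0.80),
    Host("h3", cpu=0.40, mem=0.20),
]
rr_counter = count()  # shared counter that remembers the round-robin position
```

With these hypothetical hosts, `least_load(hosts)` selects `h2` because its CPU is idle, even though its memory is already heavily used, while `resource_weighted(hosts)` selects `h3`. That difference is consistent with the reported pattern in which Least-Load lowers CPU load but widens memory variance. The weighted policy also evaluates a score per host on every decision, which is one plausible source of its extra scheduling time.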

From these findings, the authors conclude that OpenNebula delivers satisfactory load‑balancing for small‑ to medium‑scale deployments (up to roughly 100 concurrent VMs) but requires careful tuning of mapping policies for larger, more demanding environments. They recommend that operators consider extending the default scheduler with custom plugins or integrating external orchestration frameworks (e.g., Kubernetes, OpenStack) to handle high‑throughput scenarios.

The study also acknowledges several limitations. Network bandwidth in the testbed was limited to 1 Gbps, which is lower than typical production data‑center interconnects, and storage I/O performance was not isolated as a separate variable. Consequently, the full spectrum of potential bottlenecks was not captured. As future work, the authors propose incorporating multi‑tenant workloads, detailed storage and network stress testing, and a comparative analysis of third‑party scheduler extensions.

Overall, the paper contributes valuable empirical data for organizations evaluating OpenNebula as a private cloud solution, offering guidance on expected performance characteristics, scalability thresholds, and practical considerations for achieving efficient load distribution.