Smartphone sensing platform for emergency management

The increasingly sophisticated sensors supported by modern smartphones open up novel research opportunities, such as mobile phone sensing. One of the most challenging of these research areas is context awareness and activity recognition. The SmartRescue project takes advantage of smartphone sensing, processing and communication capabilities to monitor hazards and track people in a disaster. The goal is to help crisis managers and members of the public with early hazard detection and prediction, and with devising risk-minimizing evacuation plans when disaster strikes. In this paper we suggest a novel smartphone-based communication framework. It uses specific machine learning techniques that intelligently process sensor readings into useful information for the crisis responders. Core to the framework is a content-based publish-subscribe mechanism that allows flexible sharing of sensor data and computation results. We also evaluate a preliminary implementation of the platform, involving a smartphone app that reads and shares mobile phone sensor data for activity recognition.

💡 Research Summary

The paper presents “SmartRescue,” a smartphone‑based sensing platform designed to support emergency management by leveraging the rich set of sensors that modern mobile devices embed. The authors begin by outlining the shortcomings of traditional disaster‑monitoring infrastructures, which rely on fixed‑location sensors, costly deployments, and centralized processing that often cannot keep pace with rapidly evolving crisis scenarios. In contrast, smartphones are ubiquitous, equipped with accelerometers, gyroscopes, magnetometers, GPS, microphones, and more, making them ideal candidates for a distributed, opportunistic sensing network.

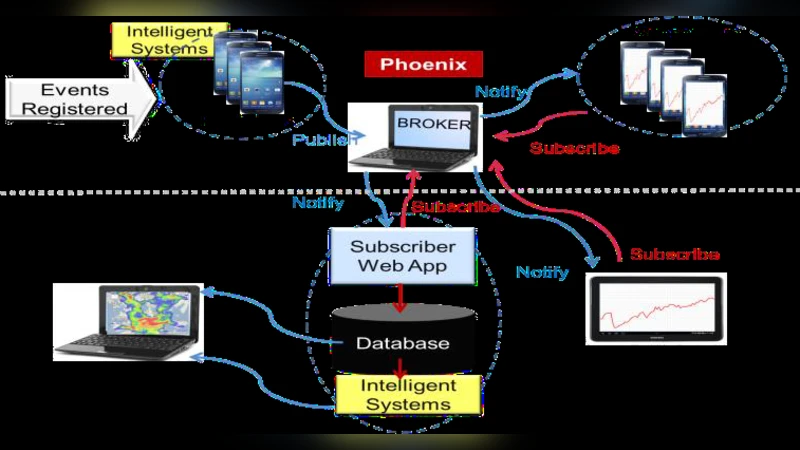

The core contribution of the work is a three‑layer architecture that integrates (1) low‑power multi‑modal data acquisition, (2) on‑device machine‑learning inference for context‑aware activity and hazard recognition, and (3) a content‑based publish‑subscribe (pub‑sub) communication layer that disseminates only the most relevant information to crisis responders.

In the acquisition layer, the Android application samples sensor streams at adaptive rates, balancing temporal resolution against battery consumption. Raw signals are segmented into overlapping windows (typically 2‑second length with 50 % overlap) and transformed into a feature set that includes statistical moments, frequency‑domain coefficients (via FFT), and sensor‑fusion descriptors.
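The windowing and feature step described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names, the 50 Hz sampling rate, the synthetic sine input, and the choice of three features (mean, standard deviation, dominant frequency) are all assumptions made for illustration. A naive DFT stands in for the FFT so the example needs only the standard library.

```python
import cmath
import math
import statistics


def sliding_windows(samples, rate_hz, window_s=2.0, overlap=0.5):
    """Split a 1-D signal into fixed-length windows overlapping by `overlap`."""
    size = int(round(window_s * rate_hz))
    step = max(1, int(round(size * (1.0 - overlap))))
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]


def dft_magnitudes(window):
    """Magnitudes of the non-negative frequency bins (naive O(n^2) DFT)."""
    n = len(window)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(window)))
        for k in range(n // 2 + 1)
    ]


def extract_features(window, rate_hz):
    """Hypothetical feature set: two statistical moments plus the dominant frequency."""
    mags = dft_magnitudes(window)
    # Skip bin 0 (the DC component) when looking for the strongest frequency.
    peak_bin = max(range(1, len(mags)), key=lambda k: mags[k])
    return {
        "mean": statistics.fmean(window),
        "std": statistics.pstdev(window),
        "dominant_hz": peak_bin * rate_hz / len(window),
    }


# Synthetic stand-in for one accelerometer axis: a 2 Hz sine sampled at 50 Hz for 5 s.
RATE_HZ = 50
signal = [math.sin(2 * math.pi * 2.0 * t / RATE_HZ) for t in range(5 * RATE_HZ)]

windows = sliding_windows(signal, RATE_HZ)          # 2 s windows, 50 % overlap
features = [extract_features(w, RATE_HZ) for w in windows]
```

With a 50 % overlap each sample (apart from those at the edges) contributes to two windows, which smooths transitions between activities at the cost of roughly doubling the number of feature vectors to classify.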

For inference, the authors evaluate several lightweight classifiers—Random Forest, Support Vector Machine, and a compact Convolutional Neural Network—trained on a labeled dataset comprising everyday activities (walking, running, stair navigation) and simulated disaster events (smoke detection, seismic vibration). Random Forest emerged as the best trade‑off, achieving over 92 % overall accuracy and 95 % precision for smoke‑related patterns while maintaining sub‑100 ms inference latency on typical mid‑range devices.
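Overall accuracy and per-class precision measure different things, which is why the summary reports both. The sketch below shows how each is computed from predicted and true labels. The label lists are toy values invented for illustration and are not data from the paper; the function names are likewise hypothetical.

```python
def accuracy(y_true, y_pred):
    """Fraction of all samples whose predicted label equals the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision(y_true, y_pred, label):
    """Of the samples predicted as `label`, the fraction that truly are `label`."""
    truths_for_predicted = [t for t, p in zip(y_true, y_pred) if p == label]
    if not truths_for_predicted:
        return 0.0  # nothing was predicted as this label
    return sum(t == label for t in truths_for_predicted) / len(truths_for_predicted)


# Toy labels, invented for illustration only (not results from the paper).
y_true = ["walk", "walk", "run", "smoke", "smoke", "stairs", "run", "smoke"]
y_pred = ["walk", "run", "run", "smoke", "smoke", "stairs", "run", "walk"]

overall_accuracy = accuracy(y_true, y_pred)
smoke_precision = precision(y_true, y_pred, "smoke")
run_precision = precision(y_true, y_pred, "run")
```

In this toy case every "smoke" prediction is correct, so smoke precision is perfect even though one real smoke event was missed. Precision alone therefore says nothing about missed hazards, which recall would capture.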

The communication layer departs from conventional topic‑based pub‑sub systems (e.g., MQTT topics) and instead routes messages based on their semantic content. Publishers attach a JSON payload describing the detected condition (e.g., “fire‑smoke, floor 3, 12:05”) and a priority tag. Subscribers register interest filters that match specific content patterns, allowing crisis managers to receive only fire‑related alerts while ignoring unrelated motion data. This content‑driven approach reduces network traffic, mitigates congestion in bandwidth‑constrained disaster zones, and ensures that high‑priority alerts are delivered with minimal delay.
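The difference from topic-based routing can be shown with a small in-memory sketch in which subscribers register predicates over the message content rather than topic names. This is an illustrative assumption, not the paper's broker: the `ContentBroker` class, the payload field names (`event`, `floor`, `time`, `priority`), and the numeric priority scale are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Message = Dict[str, Any]
Predicate = Callable[[Message], bool]
Callback = Callable[[Message], None]


@dataclass
class ContentBroker:
    """Toy content-based broker: routes on message fields, not on topic names."""
    subscriptions: List[Tuple[Predicate, Callback]] = field(default_factory=list)

    def subscribe(self, predicate: Predicate, callback: Callback) -> None:
        self.subscriptions.append((predicate, callback))

    def publish(self, message: Message) -> int:
        """Deliver to every subscriber whose filter matches; return the match count."""
        delivered = 0
        for predicate, callback in self.subscriptions:
            if predicate(message):
                callback(message)
                delivered += 1
        return delivered


broker = ContentBroker()
fire_inbox: List[Message] = []

# A crisis manager interested only in high-priority fire/smoke alerts.
broker.subscribe(
    lambda m: m.get("event") == "fire-smoke" and m.get("priority", 0) >= 2,
    fire_inbox.append,
)

fire_matches = broker.publish(
    {"event": "fire-smoke", "floor": 3, "time": "12:05", "priority": 3}
)
motion_matches = broker.publish({"event": "walking", "priority": 0})
```

Because filtering happens at the broker, the walking report never reaches the fire subscriber, which is the traffic reduction the summary attributes to content-driven routing.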

A prototype was built using Android Studio (Java/Kotlin) and an Eclipse Mosquitto broker. The system was tested with 30 volunteers who performed both routine motions and controlled hazard simulations. Results showed an average end‑to‑end latency of 350 ms (including sensor capture, inference, and MQTT transmission) and a packet loss rate below 1.2 % under both Wi‑Fi and LTE conditions. Battery impact was modest, with the full sensing‑inference‑communication loop consuming roughly 5 % of the device’s charge per hour, confirming feasibility for extended field deployments.

The authors discuss several limitations. Sensor heterogeneity across phone models introduces calibration drift, which can degrade classification performance. Scaling the pub‑sub broker to support thousands of simultaneous devices may require hierarchical brokers or edge‑computing gateways. Privacy concerns arise because raw sensor data (especially audio) could be sensitive; the current design transmits only derived features and classification results, but further safeguards are needed.

Future work is outlined along three axes: (1) adopting federated learning to train and update models locally on devices, thereby preserving user privacy while continuously improving detection accuracy; (2) integrating edge‑computing nodes (e.g., portable Raspberry Pi units) to aggregate and pre‑process data before forwarding to central command centers; and (3) employing blockchain‑based identity and integrity mechanisms to authenticate publishers and guarantee tamper‑evident alert streams.

In conclusion, the SmartRescue platform demonstrates that consumer smartphones can serve as low‑cost, scalable, and intelligent nodes in a disaster‑response sensor network. The combination of on‑device machine learning and content‑aware pub‑sub messaging yields timely, actionable information for emergency managers while respecting resource constraints typical of crisis environments. With further enhancements in scalability, security, and cross‑device calibration, the approach holds promise for real‑world integration into municipal and humanitarian emergency‑response frameworks.
