Semi-Separable Hamiltonian Monte Carlo for Inference in Bayesian Hierarchical Models

Sampling from hierarchical Bayesian models is often difficult for MCMC methods, because of the strong correlations between the model parameters and the hyperparameters. Recent Riemannian manifold Hamiltonian Monte Carlo (RMHMC) methods have significant potential advantages in this setting, but are computationally expensive. We introduce a new RMHMC method, which we call semi-separable Hamiltonian Monte Carlo, which uses a specially designed mass matrix that allows the joint Hamiltonian over model parameters and hyperparameters to decompose into two simpler Hamiltonians. This structure is exploited by a new integrator which we call the alternating blockwise leapfrog algorithm. The resulting method can mix faster than simpler Gibbs sampling while being simpler and more efficient than previous instances of RMHMC.

💡 Research Summary

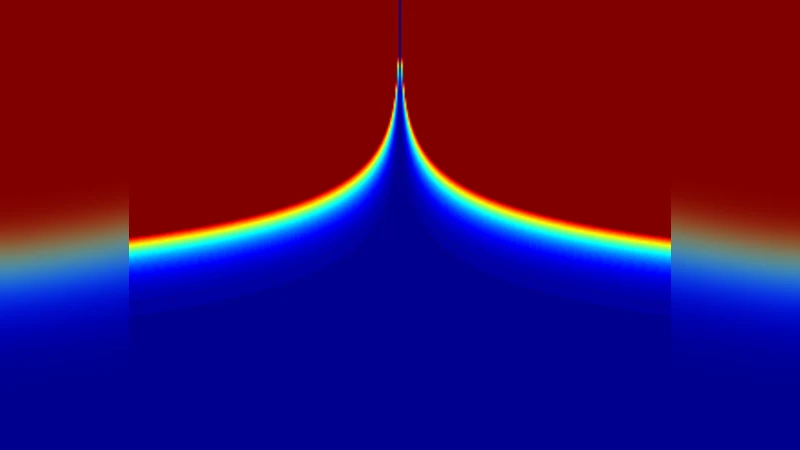

The paper addresses a long‑standing difficulty in Bayesian hierarchical models: the strong posterior dependence between group‑specific parameters (θ) and hyper‑parameters (φ). Standard Gibbs samplers and even vanilla Hamiltonian Monte Carlo (HMC) struggle because the posterior often exhibits a “funnel” geometry, where small changes in φ induce large changes in the conditional distribution of θ. Riemannian Manifold HMC (RMHMC) mitigates this by employing a position‑dependent mass matrix (the Fisher information metric), but the required O(d³) matrix operations (with d = dim(θ)+dim(φ)) make it impractical for realistic models.

The authors propose Semi‑Separable Hamiltonian Monte Carlo (SSHMC), a novel RMHMC variant that exploits a specially structured mass matrix. They restrict the metric to a block‑diagonal form

$$G(\theta,\phi) = \begin{pmatrix} G_\theta(\phi, x) & 0 \\ 0 & G_\phi(\theta) \end{pmatrix},$$

where G_θ depends only on φ (and possibly the data x) and G_φ depends only on θ. This “semi‑separability” preserves the essential non‑separability of the joint Hamiltonian while allowing the overall system to be decomposed into two simpler, fully separable Hamiltonians:

- H₁(θ,r_θ; φ,r_φ) = U₁(θ|φ,r_φ) + K₁(r_θ|φ)

- H₂(φ,r_φ; θ,r_θ) = U₂(φ|θ,r_θ) + K₂(r_φ|θ)

Each potential contains an “auxiliary potential” term that is the other subsystem's kinetic energy: U₁ includes A(r_φ|θ) = K₂, and U₂ includes A(r_θ|φ) = K₁. Each of these terms therefore acts as kinetic energy in one subsystem and as potential energy in the other. This coupling lets each subsystem exchange energy with the other, which improves exploration of the hyper‑parameter space.
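Writing the pieces out makes the decomposition concrete. The block below is our reconstruction, assuming the usual RMHMC Gaussian kinetic energy, U(θ,φ) = −log p(θ,φ | x), and dropping additive constants:

$$
\begin{aligned}
A(r_\theta \mid \phi) &= \tfrac12\, r_\theta^\top G_\theta(\phi,x)^{-1} r_\theta, \qquad
A(r_\phi \mid \theta) = \tfrac12\, r_\phi^\top G_\phi(\theta)^{-1} r_\phi,\\
H &= U(\theta,\phi) + \tfrac12\log|G_\theta(\phi,x)| + \tfrac12\log|G_\phi(\theta)| + A(r_\theta\mid\phi) + A(r_\phi\mid\theta),\\
H_1 &= \underbrace{U(\theta,\phi) + A(r_\phi\mid\theta) + \tfrac12\log|G_\phi(\theta)|}_{U_1(\theta\mid\phi,\,r_\phi)} + \underbrace{A(r_\theta\mid\phi)}_{K_1(r_\theta\mid\phi)},\\
H_2 &= \underbrace{U(\theta,\phi) + A(r_\theta\mid\phi) + \tfrac12\log|G_\theta(\phi,x)|}_{U_2(\phi\mid\theta,\,r_\theta)} + \underbrace{A(r_\phi\mid\theta)}_{K_2(r_\phi\mid\theta)}.
\end{aligned}
$$

Here H − H₁ = ½ log|G_θ(φ,x)| depends only on φ, and H − H₂ = ½ log|G_φ(θ)| depends only on θ. Each difference is therefore constant while the corresponding sub‑Hamiltonian is simulated with the other block frozen, so an exact H₁ or H₂ flow also conserves H.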

The computational engine is the Alternating Blockwise Leapfrog Algorithm (ABLA). Starting from momenta drawn as r_θ∼N(0,G_θ(φ,x)) and r_φ∼N(0,G_φ(θ)), so that each kinetic energy ½ rᵀG⁻¹r is the negative log‑density of its momentum, the algorithm performs L iterations with step size ε of:

- A leapfrog step of size ε/2 on H₁, updating (θ, r_θ) with (φ, r_φ) held fixed.

- A leapfrog step of size ε on H₂, updating (φ, r_φ) with (θ, r_θ) held fixed.

- Another leapfrog step of size ε/2 on H₁.
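A minimal NumPy sketch of one SSHMC transition follows. It assumes Neal's funnel as the target (φ ∼ N(0, 3²), θᵢ | φ ∼ N(0, e^φ) for i = 1,…,n) and the hypothetical metric choices G_θ(φ) = e^{−φ}I and a constant G_φ = 1/9 + n/2. These are our assumptions, not the paper's settings, and all function names (`phi_metric`, `hamiltonian`, `leapfrog_h1`, `leapfrog_h2`, `abla`, `sshmc`) are ours for illustration. Each sub‑step is an ordinary leapfrog step on one block while the other block is frozen. The auxiliary potential A(r_θ|φ) = ½ e^φ‖r_θ‖² appears in the gradient that drives φ.

```python
import numpy as np

SIGMA_PHI = 3.0  # assumed prior scale: phi ~ N(0, SIGMA_PHI^2)


def phi_metric(n):
    """Hypothetical constant G_phi: prior precision plus Fisher information n/2 of phi."""
    return 1.0 / SIGMA_PHI**2 + n / 2.0


def hamiltonian(theta, r_theta, phi, r_phi):
    """Full semi-separable Hamiltonian H, up to an additive constant."""
    n = theta.size
    c = phi_metric(n)
    # U(theta, phi) = -log p(theta, phi) for the funnel.
    u = phi**2 / (2 * SIGMA_PHI**2) + 0.5 * n * phi + 0.5 * np.exp(-phi) * (theta @ theta)
    # 0.5*log|G_theta(phi)| + 0.5*log|G_phi| with G_theta(phi) = exp(-phi) I_n.
    half_logdets = -0.5 * n * phi + 0.5 * np.log(c)
    a_theta = 0.5 * np.exp(phi) * (r_theta @ r_theta)  # A(r_theta | phi)
    a_phi = 0.5 * r_phi**2 / c                        # A(r_phi | theta)
    return u + half_logdets + a_theta + a_phi


def leapfrog_h1(theta, r_theta, phi, h):
    """Leapfrog step of size h on H1; phi and r_phi stay fixed.

    dU1/dtheta = exp(-phi) * theta (A(r_phi | theta) is constant in theta
    for this constant G_phi), and dK1/dr_theta = exp(phi) * r_theta.
    """
    r_theta = r_theta - 0.5 * h * np.exp(-phi) * theta
    theta = theta + h * np.exp(phi) * r_theta
    r_theta = r_theta - 0.5 * h * np.exp(-phi) * theta
    return theta, r_theta


def leapfrog_h2(phi, r_phi, theta, r_theta, h):
    """Leapfrog step of size h on H2; theta and r_theta stay fixed."""
    c = phi_metric(theta.size)
    tt, rr = theta @ theta, r_theta @ r_theta

    def grad_u2(p):
        # U2 = U + A(r_theta | phi) + 0.5*log|G_theta(phi)|; the n*phi/2 terms cancel,
        # and the auxiliary potential contributes 0.5 * exp(phi) * ||r_theta||^2.
        return p / SIGMA_PHI**2 - 0.5 * np.exp(-p) * tt + 0.5 * np.exp(p) * rr

    r_phi = r_phi - 0.5 * h * grad_u2(phi)
    phi = phi + h * r_phi / c
    r_phi = r_phi - 0.5 * h * grad_u2(phi)
    return phi, r_phi


def abla(theta, r_theta, phi, r_phi, eps, n_steps):
    """Alternating blockwise leapfrog: H1 for eps/2, H2 for eps, H1 for eps/2."""
    for _ in range(n_steps):
        theta, r_theta = leapfrog_h1(theta, r_theta, phi, eps / 2)
        phi, r_phi = leapfrog_h2(phi, r_phi, theta, r_theta, eps)
        theta, r_theta = leapfrog_h1(theta, r_theta, phi, eps / 2)
    return theta, r_theta, phi, r_phi


def sshmc(n=5, n_samples=1000, eps=0.2, n_steps=20, seed=0):
    """Run SSHMC on the funnel; returns the phi trace and the acceptance rate."""
    rng = np.random.default_rng(seed)
    theta, phi = np.zeros(n), 0.0
    c = phi_metric(n)
    phis, accepted = np.empty(n_samples), 0
    for i in range(n_samples):
        # Momenta: r_theta ~ N(0, G_theta(phi)) = N(0, exp(-phi) I), r_phi ~ N(0, G_phi).
        r_theta = np.exp(-0.5 * phi) * rng.standard_normal(n)
        r_phi = np.sqrt(c) * rng.standard_normal()
        h_old = hamiltonian(theta, r_theta, phi, r_phi)
        proposal = abla(theta, r_theta, phi, r_phi, eps, n_steps)
        h_new = hamiltonian(*proposal)
        # Metropolis-Hastings correction on the full Hamiltonian (NaN/inf -> reject).
        if np.log(rng.uniform()) < h_old - h_new:
            theta, phi = proposal[0], proposal[2]
            accepted += 1
        phis[i] = phi
    return phis, accepted / n_samples
```

Calling `sshmc(n=5, n_samples=2000)` returns the φ trace and the acceptance rate. For this target the φ draws should look like N(0, 9) once the chain has mixed. Running `abla` forward, negating both momenta, and running it again should return the starting point up to round‑off, which is a cheap numerical check of reversibility.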

Because each sub‑Hamiltonian is separable, the standard leapfrog integrator (which is reversible, volume‑preserving, and symplectic) can be used without the costly generalized leapfrog required by full RMHMC. After L steps, a Metropolis‑Hastings correction guarantees that the joint distribution π(θ,φ) is invariant.

The authors discuss theoretical properties: ABLA is reversible and volume‑preserving in the full (θ, r_θ, φ, r_φ) space, and the composition of symplectic maps remains symplectic. Although ABLA does not simulate the exact flow of the original semi‑separable Hamiltonian, the MH step corrects any discretization error.
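Because the map is reversible and volume‑preserving, the Metropolis‑Hastings acceptance probability reduces to the familiar HMC form. It is evaluated with the full semi‑separable Hamiltonian H at the start z = (θ, r_θ, φ, r_φ) and at the ABLA endpoint z* (with momenta negated, which leaves H unchanged because H depends on the momenta only through quadratic forms):

$$\alpha(z \to z^\ast) = \min\left\{1,\ \exp\big[H(z) - H(z^\ast)\big]\right\}$$

Both ½ log|G_θ(φ,x)| and ½ log|G_φ(θ)| must be kept in H here. They are the position‑dependent normalizers of the momentum distributions, and dropping them would change the stationary distribution away from π(θ,φ).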

Compared with RMHMC‑within‑Gibbs, SSHMC retains the auxiliary potentials, thereby preserving the joint distribution over both positions and momenta. This leads to substantially faster mixing in both θ and φ dimensions, as demonstrated empirically on several benchmark problems: the Gaussian funnel, multi‑group linear regression, and a Bayesian neural network. Across these experiments, SSHMC achieved 2–5× higher effective sample size per unit time than Gibbs or standard HMC, while requiring far fewer O(d³) operations than full RMHMC.

Choosing G_θ and G_φ follows the same intuition as in RMHMC: use the negative Hessian or Fisher information of the conditional log‑posterior, provided it is positive‑definite and satisfies the semi‑separable constraint. G_θ may depend on φ and the data but not on θ itself, and G_φ may depend on θ but not on φ. In many models (e.g., logistic regression) the Hessian is block‑diagonal or can be approximated efficiently, making SSHMC scalable to high‑dimensional hierarchical problems.
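As a worked illustration of the constraint, assume Neal's funnel with φ ∼ N(0, 3²) and θᵢ | φ ∼ N(0, e^φ) for i = 1,…,n. The metric below is our own choice for illustration, not necessarily the one used in the paper:

$$
\begin{aligned}
-\log p(\theta,\phi) &= \frac{\phi^2}{18} + \frac{n\phi}{2} + \frac{\|\theta\|^2}{2}e^{-\phi} + \text{const},\\
-\frac{\partial^2 \log p}{\partial\theta\,\partial\theta^\top} &= e^{-\phi} I_n \;\Rightarrow\; G_\theta(\phi) = e^{-\phi} I_n \quad(\text{depends only on }\phi\text{: admissible}),\\
-\frac{\partial^2 \log p}{\partial\phi^2} &= \frac19 + \frac{\|\theta\|^2}{2}e^{-\phi} \quad(\text{depends on }\phi\text{: not admissible as } G_\phi),\\
\mathbb{E}_{\theta\mid\phi}\!\left[\frac19 + \frac{\|\theta\|^2}{2}e^{-\phi}\right] &= \frac19 + \frac n2 \;\Rightarrow\; G_\phi = \frac19 + \frac n2 \quad(\text{constant: admissible}).
\end{aligned}
$$

Taking the expectation over θ | φ, which uses E‖θ‖² = n e^φ, turns the raw second derivative into the Fisher information and removes the forbidden dependence on φ. A constant G_φ is a degenerate but valid case of a matrix that depends only on θ.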

In summary, the paper introduces a principled way to construct a non‑separable Hamiltonian that is computationally tractable, by enforcing a semi‑separable metric and exploiting the resulting block structure via an alternating leapfrog scheme. SSHMC bridges the gap between the robustness of RMHMC and the simplicity of standard HMC, offering a practical, fast‑mixing sampler for complex Bayesian hierarchical models. Future work may explore automated metric selection, adaptive step‑size strategies, and extensions to discrete‑parameter models.
