A Multiplicative Model for Learning Distributed Text-Based Attribute Representations

In this paper we propose a general framework for learning distributed representations of attributes: characteristics of text whose representations can be jointly learned with word embeddings. Attributes can correspond to document indicators (to learn sentence vectors), language indicators (to learn distributed language representations), meta-data and side information (such as the age, gender and industry of a blogger) or representations of authors. We describe a third-order model where word context and attribute vectors interact multiplicatively to predict the next word in a sequence. This leads to the notion of conditional word similarity: how meanings of words change when conditioned on different attributes. We perform several experimental tasks including sentiment classification, cross-lingual document classification, and blog authorship attribution. We also qualitatively evaluate conditional word neighbours and attribute-conditioned text generation.

💡 Research Summary

The paper introduces a general framework for learning distributed representations of textual attributes—metadata such as document identifiers, language tags, author demographics, or any other side information—jointly with word embeddings. The authors extend the classic log‑bilinear (LBL) neural language model by representing words as a three‑dimensional tensor T ∈ ℝ^{V×K×D}, where V is vocabulary size, K the embedding dimension, and D the attribute dimension. An attribute vector x ∈ ℝ^{D} gates the tensor: the attribute‑conditioned word matrix is obtained as a linear combination of D slices of the tensor weighted by x (T x = Σ_i x_i T^{(i)}). To keep the number of parameters tractable, the tensor is factorized into three low‑rank matrices: W_f^k ∈ ℝ^{F×K}, W_f^d ∈ ℝ^{F×D}, and W_f^v ∈ ℝ^{F×V}, where F is a chosen number of factors. The factorization yields T x = (W_f^v)^T · diag(W_f^d x)· W_f^k.

In the multiplicative neural language model, a context of n‑1 words is linearly transformed (using context matrices C(i)) to predict a representation \hat r. This representation is projected through W_f^k, while the attribute vector is projected through W_f^d; the element‑wise product of the two projections produces a factor vector f = (W_f^k \hat r) ⊙ (W_f^d x). The probability of the next word is then computed with a softmax over (W_f^v)^T f plus a bias term. Thus, the attribute vector dynamically gates the word embeddings, allowing the same lexical item to occupy different regions of semantic space depending on the attribute.

Attribute vectors themselves are stored in a lookup table L and are learned jointly with all other parameters via back‑propagation. A ReLU non‑linearity is applied to keep them sparse and positive, which stabilizes training. At test time, for unseen attributes (e.g., a new sentence), the network parameters are frozen and stochastic gradient descent is used to infer the corresponding attribute vector, following the approach of Le and Mikolov (2014).

The model also accommodates unshared vocabularies across attributes, which is crucial for cross‑lingual scenarios. When each attribute corresponds to a language, a language‑specific matrix W_f^{v,ℓ} is used while sharing W_f^k and W_f^d, enabling statistical strength sharing across languages.

Four experimental domains are explored:

-

Sentiment Classification (Stanford Sentiment Treebank). Each sentence is treated as an attribute (sentence vector). Sub‑phrases are extracted, a multiplicative language model is trained, and sentence vectors for test sentences are inferred. A logistic regression classifier built on these vectors achieves performance comparable to the best recursive neural networks and surpasses bag‑of‑words baselines. The method lags behind the latest convolutional and paragraph‑vector models, likely due to limited hyper‑parameter tuning.

-

Cross‑lingual Document Classification (RCV1/RCV2). Language attributes are learned for English, German, and French. The model shares factors across languages while allowing language‑specific vocabularies. Results show that using a third language (French) as an auxiliary source (ATD+) improves classification accuracy over baselines such as SMT, I‑Matrix, BAE, and BiCVM, demonstrating effective transfer of multilingual information.

-

Authorship Attribution on Blog Corpus. Metadata attributes (age, gender, industry) are learned for each author. The model captures stylistic similarities across authors sharing similar metadata, achieving competitive attribution accuracy.

-

Conditional Text Generation on Gutenberg Corpus. Each book is an attribute. The model generates samples conditioned on a specific book, reproducing characteristic writing styles. By averaging two book vectors, hybrid style samples are produced, despite never having seen such combinations during training. Additionally, conditioning on part‑of‑speech tags yields coherent sentences that respect the supplied POS sequence, illustrating the model’s ability to generate text under syntactic constraints.

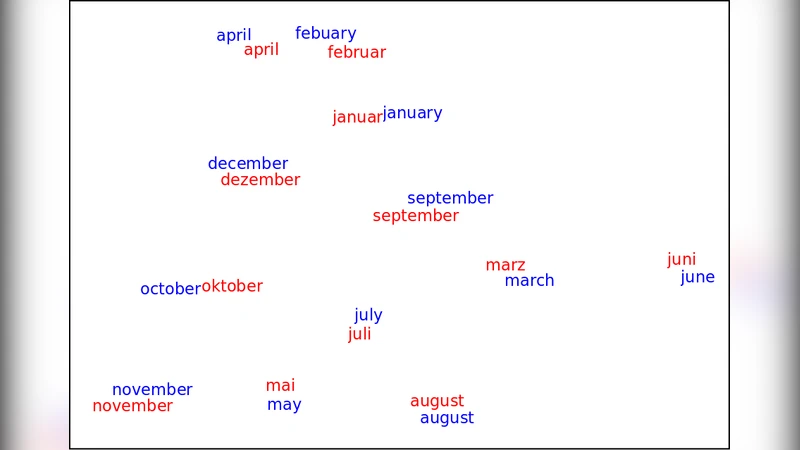

Qualitative analysis of conditional word similarity shows that word neighborhoods shift meaningfully with attributes: e.g., “joy” is close to “rapture” and “god” when conditioned on a religious author, but near “delight” and “comfort” when conditioned on a scientific author.

Strengths:

- Unified treatment of diverse side information via learnable attribute vectors.

- Efficient tensor factorization reduces parameters while preserving expressive multiplicative interactions.

- Ability to generate attribute‑conditioned text and to explore conditional word semantics.

- Demonstrated utility across sentiment, multilingual, and authorship tasks.

Limitations:

- Sensitivity to hyper‑parameters such as factor count F, context size, and embedding dimensions; extensive tuning is required.

- Sparse attribute vectors may lead to over‑fitting when training data for certain attributes is limited.

- The gating mechanism is linear in the attribute space; more complex non‑linear attribute‑word interactions are not captured.

- In cross‑lingual experiments, performance still trails the best supervised bilingual embeddings, indicating room for improvement.

Future Directions:

- Incorporate deeper non‑linear transformations (e.g., neural tensor networks) for richer attribute‑word coupling.

- Extend to multi‑attribute settings where several attributes (language + topic + author) influence the same token simultaneously.

- Combine with large‑scale unsupervised pre‑training (e.g., BERT, GPT) to leverage massive corpora while retaining attribute conditioning.

- Explore graph‑based representations of attributes to capture hierarchical or relational metadata.

Overall, the paper presents a versatile multiplicative model that bridges word embeddings and auxiliary textual attributes, opening avenues for more context‑aware language representations and controlled text generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment