Simulation based Hardness Evaluation of a Multi-Objective Genetic Algorithm

Studies have shown that multi-objective optimization problems are hard problems. Such problems either require longer time to converge to an optimum solution, or may not converge at all. Recently some researchers have claimed that real culprit for increasing the hardness of multi-objective problems are not the number of objectives themselves rather it is the increased size of solution set, incompatibility of solutions, and high probability of finding suboptimal solution due to increased number of local maxima. In this work, we have setup a simple framework for the evaluation of hardness of multi-objective genetic algorithms (MOGA). The algorithm is designed for a pray-predator game where a player is to improve its lifespan, challenging level and usability of the game arena through number of generations. A rigorous set of experiments are performed for quantifying the hardness in terms of evolution for increasing number of objective functions. In genetic algorithm, crossover and mutation with equal probability are applied to create offspring in each generation. First, each objective function is maximized individually by ranking the competing players on the basis of the fitness (cost) function, and then a multi-objective cost function (sum of individual cost functions) is maximized with ranking, and also without ranking where dominated solutions are also allowed to evolve.

💡 Research Summary

The paper presents a simulation‑based framework for quantifying the hardness of multi‑objective genetic algorithms (MOGAs). Recognizing that multi‑objective optimization problems often require more generations to converge—or may fail to converge altogether—the authors question the common belief that the sheer number of objectives is the primary cause of difficulty. Instead, they hypothesize that the size of the solution set, incompatibility among solutions, and the proliferation of local optima are the true drivers of hardness.

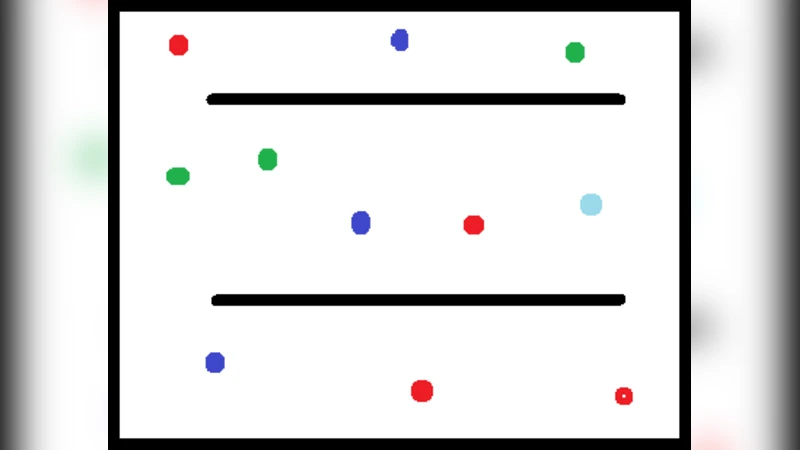

To test this hypothesis, they construct a simple predator‑prey game in which the “prey” (the evolving agent) must improve three performance metrics simultaneously: lifespan, challenge level, and arena usability. Each metric is modeled as an individual objective function to be maximized. The genetic algorithm employs standard crossover and mutation operators with equal probability (0.5) and evaluates the entire population each generation.

Two experimental tracks are defined. In the first track, each objective is optimized separately; the resulting best‑individual costs are summed to form a composite cost function, which is then maximized. In the second track, a true multi‑objective cost function (the sum of the three individual costs) is optimized directly. Within this track, two selection strategies are compared: (1) a conventional Pareto‑dominance ranking where non‑dominated individuals receive priority, and (2) a “non‑ranking” approach that ignores dominance and allows all individuals, including dominated ones, to reproduce with equal probability.

Results show a clear escalation of hardness as the number of objectives grows from one to three. The average number of generations required for convergence increases dramatically, especially under the non‑ranking scheme where convergence often does not occur within the allotted generations. This confirms that expanding the solution set and introducing incompatibility substantially enlarge the search space, making it difficult for a basic GA to locate the global Pareto front. Conversely, the “individual‑then‑sum” method converges quickly and achieves higher average fitness early on, but it produces a Pareto front with limited diversity because the scalar aggregation masks conflicts among objectives.

Pareto‑dominance ranking consistently outperforms the non‑ranking method in terms of final front quality and diversity, yet its advantage diminishes as more objectives are added, indicating that even sophisticated selection mechanisms struggle with high‑dimensional objective spaces. The authors argue that algorithm designers should focus less on the count of objectives and more on managing solution set structure, dominance handling, and adaptive operator settings.

Future work is suggested in three directions: (a) dynamic weighting of objectives to balance exploration and exploitation, (b) adaptive adjustment of crossover and mutation probabilities based on convergence metrics, and (c) maintenance of multiple Pareto fronts to preserve diversity. By providing empirical evidence that solution‑set characteristics, rather than objective count alone, dictate MOGA hardness, the paper offers a valuable diagnostic tool for researchers and practitioners aiming to design more robust multi‑objective evolutionary algorithms.