ExpertBayes: Automatically refining manually built Bayesian networks

Bayesian network structures are usually built using only the data and starting from an empty network or from a naive Bayes structure. Very often, in some domains, like medicine, a prior structure knowledge is already known. This structure can be automatically or manually refined in search for better performance models. In this work, we take Bayesian networks built by specialists and show that minor perturbations to this original network can yield better classifiers with a very small computational cost, while maintaining most of the intended meaning of the original model.

💡 Research Summary

The paper introduces ExpertBayes, a system that refines manually constructed Bayesian networks by applying small, random structural modifications. Traditional Bayesian network structure learning starts from an empty graph or a naïve Bayes model, which leads to a combinatorial explosion of the search space and often produces models that are difficult for domain experts to interpret. In many medical domains, however, experts already possess a meaningful network structure that encodes clinical knowledge. ExpertBayes leverages this prior structure as a starting point and searches locally for improvements, thereby reducing computational cost while preserving the expert’s intended semantics.

The algorithm works as follows: it reads an original network and a training‑test split, learns the conditional probability tables (CPTs) from the training data, and then iterates a fixed number of times. In each iteration a pair of nodes is selected uniformly at random. If an edge already exists between them, the algorithm randomly decides to either delete the edge or reverse its direction; if no edge exists, it inserts a new edge with a randomly chosen direction. Any operation that would create a directed cycle is discarded. When the operation affects a node that belongs to the Markov blanket of the class variable, the CPTs of the affected nodes are recomputed. The modified network is scored on the training set using the proportion of correctly classified instances (CCI) with a default probability threshold of 0.5. If the new score exceeds the best score seen so far, the modified network replaces the current best network. After all iterations, the best network is evaluated on the held‑out test set.

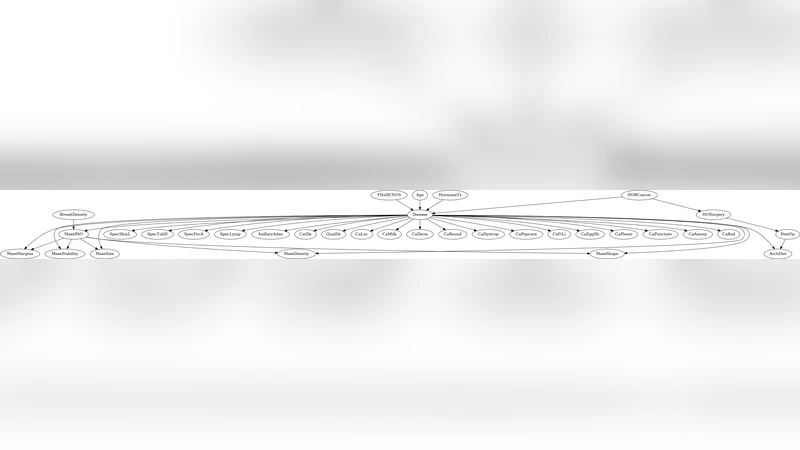

The authors evaluated ExpertBayes on three real‑world medical datasets: a prostate cancer cohort (496 instances, 11 variables) and two breast cancer cohorts (100 instances with 34 variables, and 241 instances with 8 variables). All datasets are binary‑class problems (survival vs. non‑survival for prostate; malignant vs. benign for breast). Original expert‑crafted networks were provided for each domain. For comparison, the authors also built networks automatically using WEKA’s K2 (a greedy score‑based algorithm) and TAN (Tree‑augmented naïve Bayes) starting from a naïve Bayes seed. Performance was measured using CCI and precision‑recall curves across 5‑fold cross‑validation.

Results show that ExpertBayes consistently improves upon the original expert networks. In the prostate dataset, accuracy rose from 74 % to 76 % (p < 0.01). For Breast Cancer (1), ExpertBayes achieved 63 % versus 49 % for the original model, outperforming K2 (59 %) and TAN (57%) with statistical significance (p < 0.004). For Breast Cancer (2), the automatically learned K2 (80 %) and TAN (79 %) models were superior to ExpertBayes (64 %), yet ExpertBayes still markedly outperformed the original expert network (49 %). Precision‑recall analysis revealed that ExpertBayes attains higher precision at comparable recall levels, indicating fewer false‑positive diagnoses—a crucial advantage in clinical settings.

A qualitative inspection of the best networks shows that ExpertBayes typically makes a single, interpretable change: adding, removing, or reversing an edge that often corresponds to a clinically plausible relationship (e.g., linking diastolic blood pressure to survival in prostate cancer, or connecting mass margin to malignancy in breast cancer). In contrast, the automatically learned K2 and TAN structures diverge substantially from the expert designs, sometimes reverting to naïve Bayes‑like configurations that lack domain‑specific meaning.

The key contributions of the work are: (1) demonstrating that modest, random local edits to an expert‑provided network can yield measurable performance gains with negligible computational overhead; (2) preserving the semantic integrity of the original model, thereby maintaining trust and interpretability for clinicians; (3) providing an interactive GUI that allows experts to visualize and manually adjust the network, fostering a collaborative human‑machine learning loop. Limitations include the reliance on random search (which does not guarantee global optimality) and the focus on binary classification tasks; extending the approach to multi‑class or continuous outcomes, and incorporating heuristic‑guided edits (e.g., based on information gain) are promising directions for future research.

In summary, ExpertBayes offers a practical hybrid methodology that combines expert knowledge with lightweight automated refinement, achieving better predictive performance than both the untouched expert models and fully data‑driven structures while keeping the models understandable to domain specialists. This approach is especially valuable in medical domains where expert insight is abundant but data alone may be insufficient or noisy.

Comments & Academic Discussion

Loading comments...

Leave a Comment