A Game-theoretic Machine Learning Approach for Revenue Maximization in Sponsored Search

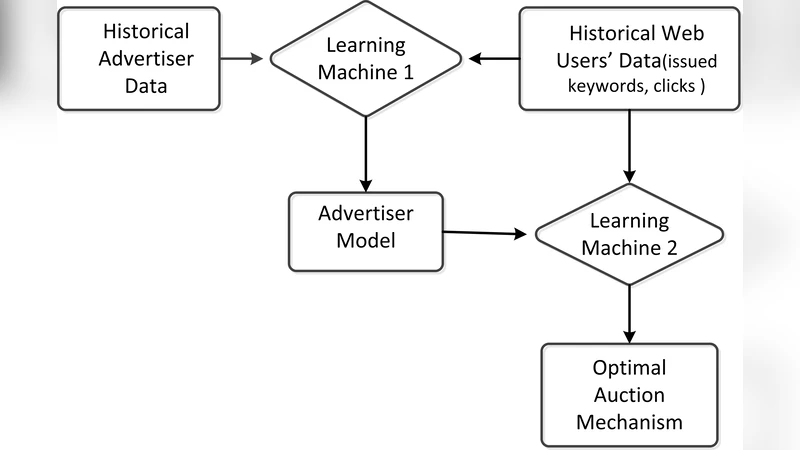

Sponsored search is an important monetization channel for search engines, in which an auction mechanism is used to select the ads shown to users and determine the prices charged to advertisers. Several pieces of work in the literature investigate how to design an auction mechanism that optimizes the revenue of the search engine. However, because of the unrealistic assumptions they rely on, the practical value of these studies is unclear. In this paper, we propose a novel *game-theoretic machine learning* approach, which naturally combines machine learning and game theory, and learns the auction mechanism using a bilevel optimization framework. In particular, we first learn a Markov model from historical data to describe how advertisers change their bids in response to an auction mechanism, and then, for any given auction mechanism, we use the learnt model to predict its corresponding future bid sequences. Next, we learn the auction mechanism through empirical revenue maximization on the predicted bid sequences. We show that the empirical revenue will converge when the prediction period approaches infinity, and that a Genetic Programming algorithm can effectively optimize this empirical revenue. Our experiments indicate that the proposed approach is able to produce a much more effective auction mechanism than several baselines.

💡 Research Summary

Sponsored search advertising is a major revenue source for search engines, relying on an auction mechanism that ranks ads and charges advertisers per click. Existing research on auction design falls into two categories. The first adopts a game‑theoretic perspective, assuming full knowledge of advertisers’ values and perfect rationality, and seeks mechanisms that maximize worst‑case or Bayesian expected revenue. The second uses conventional machine‑learning techniques to directly optimize revenue on historical bid data, implicitly assuming that bids are i.i.d. draws from a fixed distribution. Both approaches rest on unrealistic assumptions: in practice advertisers have limited information, exhibit bounded rationality, and, crucially, adjust their bids in response to any change in the auction mechanism, a phenomenon known as the “second‑order effect.”

The paper proposes a novel “game‑theoretic machine learning” framework that unifies the strengths of both traditions while discarding their untenable premises. The core idea is to model advertisers’ bid‑adjustment behavior as a mechanism‑dependent Markov process. Each advertiser observes only his own past bids and a set of key performance indicators (KPIs) supplied by the search engine—impressions, clicks, and average cost‑per‑click. Based on these signals, the advertiser updates his bid for the next period. Formally, the transition probability P(b_{t+1}^i | b_t^i, KPI_t^i) is captured in a per‑advertiser, per‑KPI transition matrix M_{i, KPI}. Two estimation strategies are described: a non‑parametric frequency estimator and a parametric model where the next‑bid distribution follows a truncated Gaussian whose mean is a linear function of the current bid and KPI.
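The non‑parametric frequency estimator mentioned above can be illustrated with a minimal sketch. It assumes bids and KPIs have already been discretized into buckets; the function name `estimate_transitions`, the toy log, and the "low"/"high" KPI buckets are hypothetical and not taken from the paper.

```python
from collections import Counter, defaultdict

def estimate_transitions(history):
    """Estimate P(next_bid | bid, kpi) for one advertiser by counting.

    `history` is a time-ordered list of (bid, kpi) pairs. Both values are
    assumed to be discretized already (an assumption of this sketch).
    """
    counts = defaultdict(Counter)
    # Pair each period with the following one and count the observed moves.
    for (bid, kpi), (next_bid, _) in zip(history, history[1:]):
        counts[(bid, kpi)][next_bid] += 1
    # Normalize each row of counts into a probability distribution.
    return {
        state: {b: n / sum(row.values()) for b, n in row.items()}
        for state, row in counts.items()
    }

# Toy log: bid level and a coarse KPI bucket per period (made-up data).
history = [(10, "low"), (20, "low"), (20, "high"),
           (10, "low"), (10, "low"), (20, "high")]
model = estimate_transitions(history)
```

Each key of `model` is a (bid, KPI) state and each value is the estimated distribution over the next bid. States never observed in the log simply have no row, which is one reason a parametric alternative such as the truncated Gaussian model can be attractive when data are sparse.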

Having learned the advertiser behavior model g from historical auction logs and user query/click logs, the framework can simulate future bid sequences for any candidate auction mechanism f. For a given f, the simulated bids together with the observed user stream generate an empirical revenue R(f, g, S). The authors prove that as the simulation horizon T → ∞, R converges, guaranteeing that the outer‑level optimization is well‑posed.
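To make the inner simulation concrete, the following toy sketch rolls a behavior model forward under a fixed mechanism and averages the revenue over the horizon. Everything specific here is an assumption for illustration: a single ad slot, a GSP‑style rule with rank score bid × quality^α, a KPI reduced to "won the slot or not", and a hand‑written `next_bid` rule standing in for the learned Markov model.

```python
import random

BID_LEVELS = [1, 2, 3, 4, 5]  # hypothetical discrete bid grid

def run_auction(bids, quality, alpha):
    """Single-slot GSP-style auction with rank score bid * quality**alpha.

    Returns (winner index, price per click)."""
    scores = [b * q ** alpha for b, q in zip(bids, quality)]
    order = sorted(range(len(bids)), key=lambda i: scores[i], reverse=True)
    winner, runner_up = order[0], order[1]
    # Smallest bid that keeps the winner's score at the runner-up's level.
    price = scores[runner_up] / quality[winner] ** alpha
    return winner, price

def next_bid(bid, won, rng):
    """Toy Markov behavior: keep the bid half the time; otherwise lower it
    after a win and raise it after a loss (stand-in for the learned model)."""
    if rng.random() < 0.5:
        return bid
    idx = BID_LEVELS.index(bid) + (-1 if won else 1)
    return BID_LEVELS[min(max(idx, 0), len(BID_LEVELS) - 1)]

def empirical_revenue(alpha, horizon, seed=0):
    """Average per-period revenue over a simulated bid sequence."""
    rng = random.Random(seed)
    quality = [0.9, 0.5, 0.3]   # made-up click probabilities
    bids = [3, 3, 3]            # made-up initial bids
    total = 0.0
    for _ in range(horizon):
        winner, price = run_auction(bids, quality, alpha)
        total += price * quality[winner]  # expected revenue = price * click prob
        bids = [next_bid(b, i == winner, rng) for i, b in enumerate(bids)]
    return total / horizon
```

Calling `empirical_revenue(1.0, T)` for increasing `T` gives a rough feel for the convergence claim: because the bids follow a Markov chain whose transitions depend on the mechanism, the time‑averaged revenue is expected to settle as the horizon grows. Changing `alpha` changes both the auction outcomes and the bid dynamics, which is the second‑order effect the framework is designed to capture.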

The outer optimization problem seeks f ∈ F that maximizes R(f, g, S). Because the search space of admissible mechanisms (functions mapping quality scores to ranking and pricing rules) is highly non‑convex and potentially discrete, the authors employ Genetic Programming (GP). GP encodes candidate mechanisms as symbolic expressions of the quality score t (e.g., the transform t^α, piecewise functions, or more elaborate compositions) and evolves them through crossover and mutation. Each candidate is evaluated by running the inner‑level Markov simulation, computing the long‑run empirical revenue, and assigning fitness accordingly. Over successive generations, GP discovers mechanisms that outperform standard Generalized Second Price (GSP) auctions and several baseline variants.
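A heavily simplified sketch of the GP loop is shown below. It is not the authors' implementation: the primitive set (`add`, `mul`, constants, and the quality score `t`), truncation selection, the size cap, and the `AUCTIONS` data are all assumptions. To stay short, the fitness function uses fixed bids; in the framework described above, fitness would instead be the long‑run revenue from the behavior‑model simulation.

```python
import random

OPS = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b}

def random_tree(rng, depth=2):
    """Random expression over quality score t; leaves are 't' or a constant."""
    if depth == 0 or rng.random() < 0.3:
        return "t" if rng.random() < 0.5 else round(rng.uniform(0.1, 2.0), 2)
    return (rng.choice(list(OPS)), random_tree(rng, depth - 1), random_tree(rng, depth - 1))

def evaluate(tree, t):
    if isinstance(tree, tuple):
        op, left, right = tree
        return OPS[op](evaluate(left, t), evaluate(right, t))
    return t if tree == "t" else tree  # variable or numeric constant

def subtrees(tree, path=()):
    """Yield (path, subtree) for every node of the expression tree."""
    yield path, tree
    if isinstance(tree, tuple):
        yield from subtrees(tree[1], path + (1,))
        yield from subtrees(tree[2], path + (2,))

def replace(tree, path, new):
    if not path:
        return new
    parts = list(tree)
    parts[path[0]] = replace(tree[path[0]], path[1:], new)
    return tuple(parts)

def crossover(a, b, rng):
    """Swap a random subtree of b into a random position of a."""
    path, _ = rng.choice(list(subtrees(a)))
    _, donor = rng.choice(list(subtrees(b)))
    return replace(a, path, donor)

def mutate(tree, rng):
    path, _ = rng.choice(list(subtrees(tree)))
    return replace(tree, path, random_tree(rng, depth=1))

AUCTIONS = [  # made-up (bid, quality) pairs, one list per auction
    [(4.0, 0.2), (2.0, 0.8), (1.0, 0.5)],
    [(3.0, 0.6), (3.5, 0.3), (0.5, 0.9)],
]

def revenue(tree):
    """Stand-in fitness: single-slot GSP revenue with score bid * tree(quality)."""
    total = 0.0
    for ads in AUCTIONS:
        scored = sorted(((b * evaluate(tree, q), q) for b, q in ads), reverse=True)
        (_, q1), (s2, _) = scored[0], scored[1]
        total += s2 / evaluate(tree, q1) * q1  # price per click * click prob
    return total

def evolve(fitness, generations=15, pop_size=30, seed=0):
    rng = random.Random(seed)
    population = ["t"] + [random_tree(rng) for _ in range(pop_size - 1)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 2]          # truncation selection
        children = []
        while len(parents) + len(children) < pop_size:
            child = crossover(rng.choice(parents), rng.choice(parents), rng)
            if rng.random() < 0.3:
                child = mutate(child, rng)
            if sum(1 for _ in subtrees(child)) <= 31:  # crude bloat control
                children.append(child)
        population = parents + children
    return max(population, key=fitness)
```

Seeding the population with the plain expression `"t"` (the standard quality‑weighted GSP score) and always keeping the top half means `evolve(revenue)` never returns something worse than that baseline on the stand‑in fitness. All primitives keep the expression positive for positive `t`, which avoids division by zero in the pricing step.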

Experimental evaluation uses real search engine logs, including advertiser bids, KPI reports, and user click data. Baselines include the classic GSP rule, GSP with fixed exponent α, and a purely data‑driven mechanism learned without modeling advertiser response. Results show that the proposed approach yields 5–12% higher average revenue, with the most pronounced gains for advertisers whose KPIs fluctuate widely, indicating that the Markov behavior model successfully captures the second‑order effect.

Key contributions of the work are: (1) a principled, mechanism‑dependent Markov model of advertiser bid dynamics; (2) a bilevel optimization formulation that integrates this behavioral model into revenue maximization; (3) a proof of long‑run revenue convergence; (4) the application of Genetic Programming to explore a rich class of auction mechanisms; and (5) empirical evidence that the combined game‑theoretic‑machine‑learning approach outperforms both traditional game‑theoretic designs and naïve machine‑learning baselines.

Beyond sponsored search, the bilevel framework is generic and can be adapted to other online marketplaces where participants adjust their strategies in response to platform policies (e.g., e‑commerce pricing, digital content recommendation). By explicitly modeling participant behavior and feeding it into mechanism design, the paper opens a promising research direction toward more realistic, data‑driven, and adaptive economic systems.
