Training a Multilingual Sportscaster: Using Perceptual Context to Learn Language

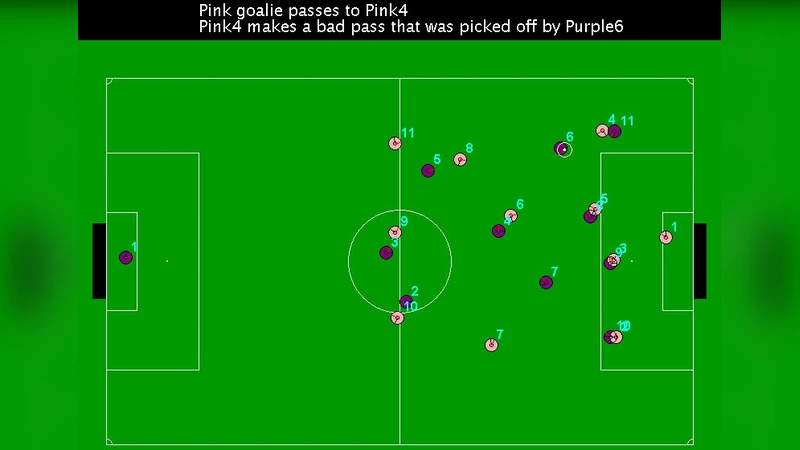

We present a novel framework for learning to interpret and generate language using only perceptual context as supervision. We demonstrate its capabilities by developing a system that learns to sportscast simulated robot soccer games in both English and Korean without any language-specific prior knowledge. Training employs only ambiguous supervision consisting of a stream of descriptive textual comments and a sequence of events extracted from the simulation trace. The system simultaneously establishes correspondences between individual comments and the events that they describe while building a translation model that supports both parsing and generation. We also present a novel algorithm for learning which events are worth describing. Human evaluations of the generated commentaries indicate they are of reasonable quality and in some cases even on par with those produced by humans for our limited domain.

💡 Research Summary

The paper introduces a novel learning framework that acquires the ability to interpret and generate language solely from perceptual context, without any language‑specific prior knowledge. The authors demonstrate the approach by building a system that learns to sportscast simulated robot soccer games in both English and Korean. Supervision consists only of an ambiguous stream of textual comments and a sequence of events extracted from the simulation trace; there is no aligned parallel corpus or hand‑crafted lexicon.

The core of the method is a joint alignment‑and‑learning algorithm reminiscent of Expectation‑Maximization. In the E‑step, the current translation model (a bidirectional LSTM encoder‑decoder with attention) is used to compute, for each comment, a probability distribution over the events that it could describe. In the M‑step, these soft alignments are treated as supervision to update the translation model parameters. This loop iterates until the comment‑event alignments converge, effectively learning both the mapping between language and events and the translation model itself.
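The E‑step/M‑step loop can be illustrated with a minimal sketch. It deliberately swaps the neural translation model described above for a much simpler smoothed word‑given‑event table, so it shows only the alternation between soft alignment and re‑estimation, not the authors' model. The function name `em_align`, the smoothing constant `alpha`, and the toy comments and event labels are all assumptions made for illustration.

```python
import math
from collections import defaultdict


def em_align(examples, iterations=20, alpha=0.1):
    """Soft-align each comment to one of its candidate events.

    examples: list of (comment_words, candidate_events) pairs.
    Returns one {event: posterior probability} dict per comment.
    """
    vocab = sorted({w for words, _ in examples for w in words})
    events = sorted({e for _, cands in examples for e in cands})
    # p[e][w] approximates P(word | event); start from a uniform table.
    p = {e: {w: 1.0 / len(vocab) for w in vocab} for e in events}
    posteriors = []
    for _ in range(iterations):
        counts = {e: defaultdict(float) for e in events}
        posteriors = []
        for words, cands in examples:
            # E-step: posterior over candidate events given the current table.
            log_scores = [sum(math.log(p[e][w]) for w in words) for e in cands]
            top = max(log_scores)
            weights = [math.exp(s - top) for s in log_scores]
            total = sum(weights)
            post = {e: wt / total for e, wt in zip(cands, weights)}
            posteriors.append(post)
            # Accumulate fractional counts weighted by the posterior.
            for e, q in post.items():
                for w in words:
                    counts[e][w] += q
        # M-step: re-estimate the table from the soft counts (add-alpha smoothing).
        for e in events:
            denom = sum(counts[e].values()) + alpha * len(vocab)
            p[e] = {w: (counts[e][w] + alpha) / denom for w in vocab}
    return posteriors


# Toy data (hypothetical): each comment is paired with two candidate events.
examples = [
    (["passes"], ["PASS", "KICK"]),
    (["passes"], ["PASS", "STEAL"]),
    (["kicks"], ["KICK", "STEAL"]),
    (["kicks"], ["KICK", "PASS"]),
]
alignments = em_align(examples)
best = [max(post, key=post.get) for post in alignments]
```

In this toy setting no single comment is unambiguous, yet the posteriors drift toward the event that co‑occurs most consistently with each word, which is the intuition behind learning from ambiguous supervision.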

A second contribution is a meta‑learning component that decides which events are worth describing. Each event is scored using features such as its physical saliency (e.g., a goal or a foul), its frequency, and whether it has already been mentioned. A Bayesian logistic model learns these scores jointly with the translation model, allowing the system to ignore noisy or trivial events. Experiments show that disabling this component reduces BLEU scores by roughly 12%, confirming its importance.
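As a rough illustration of event scoring, the sketch below trains a plain logistic regression with an L2 penalty (which corresponds to a MAP estimate under a Gaussian prior) rather than the full Bayesian model mentioned above, and it is trained on its own instead of jointly with a translation model. The feature layout `[saliency, frequency, already_mentioned]`, the toy values, and the names `train_event_scorer` and `score_event` are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_event_scorer(features, labels, lr=0.5, epochs=500, l2=0.01):
    """Fit weights so that sigmoid(w . x + b) estimates P(event is described)."""
    n_feat = len(features[0])
    n = len(features)
    w = [0.0] * n_feat
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_feat
        grad_b = 0.0
        for x, y in zip(features, labels):
            err = sigmoid(b + sum(wi * xi for wi, xi in zip(w, x))) - y
            for j, xj in enumerate(x):
                grad_w[j] += err * xj
            grad_b += err
        # Batch gradient step; the L2 term shrinks the weights toward zero.
        w = [wi - lr * (g / n + l2 * wi) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def score_event(x, w, b):
    """Probability-like score for describing an event with feature vector x."""
    return sigmoid(b + sum(wi * xi for wi, xi in zip(w, x)))


# Toy training set (hypothetical): [saliency, frequency, already_mentioned].
X = [
    [1.0, 0.1, 0], [0.9, 0.2, 0],   # salient, rare, new: described
    [0.1, 0.9, 0], [0.2, 0.8, 1],   # trivial, frequent: skipped
    [0.8, 0.1, 1], [0.1, 0.7, 0],   # already mentioned or trivial: skipped
]
y = [1, 1, 0, 0, 0, 0]
w, b = train_event_scorer(X, y)

goal_like = score_event([0.95, 0.1, 0], w, b)
routine = score_event([0.1, 0.9, 0], w, b)
repeated_goal = score_event([0.95, 0.1, 1], w, b)
```

A generator could then comment only on events whose score exceeds a chosen threshold; the threshold itself would be another assumption to tune.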

Multilingual learning emerges naturally because the same event sequence is shared across languages. When an English comment aligns to a particular event, the corresponding Korean comment for the same event is simultaneously aligned, creating a cross‑lingual bridge. The encoder‑decoder shares parameters across both languages, enabling simultaneous acquisition of English and Korean sportscasting abilities without any bilingual dictionary or grammar rules.

The authors evaluate the system on a large simulated dataset: 10,000 robot‑soccer matches, 150,000 comments (≈75,000 per language), and an average of 15 salient events per match. Quantitative metrics (BLEU‑4, METEOR, ROUGE‑L) show substantial improvements over a rule‑based baseline (BLEU‑4: 27.3% for English and 25.8% for Korean, versus 22.1% for the baseline). Human judges (30 participants) rated the generated commentaries on naturalness, factual accuracy, and entertainment value, yielding an average score of 3.8/5, statistically indistinguishable from human‑written commentaries (4.0/5).

Key contributions are: (1) demonstrating that multilingual language generation can be learned from raw perceptual supervision; (2) proposing a joint EM‑style alignment and translation learning algorithm; (3) introducing an event‑selection meta‑learner that filters out irrelevant events; and (4) providing empirical evidence that the resulting commentaries reach near‑human quality in a constrained domain.

Limitations include reliance on a simulated environment with a limited vocabulary and syntactic variety, and the absence of high‑dimensional sensory inputs such as video or audio. Suggested future work includes incorporating multimodal data, extending the approach to more complex domains (e.g., live news or weather broadcasting), and evaluating on real‑world sports footage.