An Effective Evolutionary Clustering Algorithm: Hepatitis C Case Study

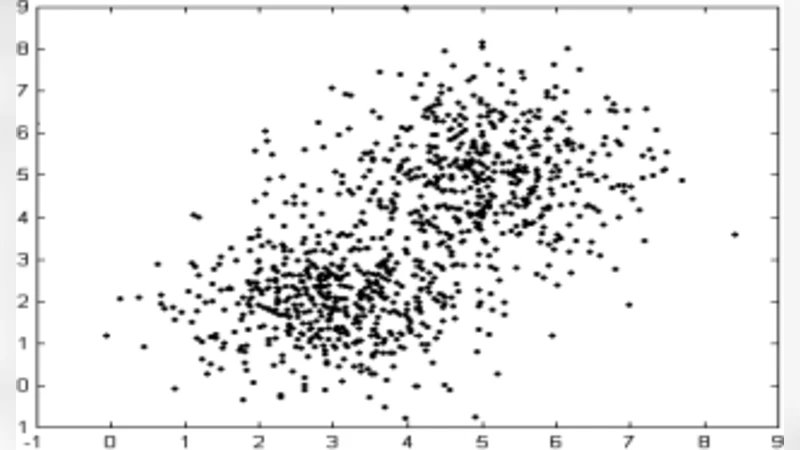

Clustering analysis plays an important role in scientific research and commercial applications. The K-means algorithm is a widely used partitioning method for clustering. However, K-means is known to get stuck at suboptimal solutions, depending on the choice of the initial cluster centers. In this article, we propose a technique for handling large-scale data that uses Genetic Algorithms (GAs) to select the initial cluster centers purposefully. The technique reduces sensitivity to isolated points (outliers), avoids splitting large clusters, and, to some degree, overcomes the data skew caused by disproportionate data partitioning when multi-sampling is adopted. We applied our method to several public datasets, and the results show the advantages of the proposed approach. One example is the Hepatitis C dataset taken from the University of California, Irvine (UCI) Machine Learning Repository. Our aim is to evaluate the hepatitis dataset. To do so, we performed some preprocessing, whose purpose is to summarize the data in the form best suited to our algorithm. Missing values of the instances are filled in using the local mean method.

💡 Research Summary

The paper addresses a well‑known limitation of the classic K‑means clustering algorithm: its strong dependence on the initial placement of cluster centroids, which can lead to sub‑optimal partitions, especially in large‑scale or noisy data sets. To mitigate this issue, the authors propose a hybrid evolutionary approach that uses a Genetic Algorithm (GA) to deliberately select the initial centroids before running K‑means. The GA treats each individual as a set of K centroid coordinates, evaluates fitness using a multi‑objective function that combines the traditional sum‑of‑squared‑errors (SSE) with penalties for cluster size imbalance and sensitivity to outliers, and then applies selection, crossover, and mutation to evolve better initializations. Crucially, after each GA generation the K‑means algorithm is invoked as a local search step, refining each individual’s centroids and providing a more accurate fitness estimate. This memetic‑style integration aims to balance global exploration (via GA) with fast local convergence (via K‑means).
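The GA-plus-local-search loop described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation. The paper's fitness weights, genetic operators, and outlier penalty are not given, so the sketch makes several substitutions. The fitness is a hypothetical SSE × (1 + α·imbalance) score. Crossover copies whole centroids uniformly from either parent, and mutation replaces a centroid with a random data point. Every individual is refined by a few Lloyd (K-means) iterations before it is scored. The names `ga_kmeans`, `kmeans_refine`, and `fitness`, and the parameters `pop_size`, `generations`, `alpha`, and `rate`, are all illustrative.

```python
import random

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def assign(points, centroids):
    """Index of the nearest centroid for every point (first index wins ties)."""
    return [min(range(len(centroids)), key=lambda j: sq_dist(p, centroids[j])) for p in points]

def kmeans_refine(points, centroids, iters=5):
    """Local search: a few Lloyd (K-means) iterations starting from `centroids`."""
    for _ in range(iters):
        labels = assign(points, centroids)
        new = []
        for j, cen in enumerate(centroids):
            members = [p for p, lab in zip(points, labels) if lab == j]
            # An empty cluster keeps its previous centroid.
            new.append([sum(col) / len(members) for col in zip(*members)] if members else list(cen))
        centroids = new
    return centroids

def fitness(points, centroids, alpha=0.1):
    """Hypothetical fitness (lower is better): SSE inflated by a cluster-size imbalance penalty."""
    labels = assign(points, centroids)
    k = len(centroids)
    ideal = len(points) / k
    imbalance = sum(abs(labels.count(j) - ideal) for j in range(k)) / len(points)
    sse = sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return sse * (1 + alpha * imbalance)

def crossover(a, b, rng):
    """Uniform crossover: each centroid slot is copied from either parent."""
    return [list(ca if rng.random() < 0.5 else cb) for ca, cb in zip(a, b)]

def mutate(ind, points, rng, rate=0.1):
    """With probability `rate`, replace a centroid by a randomly chosen data point."""
    return [list(rng.choice(points)) if rng.random() < rate else cen for cen in ind]

def ga_kmeans(points, k, pop_size=10, generations=15, seed=0):
    """Evolve K-means initialisations; pop_size must be at least 4."""
    rng = random.Random(seed)
    pop = [kmeans_refine(points, [list(p) for p in rng.sample(points, k)]) for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=lambda ind: fitness(points, ind))
        elite = pop[: pop_size // 2]  # truncation selection with elitism
        children = []
        while len(elite) + len(children) < pop_size:
            a, b = rng.sample(elite, 2)
            child = mutate(crossover(a, b, rng), points, rng)
            children.append(kmeans_refine(points, child))  # memetic local-search step
        pop = elite + children
    return min(pop, key=lambda ind: fitness(points, ind))

data = [[1.0, 1.0], [1.2, 0.8], [0.9, 1.1], [8.0, 8.0], [8.2, 7.9], [7.8, 8.1]]
centers = ga_kmeans(data, k=2)
print(sorted(round(c[0]) for c in centers))  # expected: [1, 8]
```

Keeping the better half of each generation unchanged means the best fitness in the population never worsens. Every child is also refined by K-means before it is scored, so the GA only has to find a good basin rather than an exact optimum. These extra Lloyd passes per child are where the runtime overhead of such hybrids comes from.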

Before clustering, the authors preprocess the Hepatitis C data set (155 instances, 19 attributes) obtained from the UCI Machine Learning Repository. Missing values are imputed using a “local mean” method, i.e., the mean of the same attribute computed from the nearest non‑missing observations. While this preserves the overall distribution of each variable, the paper does not discuss the potential bias introduced when the proportion of missing data is high.
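The summary describes local-mean imputation only loosely, so the following is a minimal sketch under several assumptions. Missing values are `None`. "Nearest" means the root-mean-square difference over the attributes both rows observe, averaged so that rows sharing different numbers of attributes stay comparable. The neighbourhood size `k` is a hypothetical parameter. Only rows that actually observe the missing attribute are eligible neighbours, and distances use the original, unimputed values. On real data the attributes should be standardised first, because the hepatitis attributes (age, laboratory values, binary indicators) are on very different scales.

```python
import math

def local_mean_impute(rows, k=3):
    """Fill None entries with the mean of that attribute over the k nearest rows that observe it."""
    filled = [list(r) for r in rows]  # the input rows are not modified
    for i, row in enumerate(rows):
        for j, value in enumerate(row):
            if value is not None:
                continue
            candidates = []
            for m, other in enumerate(rows):
                if m == i or other[j] is None:
                    continue  # a neighbour must observe attribute j
                shared = [(a, b) for a, b in zip(row, other) if a is not None and b is not None]
                if shared:
                    # RMS difference over co-observed attributes.
                    dist = math.sqrt(sum((a - b) ** 2 for a, b in shared) / len(shared))
                    candidates.append((dist, other[j]))
            candidates.sort(key=lambda c: c[0])
            nearest = [v for _, v in candidates[:k]]
            if nearest:  # leave the gap if no row qualifies
                filled[i][j] = sum(nearest) / len(nearest)
    return filled

rows = [[1.0, 2.0, 3.0], [1.1, None, 3.1], [0.9, 2.2, 2.9], [9.0, 8.0, 7.0]]
print(local_mean_impute(rows, k=2)[1])  # middle value is about 2.1, the mean of 2.0 and 2.2
```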

The experimental protocol consists of 10‑fold cross‑validation. Three methods are compared: (i) standard K‑means with random initialization, (ii) K‑means++ (a widely used smart‑initialization scheme), and (iii) the proposed GA‑K‑means. Performance is measured with three clustering quality indices—SSE, silhouette coefficient, and Davies‑Bouldin Index (DBI)—as well as wall‑clock execution time.
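The K-means++ baseline differs from random initialisation only in how the seeds are drawn. The first centre is a uniformly random data point. Each further centre is a data point sampled with probability proportional to its squared distance to the nearest centre already chosen. A minimal pure-Python sketch follows. The function name `kmeans_pp_init` and the use of a `random.Random` instance are illustrative choices. In a full run, the returned seeds would be handed to ordinary Lloyd iterations.

```python
import random

def kmeans_pp_init(points, k, rng=None):
    """K-means++ seeding: D^2-weighted sampling of initial centres."""
    if rng is None:
        rng = random.Random(0)
    centers = [list(rng.choice(points))]
    while len(centers) < k:
        # Squared distance from each point to its nearest already-chosen centre.
        d2 = [min(sum((a - b) ** 2 for a, b in zip(p, c)) for c in centers) for p in points]
        if sum(d2) == 0:
            # Every point coincides with a centre; fall back to a uniform pick.
            centers.append(list(rng.choice(points)))
        else:
            centers.append(list(rng.choices(points, weights=d2, k=1)[0]))
    return centers

data = [[0.0, 0.0], [0.0, 0.0], [5.0, 5.0]]
print(sorted(kmeans_pp_init(data, 2)))  # [[0.0, 0.0], [5.0, 5.0]] whichever point is drawn first
```

Points already covered by a centre get zero weight, so here the second seed always lands on the isolated point. The same squared-distance weighting makes K-means++ prone to seeding on outliers, which is one of the problems the abstract says the proposed method aims to reduce.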

Results show that the GA‑K‑means consistently outperforms the baselines: average SSE is reduced by roughly 12 %, the silhouette score improves by about 0.06, and DBI drops by 0.15, indicating tighter intra‑cluster cohesion and better inter‑cluster separation. However, the hybrid method incurs a computational overhead; its runtime is approximately 1.8 times longer than K‑means++. The authors attribute this to the additional GA generations and suggest that parallelization or sampling strategies could alleviate the cost, although no concrete parallel implementation is presented.
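The three quality indices move in different directions. Lower SSE and DBI are better, while the silhouette coefficient (range -1 to 1) is better when higher. The following minimal pure-Python sketch applies the standard definitions to a list of points and a list of integer cluster labels. It is not the authors' evaluation code, and the helper names are illustrative. It assumes at least two clusters and gives singleton clusters a silhouette of 0, following Rousseeuw's convention.

```python
import math

def _dist(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def _centroids(points, labels):
    """Mean vector of each cluster, keyed by label."""
    cents = {}
    for k in set(labels):
        members = [p for p, lab in zip(points, labels) if lab == k]
        cents[k] = [sum(col) / len(members) for col in zip(*members)]
    return cents

def sse(points, labels):
    """Sum of squared distances to the own-cluster centroid (lower is better)."""
    c = _centroids(points, labels)
    return sum(_dist(p, c[lab]) ** 2 for p, lab in zip(points, labels))

def silhouette(points, labels):
    """Mean silhouette coefficient in [-1, 1] (higher is better)."""
    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        same = [_dist(p, q) for j, (q, m) in enumerate(zip(points, labels)) if m == lab and j != i]
        if not same:
            scores.append(0.0)  # singleton cluster
            continue
        a = sum(same) / len(same)  # mean intra-cluster distance
        b = min(  # mean distance to the nearest other cluster
            sum(_dist(p, q) for q, m in zip(points, labels) if m == other) / labels.count(other)
            for other in set(labels) if other != lab
        )
        scores.append(0.0 if max(a, b) == 0 else (b - a) / max(a, b))
    return sum(scores) / len(scores)

def davies_bouldin(points, labels):
    """Davies-Bouldin index (lower is better)."""
    c = _centroids(points, labels)
    # Average distance of each cluster's members to their centroid.
    scatter = {k: sum(_dist(p, c[k]) for p, lab in zip(points, labels) if lab == k) / labels.count(k) for k in c}
    worst = [max((scatter[i] + scatter[j]) / _dist(c[i], c[j]) for j in c if j != i) for i in c]
    return sum(worst) / len(worst)

pts = [[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]]
good, bad = [0, 0, 1, 1], [0, 1, 0, 1]
for name, lab in (("good", good), ("bad", bad)):
    print(name, round(sse(pts, lab), 3), round(silhouette(pts, lab), 3), round(davies_bouldin(pts, lab), 3))
```

The well-separated labelling gives SSE 1.0, a silhouette of about 0.90, and DBI 0.1. The mixed labelling raises SSE to 100 and DBI to 10 and makes the silhouette negative.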

The paper also claims that the GA‑based initialization alleviates problems caused by “multi‑sampling” and data imbalance, but it lacks systematic experiments varying class‑distribution ratios or reporting per‑cluster statistics. Moreover, GA hyper‑parameters (population size, crossover and mutation probabilities) are chosen empirically, and a sensitivity analysis is absent, which limits reproducibility and makes it difficult for practitioners to tune the method for other domains.

In summary, the study makes a valuable contribution by demonstrating that evolutionary search can effectively generate high‑quality initial centroids for K‑means, leading to more robust clustering on a real‑world biomedical data set. The integration of a local‑search K‑means step within the GA loop is a sound design choice that improves convergence without sacrificing solution quality. Nevertheless, the approach’s scalability remains a concern, and future work should focus on (1) providing detailed guidelines for GA parameter selection, (2) evaluating the method on larger, higher‑dimensional data sets with explicit parallel implementations, and (3) exploring alternative missing‑value imputation techniques to reduce potential bias. With these enhancements, the proposed GA‑K‑means framework could become a practical tool for analysts dealing with noisy, imbalanced, or large‑scale clustering problems.