Cellular Automata based adaptive resampling technique for the processing of remotely sensed imagery

Resampling techniques are widely used at different stages of satellite image processing. Existing methodologies cannot perfectly recover features from a completely undersampled image, and hence an intelligent adaptive resampling methodology is required. We address these issues and adopt an error metric from the available literature to define interpolation quality. We also propose a new resampling scheme that adapts itself to the pixel and texture variation in the image. The proposed CNN-based hybrid method has been found to perform better than the existing methods because it adapts itself to the image features.

💡 Research Summary

The paper addresses a fundamental problem in remote sensing image processing: the loss of spatial detail during resampling, which is required for tasks such as geometric correction, resolution change, and map projection. Conventional resampling methods (nearest-neighbor, bilinear, bicubic, and more sophisticated techniques such as Kriging or Lanczos) are either computationally cheap but prone to blocky artifacts, or better at preserving edges yet still unable to reconstruct fine texture when the source image is severely undersampled. To overcome these shortcomings, the authors propose an adaptive, feature-driven resampling framework that fuses Cellular Automata (CA) with a Convolutional Neural Network (CNN).

The CA component operates on a multi‑scale grid and extracts local pattern descriptors for each pixel: texture strength, edge orientation, and local variance. These descriptors are transformed into a weight map that quantifies how much confidence should be placed on each pixel during interpolation. The weight map is then fed as an additional channel into a CNN that has been trained to predict high‑resolution pixel values from low‑resolution inputs. By providing the network with both global context (through the convolutional layers) and explicit local variability (through the CA‑derived weights), the model can adapt its interpolation strategy on a per‑pixel basis.
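The weight-map idea can be sketched in a few lines. This is a minimal, dependency-free illustration, not the authors' method: the summary does not give the CA rules, so the sketch substitutes a plain statistic (variance over the 3x3 Moore neighbourhood commonly used in cellular automata) for the CA-derived descriptors. The function names `local_variance`, `weight_map`, and `stack_channels` are hypothetical.

```python
def local_variance(img, r, c):
    """Variance of the 3x3 Moore neighbourhood around (r, c), clamped at borders."""
    h, w = len(img), len(img[0])
    vals = [
        img[min(max(r + dr, 0), h - 1)][min(max(c + dc, 0), w - 1)]
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
    ]
    mean = sum(vals) / len(vals)
    return sum((v - mean) ** 2 for v in vals) / len(vals)


def weight_map(img):
    """Normalise local variance to [0, 1]; a flat image gets all-zero weights."""
    h, w = len(img), len(img[0])
    var = [[local_variance(img, r, c) for c in range(w)] for r in range(h)]
    peak = max(max(row) for row in var)
    if peak == 0:
        return [[0.0] * w for _ in range(h)]
    return [[v / peak for v in row] for row in var]


def stack_channels(img, weights):
    """Pair each pixel with its weight: an H x W x 2 input for a downstream model."""
    return [
        [(p, wgt) for p, wgt in zip(prow, wrow)]
        for prow, wrow in zip(img, weights)
    ]


# Toy 4x4 image: flat on both sides, with a vertical edge between columns 1 and 2.
image = [[0, 0, 10, 10] for _ in range(4)]
weights = weight_map(image)            # assumption: variance stands in for CA descriptors
stacked = stack_channels(image, weights)
```

In this toy case the two columns adjacent to the edge receive the highest weights and the flat outer columns receive zero, which is the kind of per-pixel variability signal the text describes being passed to the network as an extra channel.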

Training employs two standard quality metrics, Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index (SSIM), alongside an Interpolation Error Metric (IEM) that, according to the abstract, is adopted from the existing literature. IEM measures pixel-wise differences between the reconstructed image and the ground-truth high-resolution image, weighting the error according to texture class so that visually important regions (edges, high-frequency textures) contribute more to the loss. This encourages the network to prioritize perceptually critical details rather than merely minimizing average error.
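To make the difference between an average error and a texture-weighted error concrete, here is a small sketch. PSNR follows its standard definition; the weighted error is only an assumed stand-in for IEM, whose exact formula the summary does not state. The `edge_weights` values are hypothetical.

```python
import math


def mse(ref, rec, weights=None):
    """Mean squared error; with weights, a weighted mean that emphasises some pixels."""
    num = 0.0
    den = 0.0
    for r, row in enumerate(ref):
        for c, truth in enumerate(row):
            w = 1.0 if weights is None else weights[r][c]
            num += w * (truth - rec[r][c]) ** 2
            den += w
    return num / den if den else 0.0


def psnr(ref, rec, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = mse(ref, rec)
    return math.inf if err == 0 else 10.0 * math.log10(peak ** 2 / err)


reference = [[100, 100], [100, 200]]
reconstructed = [[100, 100], [100, 190]]  # one pixel is off by 10
edge_weights = [[1.0, 1.0], [1.0, 5.0]]   # hypothetical: bottom-right is an "edge" pixel

plain = mse(reference, reconstructed)                   # every pixel counts equally
weighted = mse(reference, reconstructed, edge_weights)  # the edge pixel counts 5x
```

Because the only error sits on the heavily weighted pixel, the weighted score is larger than the plain one, so a loss built this way penalises mistakes in edge or texture regions more than mistakes in flat regions.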

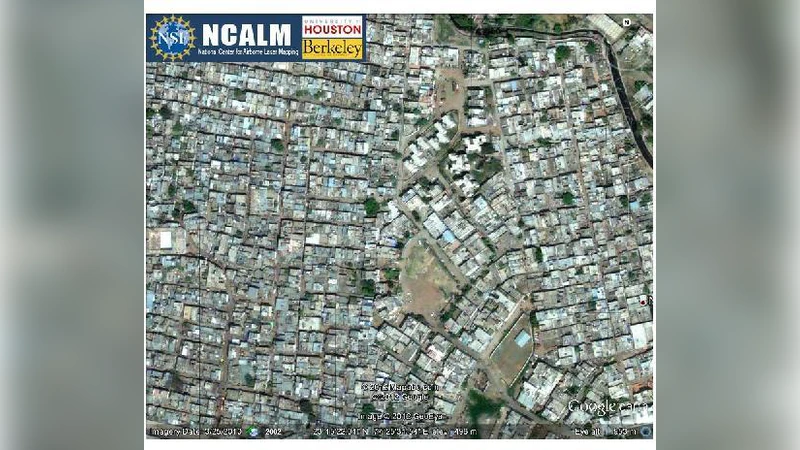

The authors evaluate the method on imagery from three satellite platforms: Landsat-8 (30 m), Sentinel-2 (10 m), and WorldView-3 (0.31 m). For each sensor, they artificially down-sample the images, apply the proposed resampling, and compare the results against nearest-neighbor, bilinear, and bicubic interpolation and a state-of-the-art deep-learning super-resolution model. Quantitatively, the CA-CNN hybrid improves PSNR by an average of 1.8 dB and SSIM by 0.04 over bicubic interpolation, while reducing the IEM score by more than 12%. Qualitatively, the method preserves sharp edges in urban and mountainous regions and retains fine texture in agricultural fields without the over-smoothing typical of many deep-learning approaches.
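The down-sample, resample, and compare protocol can be sketched for the two simplest baselines. This is an illustrative harness under stated assumptions, not the paper's pipeline: it uses a synthetic 8x8 ramp in place of satellite imagery, block averaging as the down-sampling operator, a factor of 2, and only PSNR as the score. All function names are hypothetical.

```python
import math


def downsample(img, f=2):
    """Block-average by factor f (image sides assumed divisible by f)."""
    return [
        [
            sum(img[r * f + i][c * f + j] for i in range(f) for j in range(f)) / (f * f)
            for c in range(len(img[0]) // f)
        ]
        for r in range(len(img) // f)
    ]


def upsample_nearest(img, f=2):
    """Replicate each low-resolution pixel into an f x f block."""
    return [
        [img[r // f][c // f] for c in range(len(img[0]) * f)]
        for r in range(len(img) * f)
    ]


def upsample_bilinear(img, f=2):
    """Bilinear interpolation with pixel-centre alignment and border clamping."""
    h, w = len(img), len(img[0])
    out = []
    for r in range(h * f):
        y = min(max((r + 0.5) / f - 0.5, 0.0), h - 1.0)
        y0 = int(y)
        y1 = min(y0 + 1, h - 1)
        ty = y - y0
        row = []
        for c in range(w * f):
            x = min(max((c + 0.5) / f - 0.5, 0.0), w - 1.0)
            x0 = int(x)
            x1 = min(x0 + 1, w - 1)
            tx = x - x0
            top = img[y0][x0] * (1 - tx) + img[y0][x1] * tx
            bot = img[y1][x0] * (1 - tx) + img[y1][x1] * tx
            row.append(top * (1 - ty) + bot * ty)
        out.append(row)
    return out


def psnr(ref, rec, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    n = len(ref) * len(ref[0])
    err = sum((a - b) ** 2 for ra, rb in zip(ref, rec) for a, b in zip(ra, rb)) / n
    return math.inf if err == 0 else 10.0 * math.log10(peak ** 2 / err)


# Synthetic 8x8 "ground truth": a smooth horizontal ramp (stand-in for real imagery).
truth = [[float(16 * c) for c in range(8)] for _ in range(8)]
low = downsample(truth)
scores = {
    "nearest": psnr(truth, upsample_nearest(low)),
    "bilinear": psnr(truth, upsample_bilinear(low)),
}
```

On this smooth ramp, bilinear interpolation scores a higher PSNR than nearest-neighbor replication. Any candidate resampler can be added to `scores` in the same way, as long as it is compared against the same held-back ground truth.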

Key contributions of the work include: (1) a systematic way to encode local image variability using Cellular Automata, (2) a hybrid architecture that merges CA-derived weights with CNN feature learning, (3) the use of the IEM to better align optimization with human visual perception, and (4) extensive cross-sensor validation that demonstrates the method's robustness and generalizability.

The paper also acknowledges limitations. The hybrid model requires a substantial amount of labeled high‑resolution data for training, and its inference time and memory footprint are higher than traditional interpolation schemes, which may hinder real‑time or onboard processing. Future research directions suggested by the authors involve model compression, transfer learning to reduce data requirements, and hardware acceleration (e.g., FPGA or GPU‑optimized kernels) to make the approach feasible for operational remote‑sensing pipelines.

In summary, by integrating Cellular Automata’s ability to capture local structural cues with the powerful representation learning of CNNs, the proposed adaptive resampling technique offers a significant step forward in preserving spatial detail during image up‑sampling. It delivers both objective metric improvements and perceptually superior visual results, making it a promising tool for a wide range of remote‑sensing applications that demand high‑fidelity image reconstruction.