Compressive Mining: Fast and Optimal Data Mining in the Compressed Domain

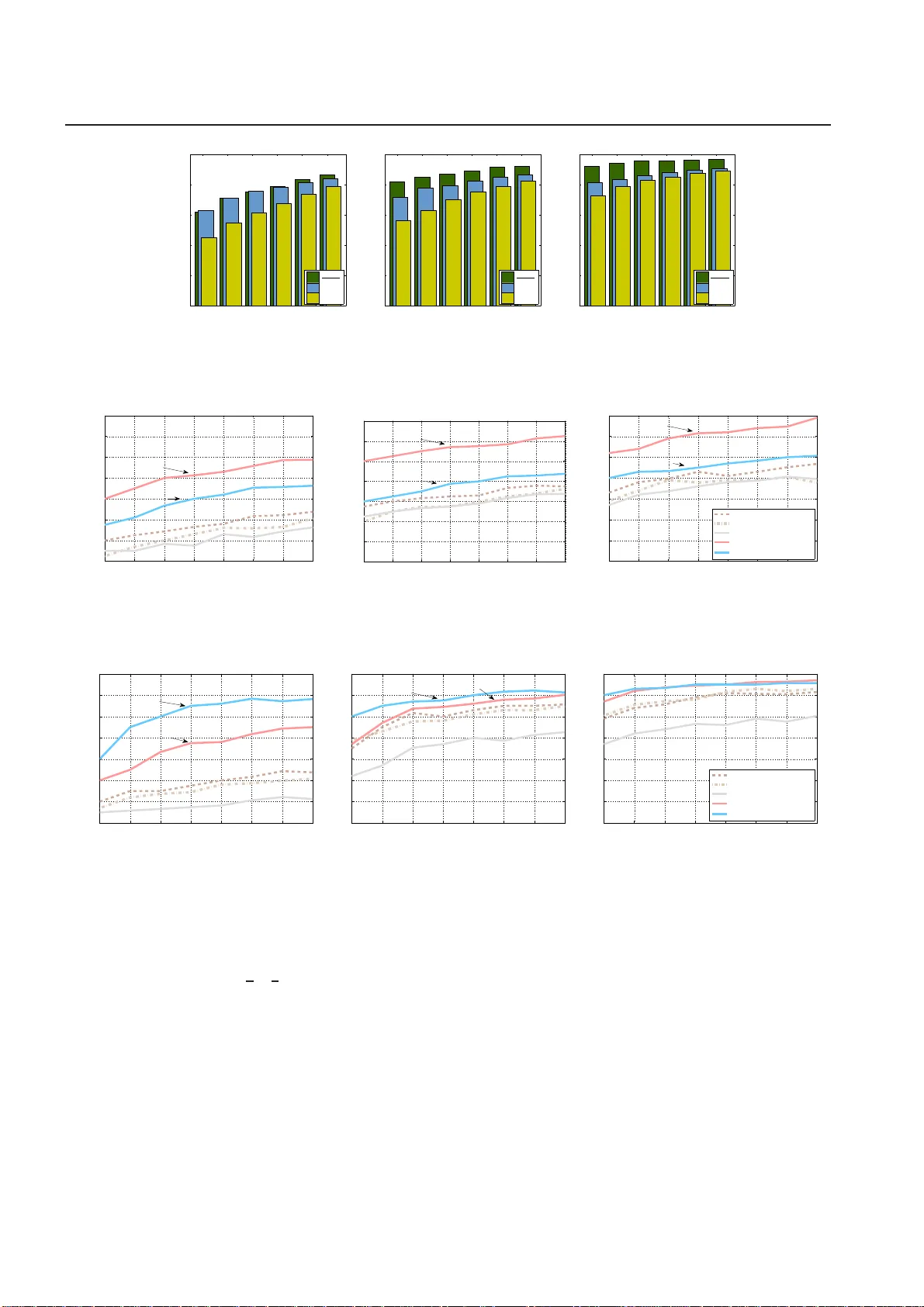

Real-world data typically contain repeated and periodic patterns. This suggests that they can be effectively represented and compressed using only a few coefficients of an appropriate basis (e.g., Fourier or wavelets). However, distance estimatio…

Authors: Michail Vlachos, Nikolaos Freris, Anastasios Kyrillidis