Partitioning Networks with Node Attributes by Compressing Information Flow

Real-world networks are often organized as modules or communities of similar nodes that serve as functional units. These networks are also rich in content, with nodes having distinguishing features or attributes. In order to discover a network’s modular structure, it is necessary to take into account not only its links but also node attributes. We describe an information-theoretic method that identifies modules by compressing descriptions of information flow on a network. Our formulation introduces node content into the description of information flow, which we then minimize to discover groups of nodes with similar attributes that also tend to trap the flow of information. The method has several advantages: it is conceptually simple and does not require ad-hoc parameters to specify the number of modules or to control the relative contribution of links and node attributes to network structure. We apply the proposed method to partition real-world networks with known community structure. We demonstrate that adding node attributes helps recover the underlying community structure in content-rich networks more effectively than using links alone. In addition, we show that our method is faster and more accurate than alternative state-of-the-art algorithms.

💡 Research Summary

The paper introduces a novel community‑detection framework called the Content Map Equation (CME), which extends the original Map Equation of Rosvall and Bergstrom by incorporating node attributes into the description of information flow on a network. Traditional community‑detection methods focus solely on the topology of links, treating all nodes as indistinguishable except for their connectivity. While several recent approaches have attempted to blend attribute information, they typically require ad‑hoc parameters to balance the influence of links versus attributes, or they rely on generative models that are computationally intensive and often limited to low‑dimensional attribute spaces.

CME addresses these shortcomings by treating links and attributes on an equal footing within an information‑theoretic compression paradigm. The authors first recall the Map Equation, which models a random walk on a graph and assigns codewords to nodes and modules. The expected description length of a step in an infinite random walk is L(M) = q·H(Q) + ∑_{i=1}^{m} p_i·H(P_i), where m is the number of modules, q is the total probability of exiting modules, H(Q) the entropy of module‑exit events, p_i the probability of being inside module i, and H(P_i) the entropy of movements within that module. Minimizing L(M) yields a partition where information flow is most efficiently compressed, naturally revealing densely connected communities.
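The description length can be evaluated directly for a small graph. The following is a minimal sketch, assuming an undirected, unweighted graph, so that the stationary visit rate of a node is its degree divided by twice the number of edges. It follows the standard two-level Map Equation of Rosvall and Bergstrom, in which each module codebook also contains an exit codeword; the summary's wording for p_i glosses over that detail. The names `entropy_bits` and `map_equation` are illustrative, not taken from the paper.

```python
import math
from collections import defaultdict


def entropy_bits(weights):
    """Shannon entropy in bits of the distribution obtained by normalizing weights."""
    total = sum(weights)
    if total <= 0:
        return 0.0
    return -sum((w / total) * math.log2(w / total) for w in weights if w > 0)


def map_equation(edges, module_of):
    """Two-level description length L(M) for an undirected, unweighted graph."""
    degree = defaultdict(int)
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    two_m = 2 * len(edges)
    # Stationary visit rate of each node under a random walk.
    visit = {node: d / two_m for node, d in degree.items()}

    # Exit rate of each module: every edge crossing a module boundary
    # carries flow 1 / (2 * |E|) out of each of its two end modules.
    exit_rate = defaultdict(float)
    for u, v in edges:
        if module_of[u] != module_of[v]:
            exit_rate[module_of[u]] += 1 / two_m
            exit_rate[module_of[v]] += 1 / two_m

    members = defaultdict(list)
    for node in visit:
        members[module_of[node]].append(node)

    # Index codebook term: q * H(Q).
    q = sum(exit_rate.values())
    length = q * entropy_bits(list(exit_rate.values()))
    # Module codebook terms: p_i * H(P_i), exit codeword included.
    for mod, nodes in members.items():
        weights = [exit_rate[mod]] + [visit[n] for n in nodes]
        length += sum(weights) * entropy_bits(weights)
    return length


# Two triangles joined by a single bridge edge (hypothetical toy graph).
toy_edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
one_module = {n: 0 for n in range(6)}
two_modules = {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}
print(map_equation(toy_edges, one_module))
print(map_equation(toy_edges, two_modules))
```

On this toy graph, splitting along the bridge gives a shorter description than keeping all nodes in one module, which is the behavior the objective is meant to reward.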

To embed node attributes, each node α is associated with a normalized attribute vector x_α (∑_j x_{αj} = 1). For a given module i, the authors compute a weighted sum of the attribute vectors of its constituent nodes, x^{(i)}_j = ∑_{α∈i} p_α x_{αj}, where p_α is the stationary visit probability of node α in the random walk. The entropy of this attribute distribution, H(X_i) = −∑_j (x^{(i)}_j / p^{(i)}) log₂ (x^{(i)}_j / p^{(i)}), quantifies the amount of information needed to describe the attribute state when the walk is inside module i. Adding this term, weighted by the module’s visitation probability p^{(i)} = ∑_{α∈i} p_α, leads to the Content Map Equation:

L_C(M) = q·H(Q) + ∑_{i=1}^{m} p_i·H(P_i) + ∑_{i=1}^{m} p^{(i)}·H(X_i).

Because the attribute entropy term is naturally scaled by visitation frequency, no extra hyper‑parameter is required to balance structural and attribute contributions. The optimal partition is obtained by minimizing L_C(M).
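The attribute term of L_C(M) can be computed on its own from the definitions above. The following is a minimal sketch, assuming that visit probabilities are already known and that each node's attribute vector is stored as a dictionary that sums to 1. The function name `content_term` and the toy data are hypothetical.

```python
import math
from collections import defaultdict


def content_term(visit_prob, attributes, module_of):
    """Sum over modules of p^(i) * H(X_i), in bits."""
    module_prob = defaultdict(float)                        # p^(i)
    module_attr = defaultdict(lambda: defaultdict(float))   # x^(i)_j
    for node, p in visit_prob.items():
        mod = module_of[node]
        module_prob[mod] += p
        for feature, x in attributes[node].items():
            module_attr[mod][feature] += p * x

    total = 0.0
    for mod, p_mod in module_prob.items():
        entropy = 0.0
        for x in module_attr[mod].values():
            if x > 0:
                frac = x / p_mod          # normalized attribute weight
                entropy -= frac * math.log2(frac)
        total += p_mod * entropy
    return total


# Four equally visited nodes: two purely "red", two purely "blue".
visit_prob = {"a": 0.25, "b": 0.25, "c": 0.25, "d": 0.25}
attributes = {
    "a": {"red": 1.0}, "b": {"red": 1.0},
    "c": {"blue": 1.0}, "d": {"blue": 1.0},
}
pure = {"a": 0, "b": 0, "c": 1, "d": 1}
mixed = {"a": 0, "c": 0, "b": 1, "d": 1}
print(content_term(visit_prob, attributes, pure))
print(content_term(visit_prob, attributes, mixed))
```

Modules whose members share the same attributes add nothing to the description length, while modules that mix attributes pay an entropy cost in proportion to how often the walk visits them. This is why no separate weighting parameter is needed.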

The paper proposes two optimization strategies. The first is a greedy bottom‑up approach identical to the original Map Equation algorithm: each node starts in its own module, and pairs of modules are merged whenever the merge reduces L_C. This method, while simple, scales poorly (approximately O(k·m²), where k is the number of iterations and m the current number of modules). To overcome this limitation, the authors design a top‑down search with random restarts. The network is initially divided into a modest number of large modules (e.g., via a coarse spectral split). Each large module is then recursively refined: nodes are moved between modules, and modules are split or merged, with only those changes that lower L_C being accepted. Multiple random initializations mitigate the risk of getting trapped in poor local minima, and the algorithm exhibits near‑linear scaling on large graphs.
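The greedy bottom‑up strategy can be sketched in a few lines. The following is a minimal illustration rather than the authors' implementation. It assumes an undirected, unweighted graph and uses only the structural part of the objective, written in the standard expanded form of the Map Equation; the attribute term would be added to the cost in the same way. The names `plogp`, `description_length`, and `greedy_merge` are hypothetical.

```python
import math
from itertools import combinations


def plogp(x):
    """x * log2(x), with the convention 0 * log2(0) = 0."""
    return x * math.log2(x) if x > 0 else 0.0


def description_length(edges, module_of):
    """Expanded two-level Map Equation for an undirected, unweighted graph."""
    two_m = 2 * len(edges)
    degree, exit_rate, inside = {}, {}, {}
    for u, v in edges:
        degree[u] = degree.get(u, 0) + 1
        degree[v] = degree.get(v, 0) + 1
        if module_of[u] != module_of[v]:
            for n in (u, v):
                mod = module_of[n]
                exit_rate[mod] = exit_rate.get(mod, 0.0) + 1 / two_m
    node_term = 0.0
    for n, d in degree.items():
        inside[module_of[n]] = inside.get(module_of[n], 0.0) + d / two_m
        node_term += plogp(d / two_m)
    q = sum(exit_rate.values())
    return (plogp(q)
            - 2 * sum(plogp(x) for x in exit_rate.values())
            - node_term
            + sum(plogp(exit_rate.get(m, 0.0) + p) for m, p in inside.items()))


def greedy_merge(nodes, cost):
    """Start from singletons; apply the best cost-reducing pairwise merge until none is left."""
    module_of = {n: i for i, n in enumerate(nodes)}
    best = cost(module_of)
    while True:
        labels = sorted(set(module_of.values()))
        candidate, candidate_cost = None, best
        # Every pair of modules is tried, which is the quadratic step.
        for a, b in combinations(labels, 2):
            trial = {n: (a if m == b else m) for n, m in module_of.items()}
            c = cost(trial)
            if c < candidate_cost - 1e-12:
                candidate, candidate_cost = trial, c
        if candidate is None:
            return module_of, best
        module_of, best = candidate, candidate_cost


# Two triangles joined by a bridge edge (hypothetical toy graph).
toy_edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
partition, length = greedy_merge(
    list(range(6)), lambda m: description_length(toy_edges, m))
print(partition, length)
```

Each pass evaluates every pair of current modules, which is where the quadratic cost per iteration comes from. Because only improving merges are accepted, the search stops at a local minimum that depends on the merge order.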

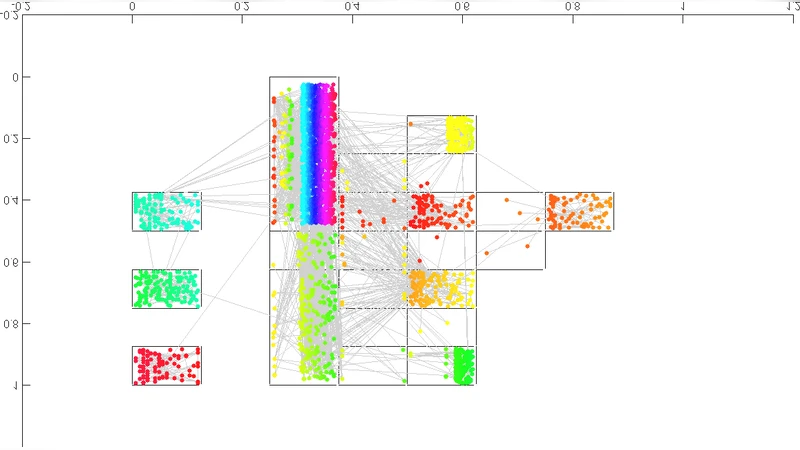

Empirical evaluation is conducted on several real‑world, attribute‑rich datasets, including political blog networks, citation graphs with textual abstracts, and social media interaction graphs with user profile features. The authors compare CME (both bottom‑up and top‑down variants) against: (1) the original Map Equation (link‑only), (2) generative mixed‑membership stochastic block models extended with attributes, (3) hybrid methods that first construct attribute‑similarity links and then apply traditional community detection, and (4) matrix‑factorization approaches that jointly factorize adjacency and attribute matrices. Performance metrics include Normalized Mutual Information (NMI) against known ground‑truth communities, precision/recall, and runtime.
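For reference, NMI between a detected partition and the ground truth can be computed as follows. The summary does not say which normalization the authors use, so this sketch assumes the common arithmetic-mean form, NMI = 2·I(A;B) / (H(A) + H(B)). The function name `nmi` is illustrative.

```python
import math
from collections import Counter


def nmi(labels_a, labels_b):
    """Normalized mutual information between two labelings of the same nodes."""
    n = len(labels_a)
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    joint = Counter(zip(labels_a, labels_b))

    h_a = -sum(c / n * math.log2(c / n) for c in count_a.values())
    h_b = -sum(c / n * math.log2(c / n) for c in count_b.values())
    mutual = sum(
        c / n * math.log2(c * n / (count_a[a] * count_b[b]))
        for (a, b), c in joint.items()
    )
    if h_a + h_b == 0:
        # Both labelings put every node in a single group.
        return 1.0
    return 2 * mutual / (h_a + h_b)


truth = [0, 0, 1, 1]
print(nmi(truth, [1, 1, 0, 0]))   # same grouping, different label names
print(nmi(truth, [0, 1, 0, 1]))   # grouping independent of the truth
```

The score does not depend on how modules are labeled, so a detected partition that matches the ground truth up to renaming scores 1, while a partition that is statistically independent of the ground truth scores 0.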

Results show that CME consistently outperforms link‑only Map Equation, achieving an average NMI improvement of 10–15 %. The advantage is most pronounced when attribute similarity aligns with community structure, confirming that the attribute entropy term effectively guides the partition toward semantically coherent groups. Compared with generative and hybrid baselines, CME attains comparable or higher NMI while requiring substantially less computational time. Notably, the top‑down algorithm reduces runtime by a factor of 5–10 relative to the greedy bottom‑up method, enabling the analysis of networks with hundreds of thousands of nodes and millions of edges within minutes.

The authors discuss several strengths of their approach: (i) parameter‑free operation eliminates the need for cross‑validation or manual tuning; (ii) the compression‑based objective provides a clear, interpretable criterion for community quality; (iii) the method naturally extends to multi‑dimensional attribute spaces without modification. Limitations include sensitivity to the preprocessing of attributes (e.g., choice of weighting scheme, dimensionality reduction) and potential redundancy when attributes are highly correlated, which can inflate the attribute entropy term. The current formulation assumes static networks; extending CME to dynamic or streaming settings, where both topology and attributes evolve, is identified as future work.

In conclusion, the Content Map Equation offers a conceptually simple yet powerful framework for community detection in content‑rich networks. By unifying structural and attribute information through an information‑theoretic compression lens, it delivers high‑quality partitions without the burden of hyper‑parameter selection and scales efficiently to large real‑world graphs. This work opens avenues for applying compression‑based reasoning to other network‑analysis tasks, such as anomaly detection, hierarchical clustering, and visualization of heterogeneous graph data.
