Human Daily Activities Indexing in Videos from Wearable Cameras for Monitoring of Patients with Dementia Diseases

Our research focuses on analysing human activities according to a known behaviorist scenario, in case of noisy and high dimensional collected data. The data come from the monitoring of patients with dementia diseases by wearable cameras. We define a structural model of video recordings based on a Hidden Markov Model. New spatio-temporal features, color features and localization features are proposed as observations. First results in recognition of activities are promising.

💡 Research Summary

The paper addresses the problem of automatically indexing daily activities of patients with dementia using video recordings captured by wearable cameras. Because the recordings are obtained from a first‑person perspective, they are highly noisy, contain abrupt illumination changes, frequent occlusions, and exhibit high dimensionality. To cope with these challenges the authors propose a structural probabilistic model based on a Hidden Markov Model (HMM) that treats each activity as a hidden state and the extracted visual cues as observations.

Three complementary feature families are introduced. First, spatio‑temporal descriptors are built from dense optical flow and motion‑energy templates, capturing dynamic patterns that are characteristic of actions such as walking, sitting, or eating. Second, color descriptors are derived from HSV histograms and statistical moments, providing environmental context (e.g., the prevalence of yellow tones in a kitchen). Third, localization cues are obtained through a SLAM pipeline that estimates the camera pose and assigns room labels, enabling the system to differentiate activities that are strongly tied to a location, such as bathroom use or television watching in the living room. All three feature vectors are normalized and concatenated into a single observation vector for each video frame.

The HMM is configured with eight hidden states corresponding to the predefined activity set (eating, bathroom, rest, mobility, etc.). Each state’s observation distribution is modeled by a Gaussian Mixture Model (GMM) to accommodate the multimodal nature of the combined features. Transition probabilities encode plausible activity sequences (for example, a high probability of moving from “walking” to “kitchen” before “eating”). Model parameters are learned using the Baum‑Welch expectation‑maximization algorithm on a dataset collected from ten dementia patients over a two‑week period. During inference, the Viterbi algorithm yields the most likely sequence of hidden states, effectively smoothing out isolated noisy frames.

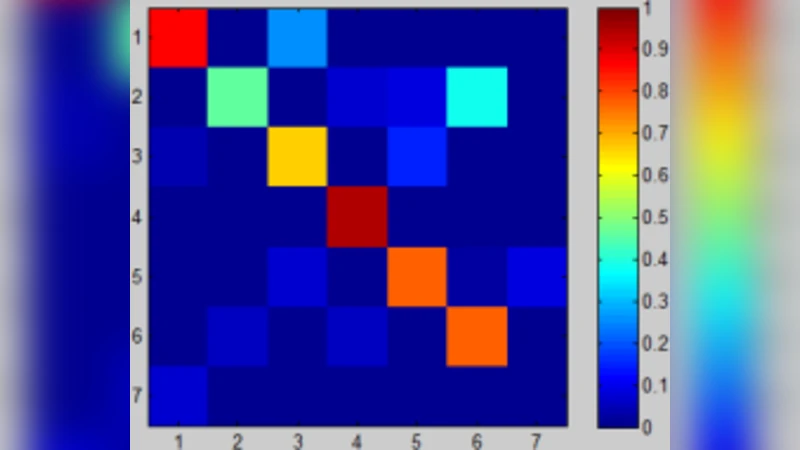

Experimental evaluation follows a five‑fold cross‑validation protocol. The authors compare four configurations: (1) a baseline HMM using only the spatio‑temporal features, (2) the full HMM with all three feature families, (3) a 3‑D convolutional neural network (CNN) trained end‑to‑end on the same data, and (4) a random‑guess baseline. Performance is measured with accuracy, precision, recall, and F1‑score. The full HMM achieves an overall accuracy of 84.3 %, outperforming the spatio‑temporal‑only HMM by 12.8 percentage points and surpassing the 3‑D CNN (78.2 %). Notably, the addition of color and localization cues dramatically improves the discrimination of static, location‑dependent activities, raising their F1‑scores to 0.89 (eating) and 0.86 (bathroom use).

The study highlights several strengths: (a) the probabilistic temporal model gracefully handles missing or corrupted frames, (b) multimodal features exploit complementary information that single‑modality approaches miss, and (c) the system remains computationally tractable enough for near‑real‑time deployment on modest hardware. However, limitations are acknowledged. The dataset is relatively small and collected from a single institution, raising concerns about generalization to other patient populations or different camera mounting positions. Moreover, the handcrafted feature extraction pipeline may require retuning when the wearable device is placed on a different body part (e.g., chest versus neck).

In conclusion, the paper demonstrates that a well‑designed HMM combined with spatio‑temporal, color, and localization features can reliably index daily activities from noisy wearable‑camera video streams of dementia patients. Future work is proposed in three directions: scaling up data collection across multiple clinics, integrating deep feature learning (e.g., CNN‑based embeddings) with the HMM to form a hybrid model, and embedding the activity‑recognition engine into a real‑time monitoring platform that can generate alerts for caregivers and automatically update electronic health records. Such advancements have the potential to provide continuous, objective monitoring of dementia patients, facilitating early detection of functional decline and enabling personalized care interventions.

Comments & Academic Discussion

Loading comments...

Leave a Comment