Learning modular structures from network data and node variables

A standard technique for understanding underlying dependency structures among a set of variables posits a shared conditional probability distribution for the variables measured on individuals within a group. This approach is often referred to as modu…

Authors: Elham Azizi, James E. Galagan, Edoardo M. Airoldi

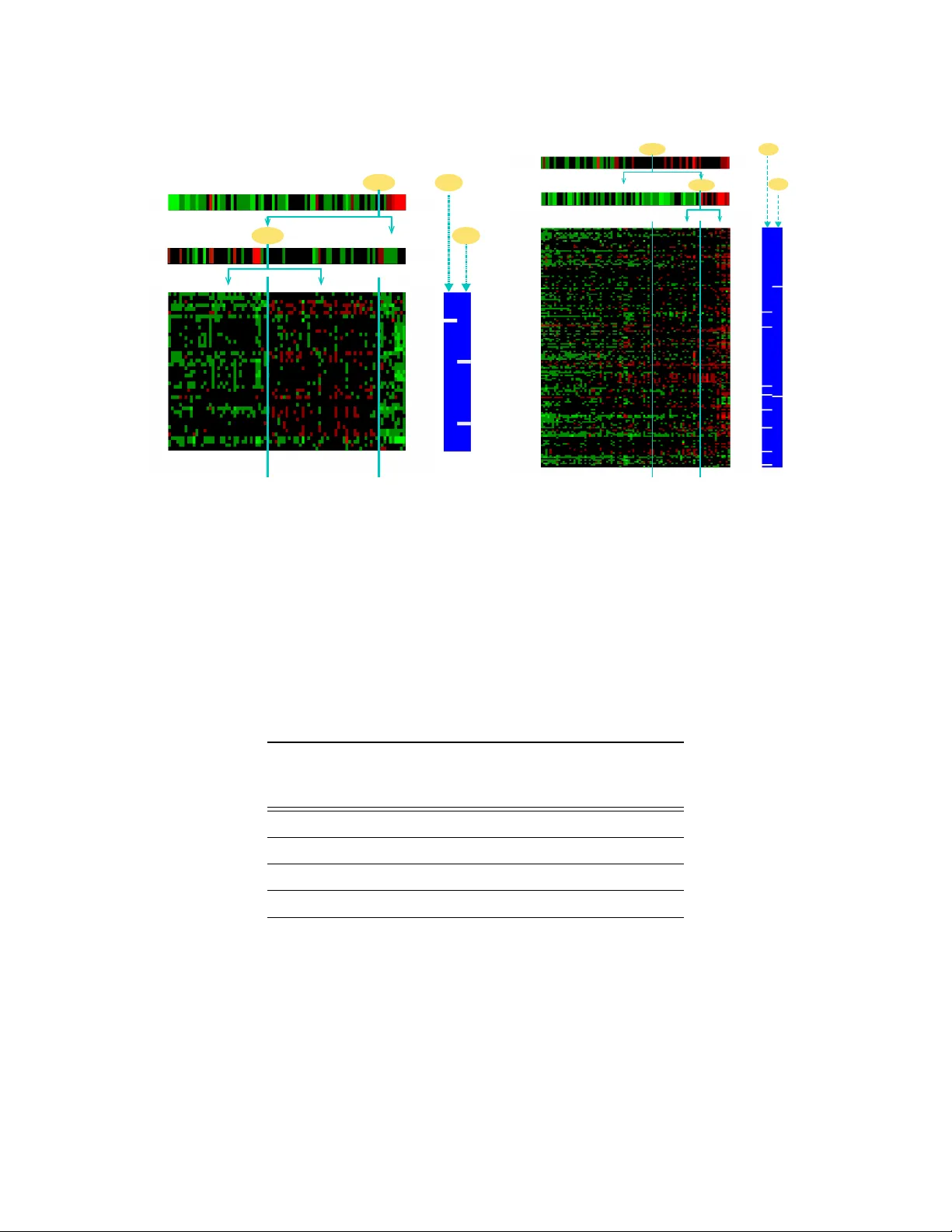

Learning Modular Structur es fr om Network Data and Node V ariables Elham Azizi E L H A M @ B U . E D U James E. Galagan J G A L AG @ B U . E D U Departments of Biomedical Engineering and Micr obiology Boston University Boston, MA 02215, USA Edoardo M. Airoldi A I RO L D I @ FA S . H A RV A R D . E D U Department of Statistics Harvar d University Cambridge, MA 02138, USA Abstract A standard technique for understanding underlying dependency structures among a set of v ariables posits a shared conditional probability distrib ution for the v ariables measured on individuals within a group. This approach is often referred to as module networks, where individuals are represented by nodes in a network, groups are termed modules, and the focus is on estimating the network structure among modules. Howe ver , estimation solely from node-specific variables can lead to spurious dependencies, and unv erifiable structural assumptions are often used for regularization. Here, we propose an extended model that leverages direct observations about the network in ad- dition to node-specific variables. By integrating complementary data types, we av oid the need for structural assumptions. W e illustrate theoretical and practical significance of the model and de- velop a reversible-jump MCMC learning procedure for learning modules and model parameters. W e demonstrate the method accuracy in predicting modular structures from synthetic data and ca- pability to learn influence structures in twitter data and regulatory modules in the Mycobacterium tuber culosis gene regulatory network. Keyw ords: Module Networks, Blockmodels, Gene Regulatory Networks, ChIP-Seq, Rev ersible- Jump MCMC, Data Integration 1. Introduction There is considerable interest in modeling dependency structures in a variety of applications. Exam- ples include reconstructing regulatory relationships from gene expression data in gene networks or identifying influence structures from activity patterns such as purchases, posts, tweets, etc in social networks. Common approaches for learning dependencies include using Bayesian networks and factor analysis (K oller and Friedman, 2009). Module networks (Segal et al., 2005, 2003) have been widely used to find structures (e.g. gene regulation) between groups of nodes denoted as modules, based on measurements of node-specific v ariables in a network (e.g. gene expression). The motiv ation lies in that nodes that are influenced or regulated by the same parent node(s), have the same conditional probabilities for their variables. For example, in gene regulatory networks, groups of genes respond in concert under certain en viron- mental conditions (Qi and Ge, 2006) and are thus likely to be regulated by the same mechanism. In 1 other domains, such as social networks, communities with similar interests or affiliations may hav e similar activity in communicating messages in response to news-outbreaks or similar purchases in response to marketing adv ertisements (Kozinets, 1999; Aral et al., 2009). Ho wever , inferring dependencies merely from node-specific variables can lead to higher rate of false positives (Michoel et al., 2007). For example, a dependency might be inferred between two unrelated nodes due to existing confounding v ariables. This can introduce arbitrary or too many parents for a module. T o a void over -fitting in inferring module networks, additional structural assumptions such as setting the maximum number of modules or maximum number of parents per module may be required. This in turn presents additional inducti ve bias and results become sensitiv e to assumptions. Moreover , searching through the entire set of candidate parents for each module is computationally infeasible. a b d c e a b d c e a b d c e Context (i) (iii) (ii) P a1: A c tiv a t or P a2: Repr essor Node V ariables γ Hi 1 γ Lo 1 γ Lo 2 γ Hi 2 γ Hi 1 + + + µ Pa2 c µ Pa1 c µ M4 c 0 0 0 Combinatorial Program > 0 < 0 γ Hi 2 Model for variables π 4 1 π 4 2 Network Data Model for network data b a e d M 4 b a b c M 3 M 4 M 1 M 2 a d e Network Data ( B ) Node V ariable Data ( X ) (i) (ii) (iii) Node V ariables Model Network Data Model Modular Structures e d Figure 1: Illustration of proposed model: Modular structures are learned from node variables (e.g. gene expression) and network data (e.g. protein-DN A interactions). Node variables are color-coded ranging from green (lo w) to red (high). A number of parents are assigned to each module (orange links). A combinatorial program is inferred for each module; example sho wn for module M 4 . Alternati vely , we can take advantage of existing network data and by integrating node interac- tions with node v ariables, we can avoid structural assumptions. For example, to learn gene regula- tory networks, we can use protein-DN A interaction data, which shows physical interactions between proteins of genes (known as Transcription Factors) with promoter regions of other genes, leading to regulation of transcription (and expression) of the latter genes. This data can be measured using chromatin immunoprecipitation of DN A-bound proteins, i.e. ChIP-ChIP or ChIP-Seq technologies, which hav e shown to be informati ve of regulation (Galagan et al., 2013; Liu et al., 2013; Celniker et al., 2009; Y eang et al., 2004). As another e xample, to learn influence structures in a twitter network, we can inte grate the network of who-follows-who with measurements of users acti vities. Identifying modules or block structures from network data has been well-studied, e.g., using stochastic blockmodels (W ang and W ong, 1987; Snijders and Nowicki, 1997; Airoldi et al., 2008, 2013a) in the area of social network modeling (Goldenber g et al., 2009; Azari Soufiani and Airoldi, 2012; Choi et al., 2012). Stochastic blockmodels assume that nodes of a network are members 2 of latent blocks, and describe their interactions with other nodes with a parametric model. Ho w- e ver , models for inferring modular structures from both data node variables and network data are relati vely unexplored and of interest in man y applications. 1.1 Contributions In this paper , we propose an inte grated probabilistic model inspired by module netw orks and stochas- tic blockmodels, to learn dependency structures from the combination of network data and node v ariables. W e consider network data in terms of directed edges (interactions) and model network data using stochastic blockmodels. Intuitively , by incorporating complementary data types, a node which is likely to hav e directed edges to members of a module as well as correlation with variables of module will be assigned as parent. The use of network data enhances computational tractability and scalability of the method by restricting the space of possible dependency structures. W e also sho w theoretically that the integration of network data leads to model identifiability , whereas node v ariables alone can not. Our model captures two types of relationships between variables of modules and their parents, including small changes of variables due to global dependency structure and condition-specific large ef fects on v ariables based on parent activities in each condition. Based on these relationships, we infer a combinatorial program (Y eang and Jaakkola, 2006; Segal et al., 2003) for each module, sho wing how multiple parents interact in regulating the module. For estimation of parameters, we use a Gibbs sampler instead of the deterministic algorithm employed by Segal et al. to overcome some of the problems regarding multi-modality of model likelihood (Joshi et al., 2009). W e also solve the problem of sensitivity to choice of maximum number of modules using a rev ersible-jump MCMC method which infers the number of modules and parents based on data. The probabilistic frame work infers posterior distributions of assignments of nodes to modules and thus does not face restrictions of non-overlapping modules (Airoldi et al., 2008, 2013b). 1.2 Related W ork Other works have also proposed inte grating different data types, mostly as prior information, for im- prov ement in learning structures (W erhli and Husmeier, 2007; Imoto et al., 2003; Mitra et al., 2013). Assumptions such as sparse priors hav e been used in other works to improv e modeling of network interactions between groups of nodes (Y an et al., 2012). Our approach is dif ferent in that we con- sider additional data types also as observ ations from a model of dependency structures. Our model thus considers both network edges and node variables as data observed from the same underlying structure, providing more flexibility for the model. Moreover , we utilize data integration to identify structures between groups of nodes (modules) as opposed to individual nodes. Despite the similar- ity in the framew ork of our model to module networks, our model for variables has differences in relating modules to their parents, giving more accurate and interpretable dependencies. Also, the integration of network data is nov el. Regarding the learning procedure, prior work has been done on improving module network inference by using a Gibbs sampling approach (Joshi et al., 2009). W e take a step further and use a re versible-jump MCMC procedure to learn the number of modules and parents from data as well as parameter posteriors. Our method can also allo w restricting the number of modules based on context, with a narrow prior . By adjusting this prior, we ha ve multi-resolution module detection. 3 2. Model of Modular Structures In the framew ork of module networks, dependencies are learned from profiles of node variables (e.g. gene expressions) for each node (e.g. gene), as random variables { X 1 , ..., X N } . The idea is that a group of nodes with common parents (e.g. co-regulated genes) are represented as a module and have similar probability distributions for their variables conditioned on their shared parents (regulators). Figure 1 sho ws a toy example where node variable data are sho wn in green-to-red heatmaps and network data with dashed arrows (Airoldi, 2007). A module assignment function A maps nodes { 1 , ..., N } to K non-overlapping modules. A dependency structure function S assigns a set of parents P a j from { 1 , ..., R } kno wn candidate parents (possible regulators/influencers), which are a subset of the N nodes, to module M j (figure 1). In the to y example, nodes d, e are assigned to the same module M 4 and b, a are assigned as their parents. In cases where multiple parents dri ve a module, e.g. a, b af fecting M 4 , combinatorial ef fects are represented as a decision tree (regulatory program) and each combination of parents activities, defined as a context, is assigned to a cluster of conditions (experiments). In figure 1, parent b has an acti vating effect while a represses M 4 , hence, e, d are acti ve in context ( ii ) where only b is activ e and a is not. Inferring this decision tree in the context of different applications sho ws how multiple parents act together in influencing a group of nodes, e.g. in a gene network, multiple transcription-factor (TF) proteins act as regulators together to express a group of genes. Gi ven this frame work, our model considers v ariables and network data as two types of observ a- tion from the same underlying modular structure. This structure is encoded based on assignments to modules ( A ) and parents for each module ( S ). In the example of gene networks, in each module, TF-gene interactions are likely to be observed between TFs and upstream regions of genes in the module while combinations of expressions of TFs e xplain expressions of genes. 2.1 Modeling Node V ariables W e model variables for nodes { 1 , ..., N } in each condition or sample c ∈ 1 , ..., C with a multiv ariate normal represented as X c ∼ N ( µ c , Σ) , where X c is a N × 1 vector , with N being the total number of nodes. The cov ariance and mean capture two dif ferent aspects of the model regarding global dependency structures and conte xt-specific effects of parents, respecti vely , as described belo w . W e define the cov ariance Σ to be independent of conditions and representing the strength of potential ef fects of one variable upon another, if the former is assigned as a parent of the mod- ule containing the latter . In the example of gene expressions, Σ may represent the af finity of a T ranscription-Factor protein to a target gene promoter . The modular dependencies between v ari- ables imposes a structure on Σ . T o construct this structure, we relate node v ariables to their parents through a re gression X c = W X c + where = N ( m c , I ) . W is a N × N sparse matrix in which element W nr is nonzero if variable r is assigned as a parent of the module containing variable n . Here we assume W nr has the same v alue for ∀ n ∈ M k , ∀ r ∈ P a k , which leads to identifiability of model (as explained in section 6. Then, assuming I − W is in vertible, X c = ( I − W ) − 1 which implies Σ = ( I − W ) − T ( I − W ) − 1 . Therefore, we impose the modular dependency structure over Σ through W , which is easier to interpret based on A , S assignments. W e define v ariable means µ c , based on parents as described below . First, based on the modular structure of nodes, we can partition the mean vector as µ c = [ µ 1 c ... µ K c ] T , where each µ k c for k = 1 , ..., K is a 1 × N k vector with N k equal to the number of nodes in module k . In modules where there is more than one parent assigned, combinations of dif ferent activities of parents, creating a 4 W X Σ µ c c µ c R π r k B r n Γ c C R k N k K Z Figure 2: Graphical repr esentation of model: The assignments of nodes to modules A and par- ents for modules S represent modular dependency structures, from which we observe node variables X c in each condition c and network data B r → n between a parent r and a node n . Means of node variables µ c are determined from parent means µ R c with mixing coef ficients Γ determined based on parent split-points Z . context, can lead to different ef fects. The binary state of parent r ∈ P a k is defined by comparing its mean to a split-point z r k , corresponding to a mixture coefficient for that state γ r Lo or γ r H i , as: γ r c = γ r Lo H ( z r k − µ r c ) + γ r H i H ( µ r c − z r k ) , where H ( · ) is a unit step function. The combination of dif ferent activities are represented as a decision tree for each module k (figure 1). W e represent a context-specific program as dependencies of variable means on parents acti vities in each context, such that µ k c for module k is a linear mixture of means for parents of that module: µ k c = P R k r =1 γ r c µ P a k c where R k is the number of parents P a k and γ r c are similar for all conditions c occurring in the same context. Thus, in general we can write µ c = Γ c µ R c , where µ R c contains the means of parents 1 , ..., R in condition c . The N × R matrix Γ c has identical rows for all v ariables in one module based on the assignment functions A , S . The graphical model is summarized in figure 2. Thus the model for object v ariables would be: X c ∼ N (Γ c µ R c , ( I − W ) − T ( I − W ) − 1 ) . Gi ven independent conditions, the probability of data X = [ X 1 , ..., X C ] for C conditions gi ven parameters can be written as multiplication of multiv ariate normal distributions for each condi- tion: P ( X |A , S , Θ , Σ , Z S ) = Q C c =1 P ( X c |A , S , θ c , Σ , Z S ) , where Θ = { θ 1 , ..., θ C } denotes the set of condition-specific parameters θ c = { µ R c , Γ c } for c = 1 , ..., C and Z S denotes the set of parent split-points for all modules. Then for each condition we hav e: P ( X c |A , S , θ c , Σ , Z S ) = 1 (2 π ) N/ 2 | Σ 1 / 2 | exp ( − 1 2 ( X c − µ c ) T Σ − 1 ( X c − µ c )) . Hence, this model provides interpretations for two types of influences of parents. By relating the distribution mean for variables in each module and in each condition to means of their assigned parents (figure 1.B), we model condition-specific effects of parents. Based on the states of parents in different contexts (partitions of conditions), this leads to a bias or large signal variations in node v ariables. Whereas, small signal changes (linear term) are modeled through the co variance matrix Σ which is independent of condition and is only af fected by the global wiring imposed by dependenc y structures. 5 2.2 Modeling Network Data Network data, as a directed edge between a parent r ∈ { 1 , ..., R } and node n ∈ M k , when r is assigned as a parent of the module r ∈ P a k is defined as a directed link B r → n where P ( B r ∈ P a k → n ∈ M k |A , S , π r k ) ∼ B er noulli ( π r k ) (1) The parameter π r k defines the probability of parent r influencing module M k (figure 2). In the gene network example, an interaction between a Transcription Factor protein binding to a motif sequence, upstream of target genes, which is common in all genes of a module can be observed using ChIP data. Therefore, directed interactions from parents to all nodes in a module would be P ( B M k |A , S , π k ) = Q r ∈ P a k Q n ∈ M k P ( B r → n |A , S , π r k ) , where π k is the vector of π r k for all r ∈ P a k and for all nodes we hav e: P ( B |A , S , π ) = K Y k =1 Y r ∈ P a k Y n ∈ M k P ( B r → n |A , S , π r k ) = K Y k =1 Y r ∈ P a k ( π r k ) s rk (1 − π r k ) | M k |− s rk Y r 0 6∈ P a k ( π 0 ) s r 0 k (1 − π 0 ) | M k |− s r 0 k (2) with π = { π 1 , ..., π K } and s rk = P n ∈ M k ( B r → n ) is the sufficient statistic for the network data model and | M k | is the number of nodes in module k and π 0 is the probability that any non-parent can hav e interaction with a module. In gene regulatory networks, π 0 can be interpreted as basal le vel of physical binding that may not necessarily af fect gene transcription and thus regulate a gene. In the context of stochastic blockmodels, the group of parents assigned to each module can be considered as an individual block and thus our model can represented as overlapping blocks of nodes. The likelihood of the model M = {A , S , Θ , Σ , Z S , π } giv en the integration of node v ariables and network data is: P ( X , B |M ) = P ( X |A , S , Θ , Σ , Z S ) P ( B |A , S , π ) . W ith priors for parame- ters M the posterior likelihood is: P ( M| X , B ) ∝ P ( M ) P ( X , B |M ) . 3. Theory: Model Identifiability Our method uses network data to avoid extra structural assumptions. In this section we formalize this idea through the identifiability of the proposed model. This property is important for inter- pretability of learned modules. Module networks and generally multiv ariate normal models for object variables can be un-identifiable, and imposing extra structural assumptions is necessary to ov ercome this. Here, we illustrate that the integrated learning proposed in this paper resolves the un-identifiability issue. First, we sho w that modeling node v ariables alone is identifiable only under very specific conditions. Then, we will restate some results from Latouche et al. (2011) on the iden- tifiability of ov erlapping block models. Using this result we show the identifiability of the model under some reasonable conditions. Lemma 1 Node V ariables Model: F or the model of node-specific variables X , if we have: P ( X |{A , S } 0 , Θ 0 , Σ 0 ) = P ( X |{A , S } , Θ , Σ) 6 1. Then, we can conclude: µ 0 = µ and Σ 0 = Σ . 2. If we further assume {A , S } = {A , S } 0 and that each module has at least two non par ent nodes and P k | P a k | < N and the covariance matrix Σ is in vertible, we can conclude: Θ = Θ 0 , W = W 0 (Pr oof in Appendix A). The above lemma provides identifiability for the case where the structure {A , S } is assumed to be known. Ho wever , in the case where we don’t have the structure, the parameterizations of multi variate normal ( µ and Σ ) can be written in multiple ways in terms of Θ and {A , S } . This is due to existence of multiple decompositions for the covariance matrix. In the following, we will use a theorem for identifiability of ov erlapping block models from Latouche et al. (2011) which is an extension of the results in Allman et al. (2009). The results provide conditions for ov erlapping stochastic block models to be identifiable. Theorem 1 Netw ork Data Model: If we have P ( B |{A , S } , π ) = P ( B |{A , S } 0 , π 0 ) , then: {A , S } = {A , S } 0 with a permutation and π = π 0 (except in a set of parameters which have a null Lebesgue measur e) (Pr oof in Appendix B). Using the above Theorem and Lemma 1 we can have the following Theorem for the identifia- bility of the model. Theorem 2 Identifiability of Model: If we have: P ( B |{A , S } , π ) = P ( B |{A , S } 0 , π 0 ) and P ( X |{A , S } 0 , Θ 0 , Σ 0 ) = P ( X |{A , S } , Θ , Σ) with assuming that each module has at least two non-par ent nodes and P k | P a k | < N and the covariance matrix Σ is in vertible, then: {A , S } = {A , S } 0 with a permutation, π = π 0 , Θ = Θ 0 and W = W 0 (except in a set of parameters which have a null Lebesgue measur e) (Pr oof in Appendix C). This Theorem, states the theoretical effect of integrated modeling on identifiability of modular struc- tures, giv en that the sum of number of parents is less than the number of nodes (as is common in gene regulatory netw orks). 4. Parameter Estimation using RJMCMC W e use a Gibbs sampler to obtain the posterior distribution P ( M| X , B ) and design Metropolis- Hastings samplers for each of the parameters Θ , Σ , π conditioned on the other parameters and data X , B . W e use Reversible-Jump MCMC (Green, 1995) for sampling from conditional distributions of the assignment and structure parameters A , S . 4.1 Learning P arameters Θ , Σ , Z S , π . T o update the means, we only need to sample one value for means of parents assigned to the same module. This set of means of distinct parents µ R c are sampled with a normal proposal (Algorithm 1). Similarly we sample the parameters γ r c , z r k and π r k , corresponding to parent r ∈ P a k of module k , from normal distributions. The conditions required for identifiability (from Theorem 1) are enforced in each iteration, such that samples violating the conditions are rejected. T o update cov ariance Σ , each distinct element of the regression matrix W corresponding to a module k , denoted as w k , is updated. Due to the symmetric proposal distribution, the proposal is accepted with probability P mh = min { 1 , P ( M ( i +1) | X,B ) P ( M ( i ) | X,B ) } where M ( i ) = {A , S , Θ , Σ , Z S π } ( i ) . 7 Algorithm 1 RJMCMC for sampling parameters Inputs: Node V ariables Data X Network Data B f or iterations i = 1 to I do Sample A ( i +1) gi ven A ( i ) using Alg 2 in appendix Sample S ( i +1) gi ven S ( i ) using Alg 3 in appendix f or modules k = 1 to K ( i ) do Propose w ( i +1) k ∼ N ( w ( i ) k , I ) Accept with probability P mh ; update Σ ( i +1) f or parents r = 1 to R k do Propose z r ( i +1) k ∼ N ( z r ( i ) k , I ) ; accept with P mh Propose π r ( i +1) k ∼ N ( π r ( i ) k , I ) ; accept with P mh end f or end f or f or condition c = 1 to C do Propose µ R ( i +1) c ∼ N ( µ R ( i ) c , I ) ; accept with P mh Propose γ R ( i +1) c ∼ N ( γ R ( i ) c , I ) ; accept with P mh end f or end f or 4.2 Learning assignments A , S . Learning the assignment of each node to a module, in volves learning the number of modules. Changing the number of modules howe ver , changes dimensions of the parameter space and there- fore, densities will not be comparable. Thus, to sample from P ( A|S , Θ , Σ , , Z S π , X , B ) , we use the Rev ersible-Jump MCMC method (Green, 1995), an extension of the Metropolis-Hastings al- gorithm that allows moves between models with different dimensionality . In each proposal, we consider three close mov e schemes of increasing or decreasing the number of modules by one, or not changing the total number . For increasing the number of modules, a random node is mov ed to a ne w module of its o wn and for decreasing the number , two modules are mer ged. In the third case, a node is randomly mov ed from one module to another module, to sample its assignment (Algorithm 2 in Appendix D). T o sample from the dependency structure (assignment of parents) P ( S |A , Θ , Σ , Z S π , X , B ) , we also implement a Reversible-Jump method, as the number of parents for each module needs to be determined. T wo proposal moves are considered for S which include increasing or decreasing the number of parents for each module, by one (Algorithm 3 in Appendix E). 5. Results 5.1 Synthetic Data W e first tested our method on synthetic node-variables and network data generated from the pro- posed model. A dataset was generated for N = 200 nodes in K = 4 modules with C = 50 conditions for each node variable. Parents were assigned from a total of R = 10 number of can- 8 didates. Parameters π , γ and W were chosen randomly , preserving parameter sharing of modules. The inference procedure was run for 20,000 samples. Exponential prior distributions were used for number of parents assigned to each module, to avoid over -fitting. Figure 3 sho ws the autocorrelation 0.05 0.1 0.15 0.2 10,000 20,000 15,000 0 2 4 6 8 0 2000 4000 6000 8000 10,000 −0. 2 0 1 Lag µ Autocorrelation µ 0. 2 Iterations K TPR 0.5 0.25 0.75 1 0 10,000 20,000 15,000 False Positive Rate True Positive Rate 0 0.2 0.4 0.6 0.8 1 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Variables model Integrated model Figure 3: Results for sythetic data: Autocorrelation for an example v ariable mean (top); gibbs samples and posterior after burn-in period (actual mean shown with red line); number of modules (purple) and true positiv e rate of recovered links (green), ROC curve for integrated model and v ariables model (bottom) for samples of v ariable mean µ n c for an example gene. The samples become independent after a lag and thus we remo ved the first 10 , 000 iterations as burn-in period. Samples from posteriors, includ- ing the number of modules K , exhibit standard MCMC movements around the actual value (actual K = 4 ). W e also calculated the true positi ve rate and false positive rates based on actual dependency links. W e repeated the estimation of true positiv e and false positiv e rates for 100 random datasets with the same size as mentioned and computed the average R OC for the model (figure 3). As com- parison, for each generated dataset, we also tested the sub-model for variable data (excluding the model for network data) to infer links (figure 3). W e performed bootstrapping on sub-samples with size 1000 to compute variance of A UC (area under curve) and paired t-tests confirmed improved performance of integrated model compared to the v ariables sub-model ( p < 0 . 05 ). The parameter sharing property in modular structures allows parallel sampling of parameters w k and γ r ( k ) , z r k , π r k for each module k , in each iteration and in different conditions. W e used Matlab-MPI for this implementation. It takes an a verage of 36 ± 8 seconds to generate 100 samples for N = 200 , C = 50 , R = 10 on an i5 3 . 30 GHz Intel(R). For further enhancement, module assignments were initialized by k-means clustering of v ariables. 5.2 M. tuberculosis Gene Regulatory Netw ork W e applied our method to identify modular structures in the Mycobacterium tuberculosis (MTB) regulatory network. MTB is the causative agent of tuberculosis disease in humans and the mech- anisms underlying its ability to persist inside the host are only partially known (Flynn and Chan, 9 2001). W e used interaction data identified with ChIP-Seq of 50 MTB transcription factors and ex- pression data for different induction lev els of the same factors in 87 experiments, from a recent study by Galagan et al. (2013). Only bindings of factors to upstream intergenic regions were considered. W e tested our method on 3072 MTB genes which had binding from at least one of these factors and performed 100,000 number of iterations on the combination of the two datasets. For each gene, we inferred the mode of its assignments to modules (after removing burn-in samples) and obtained 29 modules in total. The largest modules and the assigned re gulators are shown in figure 4. M25 M8 M2 8 Rv2021 Rv3124 M1 M11 M22 6 M9 M13 M14 M20 M5 Rv2034 Rv1990 M6 Rv0767 M24 DosR M2 7 M10 Lsr2 M7 M29 KstR M2 3 M18 M4 Rv2324 M21 Rv3249c M3 M17 Rv0494 M2 Rv0081 Ener gy P r o duc tion A A tr ansp or t/metab olism T r anscription C ell w all C ell motilit y T r anscription P osttr anslational mo dic ation H yp o xic/Nitr ositiv e str ess r esp onse M26 M13 M11 Lipid & C arb oh y dr at e metab olism Figure 4: Regulatory structures between largest modules inferred f or MTB : Regulators as- signed to each module are shown; the size of circles are proportional to number of genes assigned to the module. Enriched functional annotations are highlighted (details in table 1). W e found functional enrichment of modules using Gene Ontology (GO) terms and COG cate- gory annotations from the TBDB database (Reddy et al., 2009) (enrichments indicate higher prob- ability of observing a function in module compared to other modules). Out of 29 modules, 26 were enriched for at least one COG category with Bonferroni corrected p < 0 . 05 . The enrichments for the top major identified modules are shown in table 1. For each module, the number of assigned genes and examples of pre viously studied genes are presented. The identified regulators of each 10 Module ID Number of Genes Example Genes As- signed to Module Regulators Enriched COG Catergories ( p < 0 . 05 ) Enriched GO terms ( p < 0 . 05 ) M21 291 KstR, Rv3249c, sigI, relA, helZ, recG Rv0081 Replication, recombination and repair; T ranscription extracellular region; growth; plasma membrane M24 258 DosR, sigA, sigL, clpP1,2 Rv2034 Intracellular trafficking, secre- tion, and vesicular transport; Secondary metabolites biosyn- thesis, transport and catabolism extracellular region; plasma membrane M7 250 Rv0324, sigE, rpoA, icl, sucC, narK1, nuoAB, nuoDEFG Rv0081, Lsr2 Energy production and con ver- sion; Inorganic ion transport and metabolism N ADH dehydrogenase (ubiquinone) activity; growth; plasma membrane M5 214 inhA, fabH Rv1990c Posttranslational modification, protein turnover , chaperones growth; plasma membrane M25 161 ideR, sigB, nusG, argR, lipP , Rv2021c, Rv3124 Rv0081, DosR T ranscription; Defense mecha- nisms plasma membrane; succi- nate dehydrogenase activity M10 154 lysA, dapF , fprA, lipO, fadD7, fadD30, fadA6 Rv3249 Amino acid transport and metabolism; plasma membrane M26 148 sugA,B,C; mutA,B KstR Carbohydrate transport and metabolism; Lipid transport and metabolism growth; propionate metabolic process, methyl- malonyl pathway M1 144 fabG4, fadD8 DosR Secondary metabolites biosyn- thesis, transport and catabolism cellular response to ni- trosativ e stress; gro wth; plasma membrane M22 60 fas, fadA4, pcaA, metB Rv3249c, Rv2034 Cell wall/membrane/en velope biogenesis plasma membrane M27 59 kasA-B, fabD, accD6 Lsr2 Cell motility plasma membrane M11 48 fadA3, fadD4, lipC, lipW , nuoH-N, narI,J,H Rv0081, KstR Ener gy production and cov- ersion; Lipid transport and metabolism N ADH dehydrogenase (ubiquinone) activity; nitrate reductase activity M3 36 Rv0081, Rv0232, Rv1990c, fadE4, fadE5 DosR Energy production and con ver- sion - T able 1: Enrichment of functional annotations for largest modules controlled by major MTB regu- lators module and enriched annotations confirm kno wn functions for some regulators, such as the role of KstR (Rv3574) in regulating lipid metabolism (Kendall et al., 2007), confirmed in modules M26 and M11; and DosR (Rv3133c) in nitrosativ e stress response (V oskuil et al., 2003) (module M1) and transcription (Rustad et al., 2008) (module M25). Nov el functions for other regulators and the combinations of regulators acting together are also presented. As sho wn in figure 4, many modules are controlled by more than one regulator , highlighting the significance of combinatorial regulations in controlling gene expressions in this network. The inferred structure identifies multiple feed-forwards loops (FFLs), many of which inv olve a hub regulator Rv0081 and another regulator . FFLs are known to lead to dynamic transient responses or time delays in gene expression (Mangan and Alon, 2003) and the role of Rv0081 in driving multiple FFLs in MTB can be further studied. Also, two auto-regulating feedbacks were inferred from Rv0081 to its module M3, and from Rv2034 to M24, which may contribute to stabilizing and 11 noise-reduction (Kærn et al., 2005) in transcription of the hub regulators. One inferred module is M11 shown in figure 5 which is regulated by Rv0081 and KstR (Rv3574). KstR is known to be in volv ed in cholesterol and lipid catabolism (Kendall et al., 2007) and the module is enriched for ”Energy production and conv ersion” and ”Lipid transport and metabolism” COG categories (table 1). The inferred program depicted in figure 5 shows that either of the two regulators can repress the e xpression of the 48 genes assigned to this module, which include lipases and genes in volv ed in fatty acid β -oxidation and triacylglycerides cycle metabolic pathways. KstR itself is also regulated by Rv0081, forming another FFL and the roles of both factors in repressing these pathways can be further inv estigated. Thus, a hypoxic (oxygen depriv ation) regulator Rv0081, regulates lipid metabolism genes through KstR. The two factors of hypoxic adaptation and lipid catabolism are two main factors in volved in MTB persistence (Flynn and Chan, 2001; Galag an et al., 2013). Figure 5 shows module M25 containing 161 genes, with an interesting regulatory program in- volving two MTB hypoxic adaptation regulators: Rv3133c (DosR) and Rv0081. DosR is well kno wn to activ ate the initial response of MTB in hypoxic conditions (Park et al., 2003). As table 1 sho ws, M25 is enriched for ”Transcription” in COG categories. The genes assigned to this module include other regulators such as Rv2021c, Rv3124 known to be induced in later time points (after 24 hours) in hypoxia. The mechanism driving this enduring hypoxic response is not well known (Rustad et al., 2008). The inferred regulatory program for this module predicts induction of most genes in the module in conditions where both DosR and Rv0081 are expressed (context (c) in figure 5). This combinatorial regulation could be acting as either a logical AND gate, where both factors are required, or Rv0081 might be the only necessary activ ator of the module. Howe ver , Rv0081 itself is also regulated by DosR, which creates a feed-forward loop structure dri ving this module (see figure 4). Hence, this program illustrates the significance of Rv0081 and DosR in the form of a FFL in mediating the induction of a second hierarchy of regulators with a time delay , leading to a later hypoxic response. W e showed in section 6 that integration of network data has theoretical adv antages in terms of model identifiability . Here, we show that it can also reduce the number of false positiv e regulatory links in MTB data. As a gold standard, we used pre viously validated links (by EMSA, R Tq-PCR) for two MTB regulators, including 48 known links for DosR from V oskuil et al. (2003) and 72 known links for KstR from Kendall et al. (2007). W e calculated the area under precision-recall for our method by comparing posterior probabilities for DosR and KstR links to known links (table 2). As comparison, we also applied common methods shown to hav e best performance in DREAM chal- lenge contests (Marbach et al., 2012) in inferring regulatory networks from gene expression only . These include Mutual Information between expression profiles (MI), CLR (Faith et al., 2007)and GENIE3 (Irrthum et al., 2010). W e applied these on the abov e MTB expression data, and compared the inferred links to the gold standard set. As the number of validated links in MTB are small, we also scored the predictions from co-expression methods to the MTB ChIP-Seq data (Galagan et al., 2013) for the same two re gulators. Also, none of these methods assume modular structures. W e then applied Module Networks (Se gal et al., 2005) to the same expression dataset and com- pared predictions to kno wn links and ChIP-Seq data (table 3). W e set the maxmimum number of modules to 10 and constrained the candidate pool of regulators to the 50 ChIPped regulators only . On average 2 . 8 ± 0 . 63 number of regulators were assigned to each module, with a mode of 3, whereas the ChIP-Seq netw ork shows a mode of 1 for in-de gree of genes (Galagan et al., 2013), i.e. most genes ha ve only one re gulator binding. As the predicted links from module netw orks are deter- ministic, an A UPR score can not be reported, thus we compared to precision and recall of posterior 12 KstR Gene Expressions TF-Gene Interactions Rv0081 KstR Rv0081 context (a) (b) (c) (c) (b) context (a) Gene Expressions DosR DosR Rv0081 TF-Gene Interactions DosR Rv0081 Figure 5: Examples of inferred regulatory programs: (Left) module M11 of fig. 4 showing that either of Rv0081 and KstR can repress the module in contexts (a) and (c); (Right) module M25 of fig. 4 sho wing the induction of these genes by DosR is mediated through Rv0081 in context (c) T able 2: Area under precision-recall A UPR( % ) calculated for link prediction using proposed method and other common co-expression methods, applied to MTB data. The predictions are scored vs kno wn and ChIP-Seq links for two regulators Gold Standard V alidated Links ChIP-Seq Links Regulator DosR KstR DosR KstR No. of T argets (48) (72) (528) (503) MI 39.04 9.24 25.00 17.85 CLR 48.25 9.37 21.44 16.77 GENIE3 62.26 31.37 21.55 19.44 Proposed Model 72.13 65.72 79.62 70.06 mode from our models. Note small precision values are due to small number of validated links, i.e. if a link is not v alidated experimentally it may not be wrong. For a f air comparison of models with- out the effect of interaction data, we also compared to performance of our model for variables data only (table 3). These results show that module networks and in general co-expression methods ha ve many false positiv es and integrating interaction data is necessary for inference of direct regulatory relationships. 13 T able 3: Percentage of Precision (P) and Recall (R) for link prediction using module networks and proposed models. Gold Standard V alidated Links ChIP-Seq Links Regulator DosR KstR DosR KstR P R P R P R P R Module Networks 3.8 81.2 6.5 86.1 40.1 76.3 35.8 67.4 Proposed Model for V ariables (mode) 4.6 77.1 7.2 77.8 55.0 83.7 52.5 80.5 Proposed Integrated Model (mode) 6.5 89.6 10.6 84.7 75.4 93.4 83.6 95.6 5.3 T witter Network As a second application, we used our method to find influence structures in a social network. In social networks such as twitter , the activity of users, e.g. number of tweets posted by a user in a time windo w , can be influenced by other users. T o find these influence patterns, one approach would be to search for all other users that ha ve correlated activity , e.g. same number of posts in a day . Ho wever , gi ven that users are more likely to be influenced by users whom they are follo wing, integrating the social graph of who-follows-who would improve accuracy and speed in finding influential users that af fect a large community (module) of users. W e applied our method on integration of two types of data from twitter . Number of user posts (tweets) are considered as node variables, time windows of one day are considered as conditions, and the network of followings is considered as network information. The dataset of tweets during a period of 4 months from (June to Sept 2009) (Y ang and Leskovec, 2011) was combined with the social graph of who-follows-who (Kwak et al., 2010) and 450 number of users were randomly selected that had data in both datasets. Figure 6 shows the inferred modular structures of influence between users with circle sizes proportional to number of users assigned to each module. The results present interesting structures of influence for each community . For example, module (community) M13 is influenced by best-selling authors such as Brian Solis and C.C. Chapman which are assigned to M5, while users in module M10 are mostly influenced by well-known web designers and developers in M3 including Ethan Marcotte ( @ beep), Garett Dimon and Michael Lopp ( @ ranks). Module M16 is mostly influenced by famous technologists including T antek Celik ( @ t) and Andy Baio ( @ waxpancake). Module M6 contains computer scientists such as Bradle y Horowitz ( @ elatable). The most influential users (largest fan-out degrees) in this subnetwork were famous blogger Robert Scoble ( @ Scobleizer) and @ Starbucks. These results also identify communities with diverse interests such as M8, i.e. follow users belonging to div erse communities. The top hashtags posted by users of each community is consistent with interests and professions of their influencers and highlights major e vents of that period in 2009, such as launch of Google W ave, Iran election and Michael Jackson’ s death (figure 6). Thus this method can identify communities with common influencers in social networks. 14 M18 11333 24303 @Starbucks M12 @Tojosan M11 @Scobleizer M13 @philcampbell @cc_chapman @briansolis M9 @demis M8 @chrisheuer 25993 @rands @dkr M10 @al3x M16 10923 @t @photomatt M15 @waxpancake @elatable 13046 11848 @anildash M14 11422 @beep @garrettdimon 12716 11552 @thomas @gruber M1: technologists M3: web design M4 M2: digital media M5: authors #musicmonday #iranelection #cnnfail #mj M6: computer science #google #wave Figure 6: Influence structures inferred for a twitter subnetwork : T op posted hashtags and user interests are highlighted 6. Conclusion W e proposed a model for learning dependency structures between modules, from network data and node variables. W e sho wed that the assumption of shared parents and parameters for nodes in a module, together with inte gration of network data deals with under-determination and un- identifiability , impro ves statistical robustness and av oids over -fitting. W e presented a reversible- jump inference procedure for learning model posterior . Our results showed high performance on synthetic data and interpretable structures on real data from M. tuberculosis gene network and twitter social network. Results for MTB gene re gulatory network re vealed feed-forw ard loops and insights into condition-specific regulatory programs for lipid metabolism and hypoxic adaptation. Inferred modules in a twitter subnetwork identified influencing users for dif ferent communities. 15 Acknowledgments W e acknowledge funding from the Hariri Institute for Computing and Computational Science & En- gineering, the National Institute of Health under grants HHSN272200800059C and R01 GM096193, the National Science Foundation under grant IIS-1149662, the Army Research Of fice under grant MURI W911NF-11-1-0036, and from an Alfred P . Sloan Research Fellowship. References E.M. Airoldi. Getting started in probabilistic graphical models. PLoS Computational Biology , 3 (12):e252, 2007. E.M. Airoldi, D.M. Blei, S.E. Fienberg, and E.P . Xing. Mixed membership stochastic blockmodels. The J ournal of Machine Learning Resear ch , 9:1981–2014, 2008. E.M. Airoldi, T .B. Costa, and S.H. Chan. Stochastic blockmodel approximation of a graphon: Theory and consistent estimation. In Advances in Neural Information Pr ocessing Systems (NIPS) , volume 26, pages 692–700, 2013a. E.M. Airoldi, X. W ang, and X. Lin. Multi-way blockmodels for analyzing coordinated high- dimensional responses. Annals of Applied Statistics , 7(4):2431–2457, 2013b. E.S. Allman, C. Matias, and J.A. Rhodes. Identifiability of parameters in latent structure models with many observ ed variables. The Annals of Statistics , 37(6A):3099–3132, 2009. S. Aral, L. Muchnik, and A. Sundararajan. Distinguishing influence-based contagion from homophily-dri ven dif fusion in dynamic networks. Pr oceedings of the National Academy of Sci- ences , 106(51):21544–21549, 2009. H. Azari Soufiani and E.M. Airoldi. Graphlet decomposition of a weighted network. J ournal of Machine Learning Resear ch , (W&CP 22 (AIST A TS)):54–63, 2012. S.E. Celniker , L. Dillon, M.B. Gerstein, K.C. Gunsalus, S. Henikoff, G.H. Karpen, M. Kellis, E.C. Lai, J.D. Lieb, D.M. MacAlpine, et al. Unlocking the secrets of the genome. Natur e , 459(7249): 927–930, 2009. D.S. Choi, P .J. W olfe, and E.M. Airoldi. Stochastic blockmodels with a gro wing number of classes. Biometrika , 99(2):273–284, Jun. 2012. J.J. Faith, B. Hayete, J.T . Thaden, I. Mogno, J. W ierzbowski, G. Cottarel, S. Kasif, J.J. Collins, and T .S. Gardner . Large-scale mapping and validation of escherichia coli transcriptional regulation from a compendium of expression profiles. PLoS biology , 5(1):e8, 2007. J.L. Flynn and J. Chan. T uberculosis: latency and reactiv ation. Infection and immunity , 69(7): 4195–4201, 2001. J.E. Galagan, K. Minch, M. Peterson, Anna L yubetskaya, Elham Azizi, Linsday Sweet, Antonio Gomes, T ige Rustad, Gregory Dolganov , Irina Glotov a, et al. The mycobacterium tuberculosis regulatory netw ork and hypoxia. Natur e , 499(7457):178–183, 2013. 16 A. Goldenber g, A. X. Zheng, S. E. Fienberg, and E. M. Airoldi. A surve y of statistical network models. F oundations and T r ends in Machine Learning , 2(2):129–233, Feb . 2009. P .J. Green. Reversible jump markov chain monte carlo computation and bayesian model determi- nation. Biometrika , 82(4):711–732, 1995. S. Imoto, T . Higuchi, T . Goto, K. T ashiro, S Kuhara, , and S Miyano. Combining microarrays and biological kno wledge for estimating gene networks via bayesian networks. Pr oc. Computational Systems Bioinformatics , 2003. A. Irrthum, L. W ehenkel, P . Geurts, et al. Inferring regulatory networks from expression data using tree-based methods. PLoS One , 5(9):e12776, 2010. A. Joshi, R. De Smet, K. Marchal, Y . V an de Peer , and T . Michoel. Module networks revisited: computational assessment and prioritization of model predictions. Bioinformatics , 25(4):490– 496, 2009. M. Kærn, T .C. Elston, W .J. Blake, and J.J. Collins. Stochasticity in gene expression: from theories to phenotypes. Natur e Revie ws Genetics , 6(6):451–464, 2005. S.L. K endall, M. W ithers, C.N. Soff air, N.J. Moreland, S. Gurcha, B. Sidders, R. Frita, A. T en Bokum, G.S. Besra, J.S. Lott, et al. A highly conserved transcriptional repressor controls a large regulon in volved in lipid degradation in mycobacterium smegmatis and mycobacterium tuberculosis. Molecular micr obiology , 65(3):684–699, 2007. D. K oller and N. Friedman. Pr obabilistic Graphical Models: Principles and T ec hniques . MIT Press, 2009. R.V . Kozinets. E-tribalized marketing?: The strategic implications of virtual communities of con- sumption. Eur opean Management J ournal , 17(3):252–264, 1999. H. Kwak, C. Lee, H. Park, and S. Moon. What is Twitter, a social network or a news media? In WWW ’10: Pr oceedings of the 19th international conference on W orld wide web , pages 591–600, Ne w Y ork, NY , USA, 2010. A CM. ISBN 978-1-60558-799-8. P . Latouche, E. Birmel ´ e, and C. Ambroise. Overlapping stochastic block models with application to the french political blogosphere. The Annals of Applied Statistics , 5(1):309–336, 2011. Y . Liu, N. Qiao, S. Zhu, M. Su, N. Sun, J. Boyd-Kirkup, and J.-D. Han. A nov el bayesian net- work inference algorithm for integrati ve analysis of heterogeneous deep sequencing data. Cell Resear ch , 23(3):440–443, 2013. S. Mangan and U. Alon. Structure and function of the feed-forward loop network motif. Pr oceed- ings of the National Academy of Sciences , 100(21):11980–11985, 2003. D. Marbach, J.C. Costello, R. K ¨ uf fner, N.M. V eg a, R.J. Prill, D.M. Camacho, K.R. Allison, M. Kel- lis, J.J. Collins, G. Stolovitzky , et al. W isdom of crowds for robust gene network inference. Natur e methods , 2012. 17 T . Michoel, S. Maere, E. Bonnet, Y . Joshi, A.and Saeys, T . V an den Bulcke, K. V an Leemput, P . V an Remortel, M. Kuiper , K. Marchal, et al. V alidating module network learning algorithms using simulated data. BMC Bioinformatics , 8(Suppl 2):S5, 2007. K. Mitra, A. Carvunis, S.K. Ramesh, and T . Ideker . Inte grativ e approaches for finding modular structure in biological networks. Natur e Reviews Genetics , 14(10):719–732, 2013. H. Park, K.M. Guinn, M.I. Harrell, R. Liao, M.I. V oskuil, M. T ompa, G.K. Schoolnik, and D.R. Sherman. Rv3133c/dosr is a transcription factor that mediates the hypoxic response of mycobac- terium tuberculosis. Molecular micr obiology , 48(3):833–843, 2003. Y . Qi and H. Ge. Modularity and dynamics of cellular networks. PLoS Computational Biology , 2 (12):e174, 2006. T .B.K. Reddy , R. Riley , F . W ymore, P . Montgomery , D. DeCaprio, R. Engels, M. Gellesch, J. Hub- ble, D. Jen, H. Jin, et al. Tb database: an integrated platform for tuberculosis research. Nucleic acids r esear ch , 37(suppl 1):D499–D508, 2009. T .R. Rustad, M.I. Harrell, R. Liao, and D.R. Sherman. The enduring hypoxic response of mycobac- terium tuberculosis. PLoS One , 3(1):e1502, 2008. E. Segal, M. Shapira, A. Rege v , D. Pe’er , D. Botstein, D. K oller , and N. Friedman. Module net- works: identifying regulatory modules and their condition-specific regulators from gene expres- sion data. Natur e genetics , 34(2):166–176, 2003. E. Segal, D. Pe’er , A. Rege v , D. K oller , and N. Friedman. Learning module networks. Journal of Machine Learning Resear ch , (6):557–588, 2005. T .A.B. Snijders and K. No wicki. Estimation and prediction for stochastic blockmodels for graphs with latent block structure. J ournal of Classification , 14(1):75–100, 1997. M.I. V oskuil, D. Schnappinger , K.C. V isconti, M.I. Harrell, G.M. Dolganov , D.R. Sherman, and G.K. Schoolnik. Inhibition of respiration by nitric oxide induces a mycobacterium tuberculosis dormancy program. The Journal of e xperimental medicine , 198(5):705–713, 2003. Y .J. W ang and G.Y . W ong. Stochastic blockmodels for directed graphs. J ournal of the American Statistical Association , 82(397):8–19, 1987. A.V . W erhli and D. Husmeier . Reconstructing gene re gulatory networks with bayesian networks by combining expression data with multiple sources of prior kno wledge. Statistical Applications in Genetics and Molecular Biology , 6(1):15, 2007. F . Y an, Z. Xu, and Y . Qi. Sparse matrix-variate gaussian process blockmodels for network modeling. arXiv pr eprint arXiv:1202.3769 , 2012. J. Y ang and J. Leskovec. Patterns of temporal variation in online media. In Pr oceedings of the fourth A CM international confer ence on W eb sear ch and data mining , pages 177–186. A CM, 2011. C.-H. Y eang and T . Jaakkola. Modeling the combinatorial functions of multiple transcription factors. J ournal of Computational Biology , 13(2):463–480, 2006. 18 C.-H. Y eang, T . Ideker , and T . Jaakkola. Physical network models. Journal of computational biology , 11(2-3):243–262, 2004. A ppendix A. Proof of Lemma 1 Lemma 1 Node V ariables Model: F or the model of node variables X , if we have: P ( X |{A , S } 0 , Θ 0 , Σ 0 ) = P ( X |{A , S } , Θ , Σ) (3) 1. Then, we can conclude: µ 0 = µ and Σ 0 = Σ . 2. If we further assume {A , S } = {A , S } 0 and that each module has at least two non par ent nodes and P k | P a k | < N and the covariance matrix Σ is in vertible, we can conclude: Θ = Θ 0 , W = W 0 . Proof sk etch: 1. Considering that distributions of X are multiv ariate Normal under both parameter sets, it is straight forward that the mean and cov ariance parameters of two Normals should be the same. This can be formally sho wn by finding maximum of the distribution and curvature at any point for both sides, hence, µ 0 = µ and Σ 0 = Σ . 2. From the identifiability of µ and Σ , it is suf ficient to sho w that µ and Σ uniquely define Θ , W gi ven {A , S } . Starting from Γ c , we can consider the follo wing set of linear equations: µ c = Γ c µ R c This is a set of equations with N equations and P k | P a k | unkno wns. Hence, when P k | P a k | < N this set of linear equations will lead to a unique solution if a solution exists. For the Σ , gi ven that it is in vertible, we hav e: Σ − 1 = ( I − W ) T ( I − W ) (4) Considering that parents hav e the same value for W nr for ∀ n ∈ M k . Then, we can simply find W nr by solving | P a k | ∗ W nr 2 = Σ − 1 ij where i, j are two genes that are non parents and belong to the module M k . A ppendix B. Proof of Theor em 1 Theorem 1 Netw ork data Model: If we have: P ( B |{A , S } , π ) = P ( B |{A , S } 0 , π 0 ) (5) Then: {A , S } = {A , S } 0 with a permutation and π = π 0 (except in a set of parameter s which have a null Lebesgue measur e). Proof sketch: Our network data model is an ov erlapping stochastic block model, where the blocks are parents and modules, with a specific parametrization among the modules and parents. Hence, we hav e the identifiability using the Theorem 4.1 in Latouche et al. (2011). 19 A ppendix C. Proof of Theor em 2 Theorem 2 Identifiability of model: If we have: P ( B |{A , S } , π ) = P ( B |{A , S } 0 , π 0 ) (6) P ( X |{A , S } 0 , Θ 0 , Σ 0 ) = P ( X |{A , S } , Θ , Σ) (7) with assuming that each module has at least two non-par ent nodes and P k | P a k | < N and the covariance matrix Σ is invertible , then: {A , S } = {A , S } 0 with a permutation, π = π 0 , Θ = Θ 0 and W = W 0 . Proof sketch: This theorem is an immediate result from combination of Theorem 1 and Lemma 1. Using (6), according to Theorem 1 we have: {A , S } = {A , S } 0 with a permutation and π = π 0 . No w , knowing {A , S } = {A , S } 0 and equation (7) we can apply Lemma 1 leading to Θ = Θ 0 and W = W 0 . This concludes the proof. A ppendix D. Learning Module Assignment A . Learning the assignment of each gene to a module, in volv es learning the number of modules. Changing the number of modules howe ver , changes dimensions of the parameter space and there- fore, densities will not be comparable. Thus, to sample from P ( A|S , Θ , Σ , , Z S π , X , B ) , we use the Rev ersible-Jump MCMC method (Green, 1995), an extension of the Metropolis-Hastings algo- rithm that allo ws moves between models with dif ferent dimensionality . In each proposal, we consider three close mov e schemes of increasing or decreasing the number of modules by one, or not changing the total number . For increasing the number of modules, a random gene is mov ed to a new module of its o wn and for decreasing the number , two modules are merged. In the third case, an gene is randomly moved from one module to another module, to sample its assignment. W e design transformation of parameters using Green’ s method to extend model dimensions (Algorithm 2) The acceptance ratio for the split mov e is P split = min { 1 , P ( M ( i +1) | X,B ) P ( M ( i ) | X,B ) × 1 K +1 1 K × p +1 p − 1 × 1 p ( u ) p ( u 0 ) × J ( i ) → ( i +1) } where J ( i ) → ( i +1) is the Jacobian of the transformation from the pre vious state to the proposed state, and the acceptance ratio for the merge move is P merg e = min { 1 , P ( M ( i +1) | X,B ) P ( M ( i ) | X,B ) × 1 K − 1 1 K × p − 1 p +1 × J ( i ) → ( i +1) } . 20 Algorithm 2 RJMCMC to update A 1: Find K : number of distinct modules in A ( i ) 2: Propose mov e ν from {− 1 , 0 , +1 } with probabilities p − 1 , p 0 , p +1 , respecti vely . 3: switch ( ν ) 4: case +1 : 5: Select random gene n ∈ M k uniformly 6: Assign n to new module M K +1 7: Assign parents P a K +1 = P a k 8: Dra w vectors u , u 0 ∼ N (0 , 1) 9: Propose parameters: 10: π P a K +1 k 1 = π P a k k − u , π P a k k 2 = π P a k k + u 11: γ P a K +1 k 1 = γ P a k k − u 0 , γ P a k k 2 = γ P a k k + u 0 12: Compute { Θ , Σ , π } 13: Accept A ( i +1) with P split 14: case − 1 : 15: Select two random modules M k 1 and M k 2 16: Mer ge into one module M k 1 17: Assign parents P a k 1 = P a k 1 ∪ P a k 2 18: f or ∀ r ∈ P a k 1 ∩ P a k 2 do 19: Propose π r k 1 = ( π r k 1 + π r k 2 ) / 2 20: and γ r k 1 = ( γ r k 1 + γ r k 2 ) / 2 21: end f or 22: Compute { Θ , Σ , π } 23: Accept A ( i +1) with P merg e 24: case 0 : 25: Select two random modules M k 1 , M k 2 26: Mo ve a random gene n from M k 1 to M k 2 27: Compute { Θ , Σ , π } 28: Accept A ( i +1) ( n ) = k 2 with P mh 29: end switch A ppendix E. Learning Dependency Structure S . T o sample from the dependency structure P ( S |A , Θ , Σ , Z S π , X , B ) (assignment of parents), we also implement a Rev ersible-Jump method, as the number of parents for each module needs to be determined. T wo proposal moves are considered for S which include increasing or decreasing the number of parents for each module, by one (Algorithm 3). In the case of addition of a parent to a module, we propose mixture coefficients γ and interaction parameters π for the added regulator , based on its learned v alues in another module, where it has already been assigned as a parent, with an additional noise term. The acceptance ratio for the add proposal is P add = min { 1 , P ( M ( i +1) | X,B ) P ( M ( i ) | X,B ) × 1 R k +1 1 R − R k × p S 1 − p S × 1 p ( u ) p ( u 0 ) × J ( i ) → ( i +1) } where R k is the number of parents for module k in the 21 i − th state, and the acceptance ratio for the remov e proposal is P rem = min { 1 , P ( M ( i +1) | X,B ) P ( M ( i ) | X,B ) × 1 R − R k +1 1 R k × 1 − p S p S × J ( i ) → ( i +1) } . Algorithm 3 RJMCMC to update S 1: Set p S 2: f or module k = 1 to K do 3: Propose ν from { +1 , − 1 } with p S 4: switch ( ν ) 5: case +1 : 6: Add a random parent r ∈ 1 , ..., R to P a k 7: Draw u, u 0 ∼ U nif (0 , 1) 8: if r is also a parent of another module P a k 0 then 9: Propose π r k = π r k 0 + u , γ r k c = γ r k 0 c + u 0 ( c ) for all c ∈ { 1 , ..., C } 10: else 11: Propose π r k = u , γ r k c = u 0 ( c ) for all c 12: end if 13: Compute { Θ , Σ , π } 14: Accept S ( i +1) with P add 15: case − 1 : 16: Remove a random parent r from P a k 17: Compute { Θ , Σ , π } 18: Accept S ( i +1) with P rem 19: end switch 20: end f or 22

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment